Validating Ecological Risk Tools: How Stock Status Reports Shape Next-Generation Assessment Methods

Abstract

This article provides a comprehensive framework for researchers and drug development professionals on validating ecological risk assessment (ERA) methodologies using stock status reports and other quantitative benchmarks. It first explores the foundational principles of ERA and the mismatch between measurement and assessment endpoints, then introduces key screening tools such as Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE)[citation:1][citation:2]. The methodological section details comparative approaches for validating these data-poor tools against data-rich benchmarks such as Fishery Status Reports (FSR)[citation:1]. The troubleshooting section then addresses common issues such as over-precautionary outcomes and data scarcity, offering optimization strategies that include the integration of fishers' knowledge[citation:4]. Finally, the article synthesizes empirical validation results, such as the 27% (PSA) and 8% (SAFE) misclassification rates against FSR, to guide method selection and future development toward integrated, next-generation risk assessment paradigms[citation:1][citation:5].

Understanding Ecological Risk Assessment: Core Principles, Tools, and the Critical Need for Validation

An Ecological Risk Assessment (ERA) is a formal, scientific process for evaluating the likelihood that one or more environmental stressors may cause adverse ecological effects [1]. It is a critical tool for informing environmental management and policy, serving two primary purposes: prospective (predicting the likelihood of future effects from proposed actions) and retrospective (evaluating the cause of observed effects from past or ongoing exposures) [1] [2].

The overarching objective is to provide risk managers with scientifically defensible information to support decisions—such as chemical regulation, habitat restoration, or remediation—that protect the health of ecosystems and the services they provide [1] [3]. This process is inherently iterative and tiered, designed to efficiently allocate resources by starting with conservative, screening-level assessments and progressing to more complex, realistic models only for risks deemed unacceptable at lower tiers [4] [2].

Within the context of a broader thesis on validation, ERAs share fundamental challenges with stock assessment models used in fisheries science: both rely on models to estimate unobservable quantities (e.g., ecological risk, spawning stock biomass) and must integrate multiple lines of evidence under uncertainty. Therefore, validation frameworks developed for stock assessments, particularly those focusing on prediction skill and model plausibility, offer valuable paradigms for strengthening the credibility of ERA outcomes [5].

Comparative Analysis of Tiered Assessment Approaches

The tiered approach is central to efficient ERA. It begins with simple, conservative screening and escalates in complexity, data requirements, and ecological realism. The table below compares the key characteristics, methodologies, and outputs across assessment tiers.

Table: Comparison of Tiered Ecological Risk Assessment Approaches

| Assessment Tier | Primary Objective | Key Methodologies & Models | Exposure & Effects Estimation | Risk Characterization Output | Typical Use Case/Context |
|---|---|---|---|---|---|
| Tier 1: Screening | To identify stressors and scenarios posing negligible risk or requiring further evaluation. Uses worst-case assumptions to ensure conservatism [2]. | Deterministic Risk Quotients (RQs) [2]. Standard models: T-REX, TerrPlant, BeeREX (terrestrial) [6]; Tier I Rice Model (aquatic) [6]. | Exposure: Single, high-end point estimate (e.g., maximum application rate). Effects: Laboratory toxicity endpoints (e.g., LC50, NOAEC) for standard test species [4] [2]. | Risk Quotient (RQ) compared to a Level of Concern (LOC). Binary outcome: "Risk" or "No Risk" [2]. | Initial regulatory review of pesticides; prioritization of sites for further investigation. |
| Tier 2: Refined | To refine the risk estimate for concerns identified in Tier 1 by incorporating more realistic, but still simplified, exposure scenarios. | More sophisticated fate & transport models. Examples: PWC (Pesticide in Water Calculator) [6], KABAM (bioaccumulation) [6], AgDRIFT (spray drift) [6]. | Exposure: Refined estimates using real-world scenarios (e.g., specific crops, weather). Effects: May use species sensitivity distributions (SSDs) or multiple toxicity endpoints [6]. | Probabilistic outputs (e.g., distributions of exposure concentrations). A refined RQ or exceedance probability. | Refined assessment for specific use patterns; site-specific preliminary assessments. |
| Tier 3: Advanced (Probabilistic & Modeling) | To provide a realistic, population- or ecosystem-relevant risk estimate to inform complex management decisions [2]. | Mechanistic Effects Models: Population models (e.g., following Pop-GUIDE), MCnest (avian reproduction) [6]. Integrated Models: Coupling exposure models (e.g., PWC) with effects models. | Exposure: Probabilistic, temporally and spatially explicit simulations. Effects: Models translating individual-level effects to impacts on population growth, structure, or ecosystem services [2]. | Probabilistic estimates of population-level endpoints (e.g., risk of decline >20%). Quantitative characterization of uncertainty. | Endangered species assessments; complex remediation decisions; evaluating chronic, population-level risks [2]. |

Methodological Workflow: From Problem Formulation to Risk Characterization

The ERA process follows a structured, three-phase workflow initiated by a planning stage. This sequence ensures the assessment is focused, scientifically defensible, and aligned with management needs [1] [3].

Planning & Problem Formulation: This foundational phase translates a broad management problem into a concrete assessment plan. Key activities include integrating available information, selecting assessment endpoints (the ecological entity and its attribute to protect, such as "reproduction in largemouth bass populations"), and developing a conceptual model [4] [3]. The conceptual model diagrammatically links stressors to receptors via exposure pathways, forming risk hypotheses. The phase concludes with a detailed analysis plan [4].
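A conceptual model can be treated as a directed graph in which every stressor-to-endpoint path is a candidate risk hypothesis. The Python sketch below is illustrative only: the nodes and links in `CONCEPTUAL_MODEL` are hypothetical, and `risk_hypotheses` is not part of any ERA software package.

```python
"""Enumerate risk hypotheses from a hypothetical ERA conceptual model."""

# Hypothetical conceptual model: each node lists the nodes it links to
# (source -> exposure pathway -> receptor -> assessment endpoint).
CONCEPTUAL_MODEL = {
    "pesticide application": ["spray drift", "runoff"],
    "spray drift": ["surface water"],
    "runoff": ["surface water"],
    "surface water": ["aquatic invertebrates", "largemouth bass reproduction"],
    "aquatic invertebrates": ["largemouth bass reproduction"],
}


def risk_hypotheses(model, stressor, endpoints):
    """Return every acyclic path from the stressor to an assessment endpoint."""
    paths = []
    stack = [[stressor]]
    while stack:
        path = stack.pop()
        node = path[-1]
        if node in endpoints:
            paths.append(path)
            continue
        for nxt in model.get(node, []):
            if nxt not in path:  # skip nodes already on this path (cycle guard)
                stack.append(path + [nxt])
    return sorted(paths)


if __name__ == "__main__":
    for hyp in risk_hypotheses(CONCEPTUAL_MODEL, "pesticide application",
                               {"largemouth bass reproduction"}):
        print(" -> ".join(hyp))
```

Each printed chain corresponds to one exposure pathway that the analysis plan would need to address or explicitly exclude.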

Analysis: This phase separately evaluates exposure and ecological effects. The exposure assessment describes the stressor's path from source to receptor, its distribution in the environment, and the extent of contact [3]. For chemicals, this considers bioavailability, bioaccumulation, and timing relative to sensitive life stages [3]. The effects assessment (or stressor-response assessment) evaluates the relationship between the magnitude of exposure and the likelihood or severity of adverse effects, drawing from laboratory and field data [1] [3].

Risk Characterization: This phase integrates the analysis to estimate risk. It involves risk estimation (comparing exposure and effects) and risk description, which interprets the results, discusses ecological adversity, and, critically, summarizes all uncertainties and assumptions [1] [3]. The output must be clear enough to support a risk management decision.

The Tiered Assessment Framework in Practice

The tiered framework is a pragmatic implementation of the ERA workflow, where each cycle through the three phases increases in refinement.

Screening (Tier 1): This level uses highly conservative assumptions (e.g., maximum exposure, most sensitive toxicity value) to calculate a deterministic Risk Quotient (RQ). Its strength is speed and efficiency for clear low-risk scenarios. A major limitation is that it does not quantify risk probability or magnitude and can overestimate risk, triggering unnecessary further testing [2].
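The Tier 1 calculation reduces to a ratio and a threshold check. A minimal Python sketch, assuming exposure and toxicity values in the same units; the concentrations, toxicity values, and the LOC of 0.5 below are hypothetical illustrations, not regulatory values for any specific chemical.

```python
"""Tier 1 screening sketch: deterministic risk quotient (RQ) versus a level of concern (LOC)."""


def risk_quotient(exposure_conc: float, toxicity_endpoint: float) -> float:
    """RQ = estimated exposure concentration / toxicity endpoint (same units)."""
    if toxicity_endpoint <= 0:
        raise ValueError("toxicity endpoint must be positive")
    return exposure_conc / toxicity_endpoint


def tier1_screen(max_exposure: float, endpoints: list[float], loc: float):
    """Worst-case screen: highest exposure against the most sensitive endpoint."""
    rq = risk_quotient(max_exposure, min(endpoints))
    verdict = "Risk: refine at Tier 2" if rq > loc else "No risk"
    return rq, verdict


if __name__ == "__main__":
    # Hypothetical values: peak water concentration 25 ug/L; LC50s for three test species.
    print(tier1_screen(25.0, [40.0, 85.0, 250.0], loc=0.5))  # (0.625, 'Risk: refine at Tier 2')
```

The output is binary: it says nothing about how likely or how large an effect is, which is the limitation described above.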

Refined (Tier 2): Assessments that exceed screening levels move to Tier 2, which replaces worst-case assumptions with more realistic data. Exposure estimates may incorporate regional weather data, specific application methods, or probabilistic distributions [6]. Effects assessment may use Species Sensitivity Distributions (SSDs). Outputs include probabilistic metrics, providing a better sense of the likelihood of adverse effects.
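One common way to build an SSD is to fit a log-normal distribution to single-species toxicity values and read off the concentration hazardous to 5% of species (HC5). The sketch below assumes a log-normal SSD (other distributions are also used), uses hypothetical EC50 values, and relies only on Python's standard `statistics.NormalDist`.

```python
"""Tier 2 sketch: log-normal species sensitivity distribution (SSD)."""
import math
from statistics import NormalDist, mean, stdev


def fit_lognormal_ssd(toxicity_values):
    """Fit a normal distribution to log10-transformed toxicity values (needs >= 2)."""
    logs = [math.log10(v) for v in toxicity_values]
    return NormalDist(mean(logs), stdev(logs))


def hc5(ssd: NormalDist) -> float:
    """Concentration expected to affect 5% of species."""
    return 10 ** ssd.inv_cdf(0.05)


def potentially_affected_fraction(ssd: NormalDist, exposure: float) -> float:
    """Fraction of species whose toxicity value lies below the exposure concentration."""
    return ssd.cdf(math.log10(exposure))


if __name__ == "__main__":
    # Hypothetical EC50 values (ug/L) for seven species.
    ssd = fit_lognormal_ssd([3.2, 8.5, 12.0, 20.0, 45.0, 110.0, 300.0])
    print(f"HC5 = {hc5(ssd):.2f} ug/L")
    print(f"PAF at 10 ug/L = {potentially_affected_fraction(ssd, 10.0):.1%}")
```

The potentially affected fraction (PAF) replaces the pass/fail RQ with a graded estimate of how much of the community is expected to respond at a given exposure.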

Advanced Modeling (Tier 3): For high-stakes or complex decisions, Tier 3 employs mechanistic models to understand how effects manifest at population or community levels. For example, the MCnest model projects the impact of pesticide exposure on avian annual reproductive success [6]. The most significant advancement is the use of population models (guided by frameworks like Pop-GUIDE) that integrate life-history traits, density-dependence, and exposure dynamics to predict impacts on population growth or extinction risk [2]. This moves risk characterization beyond simple quotients to ecologically relevant endpoints.
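The population-level endpoint targeted at Tier 3 can be illustrated with a three-age-class Leslie matrix, whose dominant eigenvalue (lambda) is the asymptotic population growth rate. This is a deliberately simple sketch, not MCnest or a Pop-GUIDE model; the vital rates and the 40% fecundity reduction are hypothetical.

```python
"""Tier 3 sketch: effect of reduced fecundity on population growth rate (lambda)."""


def leslie_matrix(fecundities, survivals):
    """Build a Leslie matrix: fecundities on row 0, survivals on the subdiagonal."""
    n = len(fecundities)
    if len(survivals) != n - 1:
        raise ValueError("need one survival rate per transition between classes")
    mat = [[0.0] * n for _ in range(n)]
    mat[0] = [float(f) for f in fecundities]
    for i, s in enumerate(survivals):
        mat[i + 1][i] = s
    return mat


def dominant_eigenvalue(mat, iters=1000):
    """Power iteration; converges for a primitive (irreducible, aperiodic) matrix."""
    n = len(mat)
    v = [1.0 / n] * n
    lam = 0.0
    for _ in range(iters):
        w = [sum(mat[i][j] * v[j] for j in range(n)) for i in range(n)]
        lam = sum(w)  # v sums to 1, so sum(w) estimates lambda
        v = [x / lam for x in w]
    return lam


if __name__ == "__main__":
    survival = [0.5, 0.7]
    control = [0.0, 1.2, 2.5]
    exposed = [f * (1 - 0.4) for f in control]  # hypothetical 40% fecundity loss
    lam_c = dominant_eigenvalue(leslie_matrix(control, survival))
    lam_e = dominant_eigenvalue(leslie_matrix(exposed, survival))
    print(f"lambda control = {lam_c:.3f}, exposed = {lam_e:.3f}")
```

With these hypothetical rates, lambda falls from roughly 1.16 (growing) to roughly 0.95 (declining) without any change in survival, an outcome a quotient based only on mortality endpoints would not reveal.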

Validation Paradigms: Lessons from Fisheries Stock Assessment

A core challenge in ERA, as in fisheries science, is validating models when the true state of the system (e.g., population-level risk, true stock biomass) is unobservable [5]. Stock assessment science has developed rigorous validation paradigms that can inform ERA.

Table: Comparison of Validation Paradigms from Stock Assessment for ERA Application

| Validation Paradigm | Core Principle | Key Diagnostic Tools | Advantages | Challenges & Considerations | Potential Application in ERA |
|---|---|---|---|---|---|
| "Best Assessment" | Select a single "best" model based on statistical goodness-of-fit to historical data [5]. | Residual analysis; Retrospective analysis (checking stability of estimates over time) [5]. | Simplicity; provides a single answer for managers. | High risk of model misspecification; "cherry-picking" models to fit beliefs; ignores alternative plausible hypotheses [5]. | Analogous to selecting a single toxicity value or exposure model without exploring alternatives. |
| Model Ensemble | Combine outputs from multiple plausible models to represent structural uncertainty [5]. | Weighting schemes (e.g., based on AIC, prediction skill); diversity of model structures [5]. | Quantifies uncertainty from competing hypotheses; can improve prediction robustness. | Requires objective method to weight models; ensemble must be diverse and representative [5]. | Creating ensembles of exposure models (e.g., PWC scenarios) or effects models (e.g., different population model structures). |
| Management Strategy Evaluation (MSE) | Simulation-test the entire management cycle (assessment, decision rule, implementation) under many plausible "states of nature" [5]. | Operating Models (OMs) represent system truth; test Management Procedures (MPs) against OMs; prediction skill validation [5]. | Tests robustness of decisions, not just models; most comprehensive validation framework. | Computationally intensive; requires broad stakeholder buy-in to design OMs and MPs. | Testing the robustness of an ERA-based regulatory trigger (e.g., an RQ LOC) or a remediation goal across many simulated ecosystems. |
| Prediction Skill Validation | Assess a model's ability to predict data withheld from the fitting process (hindcasting) [5]. | Hindcast Analysis: Omit recent data, fit model, predict omitted values, compare to observations [5]. | Objectively measures predictive ability; strong tool for model selection and rejection. | Requires adequate time-series data. | Validating population models by hindcasting species abundance data; validating exposure models by hindcasting environmental monitoring data. |

The uncertainty grid approach used in stock assessments—systematically evaluating combinations of key uncertain parameters—is directly applicable to higher-tier ERA [5]. For instance, an ERA for a pesticide could run an ensemble of simulations varying parameters like degradation rate, application timing, and species sensitivity to understand how these uncertainties propagate to the final risk metric.
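An uncertainty grid is the Cartesian product of the uncertain inputs. In the sketch below, a hypothetical first-order decay model stands in for a real exposure model: each combination of degradation half-life, days between application and a sensitive life stage, and species toxicity value yields one RQ. All values, including the LOC, are illustrative assumptions.

```python
"""Uncertainty-grid sketch: propagate three uncertain inputs to a risk quotient."""
import itertools
import math

HALF_LIVES_D = [5.0, 15.0, 45.0]        # degradation rate uncertainty (days)
LAG_TO_SENSITIVE_STAGE_D = [0, 14, 28]  # application timing relative to spawning (days)
ENDPOINTS_UG_L = [20.0, 60.0, 150.0]    # species sensitivity (ug/L)
PEAK_CONC_UG_L = 40.0                   # hypothetical concentration at application
LOC = 0.5                               # hypothetical level of concern


def grid_risk_quotients():
    """Return ((half_life, lag, endpoint), RQ) for every grid cell."""
    results = []
    for half_life, lag, endpoint in itertools.product(
            HALF_LIVES_D, LAG_TO_SENSITIVE_STAGE_D, ENDPOINTS_UG_L):
        decay_rate = math.log(2) / half_life  # first-order rate constant (1/day)
        conc = PEAK_CONC_UG_L * math.exp(-decay_rate * lag)
        results.append(((half_life, lag, endpoint), conc / endpoint))
    return results


if __name__ == "__main__":
    grid = grid_risk_quotients()
    exceed = [cell for cell, rq in grid if rq > LOC]
    print(f"{len(exceed)} of {len(grid)} scenarios exceed the LOC")
```

Inspecting which cells exceed the LOC, rather than only their share, shows which uncertain inputs drive the risk conclusion.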

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Reagents, Models, and Tools for Ecological Risk Assessment Research

| Tool/Reagent Category | Specific Example(s) | Primary Function in ERA | Associated Tier/Phase |
|---|---|---|---|
| Exposure Fate & Transport Models | PWC (Pesticide in Water Calculator): Estimates pesticide concentrations in water bodies from land applications [6]. AgDRIFT/AGDISP: Predicts atmospheric deposition and spray drift from applications [6]. | Simulate the environmental fate of chemical stressors and estimate exposure concentrations for ecological receptors. | Tier 1-3, Analysis Phase. |
| Terrestrial Exposure & Effects Models | T-REX: Estimates pesticide residues on avian and mammalian food items [6]. MCnest: Integrates toxicity, timing, and life history to estimate impacts on bird population productivity [6]. | Translate pesticide use patterns into dose estimates for terrestrial species; project individual-level exposures to population-level consequences. | T-REX (Tier 1-2); MCnest (Tier 3). |
| Aquatic Bioaccumulation Models | KABAM: Estimates bioaccumulation of hydrophobic pesticides in aquatic food webs and risks to predators [6]. | Models trophic transfer and biomagnification of persistent chemicals, a key exposure pathway for fish, birds, and mammals. | Tier 2-3, Analysis Phase. |
| Population Modeling Framework | Pop-GUIDE (Population modeling Guidance, Use, Interpretation, and Development for ERA): A framework for developing fit-for-purpose population models [2]. | Provides standardized guidance for building, documenting, and interpreting mechanistic population models to assess ecological risks. | Tier 3, Analysis & Risk Characterization. |
| Probabilistic Risk Tools | EcoRisk View (Software Suite): An advanced program for conducting comprehensive multi-pathway probabilistic ecological risk assessments [7]. | Integrates exposure and effects distributions to compute probabilistic risk estimates, moving beyond deterministic RQs. | Tier 2-3, Risk Characterization. |
| Validation & Diagnostics Toolbox | Hindcast Analysis/Prediction Skill Metrics: Tools for omitting data, generating predictions, and comparing them to observations [5]. Uncertainty Grids: Structured sets of model scenarios covering key parameter uncertainties [5]. | Objectively evaluate model predictive performance and quantify the impact of parametric and structural uncertainty on assessment outcomes. | All Tiers, essential for model validation and reporting uncertainty. |

Ecological Risk Assessment (ERA) is the formal process used to evaluate the safety of manufactured chemicals and other anthropogenic stressors on the environment [8]. A fundamental and persistent challenge within this field is the disconnect between what is easily measured in controlled settings—measurement endpoints—and the ultimate ecological values society seeks to protect—assessment endpoints [8].

Measurement endpoints are the quantifiable biological responses (e.g., cell viability, organismal mortality, gene expression) observed in standardized laboratory tests. In contrast, assessment endpoints are explicit expressions of the actual environmental values to be protected, such as the sustainability of a fish population, the biodiversity of a stream community, or the integrity of an ecosystem service [8]. In most ERAs, the measurement endpoint is a toxicity value from a laboratory test on a model organism, while the assessment endpoint is a broader, often vaguely defined concept like "ecosystem function" [8]. This gap creates uncertainty, potentially leading to risk estimates that either underestimate threats (causing environmental degradation) or overestimate them (leading to unnecessary remediation costs) [8].

This guide compares the performance of contemporary strategies designed to narrow this endpoint gap. Framed within the broader thesis of validating ecological risk assessments with real-world ecosystem status reports, we objectively evaluate methods ranging from traditional bioassays to modern computational models, supported by experimental data.

Comparative Analysis of Methodological Approaches

This section provides a comparative evaluation of prominent methodologies, summarizing their core principles, advantages, limitations, and illustrative data.

Tiered Assessment Frameworks

Traditional ERA often employs a tiered framework, progressing from simple, conservative screens to complex, site-specific studies [8]. The table below compares the key characteristics of different tiers.

Table: Comparison of Tiers in Ecological Risk Assessment [8]

| Tier Level | Basic Description | Typical Risk Metric | Advantages | Limitations |
|---|---|---|---|---|
| Tier I | Conservative screening analysis to rule out negligible risks. | Hazard/risk quotient compared to a Level of Concern. | Rapid, cost-effective, requires minimal data. | Highly conservative; may overestimate risk; lacks probabilistic realism. |
| Tier II | Refined analysis incorporating variability and uncertainty. | Probability of adverse effect to an ecological receptor. | More realistic risk estimate; begins to quantify uncertainty. | Requires more robust exposure and effects data. |
| Tier III/IV | Highly refined or site-specific analysis with field studies. | Multiple lines of evidence from environmentally relevant data. | High ecological relevance; can directly address assessment endpoints. | Resource-intensive, time-consuming, complex to interpret. |

Bioassay-Based Screening Strategies

Bioassays are fundamental measurement tools. A 2025 comparative study evaluated the sensitivity of bioassays using unicellular organisms and vertebrate cell lines against 21 chemicals and 279 wastewater samples [9]. The performance data highlights significant differences in utility for screening.

Table: Comparative Performance of Bioassays for Detecting Chemical Toxicity [9]

| Bioassay Type | Test System | Sensitivity to 21 Ref. Chemicals | Responsiveness to 279 Env. Samples | Key Strengths | Primary Weaknesses |
|---|---|---|---|---|---|
| Algal Assay | Raphidocelis subcapitata | >80% detected | >92% responded | High sensitivity to broad chemicals; protein/lipid-free medium enhances bioavailability. | May not detect vertebrate-specific toxicities. |
| Bacterial Assay | Escherichia coli | Moderate | Data not specified | Rapid, cost-effective; sensitive to specific modes (e.g., antibiotics). | Lower overall sensitivity in complex mixtures. |
| Yeast Assay | Saccharomyces cerevisiae | Least responsive | Data not specified | Eukaryotic model; useful for fungicide detection. | Generally low sensitivity for broad screening. |
| Vertebrate Cell Viability | Various fish and mammalian cell lines | Variable by cell line | 21–53% responded | Detects vertebrate-specific pathway disturbances. | Medium composition can reduce bioavailability of lipophilic compounds. |
| Combined Assay | Algae + DR-EcoScreen cells | Not specified | 96.4% of toxicities captured | High-throughput, cost-effective strategy for broad screening. | Requires multiple assay platforms. |

The study concluded that a combined battery using an algal assay and a vertebrate cell line (DR-EcoScreen) captured 96.4% of detected toxicities in environmental samples, offering a powerful high-throughput screening strategy [9]. This aligns with the principle that no single measurement endpoint is sufficient [8].

Computational & Machine Learning Approaches

Computational methods, particularly machine learning (ML), are emerging as powerful tools for predicting toxicity and bridging data gaps. The ToxACoL paradigm represents a significant advance in acute toxicity assessment for multi-species and multi-endpoint prediction [10].

Table: Comparison of Machine Learning Paradigms for Acute Toxicity Prediction [10]

| ML Paradigm | Core Approach | Advantages | Limitations | Performance Note |
|---|---|---|---|---|
| Single-Task Learning (STL) | Models one toxicity endpoint independently. | Simple, interpretable models for data-rich endpoints. | Poor performance on data-scarce endpoints; ignores endpoint correlations. | Random Forest and Graph Neural Networks often perform well. |
| Multi-Task Learning (MTL) | Shares representation learning across multiple related endpoints. | Improves average performance across all endpoints via knowledge sharing. | Struggles with highly imbalanced data; may not improve scarce endpoints. | Better average performance than STL but can dilute focus. |
| Adjoint Correlation Learning (ToxACoL) | Models endpoint relationships via graph topology; learns compound and endpoint representations simultaneously. | Dramatically improves prediction for data-scarce endpoints (e.g., 43-87% for human endpoints); enables extrapolation. | Higher model complexity; requires careful graph construction. | Reduces needed training data by 70-80% for sparse endpoints, aligning with 3Rs principles. |

ToxACoL’s “endpoint-aware” learning explicitly models the relationships between different experimental conditions (species, route), which is crucial for extrapolating from standard test species to sensitive ecological receptors or humans [10].

Qualitative Ecosystem-Based Tools

In data-poor contexts, such as fisheries management, qualitative and semi-quantitative tools are vital for incorporating ecosystem information. The Ecological Risk Assessment for the Effects of Fishing (ERAEF) framework is one such approach [11].

Table: Comparison of Qualitative ERA Tools in Fisheries Management [12] [11]

| Tool / Approach | Application Context | Methodology | Output | Utility for Bridging the Gap |
|---|---|---|---|---|
| Scale Intensity Consequence Analysis (SICA) | Initial, qualitative screening of fishery impacts. | Expert judgment to rank risks based on scale, intensity, and consequence of impact. | Prioritizes issues for further assessment. | Incorporates broad ecosystem considerations early in the assessment process. |
| Productivity Susceptibility Analysis (PSA) | Semi-quantitative risk assessment for bycatch species. | Scores species based on productivity (life history) and susceptibility to the fishery. | Relative vulnerability ranking (Low, Moderate, High). | Translates limited population data into management priorities for non-target species. |
| Ecosystem-Based Risk Tables | Integrating qualitative ecosystem trends into single-species management. | Distills complex ecosystem information into qualitative advice for risk tolerance adjustment. | Informs flexible management decisions amidst uncertainty. | Directly incorporates ecosystem-level assessment endpoints (e.g., stability) into stock management. |

An application of SICA and PSA to an Amazonian shrimp trawl fishery identified 12 out of 47 bycatch species as highly vulnerable, directing future management and data collection efforts [11]. These tools make the link between the measurable (catch data) and the assessment goal (ecosystem sustainability) more transparent in data-limited situations [12].
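A minimal PSA scoring sketch, assuming each attribute is scored from 1 (low risk) to 3 (high risk), so a low-productivity species scores 3, and vulnerability is the Euclidean distance of the (productivity, susceptibility) point from the origin. Two simplifications are assumptions: both axes are averaged arithmetically (the ERAEF susceptibility score combines attributes multiplicatively), and the default cutoffs of 2.64 and 3.18 are the values reported for the Australian ERAEF PSA, to be checked against the framework actually applied. Species names and scores are hypothetical.

```python
"""PSA sketch: combine productivity and susceptibility scores into a risk category."""
import math
from statistics import mean


def psa_vulnerability(productivity_scores, susceptibility_scores,
                      low_cut=2.64, high_cut=3.18):
    """Return (distance from origin, category) for attribute scores on a 1-3 scale.

    Assumption: both axes are simple means; cutoffs are defaults to be verified.
    """
    p = mean(productivity_scores)
    s = mean(susceptibility_scores)
    v = math.hypot(p, s)  # Euclidean distance from the (0, 0) origin
    if v < low_cut:
        return v, "Low"
    if v <= high_cut:
        return v, "Medium"
    return v, "High"


if __name__ == "__main__":
    species = {  # hypothetical bycatch species: (productivity, susceptibility) scores
        "ray A": ([3, 3, 2, 3, 3, 2, 3], [3, 2, 3, 3]),
        "shrimp B": ([1, 1, 1, 2, 1, 1, 1], [3, 3, 2, 2]),
        "fish C": ([1, 1, 1, 1, 1, 1, 1], [1, 2, 1, 1]),
    }
    for name, (prod, susc) in species.items():
        v, cat = psa_vulnerability(prod, susc)
        print(f"{name}: V = {v:.2f} -> {cat}")
```

The result is a relative ranking: a "High" category flags a species for closer study but does not estimate population impact.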

Experimental Protocols for Key Studies

Bioassay Sensitivity and Screening Battery Study [9]

Objective: To identify the most sensitive bioassays for detecting a wide range of toxicological effects in environmental samples.

- Chemical Selection: 21 chemicals with diverse modes of action (e.g., herbicides, pharmaceuticals, metals) and known environmental relevance were selected.

- Bioassay Panel: Three unicellular organisms (the green alga Raphidocelis subcapitata, the bacterium Escherichia coli, and the yeast Saccharomyces cerevisiae) and five vertebrate cell lines (including fish and mammalian cells) were cultured under standard conditions.

- Exposure & Measurement:

  - Unicellular organisms: Exposed in 96-well plates; growth inhibition was measured via optical density or fluorescence.

  - Vertebrate cells: Exposed for 24 hours; cell viability was measured using an MTS assay, which quantifies mitochondrial metabolic activity.

- Environmental Sampling: 279 wastewater treatment plant effluent samples were collected, concentrated, and screened using the most sensitive assays from the initial chemical testing.

- Data Analysis: Sensitivity was calculated as the percentage of chemicals or samples eliciting a significant toxic response. Complementarity between assays was analyzed to design an optimal screening battery.
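The sensitivity and complementarity analysis in the last step can be sketched as set operations on a record of which samples each assay flags. The sample IDs, responses, and sample count below are hypothetical, and exhaustive search over assay combinations is one simple way (not necessarily the study's way) to choose a battery; it is feasible only because the panel is small.

```python
"""Bioassay battery sketch: per-assay sensitivity and combined coverage."""
from itertools import combinations

N_SAMPLES = 12  # hypothetical number of samples screened
# Hypothetical results: sample IDs with a significant toxic response in each assay.
RESPONSIVE = {
    "algae": {1, 2, 3, 4, 5, 6, 7},
    "E. coli": {2, 8},
    "yeast": {3},
    "fish cells": {4, 9},
    "DR-EcoScreen": {5, 9, 10},
}


def sensitivity(assay: str) -> float:
    """Share of all screened samples that elicited a response in this assay."""
    return len(RESPONSIVE[assay]) / N_SAMPLES


def battery_coverage(battery) -> float:
    """Share of samples detected by any assay that this battery also detects."""
    detected_by_any = set().union(*RESPONSIVE.values())
    captured = set().union(*(RESPONSIVE[a] for a in battery))
    return len(captured) / len(detected_by_any)


def best_battery(size: int):
    """Exhaustively search all assay combinations of the given size."""
    return max(combinations(sorted(RESPONSIVE), size), key=battery_coverage)


if __name__ == "__main__":
    for assay in sorted(RESPONSIVE):
        print(f"{assay}: sensitivity {sensitivity(assay):.0%}")
    pair = best_battery(2)
    print(pair, f"captures {battery_coverage(pair):.0%} of detected toxicity")
```

The best pair is not simply the two most sensitive assays: a less sensitive assay that flags samples the algal assay misses adds more coverage.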

ToxACoL Model Development [10]

Objective: To develop a machine learning model that accurately predicts data-scarce toxicity endpoints by learning relationships between multiple experimental conditions.

- Data Curation: A dataset of 59 acute toxicity endpoints (e.g., LD50, TDLo) covering over 80,000 compounds was assembled from public databases like TOXRIC. Endpoints varied by species, administration route, and measurement indicator.

- Graph Construction: A graph was built where nodes represent toxicity endpoints. Edges and their weights between nodes were established based on the similarity of experimental conditions and correlations in available toxicity data.

- Model Architecture: The ToxACoL model uses a dual-branch architecture:

  - Compound Branch: A neural network processes molecular representations (e.g., SMILES strings).

  - Endpoint Graph Branch: A graph convolution network propagates information across the endpoint relationship graph.

  - Adjoint Correlation Mechanism: At each layer, the two branches interact, allowing the model to learn endpoint-aware compound representations.

- Training & Validation: The model was trained to predict toxicity values for all endpoints simultaneously. Its performance was benchmarked against state-of-the-art methods, with particular attention to accuracy on data-scarce human toxicity endpoints.

Visualizing the Pathway from Measurement to Assessment

Figure: The Biological Hierarchy from Measurement to Assessment Endpoints

Figure: Modern Integrated Workflow for Validating ERA

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential materials and tools featured in the discussed approaches for conducting research aimed at bridging the endpoint gap.

Table: Essential Research Tools for Advanced Ecological Risk Assessment

| Tool/Reagent Category | Specific Example | Primary Function in Research | Relevance to Endpoint Gap |
|---|---|---|---|
| High-Sensitivity Bioassay Organisms | Raphidocelis subcapitata (green alga) | Serves as a sensitive, broad-spectrum toxicity sensor in screening batteries [9]. | Provides an efficient, ecologically relevant measurement endpoint for primary producers. |
| Vertebrate Cell Line Assays | DR-EcoScreen cells; various fish cell lines | Detects disturbances in vertebrate-specific biological pathways via viability (MTS) or reporter gene assays [9]. | Bridges in vitro measurements to potential impacts on higher organisms. |
| Computational Toxicity Models | ToxACoL Model Framework | Predicts multi-species acute toxicity and extrapolates to data-scarce endpoints using adjoint correlation learning [10]. | Directly models relationships between measurement endpoints to infer assessment-level risks. |
| Qualitative Risk Assessment Frameworks | SICA & PSA Tools (ERAEF) | Provides structured, expert-driven assessment of risk in data-poor contexts (e.g., fisheries bycatch) [11]. | Translates limited data into vulnerability rankings, linking operational data to population sustainability goals. |
| Ecosystem Service Models | InVEST Carbon Stock Model | Quantifies ecosystem functions (like carbon sequestration) based on land use/cover data [13]. | Connects landscape-scale measurements to assessment endpoints concerning climate regulation services. |
| Validated Alternative Test Methods | OECD Test Guidelines | Provides internationally accepted standardized procedures for chemical safety testing [14]. | Ensures measurement endpoints are reliable, reproducible, and fit for regulatory use in higher-tier assessments. |

The core challenge of bridging measurement and assessment endpoints cannot be solved by a single method. Validation of ecological risk assessment requires a convergent, multi-pronged strategy:

- Leverage Combined Bioassays: High-throughput screening batteries, such as combining algal and vertebrate cell assays, maximize the detection of bioactive substances with high ecological relevance [9].

- Embrace Computational Extrapolation: Advanced machine learning models like ToxACoL that explicitly learn relationships between endpoints are powerful for predicting risks for data-scarce species and scenarios, reducing uncertainty [10].

- Integrate Qualitative and Quantitative Data: In management contexts, frameworks like ERAEF successfully incorporate qualitative ecosystem information into decision-making, especially where quantitative data is limited [12] [11].

- Commit to Iterative Ground-Truthing: The ultimate validation of any approach lies in its ability to predict or correlate with real-world ecosystem status reports, whether from fishery stock assessments [11], carbon stock monitoring [13], or biodiversity surveys. This requires a continuous cycle of measurement, prediction, monitoring, and model refinement.

The future of robust ERA lies in the integrated application of these complementary tools, moving beyond reliance on isolated measurement endpoints toward a holistic, evidence-based prediction of true ecological outcomes.

This guide provides a comparative analysis of two critical screening tools used in ecological risk assessment (ERA): the Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE). Framed within broader thesis research on validating ERA outcomes with empirical stock status reports, this guide objectively evaluates each tool's performance, supported by experimental data and detailed methodologies. The analysis is intended for researchers and scientists developing and applying risk assessment frameworks in environmental management and conservation.

Comparative Performance Analysis: PSA vs. SAFE

The core function of ERA screening tools is to prioritize ecological components or activities for more detailed assessment. The following table summarizes the key design principles, outputs, and validation contexts for PSA and SAFE.

Table 1: Core Design and Application of PSA and SAFE Frameworks

| Feature | Productivity and Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |
|---|---|---|
| Primary Objective | A semi-quantitative, rapid risk-screening tool to evaluate the vulnerability of species to a specific fishery or pressure [15]. | A quantitative screening framework to assess the sustainability of fishing impacts on target and non-target species, including species without formal stock assessments [15]. |
| Methodological Approach | Risk is scored based on attributes related to Productivity (e.g., growth rate, fecundity) and Susceptibility (e.g., overlap with gear, catchability) [15]. | Fishing mortality is estimated (e.g., from the overlap between species distribution and fishing effort, and from gear catchability) and compared with reference points derived from life-history parameters [15]. |
| Key Outputs | A relative vulnerability score or rank, categorizing species as low, medium, or high risk [15]. | An estimate of whether fishing mortality rates are sustainable relative to biological reference points (e.g., FMSY) [15]. |
| Strengths | Rapid, requires less data than full assessments, effective for data-poor species, useful for multi-species comparisons [15]. | Provides quantitative estimates of fishing mortality relative to reference points, directly comparable with stock status and sustainability metrics [15]. |
| Limitations | Semi-quantitative scores can be subjective; does not provide absolute estimates of risk or population impact [15]. | Requires more quantitative inputs (e.g., spatial distribution, catchability, life-history parameters) than PSA; results are sensitive to the assumptions used to estimate fishing mortality and reference points [15]. |
| Validation Context | Validation often involves comparing PSA risk rankings with independent population trends or outcomes from more complex quantitative models [15]. | Validation typically involves comparing SAFE risk categories with stock status reports from formal assessments and with monitored population trends [15]. |

Experimental Protocols and Validation Methodologies

Validation of ERA tools against real-world outcomes is a cornerstone of robust ecological science. The protocols below outline generalized methodologies for testing PSA and SAFE frameworks.

2.1 Protocol for Validating PSA Risk Rankings

This protocol describes a retrospective analysis to validate PSA outcomes against independent stock status data.

- Problem Formulation & Species Selection: Define the geographic region and fishery. Select a broad suite of species (both target and non-target) impacted by the fishing activity [1].

- PSA Application: For each species, collect data and score the predefined Productivity and Susceptibility attributes according to the standardized PSA framework [15]. Calculate the composite vulnerability score and assign a risk category (e.g., High, Medium, Low).

- Independent Status Data Collection: Collate time-series data on population biomass, abundance indices, or fishing mortality from stock assessments, scientific surveys, or fishery-independent monitoring programs. Categorize each species' population trend as "Declining," "Stable," or "Increasing" over a relevant period [15].

- Statistical Validation: Construct a contingency table comparing the PSA risk category (predictor) with the observed population trend category (response). Use statistical tests like Chi-square or calculation of correct classification rates to determine if higher PSA risk scores are significantly associated with declining population status [15].

- Sensitivity Analysis: Test the robustness of the PSA rankings by varying input data or scoring weights within plausible ranges to see if risk categories change significantly for key species [15].
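The statistical-validation step can be sketched in Python with the standard library alone. This is a minimal illustration, not the method of [15]: the species data are hypothetical, the rule that counts High→Declining, Medium→Stable and Low→Increasing as a "correct" classification is an assumption, and the chi-square p-value uses the exact closed form that holds only for even degrees of freedom (a 3 × 3 table has 4), so other table shapes would need a statistics library.

```python
import math
from collections import Counter

# PSA risk categories (predictor) and observed trend categories (response).
RISK = ["High", "Medium", "Low"]
TREND = ["Declining", "Stable", "Increasing"]

# Hypothetical rule for counting a PSA call as "correct"; adapt to the study design.
EXPECTED_TREND = {"High": "Declining", "Medium": "Stable", "Low": "Increasing"}


def contingency_table(pairs):
    """Cross-tabulate (psa_risk, observed_trend) pairs into a RISK x TREND count table."""
    counts = Counter(pairs)
    return [[counts[(risk, trend)] for trend in TREND] for risk in RISK]


def chi_square(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            if expected > 0:
                stat += (observed - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return stat, df


def chi2_upper_tail(x, df):
    """P(X > x) for a chi-square variable; this closed form is valid only for even df."""
    if df % 2:
        raise ValueError("closed form implemented here needs even degrees of freedom")
    half = x / 2.0
    return math.exp(-half) * sum(half ** k / math.factorial(k) for k in range(df // 2))


def correct_classification_rate(pairs):
    """Share of species whose observed trend matches the trend expected for its PSA category."""
    return sum(EXPECTED_TREND[risk] == trend for risk, trend in pairs) / len(pairs)


# Hypothetical validation data set of 28 species.
pairs = ([("High", "Declining")] * 8 + [("High", "Stable")] * 2
         + [("Medium", "Declining")] * 2 + [("Medium", "Stable")] * 6
         + [("Low", "Stable")] * 3 + [("Low", "Increasing")] * 7)
table = contingency_table(pairs)
stat, df = chi_square(table)
print(table, round(stat, 2), df, chi2_upper_tail(stat, df), correct_classification_rate(pairs))
```

In this hypothetical set several expected cell counts fall below 5, so the chi-square approximation is rough; with samples this small an exact test of independence is usually preferred.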

2.2 Protocol for Validating SAFE Sustainability Indicators

This protocol tests the accuracy of SAFE framework outputs against subsequent observed stock status.

- Model Setup and Historical Analysis: Apply the SAFE framework to historical catch and biological data for a well-assessed stock. Estimate key sustainability indicators, such as the ratio of fishing mortality (F) to the target reference point (FMSY), for past years [15].

- Prediction and Benchmarking: Using data available up to a chosen historical year (e.g., 2010), run the SAFE model to estimate sustainability indicators for that year. Record the model's diagnosis (e.g., F > FMSY, indicating unsustainable fishing) [15].

- Comparison with "True" Status: Compare the SAFE diagnosis from Step 2 with the "accepted" stock status for that same year, as determined later by a comprehensive, peer-reviewed stock assessment that incorporated more complete data [15].

- Performance Metrics Calculation: Repeat the process for multiple stocks and time periods. Calculate diagnostic performance metrics for the SAFE framework, including:

- Sensitivity: Proportion of stocks truly subject to overfishing (F > FMSY in the accepted assessment) that SAFE correctly flagged.

- Specificity: Proportion of truly sustainably fished stocks (F ≤ FMSY) that SAFE correctly identified as such.

- Precision: Proportion of stocks flagged by SAFE as subject to overfishing that truly were [15].
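A sketch of the performance-metrics step, assuming each hindcast is reduced to a pair of F/FMSY ratios (the SAFE estimate for the chosen year and the ratio accepted in the later, more complete assessment) and that a ratio above 1.0 counts as overfishing. The records and function names are hypothetical.

```python
def flags_overfishing(f_over_fmsy, threshold=1.0):
    """Diagnosis used here: fishing mortality above the reference point (F/FMSY > 1)."""
    return f_over_fmsy > threshold


def diagnostic_metrics(records):
    """records: (safe_f_ratio, accepted_f_ratio) for each stock-year hindcast."""
    tp = fp = fn = tn = 0
    for safe_ratio, accepted_ratio in records:
        predicted = flags_overfishing(safe_ratio)
        truth = flags_overfishing(accepted_ratio)
        if predicted and truth:
            tp += 1
        elif predicted:
            fp += 1
        elif truth:
            fn += 1
        else:
            tn += 1

    def ratio(a, b):
        return a / b if b else float("nan")

    return {
        "sensitivity": ratio(tp, tp + fn),   # truly above FMSY and flagged
        "specificity": ratio(tn, tn + fp),   # truly sustainable and not flagged
        "precision": ratio(tp, tp + fp),     # flagged and truly above FMSY
    }


# Hypothetical hindcasts: (SAFE F/FMSY for the chosen year, accepted F/FMSY for that year).
records = [(1.3, 1.2), (0.8, 1.1), (1.1, 0.9), (0.7, 0.6), (1.5, 1.4), (0.9, 0.8), (0.5, 0.4)]
print(diagnostic_metrics(records))
```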

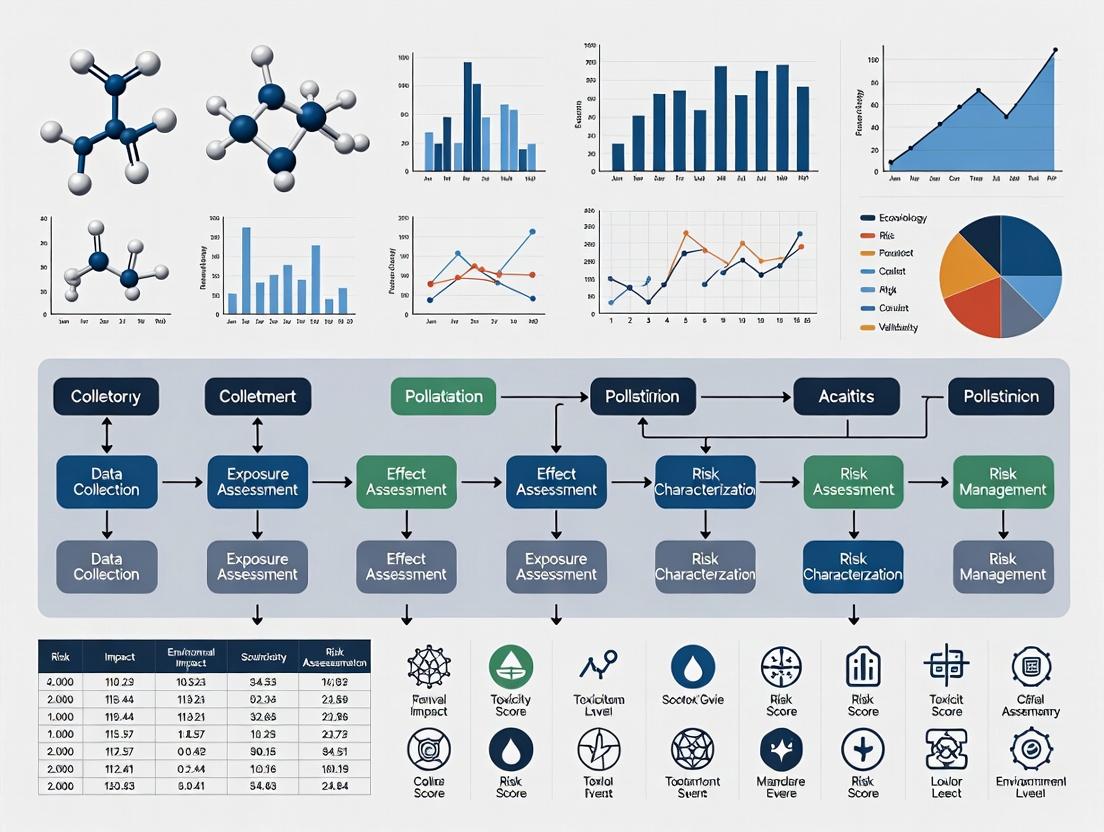

Visualizing Methodological Frameworks

Comparative ERA Tool Workflow & Validation

Conducting rigorous ERA tool development and validation requires specific data resources and analytical tools.

Table 2: Essential Resources for ERA Tool Development and Validation

| Resource Category | Specific Tool / Database | Function in ERA Tool Research |
|---|---|---|
| Biological Traits Data | FishBase, SeaLifeBase | Provides standardized species-level data on productivity attributes (e.g., growth rate, age at maturity) essential for PSA scoring and SAFE model parameterization [15]. |
| Fisheries Interaction Data | FAO Catch Databases, Regional Fishery Management Organization (RFMO) reports | Supplies time-series catch, bycatch, and effort data needed to calculate susceptibility in PSA and fishing mortality in SAFE frameworks [15]. |
| Stock Status Benchmarks | RAM Legacy Stock Assessment Database, IUCN Red List | Provides "gold standard" population trends and conservation statuses used as independent validation metrics to test the accuracy of PSA and SAFE outputs [15]. |
| Statistical & Modeling Software | R Statistical Environment (with packages like fishmethods, SAFR), AD Model Builder | Enables the quantitative analysis for validation (e.g., classification error rates), running population models for SAFE, and conducting sensitivity analyses [15]. |
| Guidance & Frameworks | U.S. EPA EcoBox Toolkit, NOAA Fisheries PSA Guidelines [15] [16] [1] | Offers established protocols, conceptual models, and best practices for structuring ERA problems, defining assessment endpoints, and applying standardized tools like PSA. |

In summary, the selection between PSA and SAFE is contingent upon the assessment's objectives, data availability, and required management outputs. PSA serves as an efficient, data-limited screening tool to triage risks, while SAFE provides a quantitative, sustainability-focused assessment suitable for data-moderate situations. Validation against independent stock status reports, as outlined in the experimental protocols, is critical for advancing ERA science, testing tool reliability, and strengthening the evidence base for ecological management decisions. This comparative analysis provides a foundation for such thesis research, highlighting the complementary roles these tools play in a robust ecological risk assessment paradigm.

Within the framework of ecological risk assessment (ERA) validation, two critical constructs emerge: benchmark data and Stock Status Reports (FSRs). Benchmark data refers to standardized, quantitative values that represent chemical concentration thresholds below which adverse effects on ecological receptors are not expected [17]. These benchmarks, derived from toxicity studies on aquatic and terrestrial organisms, serve as foundational validation standards against which site-specific contamination data are compared to screen for potential risk [18].

An FSR (Stock Status Report), in this ecological context, is conceptualized as a comprehensive summary and synthesis. It integrates measured environmental concentrations (MECs) of chemicals of concern (COCs) with relevant ecological benchmark data to define the current "status" of a system—whether it is potentially impaired or within acceptable limits [19]. The overarching thesis posits that the iterative comparison of site data (MECs) against validated, hierarchical benchmarks forms the core of a defensible validation protocol for ERA. This process transforms raw monitoring data into a validated risk characterization, essential for informed decision-making in environmental remediation and protection [19] [20].

Different regulatory and research entities develop and curate ecological benchmark databases, each with distinct methodologies, taxonomic focuses, and intended applications. The selection of an appropriate benchmark source is a critical first step in validating an FSR. The table below provides a comparative overview of three prominent sources.

Table 1: Comparison of Major Ecological Benchmark Databases for Validation

| Source / Program | Primary Authority | Key Media Covered | Taxonomic Focus | Core Application in Validation | Update Frequency |
|---|---|---|---|---|---|
| Aquatic Life Benchmarks [18] | U.S. EPA Office of Pesticide Programs | Surface Water, Sediment | Freshwater & Marine Vertebrates/Invertebrates, Plants | Screening-level risk assessment for pesticide registration and monitoring; primary comparator for aquatic MECs. | Annual (last update Sept. 2025) |
| TCEQ Ecological Benchmark Tables [19] | Texas Commission on Environmental Quality | Surface Water, Sediment, Soil | Aquatic organisms, Soil invertebrates, Wildlife, Plants | State-level remediation projects under TRRP rule; used for Tier 1 & Tier 2 screening-level ecological risk assessments (SLERA). | Periodic (last update Aug. 2023) |
| Ecological Benchmark Tool [17] | Oak Ridge National Laboratory (ORNL) | Surface Water, Sediment, Soil, Biota | Aquatic organisms, Soil invertebrates, Mammals, Terrestrial plants | Comprehensive screening for a wide range of chemicals and receptors; often used in preliminary site investigations and research. | Not explicitly stated (compilation from multiple agencies) |

The experimental protocol for employing these benchmarks in FSR validation follows a consistent workflow. First, site investigation yields chemical-specific MECs for environmental media. Second, a benchmark selection process identifies the most appropriate, protective value (often the lowest chronic or acute value for the most sensitive relevant species) from an authoritative source like those above [18]. Third, a quantitative comparison is performed, typically by calculating a hazard quotient (HQ = MEC / Benchmark). An HQ > 1.0 indicates a potential risk, triggering further tiered assessment [19]. This direct comparison constitutes the fundamental validation check, determining whether concentrations are "acceptable" against the standard.
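The benchmark-selection and hazard-quotient steps reduce to a few lines. In this sketch the candidate benchmarks and concentrations are hypothetical, all values are assumed to share the same units (µg/L), and "most protective" is taken to mean the numerically lowest candidate, as described above.

```python
def most_protective(benchmarks):
    """Pick the lowest (most protective) candidate benchmark; returns (name, value)."""
    name = min(benchmarks, key=benchmarks.get)
    return name, benchmarks[name]


def hazard_quotient(mec, benchmark):
    """HQ = MEC / benchmark; both values must be in the same units (e.g. ug/L)."""
    if benchmark <= 0:
        raise ValueError("benchmark must be positive")
    return mec / benchmark


# Hypothetical candidate benchmarks (ug/L) for one chemical in surface water.
candidates = {"fish_acute": 45.0, "invertebrate_acute": 2.5, "invertebrate_chronic": 0.5}
name, value = most_protective(candidates)
for mec in (0.25, 1.5):  # hypothetical measured environmental concentrations (ug/L)
    hq = hazard_quotient(mec, value)
    verdict = "potential risk: next tier" if hq > 1.0 else "below screening benchmark"
    print(f"MEC={mec} vs {name}={value}: HQ={hq:.2f} ({verdict})")
```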

Experimental Protocols for Benchmark Derivation and Application

The scientific validity of an FSR hinges on the rigor of the benchmarks it employs. These values are not arbitrary but are generated through standardized toxicity testing and quantitative assessment protocols.

Protocol for Benchmark Derivation (e.g., EPA Aquatic Life Benchmarks):

- Data Collection & Selection: Toxicity values are extracted from studies that comply with Harmonized Test Guidelines (e.g., 40 CFR 158) and the Evaluation Guidelines for Ecological Toxicity Data in the Open Literature [18]. Studies are evaluated for quality, relevance, and reliability.

- Species Sensitivity Analysis: For a given chemical, all acceptable toxicity endpoints (e.g., LC50 for acute effects, NOAEC/EC10 for chronic effects) are assembled for various species (fish, invertebrates, plants).

- Critical Value Determination: The benchmark is typically based on the most sensitive value from the available data for each taxonomic group (e.g., freshwater invertebrates) [18]. In some frameworks, statistical methods like species sensitivity distributions (SSDs) may be used to derive a protective concentration (e.g., HC5).

- Benchmark Specification: Final values are reported as concentrations (e.g., µg/L) for specific media, effect types (acute/chronic), and receptor groups [18].
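Where an SSD is used, one common way to obtain an HC5 is to fit a log-normal distribution to one toxicity value per species and take its 5th percentile. The sketch below assumes that log-normal form and a simple moment fit on log10 values; the NOAEC values are hypothetical, and a real derivation would typically compare several distributions, use more species, and report confidence limits.

```python
import math
from statistics import NormalDist, mean, stdev


def hc_p_lognormal(toxicity_values, p=0.05):
    """Hazardous concentration for a fraction p of species from a log-normal SSD.

    Fits a normal distribution to log10 toxicity endpoints (one value per species)
    using the sample mean and standard deviation, then back-transforms its p-quantile.
    """
    if len(toxicity_values) < 2 or min(toxicity_values) <= 0:
        raise ValueError("need at least two positive toxicity values")
    logs = [math.log10(v) for v in toxicity_values]
    quantile = NormalDist(mean(logs), stdev(logs)).inv_cdf(p)
    return 10 ** quantile


# Hypothetical chronic NOAEC values (ug/L), one per species.
noaecs = [1.0, 10.0, 100.0, 1000.0]
print(round(hc_p_lognormal(noaecs), 3))
```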

Protocol for Site-Specific FSR Validation Using Benchmarks:

- Exposure Concentration Characterization: Representative environmental samples are collected and analyzed using approved analytical methods (e.g., EPA SW-846 methods) to determine MECs [19].

- Benchmark Alignment: Select benchmarks that match the site's media (water, soil), relevant ecological receptors (based on a site conceptual model), and appropriate exposure duration (acute vs. chronic).

- Risk Quantification: Calculate Hazard Quotients (HQs) or similar risk indices for each COC-receptor pair.

- Risk Characterization & FSR Compilation: Interpret HQ data. COCs with HQ < 1 across all receptors may be eliminated from further consideration. COCs with HQ > 1 are identified as potential drivers of risk, and their data form the core of the FSR, which summarizes the "status" of the site [19]. This report then undergoes peer review, a key validation step where independent experts assess the correctness of data application and interpretation [20].

The Scientist's Toolkit: Essential Reagents and Materials

The derivation and application of ecological benchmarks require specialized tools and data resources. The following table details key components of the methodological toolkit.

Table 2: Research Reagent Solutions for Ecological Benchmark Development and FSR Validation

| Tool / Material | Function in Validation Process | Example Source / Standard |
|---|---|---|
| Standardized Toxicity Test Organisms | Provide consistent, reproducible biological response data for benchmark derivation. Examples include fathead minnow (Pimephales promelas), water flea (Daphnia magna), and earthworm (Eisenia fetida). | EPA Ecological Effects Test Guidelines (OCSPP 850 series) |
| Analytical Reference Standards & Certified Materials | Ensure accurate quantification of chemical concentrations in environmental samples and dosing solutions in toxicity tests, forming the reliable numerator for HQ calculations. | Commercial chemical suppliers, NIST Standard Reference Materials |
| Ecological Benchmark Databases | Provide the validated denominator values (benchmarks) for risk calculations. They are the core reference for FSR compilation. | U.S. EPA Aquatic Life Benchmarks [18], TCEQ Ecological Benchmark Tables [19], ORNL Ecological Benchmark Tool [17] |
| Quality Assurance/Quality Control (QA/QC) Protocols | Govern sample collection, handling, analysis, and data management to ensure the integrity and defensibility of both toxicity test results and field MEC data. | EPA guidance (e.g., QA/R-5), individual laboratory QA/QC plans |
| Statistical Analysis Software | Used for deriving benchmarks (SSD modeling, uncertainty analysis) and analyzing site data (calculating representative concentrations, HQs, confidence intervals). | R, SSD Master, EPA ProUCL |

Visualizing the Validation Workflow and Conceptual Relationships

The validation of an FSR through benchmark comparison is a systematic process. The following diagram illustrates the core workflow from data generation to risk-based decision-making.

Validation Workflow for Ecological Stock Status Reports

The conceptual foundation for this workflow rests on aligning different forms of validity with assessment stages. The next diagram maps these key validity concepts onto the ERA framework.

Validity Concepts Mapped to Risk Assessment Stages

Ecological Risk Assessment (ERA) tools are critical for informing environmental management decisions, from chemical regulation to fisheries management [3]. The growing complexity of ecological challenges and the integration of novel data sources, such as ecosystem services and stock status reports, make the quantitative validation of these tools not just beneficial but imperative [21] [22]. Validation moves beyond conceptual appeal, providing a measurable assurance that a tool performs reliably within its defined scope, quantifying its precision, accuracy, and uncertainty [23]. This article establishes a validation framework and provides comparative performance data for contemporary ERA methodologies, framing the discussion within the essential integration of stock status information to achieve sustainable ecosystem management [24] [22].

Comparison Guide 1: Bayesian Integration of Multiple Evidence Lines

This quantitative framework synthesizes disparate data types—such as risk assessments, biomonitoring, and epidemiology studies—into a single, probabilistic risk estimate using Bayesian Markov Chain Monte Carlo (MCMC) methods [25].

- Performance Metrics & Data: The tool's output is a probability distribution of the Risk Quotient (RQ), allowing decision-makers to calculate the probability of exceeding a regulatory Level of Concern (LOC). A case study on insecticides demonstrated high precision in final risk estimates [25].

- Experimental Protocol: The validation of a Bayesian integration tool follows a defined protocol [25]:

- Define Parameter of Interest: Establish the risk metric (e.g., Risk Quotient for a specific population).

- Collect Prior Data: Gather all existing, relevant studies to construct an initial (prior) probability distribution.

- Model Likelihood: For each new line of evidence (study), develop a statistical model that describes the probability of the observed data given different parameter values.

- Compute Posterior via MCMC: Use computational sampling (MCMC) to combine the prior and the likelihood models, generating an updated (posterior) probability distribution.

- Validate with Hold-Out Data: Reserve a portion of the evidence or a subsequent study. Compare the tool's posterior predictive distribution against this independent data to assess calibration and accuracy.
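The prior, likelihood and MCMC steps can be sketched with a random-walk Metropolis sampler in plain Python. Every modelling choice here is an assumption, not the model of [25]: the parameter is θ = log(RQ), the prior is normal, and each line of evidence contributes a normal likelihood for its reported log(RQ) with a known standard error. The evidence values are hypothetical.

```python
import math
import random


def log_posterior(theta, prior_mean, prior_sd, studies):
    """Unnormalised log posterior for theta = log(RQ).

    Normal prior on theta; each study contributes a Normal likelihood for its
    reported log(RQ) estimate y with known standard error se.
    """
    lp = -0.5 * ((theta - prior_mean) / prior_sd) ** 2
    for y, se in studies:
        lp += -0.5 * ((y - theta) / se) ** 2
    return lp


def metropolis(prior_mean, prior_sd, studies, n_iter=20000, burn_in=2000, step=0.2, seed=1):
    """Random-walk Metropolis sampler; returns the post-burn-in draws of theta."""
    rng = random.Random(seed)
    theta = prior_mean
    current = log_posterior(theta, prior_mean, prior_sd, studies)
    draws = []
    for i in range(n_iter):
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, prior_mean, prior_sd, studies)
        # Accept with probability min(1, posterior ratio).
        if rng.random() < math.exp(min(0.0, candidate - current)):
            theta, current = proposal, candidate
        if i >= burn_in:
            draws.append(theta)
    return draws


def summarise(draws, loc=1.0):
    """Posterior mean and variance of RQ, and the probability that RQ exceeds the LOC."""
    rq = [math.exp(t) for t in draws]
    m = sum(rq) / len(rq)
    var = sum((r - m) ** 2 for r in rq) / (len(rq) - 1)
    return m, var, sum(r > loc for r in rq) / len(rq)


# Hypothetical evidence: (log RQ estimate, standard error) from three studies.
studies = [(math.log(0.45), 0.3), (math.log(0.40), 0.25), (math.log(0.50), 0.35)]
draws = metropolis(prior_mean=math.log(0.5), prior_sd=1.0, studies=studies)
print(summarise(draws))
```

Because this normal-normal model also has a closed-form posterior, it makes a convenient test case: the sampler's mean of θ should match the analytical value, a useful check before moving to non-conjugate models in tools such as Stan or JAGS.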

Table 1: Performance Summary of Bayesian Evidence Integration Tool [25]

| Tool Name / Approach | Primary Output | Reported Performance (Case Study) | Key Uncertainty Metric |
|---|---|---|---|
| Bayesian MCMC Integration | Probability distribution of Risk Quotient (RQ) | Mean RQ (Malathion): 0.4386 (variance 0.0163); Mean RQ (Permethrin): 0.3281 (variance 0.0083); P(RQ > 1.0) for both: < 0.0001 | Posterior variance; Probability of exceeding threshold |
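As a quick consistency check on the table, assume (this is not stated in [25]) that each RQ posterior is roughly normal with the reported mean and variance. The implied exceedance probabilities then fall well below the reported 0.0001 bound; a skewed posterior would have a different tail, so this is only an approximation.

```python
import math


def p_exceed_normal(mean_rq, var_rq, loc=1.0):
    """P(RQ > LOC) if the RQ posterior were approximately Normal(mean, variance)."""
    z = (loc - mean_rq) / math.sqrt(var_rq)
    return 0.5 * math.erfc(z / math.sqrt(2.0))  # upper-tail normal probability


for label, m, v in [("Malathion", 0.4386, 0.0163), ("Permethrin", 0.3281, 0.0083)]:
    print(label, f"{p_exceed_normal(m, v):.1e}")
```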

(Bayesian Evidence Integration Workflow)

Comparison Guide 2: Stock Status Plots (SSPs) for Fishery Trends

SSPs are a diagnostic and communication tool that classifies fishery stocks into status categories (e.g., developing, overexploited, collapsed) based on the trend of catch data relative to historical maximum catch [24].

- Performance Metrics & Data: Performance is measured by the diagnostic logic's consistency and its ability to reflect known stock histories. The tool's output is the annual percentage of stocks (or catch) in each status category, revealing trends in ecosystem exploitation [24].

- Experimental Protocol: Validating an SSP tool involves testing its classification logic against known stock histories or simulated data [24]:

- Define Stock & Criteria: Select a taxon meeting minimum data criteria (e.g., >5 consecutive years of catch, >1000 tonnes total) [24].

- Apply Classification Algorithm: For each year in the time series, apply the status criteria (e.g., if year < max_year AND catch < 0.5 × max_catch, then status = "Developing") [24].

- Generate Aggregate Plots: Tally the number of stocks in each status per year to create "stock-status" plots. Sum the catch by status for "stock-catch-status" plots [24].

- Validate with Expert Assessment: Compare the tool's classification timeline for well-documented, data-rich stocks (e.g., Atlantic herring) against independent expert assessments and management history to verify diagnostic accuracy [24].
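The eligibility, classification and aggregation steps can be sketched as follows. The thresholds follow the example criteria given for this tool (≤ 50%, 10-50% and < 10% of the peak catch), with two assumptions flagged in the code: years that match none of those rules are labelled "Exploited", and other categories used in some SSP variants (such as rebuilding stocks) are not modelled. The catch series are hypothetical.

```python
from collections import Counter, defaultdict


def eligible(series, min_run=6, min_total=1000.0):
    """Data criteria: more than 5 consecutive years with catch and more than 1000 t in total."""
    years = sorted(y for y, c in series.items() if c > 0)
    longest = run = 0
    previous = None
    for y in years:
        run = run + 1 if previous is not None and y == previous + 1 else 1
        longest = max(longest, run)
        previous = y
    return longest >= min_run and sum(series.values()) > min_total


def classify(series):
    """Assign an SSP status to every year of one stock's {year: catch} series."""
    peak_year = max(series, key=series.get)
    peak = series[peak_year]
    status = {}
    for year, catch in series.items():
        share = catch / peak
        if year < peak_year and share <= 0.5:
            status[year] = "Developing"
        elif year > peak_year and share < 0.1:
            status[year] = "Collapsed"
        elif year > peak_year and share <= 0.5:
            status[year] = "Overexploited"
        else:
            status[year] = "Exploited"  # assumption: label for all remaining years
    return status


def aggregate(stocks):
    """Per-year stock counts (stock-status plot) and catch sums (stock-catch-status plot)."""
    n_stocks = defaultdict(Counter)
    catch = defaultdict(Counter)
    for series in stocks.values():
        if not eligible(series):
            continue
        for year, label in classify(series).items():
            n_stocks[year][label] += 1
            catch[year][label] += series[year]
    return n_stocks, catch


# Hypothetical catch series in tonnes.
stocks = {
    "stock_A": {2000: 100, 2001: 400, 2002: 1000, 2003: 800, 2004: 300, 2005: 50},
    "stock_B": {2000: 500, 2001: 600},  # too short: excluded by eligible()
}
n_stocks, catch = aggregate(stocks)
print(classify(stocks["stock_A"]))
```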

Table 2: Performance Logic of Stock Status Plot (SSP) Tool [24]

| Tool Name / Approach | Primary Output | Classification Criteria (Example) | Key Diagnostic Utility |
|---|---|---|---|
| Stock Status Plots (SSP) | Percentage of stocks/catch by status category over time. | Developing: year < max-catch year AND catch ≤ 50% of max; Overexploited: year > max-catch year AND catch is 10-50% of max; Collapsed: year > max-catch year AND catch < 10% of max. | Tracks portfolio-level shifts from developing to overexploited/collapsed states, signaling biodiversity loss. |

Comparison Guide 3: Ecosystem Services-Integrated ERA (ERA-ES)

This novel method integrates Ecosystem Services (ES) as assessment endpoints, using cumulative distribution functions to quantify both risks and benefits to ES supply from human activities [21].

- Performance Metrics & Data: The tool outputs quantitative metrics: the probability of ES supply falling below a critical threshold (risk) or exceeding a beneficial threshold (benefit). A marine case study showed it can differentiate between development scenarios [21].

- Experimental Protocol: The validation of an ERA-ES tool requires scenario-based testing [21]:

- Define Scenario & ES: Select a human activity scenario (e.g., offshore wind farm) and a relevant ES (e.g., waste remediation via denitrification).

- Quantify ES Supply: Model or measure the ES supply metric (e.g., denitrification rate) under baseline and intervention conditions.

- Fit Probability Distributions: Construct cumulative distribution functions (CDFs) for the ES supply metric in both states.

- Calculate Risk & Benefit Metrics: Define critical (Rc) and beneficial (Bc) thresholds. Calculate Risk as P(ESsupply < Rc) and Benefit as P(ESsupply > Bc).

- Sensitivity Analysis: Test how the risk/benefit metrics respond to changes in threshold values and input parameter uncertainty to establish the robustness of the conclusions.
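The risk and benefit metrics can be estimated directly from samples of ES supply. Where the method fits cumulative distribution functions, this sketch substitutes the empirical CDF of the samples; the denitrification values, thresholds and function names are hypothetical.

```python
def risk_and_benefit(samples, r_c, b_c):
    """Empirical-CDF estimates of Risk = P(ES < Rc) and Benefit = P(ES > Bc)."""
    n = len(samples)
    risk = sum(s < r_c for s in samples) / n
    benefit = sum(s > b_c for s in samples) / n
    return risk, benefit


def threshold_sensitivity(samples, r_c_values, b_c):
    """How the risk metric responds as the critical threshold Rc is varied."""
    return {r_c: risk_and_benefit(samples, r_c, b_c)[0] for r_c in r_c_values}


# Hypothetical denitrification rates (arbitrary units) from model runs or measurements.
baseline = [2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5, 6.0, 6.5]
scenario = [3.5, 4.0, 4.5, 5.0, 5.5, 6.0, 6.5, 7.0, 7.5, 8.0]
for name, data in (("baseline", baseline), ("scenario", scenario)):
    print(name, risk_and_benefit(data, r_c=3.0, b_c=6.0))
print(threshold_sensitivity(baseline, (2.5, 3.0, 3.5), b_c=6.0))
```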

Table 3: Performance Summary of ERA-ES Tool for Offshore Scenarios [21]

| Tool Name / Approach | Primary Output | Reported Performance (Marine Case Study) | Key Differentiating Output |
|---|---|---|---|
| ERA-ES Method | Probabilities of ES supply risk and benefit. | Offshore Wind Farm: altered sediment, moderate change in waste remediation service; Mussel Cultivation: significant increase in service supply (benefit); Multi-Use Scenario: combined effect, net benefit calculable. | Quantifies both detrimental and beneficial outcomes, enabling trade-off analysis for sustainable design. |

(ERA-ES Method Workflow)

Comparative Synthesis of ERA Tool Performance

The choice of an ERA tool depends on the assessment's objective, data availability, and the required form of decision support.

Table 4: Comparative Overview of Quantitative ERA Tools

| Tool | Best Application Context | Key Strength | Primary Limitation | Validation Focus |
|---|---|---|---|---|
| Bayesian Integration [25] | Synthesizing disparate, uncertain evidence for chemical/health risk. | Provides a full probabilistic risk estimate with quantified uncertainty. | Requires formal statistical expertise and computational resources. | Calibration of posterior predictions against independent evidence. |
| Stock Status Plots (SSP) [24] | Communicating historical trends and portfolio status of fishery stocks. | Simple, intuitive visual communication of complex stock trends. | Retrospective; relies solely on catch data, not population dynamics. | Diagnostic accuracy against known stock assessment histories. |
| ERA-ES Method [21] | Assessing trade-offs in managed ecosystems (e.g., offshore development). | Quantifies both risks and benefits, linking ecology to human well-being. | Data-intensive; requires robust ES quantification models. | Sensitivity of risk/benefit outcomes to threshold and model choices. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Quantitative validation of ERA tools relies on specific "reagents"—standardized datasets, software, and conceptual models.

Table 5: Essential Reagents for ERA Tool Development and Validation

| Research Reagent | Function in Validation | Example Application |
|---|---|---|
| Long-Term Stock Catch Time Series Data | Serves as the ground truth for testing diagnostic tools like SSPs. | Validating SSP classification logic against well-documented fisheries (e.g., Atlantic herring) [24]. |
| Pesticide Toxicity & Exposure Databases | Provides the prior and likelihood data for Bayesian integration models. | Integrating risk assessment, biomonitoring, and epidemiology studies for insecticides [25]. |
| Ecosystem Service Indicators & Models | Enables the quantification of ES supply for risk-benefit analysis. | Modeling denitrification rates for waste remediation service in marine sediments [21]. |
| Bayesian Statistical Software (e.g., Stan, JAGS) | The computational engine for performing MCMC sampling and generating posterior distributions. | Calculating the probability distribution of a Risk Quotient [25]. |
| Conceptual Model Diagrams | Maps hypothesized relationships between stressors, ecosystems, and endpoints, framing the assessment. | Linking ecosystem drivers to stock productivity for inclusion in assessment uncertainty [22]. |

Experimental Protocols for ERA Tool Validation

Adopting rigorous, standardized experimental protocols is fundamental to establishing the validation imperative. These protocols should be tailored to the tool's function but share common principles of objectivity, reproducibility, and relevance to decision contexts [23].

1. Protocol for Validating Diagnostic Accuracy (e.g., SSPs):

- Objective: To determine the correct classification rate of a status-diagnosis tool.

- Procedure: a. Assemble a reference dataset of stock histories with expert-validated status transitions (e.g., from "fully exploited" to "overexploited" in a specific year). b. Run the tool to generate predicted status classifications for each stock-year. c. Construct a confusion matrix comparing predicted vs. reference status. d. Calculate performance metrics: Overall Accuracy, Precision, and Recall for each status category [23].

- Acceptance Criterion: Tool accuracy must exceed a pre-defined threshold (e.g., >85%) for critical management categories (e.g., "collapsed").
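A sketch of steps c and d and the acceptance check. The status labels and stock-years are hypothetical, and because the protocol does not say which per-category statistic the 85% criterion applies to, recall for the critical category is assumed here.

```python
from collections import Counter


def classification_report(reference, predicted):
    """Overall accuracy plus per-category precision and recall from a confusion matrix."""
    matrix = Counter(zip(reference, predicted))  # (reference, predicted) -> count
    labels = sorted(set(reference) | set(predicted))
    accuracy = sum(matrix[(c, c)] for c in labels) / len(reference)
    per_class = {}
    for c in labels:
        predicted_c = sum(matrix[(r, c)] for r in labels)
        reference_c = sum(matrix[(c, p)] for p in labels)
        per_class[c] = {
            "precision": matrix[(c, c)] / predicted_c if predicted_c else float("nan"),
            "recall": matrix[(c, c)] / reference_c if reference_c else float("nan"),
        }
    return accuracy, per_class


def meets_criterion(per_class, category, threshold=0.85):
    """Hypothetical acceptance rule: recall for a critical category must exceed the threshold."""
    return per_class[category]["recall"] > threshold


# Hypothetical stock-year statuses: expert reference vs. tool output.
reference = ["Collapsed"] * 4 + ["Overexploited"] * 4 + ["Developing"] * 2
predicted = ["Collapsed"] * 4 + ["Overexploited"] * 3 + ["Collapsed"] + ["Developing"] * 2
accuracy, per_class = classification_report(reference, predicted)
print(accuracy, per_class["Collapsed"], meets_criterion(per_class, "Collapsed"))
```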

2. Protocol for Validating Predictive Uncertainty (e.g., Bayesian Integration):

- Objective: To assess the calibration and sharpness of a probabilistic risk tool.

- Procedure: a. Use a sequential hold-out method. Train the tool on an initial set of evidence, predict the posterior for a subsequent, independent study. b. Compare the predicted probability distribution with the observed outcome from the hold-out study. c. Assess calibration: Do events predicted with X% probability actually occur X% of the time? (e.g., via probability integral transform plots). d. Assess sharpness: How narrow (informative) are the predictive intervals? [25]

- Acceptance Criterion: The predictive distributions are statistically calibrated (e.g., p > 0.05 in calibration tests) and sufficiently sharp for decision-making.
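Steps b to d can be sketched as follows, assuming the tool can emit posterior predictive draws for each hold-out study. PIT values should look roughly uniform for a calibrated tool, the empirical coverage of central 90% intervals should be near 0.9, and mean interval width serves as the sharpness measure. The simulation helper is hypothetical and only demonstrates that the check separates calibrated from overconfident predictions; a formal uniformity test on the PIT values would need a statistics library.

```python
import random
from statistics import mean, quantiles


def calibration_summary(cases):
    """cases: list of (posterior_predictive_draws, observed_hold_out_value).

    Returns PIT values, the empirical coverage of central 90% predictive
    intervals, and their mean width (sharpness).
    """
    pits, inside, widths = [], 0, []
    for draws, observed in cases:
        pits.append(sum(d <= observed for d in draws) / len(draws))
        cuts = quantiles(draws, n=20)   # cut points at 5%, 10%, ..., 95%
        low, high = cuts[0], cuts[-1]   # central 90% interval
        inside += low <= observed <= high
        widths.append(high - low)
    return {"pit": pits, "coverage90": inside / len(cases), "mean_width": mean(widths)}


def simulate_cases(predictive_sd, n_cases=300, n_draws=400, seed=7):
    """Hypothetical check data: truth ~ N(0, 1); predictive draws ~ N(0, predictive_sd)."""
    rng = random.Random(seed)
    return [([rng.gauss(0.0, predictive_sd) for _ in range(n_draws)], rng.gauss(0.0, 1.0))
            for _ in range(n_cases)]


calibrated = calibration_summary(simulate_cases(1.0))
overconfident = calibration_summary(simulate_cases(0.3))
print(calibrated["coverage90"], overconfident["coverage90"])
```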

(Ecosystem-Informed Stock Assessment Pathway)

Validation in Practice: A Stepwise Framework for Comparing ERA Outcomes with Stock Status Benchmarks

Ecological Risk Assessment (ERA) provides a structured framework for evaluating the likelihood and magnitude of adverse ecological effects from human activities, such as chemical exposure or fishing pressure [8]. A core challenge in the field is validating the outputs of standardized ERA tools—often risk scores or classifications—against benchmark status determinations derived from more intensive, data-rich methods. This validation is critical for determining whether these tools, frequently employed in data-poor scenarios, correctly prioritize management action and accurately reflect true ecological risk [26] [2].

This guide is framed within a broader thesis on validating ERA methodologies against established status reports. It provides a comparative analysis of two prominent ERA tools used in fisheries—Productivity and Susceptibility Analysis (PSA) and the Sustainability Assessment for Fishing Effects (SAFE)—benchmarked against official Fishery Status Reports (FSR) and quantitative stock assessments. We present experimental data, detailed protocols, and research resources to inform researchers and professionals on designing and executing robust validation studies [26].

Comparative Performance of ERA Tools Against Benchmarks

A foundational 2016 comparative study offers critical quantitative data on the performance of PSA and SAFE methods [26]. The study validated the risk classifications from these tools against two independent benchmarks: 1) Stock status classifications from official Australian Fishery Status Reports (FSR), and 2) Outcomes from data-rich quantitative stock assessments.

Table 1: Performance of PSA and SAFE Against Fishery Status Report (FSR) Classifications [26]

| ERA Tool | Overall Misclassification Rate | Nature of Misclassifications | Key Performance Insight |
|---|---|---|---|
| Productivity & Susceptibility Analysis (PSA) | 27% (26 of 96 stocks) | All cases overestimated risk (false positive). | Highly precautionary; may flag many stocks as medium/high risk that are not classified as overfished. |
| Sustainability Assessment for Fishing Effects (SAFE) | 8% (of 96 stocks) | 3% overestimated risk; 5% underestimated risk (false negative). | More balanced but not perfectly accurate; small risk of missing stocks in trouble. |

Table 2: Performance of PSA and SAFE Against Data-Rich Quantitative Stock Assessments [26]

| ERA Tool | Overall Misclassification Rate | Nature of Misclassifications | Key Performance Insight |
|---|---|---|---|
| Productivity & Susceptibility Analysis (PSA) | 50% (9 of 18 stocks) | All cases overestimated risk. | High rate of false positives against a more precise benchmark. |
| Sustainability Assessment for Fishing Effects (SAFE) | 11% (2 of 18 stocks) | Both cases overestimated risk. | Demonstrated significantly higher alignment with quantitative assessments. |

Key Comparative Takeaways:

- PSA is intentionally precautionary, erring on the side of overestimating risk. This design makes it an effective screening tool to ensure high-risk species are not missed, but at the cost of potentially diverting management resources to lower-priority stocks [26].

- SAFE, which uses continuous quantitative data in its calculations rather than PSA's ordinal scoring, showed substantially better alignment with both status report and stock assessment benchmarks. It had a much lower overall misclassification rate and far fewer overestimates than PSA, although, unlike PSA, it occasionally underestimated risk (5% of stocks against the FSR benchmark) [26].

- The study underscores that the choice of benchmark is crucial. Validation against more rigorous, quantitative stock assessments revealed larger performance gaps than validation against the broader FSR classifications [26].

Experimental Protocols for Validation Studies

The following methodology is adapted from the seminal comparative study to provide a template for designing a validation study [26].

Phase 1: Tool Comparison and Data Harmonization

- Objective: Systematically compare the foundational assumptions, input data requirements, and risk computation algorithms of the ERA tools under review.

- Procedure:

- Document each tool's conceptual model for translating species traits (e.g., productivity, susceptibility, distribution) into a risk score.

- Create a data equivalence table. Both PSA and SAFE, for instance, use similar life history and fishery interaction data, but PSA downgrades continuous data into ordinal scores (1-3), while SAFE uses continuous variables [26].

- For a given set of species or stocks, compile the identical underlying data set required to run both tools.
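The data-equivalence point, that PSA bins continuous traits into 1-3 scores while SAFE keeps them continuous, can be illustrated with a small PSA-style scoring sketch. Everything here is an assumption for illustration: the attribute bin edges are invented, susceptibility is averaged rather than combined as a given framework might, and the Low/Medium/High cutoffs are placeholders to be replaced with those of the framework in use.

```python
import math


def ordinal_score(value, low_edge, high_edge, higher_is_riskier=True):
    """Downgrade a continuous attribute to a 1-3 score (1 = low risk, 3 = high risk)."""
    if value < low_edge:
        score = 1
    elif value <= high_edge:
        score = 2
    else:
        score = 3
    return score if higher_is_riskier else 4 - score


def psa_vulnerability(productivity_scores, susceptibility_scores):
    """Euclidean distance of the (mean P, mean S) point from the origin.

    Simplification: susceptibility attributes are averaged here; some frameworks
    combine them multiplicatively instead.
    """
    p = sum(productivity_scores) / len(productivity_scores)
    s = sum(susceptibility_scores) / len(susceptibility_scores)
    return math.hypot(p, s)


def psa_category(score, cutoffs=(2.64, 3.18)):
    """Map a score to Low/Medium/High; the cutoffs are placeholder assumptions."""
    low, high = cutoffs
    return "Low" if score < low else "Medium" if score <= high else "High"


# Hypothetical species: age at maturity 20 yr and max length 150 cm (productivity),
# 60% range overlap with the fishery and a catchability index of 0.2 (susceptibility).
P = [ordinal_score(20, 5, 15), ordinal_score(150, 100, 300)]
S = [ordinal_score(0.6, 0.1, 0.3), ordinal_score(0.2, 0.1, 0.3)]
score = psa_vulnerability(P, S)
print(P, S, round(score, 2), psa_category(score))
# Information loss: maturity at 6 and at 14 years receives the same ordinal score.
print(ordinal_score(6, 5, 15) == ordinal_score(14, 5, 15))
```

The last line shows the information loss that the harmonization step should document: two species maturing at 6 and 14 years get identical productivity scores, whereas a method working with continuous inputs would treat them differently.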

Phase 2: Alignment with Status Report Classifications

- Objective: Quantify the agreement between ERA risk categories (e.g., low, medium, high) and official stock status classifications (e.g., not overfished, overfished).

- Procedure:

- Apply ERA Tools: Run the harmonized data set through each ERA tool (e.g., PSA, SAFE) to generate independent risk scores/classifications for each stock.

- Compile Benchmark Data: Obtain the official status classification for the same stocks from the benchmark source (e.g., Fishery Status Reports). These reports typically use a weight-of-evidence approach, combining catch data, surveys, and formal assessments [26].

- Cross-Tabulation and Analysis:

- Create a confusion matrix comparing ERA classification vs. benchmark status.

- Calculate the misclassification rate (total incorrect / total stocks).

- Differentiate between overestimation (ERA risk higher than benchmark) and underestimation (ERA risk lower than benchmark) errors, as their management implications are profoundly different [26].
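The error analysis can be sketched as below, assuming a binary benchmark (overfished or not) and treating Medium and High ERA categories as flagging risk; that mapping is an assumption and should follow the study's own decision rule. The example reuses the PSA figure reported above (26 of 96 stocks overestimated against the FSR), but how the remaining 70 correct classifications split between categories is invented.

```python
AT_RISK = {"Medium", "High"}  # assumption: these ERA categories count as flagging risk


def misclassification(records):
    """records: (era_category, benchmark_overfished) per stock; returns error rates."""
    n = len(records)
    over = sum(cat in AT_RISK and not overfished for cat, overfished in records)
    under = sum(cat not in AT_RISK and overfished for cat, overfished in records)
    return {
        "misclassification": (over + under) / n,
        "overestimate": over / n,    # ERA flags risk, benchmark says not overfished
        "underestimate": under / n,  # ERA says low risk, benchmark says overfished
    }


# 26 overestimates as reported for PSA; the split of the other 70 stocks is hypothetical.
records = [("Medium", False)] * 26 + [("High", True)] * 20 + [("Low", False)] * 50
rates = misclassification(records)
print({k: round(v, 3) for k, v in rates.items()})
```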

Phase 3: Validation Against Quantitative Stock Assessments

- Objective: Validate ERA outputs against the most data-intensive and statistically rigorous benchmarks available.

- Procedure:

- Identify Overlap Stocks: Select a subset of stocks that have been assessed by both the ERA tools and formal quantitative stock assessments (e.g., statistical catch-at-age models) [26].

- Define Assessment Benchmark: Use the output from the quantitative assessment (e.g., stock biomass relative to sustainable targets) as the "true" status benchmark.

- Statistical Comparison: Repeat the cross-tabulation and error analysis from Phase 2 on the overlap stocks. Because quantitative assessments avoid much of the subjectivity inherent in some status-report determinations, this comparison typically yields the highest-fidelity performance metrics for the ERA tools [26].

Validation Study Workflow for ERA Tool Performance

Conducting a robust validation study requires specific conceptual and data resources. The following toolkit outlines key components.

Table 3: Research Reagent Solutions for ERA Validation Studies

| Item / Concept | Function in Validation Study | Notes & Examples |
|---|---|---|
| ERA Toolbox Frameworks | Provides the structured, hierarchical methodology for applying different risk tools. | The Ecological Risk Assessment for the Effects of Fishing (ERAEF) framework employs tools like SICA, PSA, and SAFE [11]. |
| Benchmark Status Classifications | Serves as the "ground truth" against which ERA outputs are validated. | Fishery Status Reports (FSR), national agency stock assessments, or IUCN Red List categories. |
| Quantitative Stock Assessment Models | Provides high-confidence, data-rich benchmarks for a subset of stocks. | Models like Stock Synthesis or age-structured production models [26]. |
| Harmonized Biological & Fishery Datasets | Ensures consistent inputs for comparing different ERA tools. | Datasets containing life-history traits (growth, reproduction), spatial distribution, and fishery susceptibility parameters [26]. |
| Measurement vs. Assessment Endpoint Clarification | Critical for framing the study's objective and interpreting mismatch. | A measurement endpoint is the quantified output of the ERA tool (e.g., a PSA score). The assessment endpoint is the real-world value being protected (e.g., a sustainable population) [8]. Validation studies test the link between these. |
| Uncertainty/Safety Factor Protocols | Provides context for interpreting conservative biases in ERA tools. | Precautionary defaults embedded in screening tools such as PSA (conservative default scoring choices, analogous to the 10x uncertainty factors common in chemical risk assessment) help explain observed overestimation of risk [27]. |

Logical Framework for ERA Tool Validation

Validation studies are essential for calibrating trust in ERA tools and guiding their evolution. Based on the comparative data and protocols presented, practitioners should:

- Acknowledge Inherent Tool Bias: Recognize that different tools have different philosophical bases. PSA is a precautionary screening tool, whereas SAFE is designed for more quantitative risk estimation [26].

- Select Benchmarks Appropriately: Validate against the most rigorous benchmark available, preferring quantitative stock assessments over status reports where both exist. Transparently report which benchmark is used and its potential limitations [26] [2].

- Report Full Error Profiles: Move beyond simple accuracy rates. Distinguishing between overestimation and underestimation errors is critical for risk managers, as the consequences of missing an at-risk species (false negative) are typically more severe than unnecessary precaution (false positive) [26].

- Drive Iterative Improvement: Use validation results to refine ERA tools. For instance, comparative validation findings have informed subsequent modifications to the PSA and SAFE methodologies intended to improve their accuracy and reduce bias [26] [11].

The ongoing development of ERA frameworks, including their application in new ecosystems like the Amazon Continental Shelf, continues to underscore the need for rigorous, standardized validation against agreed-upon benchmarks to ensure ecological management is both effective and efficient [11].

In the domain of ecological risk assessment for fisheries, the need to evaluate the sustainability of numerous data-limited species has spurred the development of rapid assessment tools. Frameworks like the Productivity Susceptibility Analysis (PSA) represent a class of qualitative risk assessments designed to prioritize management and research efforts for target and non-target species [28]. Positioned as a secondary tier in hierarchical ecological risk assessment frameworks, PSA aims to identify species at medium or high risk, which then become candidates for more rigorous, data-intensive quantitative stock assessments [28].

However, the widespread application of such tools (PSA has been applied to over 1,000 fish populations) has preceded a robust, quantitative evaluation of their foundational assumptions and predictive performance [28]. This gap matters for the broader goal of validating ecological risk assessments against established stock status reports: if the screening tools used to prioritize resources are fundamentally flawed, the entire management edifice is compromised. This article performs a direct tool-to-tool analysis focused on the assumptions and data processing of the PSA framework. It examines a key quantitative evaluation that clarifies PSA's operational logic and tests its performance against simulated population dynamics, and it discusses PSA's position relative to more quantitative alternatives such as the Sustainability Assessment for Fishing Effects (SAFE) approach.

Foundational Assumptions and Methodological Frameworks

Deconstructing the PSA Framework

The PSA methodology is predicated on the assumption that a species' vulnerability to overfishing is a function of two composite properties: Productivity (P) and Susceptibility (S) [28].

- Productivity encompasses life-history characteristics that determine the intrinsic rate of population increase. The standard PSA evaluates seven attributes: mean age at maturity, fecundity, mean maximum age, maximum size, von Bertalanffy growth parameter K, natural mortality, and trophic level [28].

- Susceptibility reflects the interaction between the population and the fishery that influences mortality. It is assessed through four attributes: availability, encounterability, selectivity, and post-capture mortality [28].

For each attribute, a species is assigned a categorical risk score of 1 (low risk), 2 (medium risk), or 3 (high risk) based on predefined threshold values. The overall Productivity score (P) is the arithmetic mean of the seven attribute scores. The overall Susceptibility score (S) is the geometric mean of its four attributes, reflecting an assumption of multiplicative interaction [28]. The final vulnerability score (V) is the Euclidean distance from the origin: V = √(P² + S²). Because P and S each range from 1 to 3, V ranges from √2 ≈ 1.41 to √18 ≈ 4.24; this score is then categorized as Low, Medium, or High risk [28].
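The aggregation rules above translate directly into code. The sketch below computes P, S, and V from attribute scores. The Low/Medium/High cut-offs are not given in the text, so `psa_category` defaults to splitting the 1.41 to 4.24 range into equal thirds purely as a placeholder assumption; published PSA implementations define their own cut-offs. Function names are illustrative.

```python
import math


def psa_vulnerability(productivity_scores, susceptibility_scores):
    """Combine PSA attribute scores (each 1, 2 or 3) into P, S and V."""
    for score in [*productivity_scores, *susceptibility_scores]:
        if score not in (1, 2, 3):
            raise ValueError(f"attribute scores must be 1, 2 or 3, got {score}")
    # P: arithmetic mean of the productivity attribute scores.
    p = sum(productivity_scores) / len(productivity_scores)
    # S: geometric mean of the susceptibility attribute scores.
    s = math.prod(susceptibility_scores) ** (1.0 / len(susceptibility_scores))
    # V: Euclidean distance of (P, S) from the origin.
    v = math.hypot(p, s)
    return p, s, v


def psa_category(v, cuts=None):
    """Map V to Low/Medium/High.

    Default cuts split [sqrt(2), sqrt(18)] into equal thirds: a placeholder
    assumption, not the cut-offs used by any particular PSA implementation.
    """
    if cuts is None:
        v_min, v_max = math.sqrt(2), math.sqrt(18)
        step = (v_max - v_min) / 3
        cuts = (v_min + step, v_min + 2 * step)
    low_cut, high_cut = cuts
    if v < low_cut:
        return "Low"
    if v < high_cut:
        return "Medium"
    return "High"


# Hypothetical species: seven productivity scores, four susceptibility scores.
p, s, v = psa_vulnerability([2, 3, 3, 2, 2, 3, 1], [3, 2, 2, 3])
print(round(p, 2), round(s, 2), round(v, 2), psa_category(v))
```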

Table 1: PSA Productivity Attributes and Scoring Criteria

| Productivity Attribute | Low Risk (Score = 1) | Medium Risk (Score = 2) | High Risk (Score = 3) |
|---|---|---|---|
| Mean Age at Maturity (years) | < 5 | 5 – 15 | > 15 |
| Fecundity (eggs/year) | > 20,000 | 100 – 20,000 | < 100 |
| Maximum Age (years) | < 10 | 10 – 30 | > 30 |
| Maximum Size (cm) | < 50 | 50 – 200 | > 200 |
| Growth Parameter K (year⁻¹) | > 0.2 | 0.1 – 0.2 | < 0.1 |
| Natural Mortality M (year⁻¹) | > 0.2 | 0.1 – 0.2 | < 0.1 |
| Trophic Level | < 3.0 | 3.0 – 3.5 | > 3.5 |
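Scoring a single attribute against Table 1 amounts to checking which side of the medium band a value falls on, and in which direction lower productivity lies. The following is a minimal sketch, assuming the medium band is inclusive at both ends (e.g., exactly 5 years at maturity scores 2). The attribute keys and helper names are hypothetical, and trophic level is left out to keep the example short.

```python
# Medium-risk band (inclusive) and direction for each attribute, transcribed
# from Table 1. higher_is_riskier=True means values above the band score 3.
# Trophic level is left out of this sketch.
THRESHOLDS = {
    "age_at_maturity_yr": (5, 15, True),
    "fecundity_eggs_per_yr": (100, 20_000, False),
    "max_age_yr": (10, 30, True),
    "max_size_cm": (50, 200, True),
    "growth_k_per_yr": (0.1, 0.2, False),
    "natural_mortality_per_yr": (0.1, 0.2, False),
}


def score_attribute(name, value):
    """Return the PSA risk score (1, 2 or 3) for one productivity attribute."""
    band_low, band_high, higher_is_riskier = THRESHOLDS[name]
    if band_low <= value <= band_high:
        return 2
    above_band = value > band_high
    # High risk when the value lies on the "less productive" side of the band.
    return 3 if above_band == higher_is_riskier else 1


def score_productivity(traits):
    """Score every attribute in a {name: value} mapping."""
    return {name: score_attribute(name, value) for name, value in traits.items()}


# Hypothetical long-lived, late-maturing species.
example = {
    "age_at_maturity_yr": 18,
    "fecundity_eggs_per_yr": 50,
    "max_age_yr": 60,
    "max_size_cm": 120,
    "growth_k_per_yr": 0.08,
    "natural_mortality_per_yr": 0.07,
}
print(score_productivity(example))
```

Encoding the direction explicitly keeps the "more is riskier" and "less is riskier" attributes in one lookup table rather than in separate branches.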

The SAFE Methodology: A Quantitative Counterpart

The Sustainability Assessment for Fishing Effects (SAFE) framework represents a more quantitative risk assessment pathway. SAFE is recognized in the literature as a quantitative method that typically estimates the potential depletion of a stock under a given fishing pressure by comparing the fishing mortality rate with biological reference points [28]. It moves beyond categorical scoring toward population dynamics modeling, even if simplified. This fundamental difference in approach, qualitative categorical aggregation versus quantitative modeling, defines the core of the comparison.
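The text does not give SAFE's equations, so the following is only a generic sketch of the comparison it describes: an estimated fishing mortality rate F is expressed relative to natural mortality M and checked against reference points defined as multiples of M. The function name and the default multipliers are hypothetical placeholders, not SAFE's published reference points, and F is taken as an input rather than estimated.

```python
def f_relative_to_m(f_estimate, m, reference_multiples=None):
    """Return F/M and the label of the highest reference point it exceeds."""
    if m <= 0:
        raise ValueError("natural mortality M must be positive")
    if reference_multiples is None:
        # Hypothetical reference points, each expressed as a multiple of M.
        reference_multiples = {"medium": 0.5, "high": 1.0, "extreme": 1.5}
    ratio = f_estimate / m
    label = "low"
    # Walk reference points from lowest to highest; keep the last one exceeded.
    for name, multiple in sorted(reference_multiples.items(), key=lambda kv: kv[1]):
        if ratio > multiple:
            label = name
    return ratio, label


print(f_relative_to_m(0.15, 0.2))
```

Unlike the PSA sketch, the output is a continuous ratio first and a label second, so the same estimate can be re-read against different reference points or risk tolerances.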

Comparative Analysis: Assumptions and Data Processing

A critical examination reveals profound differences in the logical structure and informational requirements of the two frameworks.

- Nature of Assumptions: PSA relies on fixed categorical thresholds (e.g., maturity at >15 years is always high risk) that assume a universal, context-independent relationship between a single life-history trait and population resilience. It also assumes that averaging scores across disparate attributes (from fecundity to trophic level) yields a meaningful composite metric. In contrast, SAFE is built on dynamic, mathematical relationships from fisheries science, such as life-history invariants (relatively stable ratios among parameters, e.g., M/K), which explicitly model how biological parameters interact to determine population growth and response to fishing.

- Data Processing Logic: PSA’s data processing is a linear, deterministic pathway from trait measurement to risk category. The use of an arithmetic mean for P and a geometric mean for S are normative choices not derived from population theory. The final Euclidean distance calculation implies an equal weighting of productivity and susceptibility. SAFE’s processing is inherently model-based, integrating parameters to simulate population trajectories or estimate key metrics like the ratio of fishing mortality to natural mortality (F/M), with uncertainty explicitly acknowledged.

- Output and Interpretation: PSA outputs a static, ordinal risk ranking (Low, Medium, High). Its connection to actual management benchmarks (e.g., biomass limits) is ambiguous. SAFE aims to produce a probabilistic statement about risk, such as the probability of depletion below a defined limit reference point over a specified time horizon, which can directly inform precautionary catch limits.
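The averaging and equal-weighting points can be made concrete with a few made-up scores. The sketch below shows two hypothetical productivity profiles with very different life histories that produce the same P, and two hypothetical stocks with swapped P and S values that receive the same V. The numbers are illustrative only.

```python
import math

# Hypothetical seven-attribute productivity profiles (scores 1-3, Table 1 order).
uniform = [2, 2, 2, 2, 2, 2, 2]
mixed = [3, 1, 3, 1, 3, 1, 2]  # extreme traits that cancel out in the average

p_uniform = sum(uniform) / len(uniform)
p_mixed = sum(mixed) / len(mixed)
print(p_uniform, p_mixed)  # identical P despite very different life histories

# Equal weighting in V = sqrt(P^2 + S^2): swapping P and S leaves V unchanged,
# so a low-productivity/low-susceptibility stock (P=2.5, S=1.5) and a
# high-productivity/high-susceptibility stock (P=1.5, S=2.5) share a score.
v_low_productivity = math.hypot(2.5, 1.5)
v_high_susceptibility = math.hypot(1.5, 2.5)
print(v_low_productivity, v_high_susceptibility)
```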

Table 2: Core Comparison of PSA and SAFE Methodological Paradigms

| Aspect | Productivity Susceptibility Analysis (PSA) | Sustainability Assessment for Fishing Effects (SAFE) |
|---|---|---|
| Primary Classification | Qualitative, categorical risk assessment. | Quantitative, model-based risk assessment. |
| Core Assumption | Vulnerability can be decomposed into independent, scorable attributes whose averaged scores reflect population risk. | Population dynamics can be simulated or approximated using established theoretical relationships to estimate sustainability metrics. |
| Data Processing | Deterministic attribute scoring, then averaging (arithmetic mean for P, geometric mean for S). Final score calculated via Euclidean distance. | Application of population models (e.g., surplus production, age-structured) or estimation of reference points (e.g., FMSY, Fcrash). |
| Key Output | Static vulnerability score (1.41–4.24) and ordinal risk category (Low, Medium, High). | Estimates of sustainability indicators (e.g., F/M, depletion level), often with associated uncertainty. |
| Management Link | Indirect; used for prioritization. Does not directly advise on acceptable catch levels. | More direct; can be used to set provisional catch limits or fishing mortality targets based on risk tolerance. |

Experimental Validation and Performance

A pivotal quantitative evaluation by Hordyk and Carruthers (2018) tested the PSA framework by mapping its logic onto a conventional age-structured population dynamics model [28]. This experiment serves as a critical validation protocol.

Experimental Protocol: Simulation-Based Validation

- Model Construction: A deterministic age-structured model was developed, parameterized by the same life-history traits used in PSA (e.g., maturity, growth, natural mortality).

- PSA Scoring Simulation: Virtual populations with a wide range of life-history combinations were generated. Each was scored using the standard PSA algorithm to obtain a vulnerability score and risk category.

- Dynamic Performance Benchmark: The same virtual populations were subjected to simulated fishing pressure within the dynamics model. Their performance was measured using a quantitative benchmark: the ratio of the fishing mortality rate at equilibrium that drives the population to 20% of its unfished biomass (F20%) to the natural mortality rate (M). This F20%/M ratio is a robust, theory-based measure of resilience.

- Correlation Analysis: The experimentally derived F20%/M values were compared against the PSA-predicted vulnerability scores to test the predictive capacity of the PSA framework [28].
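The F20%/M benchmark in the protocol can be sketched with a simple per-recruit calculation. This is a minimal illustration under stated assumptions, not the model used in [28]: recruitment is held constant (so equilibrium spawning biomass relative to unfished equals the spawning potential ratio, SPR), growth is von Bertalanffy, weight is a·Lᵇ, and maturity and selectivity are knife-edge. All parameter names and values are hypothetical. F20% is found by bisection because SPR decreases monotonically with F.

```python
import math


def spawning_biomass_per_recruit(f, m, linf, k, age_mat, max_age,
                                 age_sel=1, t0=0.0, a=1e-5, b=3.0):
    """Equilibrium spawning biomass per recruit under constant F and M.

    Simplifying assumptions (not taken from the cited study): von Bertalanffy
    growth, weight = a * length**b, knife-edge maturity at age_mat, knife-edge
    selectivity from age_sel, spawning at the start of each year, no plus group.
    """
    survivors = 1.0
    sbpr = 0.0
    for age in range(max_age + 1):
        length = linf * (1.0 - math.exp(-k * (age - t0)))
        if age >= age_mat:
            sbpr += survivors * a * length ** b
        z = m + (f if age >= age_sel else 0.0)
        survivors *= math.exp(-z)
    return sbpr


def f_at_spr(target, m, f_max=10.0, **life_history):
    """Bisection for the F whose equilibrium SPR equals `target` (0.2 for F20%)."""
    sb0 = spawning_biomass_per_recruit(0.0, m, **life_history)
    if spawning_biomass_per_recruit(f_max, m, **life_history) / sb0 > target:
        return math.inf  # target SPR unreachable with this selectivity pattern
    lo, hi = 0.0, f_max
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if spawning_biomass_per_recruit(mid, m, **life_history) / sb0 > target:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical virtual population.
traits = dict(linf=100.0, k=0.2, age_mat=4, max_age=30, age_sel=2)
m = 0.2
f20 = f_at_spr(0.2, m, **traits)
print(f"F20% = {f20:.3f}, F20%/M = {f20 / m:.2f}")
```

Repeating this over many virtual life-history combinations, and scoring each one with PSA, yields the paired values that the correlation step compares. If fishing only starts after most spawning has occurred, 20% SPR may be unreachable; the sketch returns `inf` in that case rather than a misleading number.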

Key Findings and Limitations of PSA

The simulation experiment revealed significant shortcomings:

- Poor Predictive Performance: The correlation between the PSA vulnerability score and the quantitative F20%/M benchmark was weak. Populations with identical PSA scores exhibited a very wide range of actual biological resilience. Conversely, populations with similar resilience could receive very different PSA scores [28].

- Inappropriate Averaging Assumptions: The study found that the PSA's method of averaging attribute scores was not aligned with population dynamics theory. The impact of life-history traits on resilience is not additive or multiplicative in the way PSA assumes [28].

- Misleading Risk Categorization: As a consequence, the PSA risk categories (Low, Medium, High) did not reliably correspond to actual risk levels as determined by the population model. This raises the possibility of both false positives (prioritizing robust species) and false negatives (failing to identify at-risk species) [28].
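One way to quantify the weak correspondence described above is a rank correlation between PSA vulnerability scores and F20%/M. Because a higher V should mean lower resilience, a well-performing PSA would show a strongly negative correlation; ties matter because many stocks share identical PSA scores. The sketch below computes Spearman's rho with average ranks using only the standard library (`scipy.stats.spearmanr` computes the same statistic). The input values are hypothetical, not results from [28].

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = shared_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation computed on average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    ss_x = sum((a - mean_x) ** 2 for a in rx)
    ss_y = sum((b - mean_y) ** 2 for b in ry)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation undefined when all values are tied")
    return cov / math.sqrt(ss_x * ss_y)


# Hypothetical inputs: PSA vulnerability scores and simulated F20%/M ratios.
psa_v = [2.1, 2.1, 2.8, 3.3, 3.3]
f20_over_m = [1.9, 0.7, 1.2, 0.9, 0.4]
print(round(spearman_rho(psa_v, f20_over_m), 3))
```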

The conclusion was stark: the information required to score a fishery using PSA (detailed life-history parameters) is largely sufficient to populate a simple but dynamic operating model. That model-based approach, while requiring similar data, provides a more credible, transparent, and reproducible characterization of risk [28].

PSA Framework Workflow and Data Processing

Table 3: Key Research Reagent Solutions for Ecological Risk Assessment

| Tool/Resource | Primary Function | Relevance to PSA/SAFE Comparison |
|---|---|---|