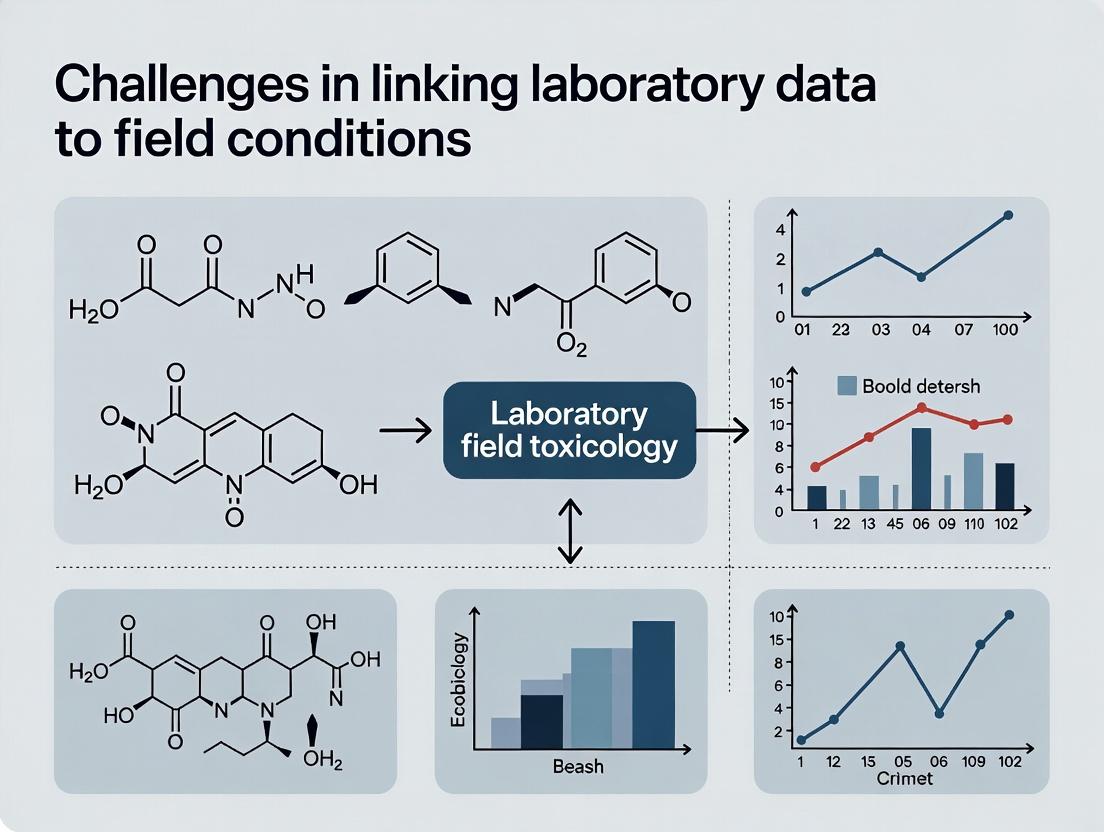

Bridging the Gap: Key Challenges and Solutions in Linking Laboratory Data to Field Conditions

Abstract

This article provides a comprehensive analysis of the challenges in linking controlled laboratory data to complex real-world field conditions, tailored for researchers, scientists, and drug development professionals. It begins by exploring the foundational obstacles of data heterogeneity, interoperability, and privacy. It then examines methodological advancements in data linkage, AI integration, and standardization. The discussion extends to practical troubleshooting strategies for data quality and optimization, followed by frameworks for rigorous validation and comparative analysis of linked data models. The full scope synthesizes technical, clinical, and regulatory perspectives to guide robust data-driven research and translational science.

Foundational Insights: Exploring the Core Obstacles in Laboratory-Field Data Linkage

Integrating Medical Laboratory Data (MLD) with field-based or real-world research data presents a critical challenge in translational science. While MLD—encompassing clinical tests, biomolecular omics, and physiological monitoring—offers deep, multidimensional insights into patient biology, its effective linkage to broader field conditions (such as environmental exposures, lifestyle factors, and long-term health outcomes) is often hampered by systemic and technical barriers [1]. This technical support center is designed to assist researchers, scientists, and drug development professionals in diagnosing, troubleshooting, and overcoming these integration challenges. The guidance herein is framed within the essential thesis that bridging the gap between controlled laboratory measurements and complex, dynamic field conditions is paramount for advancing predictive medicine, robust clinical trials, and effective public health interventions.

A foundational understanding of MLD's composition is the first step in troubleshooting integration issues. MLD is not a monolithic data type but a complex ecosystem derived from diverse sources, each with distinct characteristics that influence its integration potential [1].

Core Dimensions and Sources of Medical Laboratory Data (MLD): The following table categorizes the primary sources of MLD, their typical data formats, and key integration challenges when linking to field research data.

| MLD Category | Description & Examples | Common Data Formats | Primary Integration Challenges with Field Data |

|---|---|---|---|

| Clinical Laboratory Tests | High-volume, routine testing of bodily fluids (blood, urine). Examples: Complete Blood Count (CBC), metabolic panels, microbiology cultures [1]. | Quantitative values (numeric), categorical results (positive/negative), text-based interpretations [1]. | Lack of standardized coding (e.g., LOINC) across sites; temporal misalignment between lab draw time and field event recording [2]. |

| Biomolecular Omics Data | High-dimensional data from genomics, proteomics, metabolomics assays. Provides insights into molecular mechanisms [1]. | FASTQ, VCF (genomics); mass spectrometry peak lists (proteomics/metabolomics); complex image data [1]. | Immense data volume and complexity; requires specialized bioinformatics pipelines; difficult to correlate with less granular field observations [1]. |

| Physiological Monitoring Data | Continuous or frequent sampling from wearables and medical devices. Examples: ECG, continuous glucose monitoring, inpatient telemetry [1]. | Time-series waveforms, structured numeric streams (e.g., heart rate per minute) [1]. | High-frequency data streams require different handling than episodic field data; device-specific calibration and validation issues [3]. |

| Pathology & Imaging Data | Digital slides (histopathology) and medical imaging (MRI, CT) often analyzed for quantitative features. | DICOM (imaging), whole-slide image files (e.g., .svs); derived feature tables [1]. | File sizes are extremely large; linking image-derived phenotypes to field covariates requires robust, version-controlled metadata [4]. |

The multidimensional nature of MLD is defined by several key characteristics that directly impact integration efforts [1]:

- Heterogeneity: MLD exists in structured numeric, categorical, text, image, and waveform formats, necessitating multimodal analysis approaches [1].

- Temporal Dynamics: Each lab result is a snapshot taken at a single point in time. Its value is amplified when precisely aligned with field-collected data on medication use, symptom onset, or environmental changes [1].

- High-Dimensionality: Especially true for omics data, where the number of features (genes, proteins) can vastly exceed the number of patient samples, creating statistical challenges for correlation with field variables [1].

- Context Dependency: A lab value is meaningless without metadata: the assay method, instrument, unit of measurement, and reference range. This context is often lost during data extraction [2].

Technical Support: Troubleshooting Common MLD Integration Problems

This section addresses frequent, specific issues encountered when working with MLD in integrated research.

FAQ: Data Acquisition & Harmonization

Q1: Our multi-site study has inconsistent lab test codes and units. How can we harmonize this data for analysis? A: This is a prevalent issue stemming from the use of local laboratory information systems (LIS). The solution involves a multi-step harmonization protocol [2]:

- Audit and Map: Create a master list of all analytes across sites. Map each local test code and name to a standard terminology, primarily Logical Observation Identifiers Names and Codes (LOINC). For units, establish a target unit system (e.g., SI units) for each analyte.

- Transform Values: Apply validated conversion formulas to transform all values to the target units. Crucially, document all mappings and transformations in a reusable, version-controlled code script (e.g., in Python or R) to ensure reproducibility [5].

- Implement Checks: Post-harmonization, run statistical summaries (range, mean) by analyte and former source site to flag potential transformation errors or persistent site-specific biases.
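The three harmonization steps above can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the site names, local codes, and the `LOINC_PLACEHOLDER_GLUCOSE` identifier are hypothetical stand-ins (real LOINC codes must be looked up and reviewed), and only the standard library is used so the sketch runs anywhere.

```python
from statistics import mean

# Step 1 (Audit and Map): hypothetical local-code map.
# The "LOINC" value is a placeholder, NOT a real LOINC code.
CODE_MAP = {
    ("site_a", "GLU"): "LOINC_PLACEHOLDER_GLUCOSE",
    ("site_b", "Gluc-S"): "LOINC_PLACEHOLDER_GLUCOSE",
}
TARGET_UNIT = {"LOINC_PLACEHOLDER_GLUCOSE": "mmol/L"}

# Step 2 (Transform Values): factors into the target unit.
# 1 mmol/L of glucose is about 18.016 mg/dL (molar mass 180.16 g/mol).
TO_TARGET = {
    ("LOINC_PLACEHOLDER_GLUCOSE", "mmol/L"): 1.0,
    ("LOINC_PLACEHOLDER_GLUCOSE", "mg/dL"): 1.0 / 18.016,
}


def harmonize(record):
    """Return a copy of one lab record with a standard code and target-unit value."""
    code = CODE_MAP[(record["site"], record["local_code"])]
    factor = TO_TARGET[(code, record["unit"])]
    return {**record, "code": code,
            "value": record["value"] * factor, "unit": TARGET_UNIT[code]}


def summarize_by_site(records):
    """Step 3 (Implement Checks): min, max, and mean per (code, site)."""
    groups = {}
    for r in records:
        groups.setdefault((r["code"], r["site"]), []).append(r["value"])
    return {key: (min(v), max(v), mean(v)) for key, v in groups.items()}


raw = [
    {"site": "site_a", "local_code": "GLU", "value": 90.0, "unit": "mg/dL"},
    {"site": "site_a", "local_code": "GLU", "value": 108.0, "unit": "mg/dL"},
    {"site": "site_b", "local_code": "Gluc-S", "value": 5.2, "unit": "mmol/L"},
]
harmonized = [harmonize(r) for r in raw]
summary = summarize_by_site(harmonized)
```

An unmapped code or unit raises a `KeyError` here by design, so gaps in the mapping tables surface immediately instead of silently passing through.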

Q2: We are integrating high-frequency wearable data with episodic lab results. How do we temporally align these datasets? A: The misalignment of temporal scales requires a strategic "resampling" or "feature extraction" approach.

- For a Direct Temporal Match: If the research question requires a lab value and wearable state at the same moment, define a precise time window (e.g., ±1 hour around the lab draw). Extract the wearable metrics from that window, using summary statistics like the median heart rate during that period.

- For Trend Analysis: If the question relates to how wearable trends predict lab changes, segment the continuous wearable data into epochs (e.g., 24-hour periods before each lab draw). From each epoch, engineer relevant features such as circadian rhythm amplitude, sleep duration, or activity variance, and use these features as covariates in your model alongside the lab result [1].
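As a rough sketch of the direct temporal match described above, the function below takes the median of wearable readings inside a window around the lab draw. The function name, the heart-rate values, and the timestamps are invented for illustration; the ±1 hour default mirrors the example window in the text.

```python
from datetime import datetime, timedelta
from statistics import median


def window_median(samples, draw_time, half_width=timedelta(hours=1)):
    """Median of sensor values within +/- half_width of draw_time, or None if empty."""
    values = [v for t, v in samples if abs(t - draw_time) <= half_width]
    return median(values) if values else None


# Hypothetical lab draw time and heart-rate stream (timestamp, beats per minute)
draw = datetime(2024, 3, 1, 8, 0)
heart_rate = [
    (datetime(2024, 3, 1, 6, 30), 58),   # outside the window
    (datetime(2024, 3, 1, 7, 15), 62),
    (datetime(2024, 3, 1, 8, 0), 70),
    (datetime(2024, 3, 1, 8, 45), 66),
    (datetime(2024, 3, 1, 9, 30), 90),   # outside the window
]
hr_at_draw = window_median(heart_rate, draw)
```

Returning `None` for an empty window keeps missing wearable coverage explicit, so it can be counted in quality control instead of being imputed by accident. The same pattern extends to the trend-analysis case by widening the window to an epoch before the draw and replacing the median with the engineered features of interest.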

FAQ: Analysis & Modeling

Q3: My model linking omics data to field questionnaires is overfitting. What are my options? A: Overfitting is common when the number of omics features (p) far exceeds the number of samples (n). Mitigation strategies include [1]:

- Dimensionality Reduction First: Apply unsupervised methods like Principal Component Analysis (PCA) on the omics data and use the top principal components as model inputs instead of raw features.

- Employ Regularized Models: Use algorithms designed for high-dimensional data, such as LASSO (L1 regularization) or Elastic Net regression, which perform feature selection by shrinking irrelevant coefficients to zero.

- Prioritize External Validation: Never rely solely on internal cross-validation. Hold out an entire site or cohort from the start as a strict external validation set to test the generalizability of your discovered associations [1].

Q4: How can we handle the "batch effect" from samples processed in different lab runs or at different centers? A: Batch effects are technical confounders that can be stronger than biological signals. A standard experimental and analytical protocol is essential:

- Experimental Design: If possible, randomly allocate samples from different field study groups across processing batches.

- Statistical Correction: Post-hoc, use methods like ComBat (empirical Bayes) or limma's removeBatchEffect function to adjust the data. Always visualize data with PCA or similar before and after correction to assess efficacy. Note: Correction is safest when applied to technical replicates; over-correction can remove real biological signal.
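To make the idea of a batch adjustment concrete, here is a deliberately simplified sketch that only removes per-batch mean shifts for a single analyte. It is not ComBat and not limma's implementation (both model more than a location shift); the function name and the numbers are illustrative assumptions.

```python
from statistics import mean


def center_batches(values, batches):
    """Subtract each batch's mean and add back the grand mean (location-only adjustment)."""
    grand = mean(values)
    batch_means = {
        b: mean(v for v, bb in zip(values, batches) if bb == b)
        for b in set(batches)
    }
    return [v - batch_means[b] + grand for v, b in zip(values, batches)]


# Hypothetical analyte measured in two runs; run2 reads about 10 units higher
values = [10.0, 11.0, 12.0, 20.0, 21.0, 22.0]
batches = ["run1", "run1", "run1", "run2", "run2", "run2"]
adjusted = center_batches(values, batches)
```

The sketch also shows why randomized allocation matters: if "run2" contained only one study group, this centering would erase the group difference along with the technical shift.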

Troubleshooting Guide: Protocol for Resolving Failed Data Linkage

Problem: After merging MLD and field datasets using a patient ID, the final sample size is much smaller than expected due to many "unmatched" records.

| Step | Action | Expected Outcome & Next Step |

|---|---|---|

| 1. Diagnose | Perform an anti-join to isolate records from each source that failed to merge. Examine the IDs for these records. | Identification of mismatch pattern: e.g., leading zeros, appended suffixes ("_01"), or typographical errors. |

| 2. Clean | Create a consistent ID cleaning protocol (e.g., strip whitespace, standardize case, remove non-alphanumeric characters). Apply it to both datasets and re-attempt the merge. | Increased match rate. If problem persists, proceed to step 3. |

| 3. Investigate | If using a secondary key (like date of birth), check for formatting inconsistencies (MM/DD/YYYY vs. DD-MM-YYYY). For date-time linkages, ensure time zones are aligned. | Reconciliation of format discrepancies. |

| 4. Validate | For a sample of successfully matched and unmatched records, perform a manual audit against the primary source (e.g., EHR or master subject log) to verify the correctness of your linking logic. | Confirmation that the automated linkage is accurate. High error rates indicate a flaw in the core logic, not just formatting. |

| 5. Document | Record the exact cleaning rules, merge logic, and the final match rate. Archive the code used. This is critical for auditability and protocol replication [5] [6]. | A reproducible, documented data linkage pipeline. |
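Steps 1, 2, and 5 of the table above can be illustrated with a short sketch. The subject IDs are invented, and the cleaning rules are only the three named in step 2 (strip whitespace, standardize case, remove non-alphanumeric characters); a real study would need rules derived from its own mismatch patterns.

```python
import re


def clean_id(raw_id):
    """Strip whitespace, standardize case, and remove non-alphanumeric characters."""
    return re.sub(r"[^A-Za-z0-9]", "", raw_id.strip()).upper()


def anti_join(left_ids, right_ids):
    """IDs present in the left source but absent from the right source."""
    return sorted(set(left_ids) - set(right_ids))


# Hypothetical subject IDs as exported from each system
lab_ids = ["S001", "s-002 ", "S003", "S004"]
field_ids = ["S001", "S002", "S-003", "S005"]

# Step 1 (Diagnose): which lab records fail to match on the raw IDs?
unmatched_before = anti_join(lab_ids, field_ids)

# Step 2 (Clean): apply the same rules to both sources, then retry
lab_clean = [clean_id(i) for i in lab_ids]
field_clean = [clean_id(i) for i in field_ids]
unmatched_after = anti_join(lab_clean, field_clean)

# Step 5 (Document): record the final match rate alongside the rules used
match_rate = len(set(lab_clean) & set(field_clean)) / len(set(lab_clean))
```

Records that remain unmatched after cleaning (here a single lab ID) are the ones worth carrying into the manual audit in step 4.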

Implementing Solutions: Protocols for Robust MLD Integration

Protocol: Establishing an MLD-Field Data Integration Pipeline

Objective: To create a scalable, reproducible workflow for merging, cleaning, and curating MLD with field research data for analysis.

Materials: Source MLD (e.g., from EHR, LIS, omics core), Source Field Data (e.g., REDCap, eCRF, sensor databases), Secure computational environment (e.g., HIPAA-compliant server or cloud), Data manipulation tools (R, Python, SQL).

Methodology:

- Pre-Merge Curation:

- MLD: Standardize test names to LOINC. Convert all units to a common standard. Flag values that are outside physiologically plausible ranges for review [7].

- Field Data: Harmonize categorical variables (e.g., smoking status: "current" vs. "yes"). Resolve date-time formats to ISO 8601 standard (YYYY-MM-DD).

- Deterministic Linkage:

- Merge datasets using a trusted, study-assigned primary key (Subject ID).

- For temporal linkage, define a decision rule (e.g., "assign the field survey completed closest to, but before, the lab draw date").

- Post-Merge Quality Control (QC):

- Generate a QC report detailing: final sample count, number of missing values per key variable, summary statistics for key analytes by study group.

- Visually inspect distributions (histograms, boxplots) of key MLD variables before and after merge to detect obvious linkage errors that create bias.

- Versioned Archiving:
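The temporal decision rule given under Deterministic Linkage above ("assign the field survey completed closest to, but before, the lab draw date") might be coded as follows. The record layout and dates are hypothetical, and treating "before" as strictly earlier than the draw date is an assumption that a study protocol should state explicitly.

```python
from datetime import date


def latest_survey_before(surveys, draw_date):
    """Return the survey completed closest to, but strictly before, draw_date (or None)."""
    prior = [s for s in surveys if s["completed"] < draw_date]
    return max(prior, key=lambda s: s["completed"]) if prior else None


# Hypothetical surveys for one subject, with dates parsed from ISO 8601 strings
surveys = [
    {"survey_id": "F1", "completed": date.fromisoformat("2024-01-10")},
    {"survey_id": "F2", "completed": date.fromisoformat("2024-02-20")},
    {"survey_id": "F3", "completed": date.fromisoformat("2024-03-05")},
]
draw_date = date.fromisoformat("2024-03-01")
linked = latest_survey_before(surveys, draw_date)
```

Writing the rule as a small, named function makes it easy to archive with the pipeline and to audit later.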

Protocol: Validating an AI/ML Model Built on Integrated MLD and Field Data

Objective: To rigorously assess the performance and generalizability of a predictive model using integrated data before clinical or field application [1].

Methodology:

- Data Partitioning: Split the integrated dataset into three distinct sets: Training (70%), Validation (15%), and Hold-out Test (15%). The split must be performed at the subject level to prevent data leakage and should preserve the distribution of the outcome variable (stratified sampling).

- Model Training & Tuning: Train the model on the Training set. Use the Validation set for hyperparameter tuning and feature selection. Do not allow any information from the Test set to influence this process.

- Performance Assessment: Evaluate the final, tuned model only once on the Hold-out Test Set. Report standard metrics (AUC-ROC, accuracy, precision, recall, F1-score) with confidence intervals.

- External Validation (Gold Standard): To test true field generalizability, obtain performance metrics on a completely external dataset from a different institution, geographic region, or patient population [1]. A significant drop in performance indicates overfitting to site-specific artifacts in your original integrated data.

- Bias & Fairness Audit: Evaluate model performance across key demographic subgroups (e.g., sex, race, age) within your test and external sets to identify potential disparate impact [1].
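The subject-level, stratified 70/15/15 partition from the Data Partitioning step can be sketched as below. It assumes one outcome label per subject and uses invented subject IDs; rows (visits, samples) then inherit the split of their subject, which is what prevents leakage.

```python
import random
from collections import defaultdict


def split_subjects(subject_outcomes, fractions=(0.70, 0.15, 0.15), seed=0):
    """Assign each subject to train/validation/test, stratified by subject-level outcome."""
    rng = random.Random(seed)
    by_outcome = defaultdict(list)
    for subject, outcome in subject_outcomes.items():
        by_outcome[outcome].append(subject)

    assignment = {}
    for subjects in by_outcome.values():
        subjects.sort()           # fixed order so the seed fully determines the split
        rng.shuffle(subjects)
        n_train = round(fractions[0] * len(subjects))
        n_val = round(fractions[1] * len(subjects))
        for i, subject in enumerate(subjects):
            if i < n_train:
                assignment[subject] = "train"
            elif i < n_train + n_val:
                assignment[subject] = "validation"
            else:
                assignment[subject] = "test"
    return assignment


# 40 hypothetical subjects, half with outcome 1, three visits each
subject_outcomes = {f"S{i:03d}": i % 2 for i in range(40)}
assignment = split_subjects(subject_outcomes)
records = [(subject, visit) for subject in subject_outcomes for visit in range(3)]
train_rows = [r for r in records if assignment[r[0]] == "train"]
test_subjects = [s for s, part in assignment.items() if part == "test"]
```

Because the split is keyed on the subject rather than the row, no subject can contribute visits to more than one partition.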

| Tool / Resource Category | Specific Examples & Standards | Primary Function in MLD Integration |

|---|---|---|

| Data Standards & Terminologies | LOINC (lab test codes), SNOMED CT (clinical findings), CDISC SDTM/ADaM (clinical trial data structure) [7], HL7 FHIR (data exchange). | Provides common vocabulary for data elements, enabling interoperability and consistent meaning across different sources [4] [7]. |

| Data Management Systems | Laboratory Information Management System (LIMS), Clinical Data Management System (CDMS) like Oracle Clinical or Medidata Rave [7], Electronic Health Record (EHR). | Source systems for MLD and clinical data; modern systems offer APIs for structured data extraction, which is preferable to unstructured export [2]. |

| Computational & Analysis Environments | R (with tidyverse, limma, caret packages), Python (with pandas, scikit-learn, PyTorch/TensorFlow libraries), Secure Cloud Platforms (AWS, GCP, Azure with BAA). | Provide the environment for data wrangling, harmonization, statistical analysis, and machine learning model development on integrated datasets [1]. |

| Repository & Sharing Platforms | General: GitHub (code), Figshare, Zenodo (datasets). Biomedical: dbGaP, EGA, The Cancer Imaging Archive (TCIA). Protocols: protocols.io [5]. | Facilitate sharing of analysis code, de-identified datasets, and detailed experimental protocols, which is critical for replicability and collaborative science [5]. |

| Quality Control & Profiling Tools | Great Expectations (Python), dataMaid (R), OpenRefine. | Automate data validation checks, generate data quality reports, and identify outliers or inconsistencies in the integrated dataset before analysis [2]. |

A foundational challenge in biomedical and clinical research is the translational gap between controlled laboratory findings and real-world field applications. Research conducted in controlled laboratory settings is characterized by standardized protocols, homogeneous samples, and managed variables, which are essential for establishing internal validity and clear causal relationships [8]. In contrast, field research—encompassing real-world evidence from clinical settings, wearables, and population health data—operates within environments defined by data heterogeneity, system complexity, and dynamic changes over time [9] [10]. The core thesis of modern translational science argues that failing to account for these three key characteristics when using laboratory data can lead to models and conclusions that are not generalizable, potentially resulting in ineffective diagnostics or therapies in real-world conditions [11] [8].

This Technical Support Center is designed to assist researchers, scientists, and drug development professionals in navigating these specific challenges. The following guides and resources provide actionable methodologies for data integration, troubleshooting for common analytical pitfalls, and frameworks to strengthen the validity of research that bridges the laboratory-field divide.

Core Data Challenges: Definitions and Impact

Effectively managing data for translational research requires a clear understanding of the three interdependent challenges. The table below summarizes their definitions, primary causes, and consequences for research outcomes.

Table 1: Core Data Challenges in Translational Research

| Characteristic | Definition | Primary Causes | Impact on Research |

|---|---|---|---|

| Data Heterogeneity | The high degree of variability in data formats, structures, sources, and semantic meaning [9]. | Use of disparate software systems (LIS, EHR, imaging archives) [9]; Lack of standardized terminology (e.g., LOINC, SNOMED CT) [11]; Regional and institutional protocol differences. | Creates "data silos"; impedes data pooling and meta-analysis; introduces noise that masks true biological signals [12]. |

| Complexity | The multidimensional nature of data arising from numerous interacting variables, scales, and data types [9] [10]. | Multimodal data (numerical, text, image, signal) [11]; High-dimensional omics data; Interaction of genetic, environmental, and social determinants of health. | Makes causal inference difficult; risks model overfitting; requires sophisticated analytical methods (e.g., AI/ML) and substantial computational resources. |

| Dynamic Changes Over Time | The non-static nature of data, where distributions, relationships, and patterns evolve [12] [10]. | Disease progression; Patient mobility and changing lifestyles; Evolution of clinical protocols and assay technology; Societal and environmental shifts. | Leads to "model drift" where predictive performance decays; threatens the long-term validity of research conclusions and clinical decision support tools. |

Technical Support: Troubleshooting Guide

This guide addresses common operational problems encountered when working with heterogeneous and complex real-world data. Follow the steps sequentially for each issue.

Issue 1: Inability to Integrate or Analyze Disparate Datasets

- Problem: Data from different sources (e.g., separate lab systems, historical vs. new records) cannot be combined for a unified analysis [9].

- Diagnosis: This is typically caused by a lack of syntactic and semantic interoperability.

- Resolution Path:

- Audit Data Sources: Catalogue all data sources, noting their native formats, coding systems, and governance policies [9].

- Map to Standard Terminologies: Implement a process to map local test codes to universal standards like LOINC (Logical Observation Identifiers Names and Codes) for laboratory data. Be aware that automated mapping tools can have error rates of 4.6% to 19.6% and may require expert validation [11].

- Utilize Interoperability Frameworks: Employ standardized data exchange protocols and models, such as HL7 (Health Level Seven) or FHIR (Fast Healthcare Interoperability Resources), to structure the data pipeline [13].

- Build a Canonical Data Model: Create or adopt a unified data model (e.g., OMOP CDM) within a Clinical Data Warehouse (CDW). This transforms heterogeneous sources into a common format suitable for analysis [9].

Issue 2: Machine Learning Model Performance Degrades on New or External Data

- Problem: A model trained on one dataset performs poorly when validated on data from a different time period, location, or patient population [12].

- Diagnosis: The problem is likely due to data heterogeneity, which produces a non-IID data environment (one in which the data are not independent and identically distributed), combined with temporal drift [12].

- Resolution Path:

- Test for Data Shift: Use statistical tests (e.g., Kolmogorov-Smirnov) to compare feature distributions between your training set and the new deployment data.

- Employ Robust Modeling Techniques:

- For spatial/population heterogeneity, consider Federated Learning (FL). FL trains algorithms across decentralized devices/servers without sharing raw data, helping models generalize across heterogeneous sites [12].

- Use algorithms designed for non-IID data, such as FedProx, which modifies the Federated Averaging (FedAvg) algorithm to handle heterogeneity more effectively [12].

- Implement Continuous Validation & Retraining: Establish a pipeline to regularly monitor model performance with incoming data and trigger retraining cycles with updated datasets to mitigate temporal drift.
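For the "Test for Data Shift" step above, the two-sample Kolmogorov-Smirnov statistic is simple enough to compute directly, which makes clear what the test measures. This is a sketch with synthetic integer data; the critical value uses the standard large-sample approximation, and in practice a library routine such as SciPy's `ks_2samp` would normally be preferred.

```python
import math
from bisect import bisect_right


def ks_statistic(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    d = 0.0
    for x in a + b:  # the maximum gap is always attained at an observed value
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d


def ks_critical(n, m, alpha=0.05):
    """Large-sample critical value; reject 'same distribution' if D exceeds it."""
    c = math.sqrt(-0.5 * math.log(alpha / 2))
    return c * math.sqrt((n + m) / (n * m))


# Synthetic feature values: the deployment data are shifted upward by 20 units
training = list(range(50))
deployment = [x + 20 for x in training]

d = ks_statistic(training, deployment)
shift_detected = d > ks_critical(len(training), len(deployment))
```

Running the check per feature, and correcting for the number of features tested, gives a first screen for where the training and deployment data diverge.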

Issue 3: Results Are Not Reproducible or Generalizable

- Problem: Findings from a controlled study cannot be replicated in a different setting or scaled to a broader population [8] [10].

- Diagnosis: The Modifiable Areal Unit Problem (MAUP) and scale-dependence of patterns may be at play. Conclusions drawn at one level of data aggregation (e.g., a specific hospital lab) may not hold at another (e.g., a national registry) [10].

- Resolution Path:

- Frame Analysis with Explicit Context: Always document the spatial, temporal, and demographic context of your data. Conduct multiscale analysis where possible to see if patterns persist across different levels of aggregation [10].

- Harmonize Laboratory Results: Ensure comparability across sites by focusing on standardization (using reference methods and materials) and harmonization (adjusting results to make them comparable) [11].

- Adopt Hybrid Research Designs: Bridge the gap by using linked laboratory-field studies. Generate hypotheses in controlled lab settings and validate them in real-world field studies, and vice-versa [8].

Detailed Experimental Protocols

Protocol 1: Data Harmonization for Multi-Center Laboratory Studies

Objective: To integrate quantitative laboratory test results from multiple institutions for joint analysis.

Background: Direct comparison of test results across labs is confounded by differences in assays, instruments, and calibrators [11].

Materials: Raw lab data from each partner; Reference method and material information; Statistical software (R, Python).

Procedure:

- Terminology Alignment: Map all local test codes to a common standard (LOINC) [11].

- Bias Assessment: For each test (LOINC code), have all labs assay a panel of common reference samples. Use linear regression to determine systematic differences (slope, intercept) between each lab's method and the chosen reference method.

- Result Transformation: Apply the derived calibration equations to transform each institution's patient results into a harmonized scale.

- Quality Control: Establish ongoing quality assurance using shared control materials to monitor for future drift.
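The Bias Assessment and Result Transformation steps can be illustrated with ordinary least squares on a shared reference panel. The panel values below are invented, and plain least squares is used only for simplicity: it treats the reference values as error-free, which is an assumption a method-comparison study may not accept (Deming or Passing-Bablok regression are common alternatives).

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept of y on x."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical reference panel: values assigned by the reference method
reference = [2.0, 4.0, 6.0, 8.0, 10.0]
# The same samples as measured by one partner lab (reads 10% high plus 0.5)
lab_measured = [2.7, 4.9, 7.1, 9.3, 11.5]

# Bias assessment: lab_measured is modelled as slope * reference + intercept
slope, intercept = fit_line(reference, lab_measured)


def harmonize_result(value):
    """Invert the lab's calibration line to express a result on the reference scale."""
    return (value - intercept) / slope


harmonized_patient_value = harmonize_result(7.1)
```

One such calibration line would be fitted per lab and per test, and refitted whenever the ongoing quality control indicates drift.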

Protocol 2: Simulating and Mitigating Heterogeneity in Federated Learning

Objective: To evaluate and improve the robustness of an AI model trained on heterogeneous, distributed medical imaging data.

Background: In Federated Learning, data heterogeneity across clients (e.g., hospitals) can significantly degrade global model performance [12].

Materials: A partitioned medical imaging dataset (e.g., COVIDx CXR-3 [12]); FL simulation framework (e.g., PySyft, NVIDIA FLARE).

Procedure:

- Partition Data: Split the dataset into N client pools to simulate realistic heterogeneity:

- IID (Control): Shuffle and randomly allocate data to clients.

- non-IID (Experimental): Partition data by label (disease type), source institution, or acquisition time to create skewed client distributions [12].

- Baseline Training: Train a model using the standard Federated Averaging (FedAvg) algorithm on the non-IID data. Record convergence rate and final accuracy.

- Intervention Training: Train an identical model architecture using the FedProx algorithm, which adds a proximal term to the local loss function to constrain client updates and reduce drift [12].

- Evaluation: Compare the validation accuracy, convergence stability, and fairness (performance across all client types) between the FedAvg and FedProx models.
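To show what the proximal term in the Intervention Training step does, the toy sketch below runs a local update on a one-parameter linear model. It assumes the usual FedProx form of the local objective, F_k(w) + (mu/2)(w - w_global)^2; the clients, data, learning rate, and mu value are all invented, and a real experiment would use an FL framework and an imaging model.

```python
def local_update(w_global, data, mu, lr=0.05, steps=50):
    """Gradient descent on the local objective F_k(w) + (mu / 2) * (w - w_global) ** 2."""
    w = w_global
    for _ in range(steps):
        # Gradient of the local squared-error loss for the model y = w * x
        grad_loss = sum((w * x - y) * x for x, y in data) / len(data)
        # Gradient of the proximal term; mu = 0 gives a plain FedAvg-style local step
        grad_prox = mu * (w - w_global)
        w -= lr * (grad_loss + grad_prox)
    return w


w_global = 1.0
# Two hypothetical non-IID clients whose local optima are w = 3 and w = -1
client_a = [(1.0, 3.0), (2.0, 6.0)]
client_b = [(1.0, -1.0), (2.0, -2.0)]

fedavg_locals = [local_update(w_global, c, mu=0.0) for c in (client_a, client_b)]
fedprox_locals = [local_update(w_global, c, mu=1.0) for c in (client_a, client_b)]

# How far each client's local model moved away from the global model
fedavg_drift = [abs(w - w_global) for w in fedavg_locals]
fedprox_drift = [abs(w - w_global) for w in fedprox_locals]
```

With `mu=0` each client runs almost all the way to its own optimum, whereas the proximal term holds both clients closer to the shared model. That constraint on client updates is the mechanism the protocol is designed to evaluate.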

Frequently Asked Questions (FAQs)

Q1: Our historical clinical data is messy and stored in old formats. Is it worth integrating, or should we focus only on new, clean data? A: Historical data is invaluable for studying long-term trends and rare outcomes [9]. The key is a structured integration process: start with a pilot project to assess quality, use automated "data scrubbing" tools for formatting and error correction [9], and integrate it into a modern CDW. The value of longitudinal insights often outweighs the cleanup cost.

Q2: What is the most common mistake in standardizing laboratory data for big data research? A: The most common mistake is assuming that mapping local codes to LOINC is a one-time, solved problem. Studies show persistent error rates in LOINC mapping (e.g., 4.6%-19.6%) [11]. Relying solely on automated tools without expert clinical and laboratory review leads to semantic errors that corrupt the entire dataset. Regular audits of code mappings are essential.

Q3: How can we protect patient privacy when sharing data or models across institutions for research? A: Beyond traditional anonymization, which can reduce data utility [9], consider privacy-preserving technologies:

- Federated Learning (FL): Only model updates (weights/gradients) are shared, not patient data [12].

- Synthetic Data Generation: Create artificial datasets that mimic the statistical properties of the real data without containing any real patient records.

- Secure Multi-Party Computation (MPC): Allows computation on encrypted data across institutions.

Q4: Our field-collected sensor data is extremely noisy and has many missing intervals. How can we make it usable for linking to precise lab results? A: This is a classic complexity challenge. Develop a robust preprocessing pipeline:

- Signal Processing: Apply filters to remove high-frequency noise and artifacts.

- Imputation with Context: Use advanced imputation methods (e.g., MICE, deep learning models) that consider temporal patterns and correlations with other sensor streams, rather than simple mean replacement.

- Feature Engineering: Extract stable, summary features (e.g., circadian rhythm parameters, variability indices) over meaningful epochs instead of relying on raw, moment-to-moment readings. This can create more reliable variables for correlation with lab values.
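A very small version of such a pipeline is sketched below, using a rolling median as the noise filter and a coverage threshold before features are extracted. It is a simple stand-in, not MICE or a learned imputation model; the window size, the 50% coverage threshold, and the sample values are illustrative assumptions.

```python
from statistics import median, pstdev


def rolling_median(values, window=3):
    """Centered rolling median that ignores missing (None) samples.

    Short gaps are filled from neighbouring samples as a side effect.
    """
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        neighbours = [v for v in values[max(0, i - half): i + half + 1] if v is not None]
        smoothed.append(median(neighbours) if neighbours else None)
    return smoothed


def epoch_features(values, min_coverage=0.5):
    """Summary features for one epoch, or None if too much of the epoch is missing."""
    present = [v for v in values if v is not None]
    if len(present) < min_coverage * len(values):
        return None
    return {
        "median": median(present),
        "variability": pstdev(present),
        "coverage": len(present) / len(values),
    }


# Hypothetical heart-rate epoch: 250 is an artifact spike, None marks dropouts
raw = [60, 61, 250, 62, 63, None, 64, None, None, 65]
smoothed = rolling_median(raw)
features = epoch_features(smoothed)
raw_coverage = sum(v is not None for v in raw) / len(raw)
```

Coverage is worth reporting on the raw signal as well as the smoothed one, since the filter hides short gaps that may still matter when judging how much to trust an epoch.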

The Scientist's Toolkit

Table 2: Essential Tools & Resources for Managing Translational Data Challenges

| Tool/Resource Category | Specific Examples | Primary Function |

|---|---|---|

| Terminology Standards | LOINC [11], SNOMED CT [11], UMLS | Provides universal codes for medical concepts, enabling semantic interoperability across datasets. |

| Interoperability Frameworks | HL7 FHIR [13], DICOM, OMOP CDM | Defines APIs and data models for exchanging healthcare information electronically between systems. |

| Privacy-Preserving Analytics | Federated Learning Frameworks (e.g., PySyft, TensorFlow Federated) [12], Differential Privacy Tools | Enables collaborative model training and analysis without centralizing or directly sharing sensitive raw data. |

| Data Quality & Harmonization | R (*pointblank*, *validate* packages), Python (*great_expectations*), CAP surveys [11] | Profiles data, validates against rules, and assesses inter-laboratory variability to enable result calibration. |

| Workflow & Pipeline Management | Nextflow, Snakemake, Apache Airflow | Orchestrates complex, reproducible data preprocessing and analysis pipelines across heterogeneous computing environments. |

Visual Guides to Processes and Relationships

[Figure: Data Integration and Modeling Workflow for Federated Learning]

[Figure: The Federated Learning Cycle for Privacy-Preserving Analysis]

A fundamental challenge in applied sciences, from environmental engineering to drug development, is translating validated laboratory findings into effective real-world solutions [14]. This "lab-field disconnect" arises because controlled experimental environments inevitably simplify the complex, multivariate conditions of the natural world [14]. A striking example is the attempted use of cloud seeding to mitigate severe air pollution in India's National Capital Region. Despite scientific principles suggesting low atmospheric moisture would prevent success, the project proceeded based on laboratory confidence, resulting in predictable failure and no measurable improvement in air quality [14]. This incident underscores a critical thesis: successful translation requires more than robust lab data; it demands rigorous validation of contextual feasibility, anticipation of variable field conditions, and systematic troubleshooting to bridge the gap between theory and practice [14].

This Technical Support Center is designed to help researchers, scientists, and drug development professionals anticipate, diagnose, and solve problems that arise when moving experiments from the controlled lab to the variable field. The guidance below provides a structured troubleshooting methodology, detailed experimental protocols for validation, and essential resources to build resilience into your translational research.

Systematic Troubleshooting Methodology

Effective troubleshooting is a core scientific skill that moves from observation to corrective action through logical deduction [15] [16]. The following six-step framework, adapted for the lab-field context, provides a disciplined approach to diagnosing translational failures [16].

Table 1: Six-Step Troubleshooting Framework for Lab-Field Translation

| Step | Key Action | Application to Lab-Field Disconnect |

|---|---|---|

| 1. Identify | Define the specific failure without assuming cause. | State the observed discrepancy between expected (lab) and actual (field) results precisely. |

| 2. Hypothesize | List all plausible root causes. | Consider environmental variables, scale-up effects, material differences, and procedural drift. |

| 3. Investigate | Gather existing data and historical context. | Review all lab and field logs, environmental data, and prior similar translations. |

| 4. Eliminate | Rule out causes contradicted by evidence. | Use collected data to narrow the list of hypotheses to the most probable few. |

| 5. Test | Design & execute targeted diagnostic experiments. | Conduct small-scale, controlled field tests or simulated stress tests in the lab. |

| 6. Resolve | Implement fix and update protocols. | Apply the solution, document the change, and adjust standard operating procedures (SOPs) to prevent recurrence. |

Implementing the Framework: When a problem arises, convene a focused "Pipettes and Problem Solving" session [15]. A team leader presents the failed field scenario and mock data. The group must then collaboratively propose the most informative diagnostic experiments to identify the root cause, with the leader providing mock results for each proposed test. This exercise builds critical thinking and emphasizes efficient, evidence-based deduction over guesswork [15].

Troubleshooting Scenarios & Experimental Protocols

Here are three common translational challenges, with specific diagnostic protocols to identify their root causes.

Scenario 1: Inconsistent Analytical Results in Field-Deployed Sensors

- Problem: Sensors calibrated in the lab show high variance, drift, or signal loss when deployed for environmental monitoring (e.g., air/water quality).

- Diagnostic Protocol:

- Co-location Test: Deploy a known, lab-verified reference sensor immediately adjacent to the failing field unit under identical conditions for 24-72 hours [14].

- Controlled Contamination Check: In the lab, expose a duplicate sensor to a clean control matrix and a matrix spiked with potential field interferents (e.g., dust, humidity, specific chemicals known to be present).

- Power & Data Log Audit: Install a continuous logger to monitor the field sensor's power supply voltage and data transmission integrity, checking for correlations between signal anomaly and power or data dropout events.

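- Expected Outcomes: If the reference sensor reads normally while the field unit does not, the fault is specific to that unit; if both show the same anomaly, an environmental effect is more likely. Consistent failure in the contamination test indicates interference from the sample matrix. Correlation with power or data dropouts identifies an infrastructure problem.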
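The co-location comparison can be summarized numerically. The following is a minimal sketch, assuming paired readings taken at the same timestamps from the reference sensor and the field unit; the function name `colocation_summary` and the returned keys are hypothetical and not part of any cited protocol. It reports the coefficient of determination (R²), the regression slope, and the mean bias.

```python
def colocation_summary(reference, field_unit):
    """Summarize agreement between paired reference and field-unit readings."""
    if len(reference) != len(field_unit) or len(reference) < 3:
        raise ValueError("need at least 3 paired readings of equal length")
    n = len(reference)
    mean_ref = sum(reference) / n
    mean_fld = sum(field_unit) / n
    # Sums of squares and cross-products around the means.
    sxx = sum((x - mean_ref) ** 2 for x in reference)
    syy = sum((y - mean_fld) ** 2 for y in field_unit)
    sxy = sum((x - mean_ref) * (y - mean_fld)
              for x, y in zip(reference, field_unit))
    if sxx == 0 or syy == 0:
        raise ValueError("readings show no variance; R^2 is undefined")
    return {
        "r_squared": (sxy * sxy) / (sxx * syy),  # squared Pearson correlation
        "slope": sxy / sxx,                      # field reading per unit of reference
        "mean_bias": mean_fld - mean_ref,        # constant offset of the field unit
    }
```

A high R² with a non-zero bias would suggest a calibration offset rather than random noise, whereas a low R² suggests the field unit is not tracking the reference at all. Any acceptance threshold is a project-specific choice.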

Scenario 2: Failed Biological Agent Delivery in Open Environments

- Problem: A biological control agent (e.g., bacteria, enzyme) effective in lab assays fails to degrade its target pollutant in a field trial [14].

- Diagnostic Protocol:

- Field Sample Viability Assay: Retrieve a sample of the deployed agent from the field site at regular intervals (0h, 6h, 24h). Immediately assay its activity in vitro using the standard lab protocol.

- Environmental Stressor Simulation: In the lab, subject the agent to individual and combined field conditions (e.g., specific UV intensity, pH range, temperature flux, presence of non-target chemicals) and measure activity loss over time.

- Formulation & Delivery Check: Replicate the exact field formulation and application method (e.g., spraying apparatus, carrier solution) in a contained, controlled outdoor mesocosm to isolate delivery failure from environmental factors.

- Expected Outcomes: Rapid loss of activity in retrieved samples suggests environmental degradation. Success in the mesocosm but failure in the open field points to an uncontained environmental variable, rather than the formulation or delivery method, as the cause.
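The viability time course (0h, 6h, 24h) can be converted into an activity half-life. The sketch below assumes first-order decay, which is a modeling assumption and not something the protocol itself guarantees; it fits a straight line to the natural log of activity against time. The function name `activity_half_life` is hypothetical.

```python
import math

def activity_half_life(times_h, activities):
    """Estimate half-life (hours) assuming first-order decay: A(t) = A0 * exp(-k t)."""
    # Zero or negative activities cannot be log-transformed, so they are skipped.
    pairs = [(t, math.log(a)) for t, a in zip(times_h, activities) if a > 0]
    if len(pairs) < 2:
        raise ValueError("need at least 2 time points with positive activity")
    n = len(pairs)
    mean_t = sum(t for t, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    stt = sum((t - mean_t) ** 2 for t, _ in pairs)
    if stt == 0:
        raise ValueError("time points must not all be identical")
    sty = sum((t - mean_t) * (y - mean_y) for t, y in pairs)
    k = -sty / stt  # decay constant is the negative slope of ln(activity) vs time
    if k <= 0:
        return math.inf  # no measurable loss of activity
    return math.log(2) / k
```

Comparing the half-life of field-retrieved samples with that measured under each simulated stressor helps indicate which stressor best reproduces the field decay.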

Scenario 3: Loss of Data Integrity in Field Data Collection

- Problem: Data collected for secondary research use in the field is incomplete, inconsistent, or poorly documented, making analysis unreliable [17].

- Diagnostic Protocol (Root Cause Analysis):

- Process Shadowing: Observe and document the actual field data collection and entry workflow without intervention. Compare it to the official written SOP.

- Data Traceability Audit: Select a random sample of field records. Attempt to trace each data point back to its raw source (e.g., instrument readout, manual entry log) and forward through all processing steps.

- Stakeholder Interview: Briefly interview personnel involved at different stages (collection, entry, validation) about their understanding of the data fields, common problems, and workarounds they use [17].

- Expected Outcomes: This workflow analysis will reveal root causes such as ambiguous field definitions, impractical SOPs, software design flaws enabling inconsistent entry, or lack of training [17]. The solution often involves process redesign, not just personnel correction.

Table 2: Summary of Key Diagnostic Protocols

| Scenario | Primary Diagnostic | Key Metric | Indicates |
|---|---|---|---|
| Sensor Variance | Co-location Test | Coefficient of Determination (R²) | Instrument fault vs. environmental effect |
| Agent Failure | Field Sample Viability Assay | Activity Half-life (t½) | Agent degradation kinetics in situ |
| Data Integrity | Process Shadowing | SOP Deviation Frequency | Problems in workflow design or training |

The Scientist's Toolkit: Research Reagent & Resource Solutions

Equipping field research properly is essential for robust data. Below are key solutions for common translational challenges.

Table 3: Essential Research Reagent & Resource Solutions

| Item / Solution | Function & Rationale | Example Application |
|---|---|---|
| Stable Isotope-Labeled Tracers | Provide an internal, chemically identical standard that is distinguishable by mass spectrometry. Control for recovery losses and matrix effects during field sample analysis. | Quantifying the environmental degradation rate of a pharmaceutical compound in wastewater. |
| Encapsulated/Protected Reagents | Physical or chemical barriers (e.g., liposomes, silica gels) protect active ingredients (enzymes, bacteria) from premature environmental degradation (UV, pH) [14]. | Delivering a bioremediation agent to a specific soil depth before release. |
| Field-Portable Positive Controls | Lyophilized or stabilized materials that generate a known signal. Used for daily verification of field instrument and assay performance on-site. | Validating a lateral flow assay for pathogen detection at a remote agricultural site. |
| Electronic Laboratory Notebooks (ELN) with Offline Mode | Ensure consistent, timestamped, and structured data capture in the field, syncing when connectivity is restored. Prevent data loss and transcription errors [17]. | Documenting ecological survey data or clinical sample collection in low-connectivity areas. |
| Modular Environmental Simulation Chambers | Small, portable chambers that allow controlled application of single stressors (e.g., light, temperature) to field samples in situ before full-scale deployment. | Testing the relative impact of UV vs. temperature on a new solar panel coating's efficiency. |

Frequently Asked Questions (FAQs)

Q1: Our field study failed despite exhaustive lab testing. What should we analyze first? A1: Begin with a rigorous review of contextual feasibility, which is often overlooked [14]. Systematically compare every condition assumed in the lab (e.g., stable temperature, pure reagents, uniform application) with the measured realities of the field site. The largest discrepancy is often the primary suspect.

Q2: How can we design better lab experiments to predict field outcomes? A2: Employ "Stress Testing" in your lab phase. Do not just test under ideal conditions. Design experiments that introduce key field variables one at a time and in combination (e.g., temperature cycles, impure substrates, intermittent application). This builds a performance envelope for your system.

Q3: What is the most common source of data quality issues when moving to field studies? A3: Inconsistent documentation and process drift [17]. In the field, protocols are often adapted on the fly. The solution is to use simplified, field-optimized SOPs with mandatory single-point data entry (like a streamlined digital form) and clear rules for documenting any deviation immediately [17].

Q4: How do we manage undefined or highly variable field inputs in our assays? A4: Use a standard addition method or an internal standard. By spiking field samples with known quantities of the target analyte and measuring the change in signal, you can account for matrix effects that interfere with quantification.
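The standard addition calculation in A4 can be sketched as follows. This assumes a linear detector response and negligible dilution from spiking; the function name `standard_addition` is hypothetical. The signal is regressed on the added concentration, and the original concentration is recovered as the intercept divided by the slope.

```python
def standard_addition(added_conc, signals):
    """Estimate analyte concentration in the unspiked sample by standard addition.

    Model: signal = slope * (c0 + added), so c0 = intercept / slope.
    """
    if len(added_conc) != len(signals) or len(added_conc) < 2:
        raise ValueError("need at least 2 paired (added concentration, signal) points")
    n = len(added_conc)
    mean_x = sum(added_conc) / n
    mean_y = sum(signals) / n
    sxx = sum((x - mean_x) ** 2 for x in added_conc)
    if sxx == 0:
        raise ValueError("added concentrations must differ")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(added_conc, signals))
    slope = sxy / sxx
    if slope == 0:
        raise ValueError("signal does not respond to added analyte")
    intercept = mean_y - slope * mean_x
    return intercept / slope  # same units as added_conc
```

Because the calibration line is built in the field sample's own matrix, proportional matrix effects change the slope but cancel out of the ratio.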

Q5: Who should be involved in troubleshooting a major translational failure? A5: Form an ad-hoc team spanning domains [14]. Include the lab scientists who developed the technology, the field engineers who deployed it, and a domain expert for the field environment (e.g., an atmospheric scientist, an agronomist) [14]. Avoid having decisions driven solely by one perspective [14].

Visualizing the Workflow: From Lab Validation to Field Translation

The following diagrams map the critical pathways for successful translation and systematic troubleshooting.

Diagram 1: Ideal Translation & Troubleshooting Pathway. This workflow shows the staged progression from lab to field, with explicit return loops for troubleshooting at each stage if failure criteria are met.

Diagram 2: Systematic Troubleshooting Logic Flow. This logic tree outlines the decision-making process within the six-step framework, emphasizing the iterative cycle between forming hypotheses and testing them with evidence.

Technical Support Center: Troubleshooting Translational Research Data Integration

This technical support center addresses the critical barriers that researchers, scientists, and drug development professionals face when linking controlled laboratory data with complex real-world field or clinical data. Isolated data, incompatible systems, and stringent regulations can stall translational research. The following guides and FAQs provide practical strategies to diagnose, troubleshoot, and overcome these challenges.

Troubleshooting Guide: Common Data Integration Failures

Issue 1: Inaccessible or Isolated Laboratory Data (Data Silos)

- Symptoms: Inability to access another department's experimental data; duplicate data entry across spreadsheets and systems; conflicting conclusions from different teams analyzing similar data [18] [19].

- Root Cause Analysis: Silos often form from decentralized technology purchases, rapid organizational growth, or a culture where departments view data as a proprietary asset [19] [20]. Legacy systems lacking integration capabilities are a major contributor.

- Diagnostic Check:

- Map your primary data sources: How many separate systems (LIMS, ELN, EHR, CRM) hold critical research data?

- Conduct a data audit to identify inconsistencies in sample IDs, units, or terminologies for the same entity across systems [19].

- Resolution Protocol:

- Short-term: Establish a cross-functional data governance committee with representatives from research, clinical, and IT to define data ownership and access policies [19].

- Medium-term: Implement a central data catalog or warehouse to create a unified view without initially moving all data [18] [19]. Enforce standardized data entry templates with required fields (e.g., sample ID format, measurement units) [21].

- Long-term: Develop a master data management (MDM) strategy. Invest in an Integration Platform as a Service (iPaaS) or middleware to create scalable, automated connections between core systems like LIMS and EHRs [20].
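The data audit in the Diagnostic Check above can start with a very small script. The sketch below assumes each system can export records as dictionaries with `system`, `analyte`, and `unit` keys; these field names and the function `audit_units` are hypothetical. It flags any analyte that is reported in more than one unit across systems.

```python
from collections import defaultdict

def audit_units(records):
    """Return analytes recorded with more than one unit across all systems."""
    units_by_analyte = defaultdict(set)
    for rec in records:
        # Normalize the analyte name so "Creatinine" and "creatinine " compare equal.
        analyte = rec["analyte"].strip().lower()
        units_by_analyte[analyte].add(rec["unit"].strip())
    return {analyte: sorted(units)
            for analyte, units in units_by_analyte.items()
            if len(units) > 1}
```

The same pattern extends to sample ID formats or terminology codes by swapping the compared field.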

Issue 2: Failure to Exchange or Interpret Data (Interoperability Gaps)

- Symptoms: Manual re-entry of lab results into clinical trial databases; inability to automatically ingest patient biomarker data from a hospital's EHR into research analysis tools; errors or lost metadata during data transfer [22] [23].

- Root Cause Analysis: Systems use different data formats, lack standard APIs, or implement standards like HL7 or LOINC with local customizations, rendering them unable to "speak the same language" [23] [13].

- Diagnostic Check:

- Identify the data standards (e.g., HL7 FHIR, CDISC, LOINC) used by your internal systems and required by external partners (e.g., clinical trial networks, regulatory submissions).

- Test a sample data transfer for a key data element (e.g., serum creatinine value) from source to destination and verify the value, unit, and associated metadata are preserved.

- Resolution Protocol:

- Short-term: For critical studies, develop and document project-specific data dictionaries and mapping specifications to ensure consistency.

- Medium-term: Advocate for and adopt core interoperability standards within your organization. Prioritize systems that offer robust, standards-based APIs (e.g., HL7 FHIR APIs) for external connectivity [22] [23].

- Long-term: Design a system architecture with interoperability as a core principle. Use Fast Healthcare Interoperability Resources (FHIR) standards to structure data and APIs for exchange, facilitating easier integration with healthcare ecosystems [23].
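The sample transfer test in the Diagnostic Check above can be partly automated. This is a minimal sketch, assuming both the source and destination systems can export the result as a FHIR Observation resource in JSON with a `valueQuantity`; the helper names `extract_key_fields` and `transfer_differences` are hypothetical. It compares the code, value, unit, and timestamp before and after transfer.

```python
def extract_key_fields(observation):
    """Pull the fields that must survive a transfer from a FHIR Observation dict."""
    coding = observation["code"]["coding"][0]
    quantity = observation["valueQuantity"]
    return {
        "code": (coding.get("system"), coding.get("code")),
        "value": quantity.get("value"),
        "unit": quantity.get("unit"),
        "effective": observation.get("effectiveDateTime"),
    }

def transfer_differences(source, destination):
    """Return {field: (source_value, destination_value)} for every mismatch."""
    src = extract_key_fields(source)
    dst = extract_key_fields(destination)
    return {name: (src[name], dst[name]) for name in src if src[name] != dst[name]}
```

An empty result means the checked fields were preserved. It does not prove that other metadata, such as reference ranges or performer details, survived the transfer.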

Issue 3: Compliance Hurdles in Data Sharing for Research

- Symptoms: Aborting a multi-regional trial due to inability to transfer patient data across borders; lengthy delays securing IRB/ethics approval for data reuse; uncertainty over proper legal basis for secondary data analysis [24].

- Root Cause Analysis: Research teams must navigate a complex patchwork of evolving privacy regulations (GDPR, HIPAA, CCPA, etc.) with differing requirements for consent, anonymization, and cross-border data transfer [24].

- Diagnostic Check:

- Classify the data involved: Does it contain direct identifiers, is it pseudonymized, or truly anonymized per relevant regulations?

- Trace the data flow: Identify all geographic jurisdictions where data is collected, processed, and stored.

- Resolution Protocol:

- Short-term: Conduct a focused Legitimate Interests Assessment (LIA) for GDPR or a similar evaluation under other laws to justify necessary data processing for research purposes [24].

- Medium-term: Implement "privacy by design" in study protocols. This includes data minimization, clear data retention schedules, and pre-planning anonymization pipelines for data intended for sharing or biobanking [24].

- Long-term: Develop institutional expertise or partnerships to navigate international data transfer mechanisms (e.g., EU Standard Contractual Clauses, adequacy decisions). Establish a process for continuous monitoring of regulatory changes in key operational regions [24].

Frequently Asked Questions (FAQs)

Q1: Our lab still uses paper notebooks and spreadsheets. What's the first, most impactful step we can take to reduce data errors and improve sharing? A1: The highest-impact first step is implementing a Laboratory Information Management System (LIMS). A LIMS standardizes data collection with required fields, automates data capture from instruments to eliminate manual transcription errors, and uses barcode tracking to prevent sample mix-ups [21]. This creates a single, reliable digital source for experimental data, forming the foundation for future integration.

Q2: We have an EHR for clinical data and a LIMS for lab data, but they don't talk to each other. Is full system replacement the only solution? A2: No, a full replacement is often unnecessary and highly disruptive. A more feasible strategy is to use integration technologies. Application Programming Interfaces (APIs), particularly those using the FHIR standard, can enable secure communication between disparate systems [23] [13]. Middleware or an iPaaS can act as a translation hub, connecting your existing EHR, LIMS, and other data repositories without replacing them [20].

Q3: Can we share and use patient clinical data for our translational research under new privacy laws? A3: Yes, but it requires careful planning and legal justification. Regulations like GDPR do not prohibit research but establish strict conditions. Key pathways include obtaining specific patient consent for research use, or leveraging provisions for scientific research which may allow use of existing data under safeguards like pseudonymization and approved ethics protocols [24]. Always consult with legal and compliance experts.

Q4: What is a "data silo" and why is it specifically harmful for drug development research? A4: A data silo is an isolated repository of data controlled by one department and inaccessible to others [19]. In drug development, this is critically harmful because it:

- Breaks the translational pipeline: Prevents linking early biomarker discovery (lab) with patient response data (clinical trial).

- Increases cost and risk: Leads to redundant experiments, missed safety signals, and delays. Fixing a data error after it propagates through a siloed system can cost 100x more than correcting it at entry [21].

- Hinders innovation: Artificial intelligence and machine learning models require large, integrated datasets to identify complex patterns, which silos actively prevent [18] [20].

The following tables summarize key quantitative data on the prevalence and impact of the fundamental barriers discussed.

Table 1: Documented Business Costs of Data Silos

| Cost Category | Metric / Finding | Primary Impact | Source |
|---|---|---|---|
| Productivity Loss | Employees spend up to 12 hours/week searching for or reconciling data. | Delayed research timelines, inefficient use of skilled staff. | [18] |
| Revenue Impact | Bad data quality costs companies an average of $12.9 million annually. | Reduced R&D efficiency, missed commercialization opportunities. | [19] |
| Error Amplification | The 1-10-100 rule: It costs $1 to validate data at entry, $10 to clean it later, and $100 if an error causes a faulty analysis or decision. | Exponential increase in cost to rectify errors in research or regulatory submissions. | [21] |

Table 2: Interoperability Adoption and Gaps in Healthcare Data

| Metric | Adoption/Performance Level | Implication for Research | Source |
|---|---|---|---|
| EHR Adoption | 96% of acute care hospitals use certified EHRs. | High digital penetration provides a data source, but access for research is not guaranteed. | [22] |
| Core Interoperability | Only 45% of US hospitals can find, send, receive, and integrate electronic health information. | Significant technical and procedural hurdles remain before seamless data exchange is commonplace. | [22] |
| Standardized Data Exchange | Health Information Exchanges (HIEs) use standards like HL7 and FHIR to ensure interoperability. | Adopting these same standards is key for research systems to connect with clinical data networks. | [13] |

Table 3: Overview of Key Evolving Privacy Regulations Affecting Research

| Regulation (Region) | Key Scope for Research | Status & Relevance | Source |
|---|---|---|---|
| GDPR/UK GDPR (EU/UK) | Governs processing of personal data of individuals in the EEA/UK. Requires a lawful basis (e.g., consent, public interest in research) and provides special protections for scientific research. | In effect. Major impact on multi-regional clinical trials and data sharing with European partners. | [24] |
| CCPA/CPRA (California, USA) | Grants consumers rights over their personal information. Research may be exempt under certain conditions, but requirements differ from HIPAA. | In effect. Complicates data governance for US-based studies with Californian participants. | [24] |
| PIPL (China) | Omnibus data privacy law with strict rules on cross-border transfer of personal information, requiring a security assessment or certification. | In effect. A critical consideration for clinical research and collaborations involving data from China. | [24] |

Detailed Methodologies: Key Experiment Protocols

Protocol 1: Qualitative Analysis of Interoperability Barriers

This protocol is based on a peer-reviewed study investigating stakeholder perspectives on interoperability challenges [22].

- Objective: To identify technological, organizational, and environmental barriers to health data interoperability from a multi-stakeholder perspective.

- Design: Cross-sectional qualitative study using semi-structured interviews.

- Sampling: Stratified purposive sampling of key informants (n=24) from four groups: hospital leaders, primary care providers, behavioral health providers, and regional Health Information Exchange (HIE) network leaders. Includes rural and urban subsamples [22].

- Data Collection: Conduct 45-60 minute interviews guided by a framework covering EHR implementation, policy alignment, and interoperability challenges. Record and transcribe interviews verbatim.

- Analysis: Employ directed content analysis using the Technology-Organization-Environment (TOE) framework as a guide to code transcripts and identify themes [22].

- Output: Thematic report detailing barriers (e.g., mismatched system capabilities, lack of leadership support) and facilitators (e.g., strategic alignment with value-based care) for interoperability.

Protocol 2: Systematic Review of Integration Technologies in Laboratory Systems

This protocol outlines the methodology for synthesizing evidence on integration technologies, as performed in a systematic review [13].

- Objective: To synthesize empirical studies on the use of integration technologies for software-to-software communication in Laboratory Information Systems (LIS).

- Data Sources: Systematic search of PubMed database following PRISMA 2020 guidelines.

- Eligibility Criteria: Include empirical studies focusing on integration technologies (APIs, middleware, standards like HL7) enabling communication between LIS and other health information systems.

- Study Selection: Three-phase process: (1) Scoping analysis to define field; (2) Methodological analysis of study designs; (3) Gap analysis to identify research needs.

- Data Extraction: From 28 included studies, extract data on: integration methodologies, data standards used, communication protocols, reported outcomes, and implementation challenges.

- Synthesis: Thematic analysis to identify common successful technologies (e.g., HL7/FHIR), persistent challenges (e.g., data incompatibility, security), and gaps in the literature (e.g., lack of long-term validation studies).

Visualizing Solutions: Workflows and Frameworks

Data Integration Workflow for Translational Research

Framework for Diagnosing and Solving Data Barriers

The Scientist's Toolkit: Essential Solutions & Standards

Table 4: Key Research Reagent Solutions for Data Integration

| Tool Category | Specific Solution / Standard | Primary Function in Translational Research |
|---|---|---|
| Core Data Management | Laboratory Information Management System (LIMS) | Centralizes and standardizes experimental data capture, manages samples, and enforces workflows to ensure data integrity at the source [21]. |
| Interoperability Standards | HL7 Fast Healthcare Interoperability Resources (FHIR) | A modern API-based standard for exchanging healthcare data. Allows research systems to request and receive clinical data (e.g., lab results, patient demographics) from EHRs in a structured format [23] [13]. |
| Terminology Standards | LOINC & SNOMED CT | LOINC: Provides universal codes for identifying lab tests and clinical observations. SNOMED CT: Standardizes clinical terminology for diagnoses, findings, and procedures. Using these ensures consistent meaning of data across systems [23]. |
| Integration Technology | Integration Platform as a Service (iPaaS) / Middleware | Acts as a "central hub" to connect disparate applications (LIMS, EHR, analytics tools) without custom point-to-point coding. Manages data transformation, routing, and API orchestration [20]. |
| Data Storage & Analytics | Cloud Data Warehouse (e.g., Snowflake, BigQuery) | Provides a scalable, centralized repository for integrating structured and semi-structured data from multiple sources. Enables powerful analytics and machine learning on combined lab and clinical datasets [18] [19]. |
| Compliance & Security | Data Anonymization/Pseudonymization Tools | Software that applies techniques like masking, generalization, or perturbation to remove direct and indirect identifiers from personal data, facilitating sharing for research under privacy regulations [24]. |

The Critical Impact of Data Quality and Completeness on Linkage Feasibility

Technical Support Center: Troubleshooting Data Linkage in Translational Research

This technical support center addresses common data quality challenges that researchers face when attempting to link controlled laboratory experiments with complex field or clinical observations. Success in translational science depends on this linkage, yet it is frequently compromised by underlying data issues [25].

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: Our attempt to link laboratory biomarker data with patient field records failed because too many records were excluded for missing values. How do we diagnose and fix this "completeness" problem?

- Diagnosis: This is a classic data completeness issue, where essential data points required for linkage and analysis are absent [26]. The first step is to determine if the problem is with specific attributes (e.g., missing patient IDs or timestamps) or entire records [26].

- Troubleshooting Guide:

- Profile Your Data: Use data profiling tools or scripts to calculate the percentage of missing values in each critical field (e.g., sample ID, collection date, subject identifier) [26].

- Identify Patterns: Determine if missingness is random or systematic (e.g., data always missing from a specific clinic site or for a particular assay).

- Assess Impact: Use the metrics in Table 1 (Key Data Quality Metrics for Research Linkage Feasibility) to quantify the problem. A high count of empty values or a low Data Completeness Score confirms the severity [27] [28].

- Implement Fixes:

- Standardize Collection: Re-train staff on protocols and implement mandatory field checks in Electronic Lab Notebooks (ELNs) and Clinical Data Management Systems [26].

- Define Business Rules: Clearly classify data fields as "mandatory for linkage" versus "optional" [29].

- Consider Imputation Carefully: For subsequent analysis, statistical imputation of missing values may be an option, but it must be documented and justified, as it can introduce bias [25].
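The profiling and pattern-identification steps above can be sketched in a few lines. This example assumes records are available as dictionaries; the field names used for illustration (`sample_id`, `collection_date`, `site`) and the function names are hypothetical. The first function gives the percentage of missing values per field, and the second breaks missingness down by a grouping field such as clinic site to expose systematic gaps.

```python
from collections import defaultdict

def is_missing(value):
    """Treat None and blank strings as missing."""
    return value is None or (isinstance(value, str) and value.strip() == "")

def missing_rates(records, fields):
    """Percentage of records with a missing value, per field."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {field: 100.0 * sum(is_missing(r.get(field)) for r in records) / total
            for field in fields}

def missing_by_group(records, field, group_field):
    """Percentage of missing values for one field, split by a grouping field."""
    counts = defaultdict(lambda: [0, 0])  # group -> [missing, total]
    for r in records:
        group = r.get(group_field)
        counts[group][1] += 1
        if is_missing(r.get(field)):
            counts[group][0] += 1
    return {group: 100.0 * missing / total
            for group, (missing, total) in counts.items()}
```

A missing rate concentrated in one site or one assay points to a systematic cause, which usually calls for a process fix, not imputation.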

Q2: We merged datasets from two different clinical sites, but the same patient appears with different identifiers. Now our linked data has duplicates. How do we resolve this?

- Diagnosis: This is a problem of inconsistent data (different formats/rules per site) leading to a failure in uniqueness [25] [29]. The underlying entity (the patient) is not represented by a single, unique record.

- Troubleshooting Guide:

- Measure Duplication: Calculate the "Duplicate Record Percentage" for key entities (Patient ID, Sample ID) [27].

- Conduct Rule-Based Deduplication:

- Create a matching logic using multiple fields (e.g., name, date of birth, site code) to identify records likely representing the same entity.

- Use deterministic or probabilistic matching algorithms to cluster duplicate records.

- Establish a Master Reference: Create a golden record for each unique patient and link all related data to it. Maintain this master list for all future linkages.

- Prevent Recurrence: Implement and enforce a standard global identifier schema (e.g., a study-specific subject ID) across all sites and laboratory systems before data collection begins [25].
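The deterministic branch of the deduplication steps above can be sketched as follows. It assumes each record carries `name`, `dob`, and a site-specific `local_id`; these field names, the `SUBJ-` identifier format, and the function names are hypothetical. Records are clustered on a normalized name and date of birth, and each cluster is assigned one master identifier.

```python
from collections import defaultdict

def match_key(record):
    """Deterministic match key: case- and whitespace-normalized name plus DOB."""
    name = " ".join(record["name"].lower().split())
    return (name, record["dob"])

def duplicate_percentage(records):
    """Share of records that duplicate an entity already seen, in percent."""
    total = len(records)
    if total == 0:
        return 0.0
    unique = len({match_key(r) for r in records})
    return 100.0 * (total - unique) / total

def build_master_index(records):
    """Map each master subject ID to the local IDs that refer to the same person."""
    clusters = defaultdict(list)
    for rec in records:
        clusters[match_key(rec)].append(rec["local_id"])
    return {f"SUBJ-{i:04d}": sorted(ids)
            for i, (_, ids) in enumerate(sorted(clusters.items()), start=1)}
```

Exact matching misses typos and name changes, so pairs that remain ambiguous would still need probabilistic matching or manual review.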

Q3: Our machine learning model, trained on pristine lab data, performs poorly when predicting outcomes based on real-world field data. Could data quality be the cause?

- Diagnosis: Very likely. This disconnect often stems from a mismatch in data accuracy, consistency, and validity between the controlled lab environment and the messy field environment [25]. Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are not supported by AI-ready data [25].

- Troubleshooting Guide:

- Audit Field Data Against Lab Standards: Check field data for violations of assumptions held in the lab (e.g., measurement units, time scales, detection limits). Use "Data Transformation Error" rates as a metric [27].

- Check for Temporal Decay: Field data can become outdated quickly. Measure "Data Update Delays" to ensure you're using relevant information [27].

- Assess for Hidden Bias: The field data may be biased (e.g., under-representing certain patient subgroups), which the lab data did not account for [25]. Analyze the demographic and clinical characteristic distributions.

- Remediate with Continuous Monitoring: Implement a data observability tool or pipeline checks to monitor the quality of incoming field data against your lab-derived model's expected input specifications [25].

Q4: We are designing a new study to link genomic lab data with longitudinal patient health records. What is the most critical data quality factor to prioritize from the start?

- Answer: While all dimensions are important, completeness and consistency are paramount for linkage feasibility. You cannot link data that is missing the necessary join keys (e.g., a stable participant ID). Furthermore, inconsistent representation of variables (like using "M/F" in one dataset and "Male/Female" in another) will break automated linkage scripts.

- Preventive Protocol:

- Implement a Data Governance Plan: Before collecting the first sample, define clear data standards, formats, and mandatory fields for all systems [25] [28].

- Use a Structured Framework: Define your study using the PICO framework (Population, Intervention/Indicator, Comparison, Outcome) to explicitly identify the key data elements you must have [30]. For a linkage study, "Population" must include a reliable linking identifier.

- Automate Validation at Entry: Build validation rules (for format, range, and type) into data entry forms to prevent invalid data at the source [29] [28].
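Entry-time validation for format, range, and type can be sketched as a small rule table. The field names, the `SUBJ-0001` identifier format, and the sex coding below are hypothetical illustrations; the serum pH range is only an example value. Each rule returns True when the value is acceptable.

```python
import re

# Hypothetical rules: one callable per field, returning True for a valid value.
RULES = {
    "study_id": lambda v: isinstance(v, str)
                          and re.fullmatch(r"SUBJ-\d{4}", v) is not None,  # format
    "sex": lambda v: v in {"M", "F"},                                      # allowed values
    "serum_ph": lambda v: isinstance(v, (int, float))
                          and not isinstance(v, bool)
                          and 6.5 <= v <= 8.0,                             # type and range
}

def validate(record, rules=RULES):
    """Return the names of fields that are absent or fail their rule."""
    return [field for field, is_valid in rules.items()
            if field not in record or not is_valid(record[field])]
```

A form handler could call `validate` before saving and reject the entry while the returned list is non-empty, which is how inconsistent codings such as "Male" versus "M" would be caught at the source.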

Quantifying the Problem: Key Data Quality Metrics

To move from qualitative description to actionable science, you must measure data quality. Below are key metrics adapted for a research context [27] [28].

Table 1: Key Data Quality Metrics for Research Linkage Feasibility

| Metric Name | Description | Calculation Example | Impact on Linkage Feasibility |
|---|---|---|---|
| Data Completeness Score | Percentage of required fields populated with non-null values [29] [28]. | ( # of populated required fields / Total # of required fields across all records ) x 100. | Low score directly reduces the number of records available for linkage and analysis. |
| Duplicate Record Percentage | Proportion of records that refer to the same real-world entity [27]. | ( # of duplicate participant IDs / Total # of IDs ) x 100. | Inflates subject counts, corrupts statistical analysis, and misrepresents the population. |
| Data Consistency Rate | Percentage of times a data item is the same across linked sources [29] [28]. | ( # of matching biomarker values between lab and EHR / Total # of comparisons ) x 100. | Low rates indicate reconciliation is needed before datasets can be trusted as unified. |
| Data Time-to-Value | The latency between data collection and its availability for linkage/analysis [27]. | Average time from sample assay to result entry in linkable database. | High latency reduces data freshness, making linkages less relevant to current conditions. |
| Data Transformation Error Rate | Frequency of failures when converting data to a unified format for linkage [27]. | ( # of failed format standardization scripts / Total # of scripts run ) x 100. | High rates block the data integration process entirely, preventing linkage. |
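As one worked instance of the metrics above, the Data Consistency Rate can be computed as follows. The sketch assumes both sources are keyed by a shared sample identifier and hold numeric values in the same unit; the function name and the `tolerance` parameter are assumptions added for illustration.

```python
def consistency_rate(lab_values, ehr_values, tolerance=0.0):
    """Percentage of shared sample IDs whose values agree within a tolerance.

    Returns None when the two sources share no sample IDs.
    """
    shared = lab_values.keys() & ehr_values.keys()
    if not shared:
        return None
    matches = sum(1 for key in shared
                  if abs(lab_values[key] - ehr_values[key]) <= tolerance)
    return 100.0 * matches / len(shared)
```

Only identifiers present in both sources enter the denominator, so a low overlap should be reported alongside the rate.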

Experimental Protocols for Assessing and Ensuring Data Quality

Protocol 1: Pre-Study Data Quality Requirements Definition

This protocol must be completed before participant recruitment or sample collection begins.

- Convene a Data Governance Team: Include lab scientists, clinical researchers, data managers, and biostatisticians [28].

- Define the "Golden Record": For the core entity (e.g., study participant), specify the exact set of attributes that constitute a complete and unique record (e.g., StudyID, Informed Consent Date, Baseline Demographics) [29].

- Specify Business Rules: Document allowed values, formats, and ranges for every key variable. For example, "Serum pH must be a numerical value between 6.5 and 8.0" [29] [28].

- Design the Linkage Key: Establish the primary identifier(s) for linking datasets. Plan for secure hashing or tokenization if using personal health information.

- Document in a Data Management Plan (DMP): This living document will be the reference standard for all data quality activities [28].
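The tokenization mentioned under "Design the Linkage Key" can be sketched with a keyed hash. This example assumes HMAC-SHA256 with a secret key shared only among the parties performing the linkage; the function name `linkage_token` and the normalization rules are hypothetical, and key management is outside the scope of the sketch.

```python
import hashlib
import hmac

def linkage_token(identifier, secret_key):
    """Derive a pseudonymous linkage token from an identifier with HMAC-SHA256.

    identifier: the raw identifier string (for example, a hospital record number)
    secret_key: bytes known only to the parties performing the linkage
    """
    # Normalize so that trivial formatting differences yield the same token.
    normalized = identifier.strip().upper().encode("utf-8")
    return hmac.new(secret_key, normalized, hashlib.sha256).hexdigest()
```

A keyed hash is used in place of a plain hash because identifiers with a small value space can otherwise be recovered by hashing every candidate. Tokens of this kind are generally still treated as pseudonymized, not anonymized, data.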

Protocol 2: Ongoing Data Quality Monitoring During a Study

This protocol ensures quality is maintained throughout the data lifecycle.

- Automate Profiling Checks: Schedule weekly automated scripts to run against incoming data, calculating the metrics in Table 1 [25].

- Implement Threshold Alerts: Set thresholds for each metric (e.g., "Alert if completeness for Sample ID falls below 99%"). Configure alerts to notify data stewards [28].

- Perform Routine Audits: Manually audit a random 5% sample of records each month against the business rules defined in Protocol 1.

- Maintain an Issue Log: Document all data quality incidents, their root cause, and the remediation action taken. This log is critical for regulatory compliance and scientific transparency [25].
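The threshold alerts in this protocol can be sketched as a simple comparison of computed metrics against configured limits. The metric names and the function `breached_thresholds` are hypothetical; the 99% completeness limit mirrors the example above, while the duplicate limit shown in the usage note is an assumed value.

```python
def breached_thresholds(metrics, thresholds):
    """Return one alert message per metric that is missing or outside its limit.

    thresholds maps metric name -> ("min" or "max", limit).
    """
    alerts = []
    for name, (kind, limit) in thresholds.items():
        value = metrics.get(name)
        if value is None:
            alerts.append(f"{name}: metric missing")
        elif kind == "min" and value < limit:
            alerts.append(f"{name}: {value} is below minimum {limit}")
        elif kind == "max" and value > limit:
            alerts.append(f"{name}: {value} is above maximum {limit}")
    return alerts
```

For example, `thresholds = {"sample_id_completeness_pct": ("min", 99.0), "duplicate_pct": ("max", 1.0)}` would be evaluated after each scheduled profiling run, with any returned messages forwarded to the data stewards and recorded in the issue log.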

Protocol 3: Pre-Linkage Data Reconciliation

This protocol must be executed immediately before performing the final linkage for analysis.

- Isolate and Cleanse: Extract the datasets to be linked into a dedicated cleaning environment.

- Run a Comprehensive Quality Report: Generate a final report using all metrics from Table 1 for each source dataset.

- Reconcile Inconsistencies: For every variable present in both datasets, identify inconsistent values. Form a committee to determine the correct value based on source verification rules (e.g., "Prioritize lab instrument readout over manual nurse entry").

- Final Deduplication: Apply your matching logic one final time to ensure entity uniqueness within and across datasets.

- Document the Process: The methods and decisions from this reconciliation protocol must be detailed in the methods section of any resulting publication.
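
The reconciliation step can be illustrated with a small Python sketch that applies a source-priority rule like the one quoted above (instrument readout over manual entry) and logs every conflict so the decisions can be documented. The `SOURCE_PRIORITY` table, the `reconcile` function, and the record layout are hypothetical; in practice a committee would define the priority rules.

```python
# Hypothetical source ranking: the lower number wins when values conflict.
SOURCE_PRIORITY = {"lab_instrument": 0, "manual_entry": 1}

def reconcile(observations):
    """Resolve conflicting values per (participant, variable) by source priority.

    `observations` is a list of dicts with keys: participant, variable, value, source.
    Returns the chosen values plus a log of every conflict for documentation.
    """
    grouped = {}
    for obs in observations:
        grouped.setdefault((obs["participant"], obs["variable"]), []).append(obs)

    resolved, conflict_log = {}, []
    for key, candidates in grouped.items():
        best = min(candidates, key=lambda o: SOURCE_PRIORITY[o["source"]])
        resolved[key] = best["value"]
        distinct = {o["value"] for o in candidates}
        if len(distinct) > 1:  # the sources disagree, so record the decision
            conflict_log.append({"key": key, "kept": best["value"],
                                 "kept_source": best["source"],
                                 "seen": sorted(distinct)})
    return resolved, conflict_log

observations = [
    {"participant": "P01", "variable": "glucose_mmol_l", "value": 5.4, "source": "manual_entry"},
    {"participant": "P01", "variable": "glucose_mmol_l", "value": 5.9, "source": "lab_instrument"},
    {"participant": "P02", "variable": "glucose_mmol_l", "value": 6.1, "source": "manual_entry"},
]
resolved, conflict_log = reconcile(observations)
print(resolved)
print(conflict_log)
```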

Visualizing the Data Linkage Challenge and Solution

Diagram 1: How Poor Data Quality Blocks Lab-to-Field Linkage

This diagram illustrates the critical failure points in the research pipeline where data quality issues can make linkage infeasible.

Diagram 2: Data Quality Assurance Workflow for Linkage Readiness

This workflow provides a systematic path to diagnose and remedy data quality issues before linkage is attempted.

The Scientist's Toolkit: Essential Reagents for Data Linkage

This toolkit lists essential methodological "reagents" for ensuring data quality in linkage studies.

Table 2: Research Reagent Solutions for Data Quality

| Tool/Reagent | Primary Function | Application in Lab-Field Linkage |
|---|---|---|
| Data Profiling Software | Automatically scans datasets to discover patterns, statistics, and anomalies (e.g., % nulls, value distributions) [25] [26]. | Provides the initial diagnostic metrics (Table 1) for both lab and field datasets before linkage is attempted. |
| Business Rules Engine | A system to define and execute validation rules (e.g., "Subject_Age must be > 18") [29] [28]. | Enforces consistency and validity at the point of data entry or during integration, preventing garbage-in. |
| Deterministic Matching Logic | A defined set of rules for identifying duplicates (e.g., "Match if FirstName, LastName, and DOB are identical") [29]. | The first-pass method for deduplicating records within a single dataset (e.g., cleaning the clinical roster). |
| Probabilistic Matching Algorithm | Uses statistical likelihood (weighted scores across multiple fields) to identify potential duplicate records [29]. | Crucial for linking datasets without a perfect common key, where minor discrepancies (e.g., "Jon" vs "John") exist. |
| Data Lineage Tracker | Documents the origin, movement, transformation, and dependencies of data over its lifecycle [25]. | Critical for audit trails, reproducibility, and understanding how a final linked variable was derived from raw sources. |
| Standardized Vocabulary (Ontology) | A controlled set of terms and definitions (e.g., SNOMED CT, LOINC) [25]. | Ensures consistency by providing a common language for encoding diagnoses, lab tests, and observations across disparate systems. |

Methodological Approaches: Techniques for Effective Laboratory-Data Integration

Core Principles and Comparative Analysis

This section defines the fundamental concepts of record linkage and compares the performance characteristics of deterministic and probabilistic methods, providing a foundation for method selection in research.

What are the basic definitions of deterministic and probabilistic record linkage? Deterministic linkage classifies record pairs as matches based on predefined, exact agreement rules on identifiers (e.g., social security number, or first name, surname, and date of birth) [31]. It operates on a binary decision framework. Probabilistic linkage, most commonly based on the Fellegi-Sunter model, uses statistical theory to calculate match weights (scores). These weights aggregate the evidence from multiple, potentially imperfect identifiers to estimate the likelihood that two records belong to the same entity [32] [31].

What are the key performance trade-offs between deterministic and probabilistic linkage? A simulation study evaluating 96 real-life scenarios found that each method has distinct strengths. Deterministic linkage typically achieves higher Positive Predictive Value (PPV), meaning a lower rate of false matches. In contrast, probabilistic linkage generally achieves higher sensitivity, meaning it misses fewer true matches [33]. The choice involves a direct trade-off between linkage precision and completeness.

Table 1: Comparative Performance of Linkage Methods [33]

| Performance Metric | Deterministic Linkage | Probabilistic Linkage | Key Implication |
|---|---|---|---|
| Sensitivity | Lower | Higher | Probabilistic finds more true matches. |
| PPV (Precision) | Higher | Lower | Deterministic creates fewer false links. |
| Data Quality Sweet Spot | Excellent quality data (<5% error) | Poorer quality, real-world data | Method choice is data-dependent. |
| Computational Speed | Faster (<1 minute in tested case) | Slower (2 min to 2 hours) | Deterministic is more resource-efficient. |

How do data quality and identifier characteristics influence method choice? The intrinsic rate of missing data and errors in the linkage variables is the key deciding factor [33]. Deterministic linkage is a valid and efficient choice only when data quality is exceptionally high, with error rates below 5% [33] [34]. Probabilistic linkage is the superior and more robust choice for typical real-world data containing errors, typos, missing values, or where only non-unique identifiers (like name and address) are available. Its ability to quantify match uncertainty and use partial agreement is critical in these settings [31].

What is a critical misconception about probabilistic linkage? A common myth is that probabilistic linkage outputs a direct probability that a record pair is a match. In reality, the Fellegi-Sunter model calculates match weights, which are scores that correlate with the likelihood of a match under certain assumptions. These weights are not formal probabilities, and the method is not inherently "imprecise" [31]. With a unique, error-free identifier, probabilistic linkage can, in theory, achieve perfect accuracy.
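
To make the distinction between weights and probabilities concrete, the standard Fellegi-Sunter field weights can be written out. Here $m_i$ denotes the probability that field $i$ agrees for a true match and $u_i$ the probability that it agrees for a non-match; the numeric values in the worked line are assumed purely for illustration.

```latex
\[
\begin{aligned}
w_i^{+} &= \log_2\frac{m_i}{u_i}
  && \text{(field } i \text{ agrees)} \\
w_i^{-} &= \log_2\frac{1 - m_i}{1 - u_i}
  && \text{(field } i \text{ disagrees)} \\
W &= \sum_i w_i
  && \text{(each } w_i \text{ is } w_i^{+} \text{ or } w_i^{-}\text{)} \\[6pt]
% Illustrative values (assumed): m = 0.95, u = 0.01
w^{+} &= \log_2\frac{0.95}{0.01} \approx 6.57, &
w^{-} &= \log_2\frac{0.05}{0.99} \approx -4.31
\end{aligned}
\]
```

The total $W$ is a sum of log-likelihood ratios, so it is unbounded and can be negative. Turning it into a match probability would additionally require a prior on the proportion of true matches among the compared pairs.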

Table 2: Error Trade-Offs in Probabilistic Linkage [34]

| Linkage Threshold Setting | False Match Rate | Missed True Match Rate | Use Case Example |
|---|---|---|---|
| Conservative (High threshold) | <1% | ~40% | Research where data purity is paramount. |
| Balanced | Moderate | Moderate | General purpose longitudinal studies. |
| Liberal (Low threshold) | ~30% | ~10% | Public health screening (e.g., capturing 90% of matches for cancer screening programs). |
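
The direction of this trade-off can be reproduced on a toy example. The sketch below sweeps a single score threshold over a handful of invented scored pairs and reports the false match rate (share of accepted links that are wrong) and the missed match rate (share of true matches left unlinked). The scores and the `error_rates` helper are illustrative assumptions and are not meant to reproduce the figures in the table.

```python
def error_rates(scored_pairs, threshold):
    """Return (false match rate, missed match rate) for a score threshold.

    `scored_pairs` is a list of (score, is_true_match) tuples.
    False match rate: share of accepted links that are not true matches.
    Missed match rate: share of true matches that fall below the threshold.
    """
    links = [is_match for score, is_match in scored_pairs if score >= threshold]
    true_total = sum(1 for _, is_match in scored_pairs if is_match)
    false_match_rate = (links.count(False) / len(links)) if links else 0.0
    missed_rate = ((true_total - links.count(True)) / true_total) if true_total else 0.0
    return false_match_rate, missed_rate

# Toy scores, invented for illustration only.
pairs = [(12.0, True), (10.5, True), (9.0, True), (7.5, False), (6.0, True),
         (4.0, False), (3.5, True), (1.0, False), (-2.0, False), (-5.0, False)]

for threshold in (10.0, 5.0, 0.0):
    fmr, mmr = error_rates(pairs, threshold)
    print(f"threshold={threshold:>5}: false match rate={fmr:.2f}, missed match rate={mmr:.2f}")
```

On this toy data, lowering the threshold from 10 to 0 drives the missed match rate down while the false match rate rises, which is the same pattern the table describes.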

Experimental Protocols and Implementation

This section provides detailed, step-by-step methodologies for implementing probabilistic and deterministic linkage, including advanced handling of missing data.

What is a standard protocol for implementing a probabilistic Fellegi-Sunter linkage? The following protocol outlines the core steps for a probabilistic linkage project, such as deduplicating a health information exchange database or linking clinical trial records to administrative claims [32].

- Preprocessing & Standardization: Clean and format all identifier fields (e.g., names, dates, addresses) consistently across datasets. This includes correcting case, removing punctuation, and standardizing date formats [34].

- Blocking: Apply blocking strategies to cut the otherwise computationally prohibitive number of pairwise comparisons. For example, only compare records that share the same first name initial and year of birth [32] [35]. Because a single blocking key misses true matches whose blocking fields contain errors, multiple blocking passes (e.g., on different variable combinations) are often used to ensure completeness.

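- Field Comparison & Weight Calculation: Within each block, compare record pairs on selected matching variables. For each variable, assign an agreement or disagreement weight derived from its m-probability (the probability that the field agrees when the pair is a true match) and its u-probability (the probability that it agrees when the pair is not a match).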

- Score Record Pairs: For each record pair, sum the agreement or disagreement weights across all comparison variables to obtain a total match score [35].

- Threshold Setting & Classification: Establish upper and lower score thresholds. Pairs above the upper threshold are classified as links, below the lower as non-links, and those in between are potential links for clerical review [31].

- Validation: Assess linkage quality using metrics like sensitivity, PPV, and F1-score against a manually reviewed "gold standard" subset of data [32].
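
The protocol steps above can be sketched end to end in Python. This is a simplified illustration rather than a production linkage engine: the m- and u-probabilities in `FIELDS` are assumed values (in practice they are estimated from the data), fields are compared by exact equality only, the thresholds in `classify` are arbitrary placeholders, and summing weights assumes the fields are conditionally independent. All function names are hypothetical.

```python
import math
from itertools import combinations

# Illustrative m/u probabilities (assumed values, not estimates from real data).
FIELDS = {
    "surname": {"m": 0.95, "u": 0.01},
    "first_name": {"m": 0.90, "u": 0.05},
    "dob": {"m": 0.97, "u": 0.001},
}

def standardize(record):
    """Lowercase and strip string fields so comparisons are consistent."""
    return {k: v.strip().lower() if isinstance(v, str) else v for k, v in record.items()}

def block_key(record):
    """Blocking key from the text's example: first-name initial plus year of birth."""
    return (record["first_name"][:1], record["dob"][:4])

def match_score(a, b):
    """Sum agreement or disagreement weights (log2 likelihood ratios) over fields."""
    total = 0.0
    for field, p in FIELDS.items():
        if a[field] == b[field]:
            total += math.log2(p["m"] / p["u"])
        else:
            total += math.log2((1 - p["m"]) / (1 - p["u"]))
    return total

def classify(score, lower=0.0, upper=10.0):
    """Three-way decision; the thresholds here are arbitrary placeholders."""
    if score >= upper:
        return "link"
    if score < lower:
        return "non-link"
    return "clerical review"

def link(records):
    """Standardize, block, score, and classify all within-block record pairs."""
    records = [standardize(r) for r in records]
    blocks = {}
    for idx, rec in enumerate(records):
        blocks.setdefault(block_key(rec), []).append(idx)
    results = []
    for members in blocks.values():
        for i, j in combinations(members, 2):
            score = match_score(records[i], records[j])
            results.append((i, j, round(score, 2), classify(score)))
    return results

records = [
    {"first_name": "John", "surname": "Smith", "dob": "1980-01-31"},
    {"first_name": "john ", "surname": "SMITH", "dob": "1980-01-31"},
    {"first_name": "Jon", "surname": "Smith", "dob": "1980-01-31"},
    {"first_name": "Jane", "surname": "Doe", "dob": "1980-07-04"},
    {"first_name": "Mary", "surname": "Jones", "dob": "1975-03-12"},
    {"first_name": "John", "surname": "Smith", "dob": "1980-01-13"},
]
results = link(records)
for row in results:
    print(row)
```

In this toy run the record for "Mary Jones" is never compared with anyone because it sits alone in its block, which shows both the saving that blocking provides and why a second blocking pass on different variables is often added.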

What advanced protocol handles missing data in probabilistic linkage? A data-adaptive FS model protocol improves upon the common but flawed "missing as disagreement" (MAD) approach [32].

- Assume Missing at Random (MAR): Assume that values are missing at random conditional on the true match status. This avoids the bias introduced by treating missing values as direct disagreements [32].

- Data-Driven Field Selection: Instead of relying solely on expert opinion, use algorithms to select the optimal subset of matching fields that maximize discriminative power while minimizing redundancy and dependence between fields [32].

- Parameter Estimation: Use the Expectation-Maximization (EM) algorithm to estimate m- and u-probabilities from the data itself, accounting for the MAR assumption in the selected fields [32].

- Performance Evaluation: Validate the model using the F1-score (the harmonic mean of sensitivity and PPV). Studies show that combining the MAR assumption with data-driven field selection optimizes the F1-score across diverse use cases [32].
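
The effect of the missing-data assumption on a pair's score, together with the F1 calculation, can be shown with a short Python sketch. It is a simplification and not the data-adaptive model from the cited study: the m/u values are assumed rather than estimated by EM, and a missing field is simply skipped (a likelihood ratio of 1), which is appropriate only if missingness is unrelated to match status. The names `score` and `f1_score` are hypothetical.

```python
import math

# Assumed (m, u) values for illustration; a real project would estimate them, e.g. via EM.
FIELDS = {"surname": (0.95, 0.01), "dob": (0.97, 0.001), "postcode": (0.85, 0.02)}

def score(a, b, missing_as_disagreement):
    """Fellegi-Sunter style score with two ways of handling missing fields."""
    total = 0.0
    for field, (m, u) in FIELDS.items():
        if a.get(field) is None or b.get(field) is None:
            if missing_as_disagreement:
                total += math.log2((1 - m) / (1 - u))  # MAD: penalize the gap
            # Otherwise the field is skipped and contributes a weight of 0.
            continue
        if a[field] == b[field]:
            total += math.log2(m / u)
        else:
            total += math.log2((1 - m) / (1 - u))
    return total

def f1_score(true_pos, false_pos, false_neg):
    """Harmonic mean of PPV (precision) and sensitivity (recall)."""
    ppv = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    sens = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return 2 * ppv * sens / (ppv + sens) if ppv + sens else 0.0

a = {"surname": "smith", "dob": "1980-01-31", "postcode": None}
b = {"surname": "smith", "dob": "1980-01-31", "postcode": "ab1 2cd"}
print(round(score(a, b, missing_as_disagreement=True), 2))   # MAD score
print(round(score(a, b, missing_as_disagreement=False), 2))  # missing field skipped
print(round(f1_score(true_pos=8, false_pos=2, false_neg=2), 2))
```

Under MAD the pair is penalized for a postcode that was never recorded, so pairs with incomplete records drift toward the non-link region even when every observed field agrees.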

What is the protocol for a hierarchical deterministic linkage? This protocol, used by agencies like the Canadian Institute for Health Information, applies a cascade of exact-match rules [34].

- Define Rule Hierarchy: Create a sequence of deterministic matching rules, ordered from the most restrictive and reliable (e.g., rules involving unique IDs) to the least.

- Execute Sequential Passes:

  - Pass 1: Link all records agreeing exactly on the most reliable identifiers (e.g., health card number, full date of birth, sex).

  - Pass 2: Link remaining unlinked records using a slightly relaxed rule (e.g., allowing a minor typo in health card number but exact agreement on other fields).

  - Pass N: Continue with progressively looser rules (e.g., agreement on first initial, last name, and full date of birth).

- Remove Linked Records: After each pass, remove successfully linked records from the pool for subsequent passes to prevent duplicate linking.

- Assign Match Grade: Record the "match rank" (the pass number) as an indicator of linkage confidence [31].
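
A cascade of this kind can be sketched in Python as follows. The rule list, field names, and the choice to accept only unambiguous one-to-one matches are assumptions for illustration and do not describe any agency's actual rules; the typo-tolerant pass mentioned above is omitted to keep every rule an exact match.

```python
# Hypothetical rule cascade, most restrictive first.
RULES = [
    ("health_card", "dob", "sex"),
    ("surname", "first_name", "dob"),
    ("surname", "first_initial", "dob"),
]

def key_for(record, fields):
    """Build an exact-match key; return None if any field is missing."""
    values = tuple(record.get(f) for f in fields)
    return None if any(v in (None, "") for v in values) else values

def hierarchical_link(left, right):
    """Link records in sequential passes; returns (left_id, right_id, match_rank)."""
    links = []
    left_pool, right_pool = dict(left), dict(right)
    for rank, fields in enumerate(RULES, start=1):
        index = {}
        for rid, rec in right_pool.items():
            key = key_for(rec, fields)
            if key is not None:
                index.setdefault(key, []).append(rid)
        for lid in list(left_pool):
            key = key_for(left_pool[lid], fields)
            candidates = index.get(key, []) if key is not None else []
            candidates = [rid for rid in candidates if rid in right_pool]
            if len(candidates) == 1:       # accept only unambiguous matches
                rid = candidates[0]
                links.append((lid, rid, rank))
                del left_pool[lid]         # remove linked records from later passes
                del right_pool[rid]
    return links

def person(card, dob, sex, surname, first_name):
    """Helper that also derives the first initial used by the loosest rule."""
    return {"health_card": card, "dob": dob, "sex": sex, "surname": surname,
            "first_name": first_name, "first_initial": first_name[:1]}

left = {
    "L1": person("1234567890", "1980-01-31", "F", "doe", "jane"),
    "L2": person(None, "1975-03-12", "M", "jones", "robert"),
    "L3": person(None, "1990-09-09", "F", "lee", "amy"),
}
right = {
    "R1": person("1234567890", "1980-01-31", "F", "doe", "jane"),
    "R2": person(None, "1975-03-12", "M", "jones", "rob"),
    "R3": person(None, "1991-09-09", "F", "lee", "amy"),
}
links = hierarchical_link(left, right)
print(links)
```

The third element of each result is the pass number, so downstream analyses can restrict to high-confidence links or run sensitivity analyses by match rank.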

Visualizing Linkage Workflows

The following diagrams illustrate the logical structure and decision pathways of the core linkage methodologies.

Title: Record Linkage Decision Workflow

Title: Blocking Strategy to Reduce Comparisons

Title: Fellegi-Sunter Model Process

The Researcher's Toolkit: Essential Components for Record Linkage

Table 3: Research Reagent Solutions for Record Linkage

| Tool/Component | Primary Function | Application Notes |
|---|---|---|
| String Comparators (Jaro-Winkler, Levenshtein) | Quantifies similarity between text strings (e.g., names, addresses) allowing for typos and minor spelling variations [34]. | Critical for probabilistic linkage. Jaro-Winkler is often preferred for names. |
| Phonetic Encoding (Soundex, Metaphone) | Reduces names to a phonetic code, matching names that sound alike but are spelled differently (e.g., "Smith" vs. "Smyth") [34]. | Useful in a preprocessing or parallel matching step to catch variations. |
| Blocking Variables | Fields used to partition data into smaller, comparable sets, reducing N² comparisons to a feasible number [32] [35]. | Common choices: year of birth, postal code, first name initial. Multiple blocking strategies are often combined. |
| Fellegi-Sunter Model Software (e.g., RecordLinkage in R) | Implements the core probabilistic linkage algorithm, including weight calculation and estimation [36]. | The foundational statistical model for most probabilistic linkages in health research. |
| Bloom Filters with Similarity Comparisons | A privacy-preserving technique that encodes identifiers into bit arrays, allowing for approximate similarity comparisons (e.g., Sørensen-Dice) without exposing raw data [35]. | Essential for secure, multi-party linkages. Jaccard similarity on Bloom filters is a common effective method [35]. |
| Tokenization Service | Generates a persistent, de-identified token from patient identifiers, enabling privacy-preserving linkage across different databases over time [37]. | Key for linking clinical trial data to real-world data sources like EHRs and claims for long-term follow-up [37]. |
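
Two of the components in the table, a string comparator and Bloom-filter encoding with a Sørensen-Dice comparison, are small enough to sketch directly in Python. This is a teaching sketch under stated assumptions: the Bloom filter is represented as a set of bit positions rather than a packed bit array, the filter size, number of hash functions, and the `secret` salt are arbitrary illustrative choices, and the construction has not been hardened against re-identification attacks.

```python
import hashlib

def levenshtein(a, b):
    """Edit distance: minimum insertions, deletions, and substitutions to turn a into b."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(previous[j] + 1,                # deletion
                               current[j - 1] + 1,             # insertion
                               previous[j - 1] + (ca != cb)))  # substitution
        previous = current
    return previous[-1]

def bigrams(text):
    """Character bigrams of the lowercased text, padded at both ends."""
    padded = f"_{text.lower()}_"
    return {padded[i:i + 2] for i in range(len(padded) - 1)}

def bloom_encode(text, size=1024, num_hashes=2, secret="demo-salt"):
    """Set of bit positions switched on for the bigrams of `text`."""
    bits = set()
    for gram in bigrams(text):
        for k in range(num_hashes):
            digest = hashlib.sha256(f"{secret}|{k}|{gram}".encode("utf-8")).hexdigest()
            bits.add(int(digest, 16) % size)
    return bits

def dice(bits_a, bits_b):
    """Sørensen-Dice coefficient between two sets of bit positions."""
    if not bits_a and not bits_b:
        return 1.0
    return 2 * len(bits_a & bits_b) / (len(bits_a) + len(bits_b))

print(levenshtein("jon", "john"))
print(round(dice(bloom_encode("john"), bloom_encode("jon")), 2))
print(round(dice(bloom_encode("john"), bloom_encode("mary")), 2))
```

Because similar strings share bigrams, their encodings share bit positions, so parties can compare encoded identifiers for approximate similarity without exchanging the raw names.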

Troubleshooting Guides and FAQs

Linkage yields too many false matches (low precision). What should I check?

- Review Thresholds: Your upper match threshold may be set too low. Increase the threshold to make the linking criteria more stringent [31].

- Check Identifier Quality: Examine the discriminating power of your matching variables. Common identifiers like gender or common last names provide little power on their own. Introduce more unique variables if possible [33].

- Verify Blocking: If blocks are too large or poorly defined, many non-matching pairs are being compared, increasing false match potential. Refine blocking criteria (e.g., use full date of birth instead of just year) [32].

- Assess Data Preprocessing: Inconsistent formatting (e.g., "St." vs. "Street") can cause false agreements. Standardize your data more rigorously [34].
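
To see how much a blocking refinement changes the workload, the number of candidate pairs under each blocking key can be counted before any scoring is run. The sketch below compares year-of-birth blocking with full date-of-birth blocking on invented records; `candidate_pairs` is a hypothetical helper.

```python
from collections import Counter

def candidate_pairs(records, key_func):
    """Number of within-block pairs generated by a blocking key."""
    sizes = Counter(key_func(r) for r in records)
    return sum(n * (n - 1) // 2 for n in sizes.values())

# Invented records for illustration.
records = [
    {"dob": "1980-01-31"}, {"dob": "1980-01-31"}, {"dob": "1980-06-15"},
    {"dob": "1980-11-02"}, {"dob": "1981-02-20"}, {"dob": "1981-02-20"},
]
all_pairs = len(records) * (len(records) - 1) // 2
by_year = candidate_pairs(records, lambda r: r["dob"][:4])
by_full_dob = candidate_pairs(records, lambda r: r["dob"])
print(all_pairs, by_year, by_full_dob)
```

Tighter blocks reduce the number of non-matching pairs that get scored, but they also exclude true matches whose date of birth was recorded with an error, so the refinement should be checked against sensitivity as well as precision.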

Linkage is missing too many true matches (low sensitivity/recall). What can I do?

- Lower Thresholds: Your upper threshold may be too high. Reduce it, or reduce the lower threshold so that more borderline pairs are routed to clerical review rather than rejected outright [31].

- Implement Approximate Matching: Switch from exact string matching to approximate comparators (e.g., Jaro-Winkler) for names and addresses to catch typos [34] [35].