A Practical Framework for Evaluating Ecotoxicity Studies: Enhancing Reliability, Relevance, and Regulatory Confidence for Biomedical Research


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for evaluating the reliability and relevance of ecotoxicity studies, a critical component in environmental risk assessment and chemical safety. We begin by establishing the foundational principles and regulatory drivers demanding robust study appraisal [1] [2]. The article then details methodological applications, including systematic reliability frameworks and predictive computational models like QSAR and machine learning [7] [9]. We address common challenges in study evaluation and mixture toxicity assessment, offering troubleshooting and optimization strategies [4] [6]. Finally, we compare and validate different predictive models and appraisal tools, guiding professionals in selecting the most appropriate methods for their needs. This integrated approach aims to enhance the transparency, consistency, and regulatory acceptance of toxicity data used in biomedical and environmental sciences.

Foundations of Ecotoxicity Study Appraisal: Understanding Core Principles and Regulatory Imperatives

The Critical Role of Study Reliability in Ecological Risk Assessment and Toxicity Value Development

The foundation of robust ecological risk assessment (ERA) and the derivation of defensible toxicity values rests upon the quality of the underlying ecotoxicity studies. As the field has evolved from evaluating single chemicals in small-scale environments to assessing complex stressors across entire landscapes, the demand for high-quality, reliable data has intensified [1]. Regulatory frameworks globally mandate the evaluation of study reliability—the inherent quality of a test report relating to its methodology and reporting—and relevance—the appropriateness of the data for a specific hazard identification or risk characterization [2]. Inconsistent evaluation of these criteria can lead directly to divergent hazard assessments, resulting in either unnecessary mitigation costs or underestimated environmental risks [2]. This guide objectively compares the established and emerging methodologies for ensuring study reliability, from traditional evaluation frameworks to modern computational models, providing researchers and assessors with the experimental data and protocols needed to navigate this critical scientific landscape.

Comparative Analysis of Traditional Study Evaluation Frameworks

The evaluation of individual ecotoxicity studies for use in regulatory decision-making has long been guided by established criteria. The dominant methods differ significantly in their approach, granularity, and consistency, as shown in the comparative data below.

Table 1: Comparison of Klimisch and CRED Study Evaluation Methods [2]

| Characteristic | Klimisch Method (1997) | CRED Method (2016) |
|---|---|---|
| Primary Focus | Reliability only. | Reliability and relevance. |
| Number of Evaluation Criteria | 12-14 for ecotoxicity. | 20 reliability criteria, 13 relevance criteria. |
| Guidance Detail | Limited; high dependence on expert judgment. | Detailed guidance provided for each criterion. |
| Result Consistency (Ring Test) | Lower consistency among assessors. | Higher consistency among assessors. |
| Typical Evaluation Time | Perceived as shorter, but less thorough. | Efficient and practical for the detail provided. |
| Handling of GLP/OECD Studies | Often automatically deemed reliable, potentially overlooking flaws. | Judged against explicit criteria regardless of test protocol. |

The Klimisch method categorizes studies as "reliable without restrictions," "reliable with restrictions," "not reliable," or "not assignable" [2]. While pioneering, it has been criticized for lack of detail, insufficient guidance for relevance, and for fostering inconsistency between evaluators [2]. Ring tests revealed that its reliance on expert judgment could lead to the same study being categorized differently by different risk assessors [2].

Developed to address these shortcomings, the Criteria for Reporting and Evaluating ecotoxicity Data (CRED) method provides a more transparent and structured framework [2]. A major international ring test involving 75 assessors from 12 countries demonstrated its advantages: participants found it more accurate, consistent, and less dependent on subjective judgment than the Klimisch method [2]. The CRED method's explicit separation and detailed assessment of both reliability and relevance strengthen the scientific defensibility of subsequent risk assessments.

Regulatory agencies have developed parallel frameworks. The U.S. EPA's Office of Pesticide Programs employs detailed guidelines for screening open literature toxicity data [3]. Studies must pass minimum criteria to be accepted, including that effects are from a single chemical, reported on whole organisms, with explicit exposure durations and concentrations, and compared to an acceptable control [3]. This process emphasizes the "best professional judgment" of the reviewer within a structured protocol [3].
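The minimum acceptance criteria above work as a pass/fail gate that is applied before any detailed review. The sketch below is a minimal illustration, assuming a hypothetical `StudyRecord` structure whose field names are ours, not EPA's; it encodes only the criteria listed in the preceding paragraph and does not replace the reviewer's professional judgment.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class StudyRecord:
    """Hypothetical summary of an open-literature study; field names are illustrative."""
    single_chemical: bool                 # effects attributable to one test chemical
    whole_organism: bool                  # effects reported on live, whole organisms
    exposure_duration_h: Optional[float]  # explicit exposure duration (None if not reported)
    concentration_reported: bool          # exposure concentration or dose reported
    acceptable_control: bool              # compared with an acceptable control


def screening_failures(study: StudyRecord) -> list[str]:
    """List the minimum criteria the study fails; an empty list means it passes the screen."""
    failures = []
    if not study.single_chemical:
        failures.append("effects not attributable to a single chemical")
    if not study.whole_organism:
        failures.append("effects not reported on whole organisms")
    if study.exposure_duration_h is None:
        failures.append("no explicit exposure duration")
    if not study.concentration_reported:
        failures.append("no exposure concentration reported")
    if not study.acceptable_control:
        failures.append("no acceptable control")
    return failures


# Example: a mixture study with no stated exposure duration fails two criteria.
mixture_study = StudyRecord(False, True, None, True, True)
print(screening_failures(mixture_study))
```

A list of reasons, rather than a single boolean, keeps the screening decision transparent and easy to document.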

Experimental Protocol: CRED Evaluation Ring Test [2]

- Objective: To compare the consistency, accuracy, and practicality of the Klimisch and CRED evaluation methods.

- Design: A two-phase ring test. In Phase I, participants evaluated the reliability and relevance of two out of eight selected ecotoxicity studies using the Klimisch method. In Phase II, a different set of participants evaluated two different studies from the same pool using a draft version of the CRED method.

- Materials: Eight peer-reviewed aquatic ecotoxicity studies covering different taxonomic groups (e.g., algae, crustaceans, fish) and chemical classes (pesticides, pharmaceuticals).

- Procedure: Studies were assigned based on participant expertise. Evaluations in the two phases were performed independently by different individuals at different institutes to prevent bias. Participants used standardized scoring sheets for both methods.

- Outcome Measures: Categorization of study reliability/relevance, time taken for evaluation, and participant feedback on method clarity and usability via questionnaire.

- Key Finding: The CRED method yielded more consistent evaluations between assessors and was perceived as providing a more transparent and detailed assessment than the Klimisch method.
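Consistency between assessors, the key outcome of the ring test, can be quantified in several ways. A minimal sketch, assuming simple pairwise percent agreement on the assigned reliability category (chance-corrected statistics such as Fleiss' kappa are common alternatives). The ratings below are invented for illustration and are not the ring-test data.

```python
from __future__ import annotations

from itertools import combinations


def pairwise_agreement(ratings: list[str]) -> float:
    """Fraction of assessor pairs that assigned the same category to one study."""
    pairs = list(combinations(ratings, 2))
    if not pairs:
        raise ValueError("need at least two assessors per study")
    return sum(a == b for a, b in pairs) / len(pairs)


def mean_agreement(ratings_by_study: dict[str, list[str]]) -> float:
    """Average pairwise agreement across studies."""
    scores = [pairwise_agreement(r) for r in ratings_by_study.values()]
    return sum(scores) / len(scores)


# Hypothetical categories (R1 = reliable without restrictions, R2 = with restrictions,
# R3 = not reliable) assigned by four assessors per study.
klimisch_ratings = {"study_A": ["R1", "R2", "R2", "R3"], "study_B": ["R2", "R2", "R3", "R3"]}
cred_ratings = {"study_C": ["R2", "R2", "R2", "R3"], "study_D": ["R1", "R1", "R1", "R1"]}
print(mean_agreement(klimisch_ratings), mean_agreement(cred_ratings))
```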

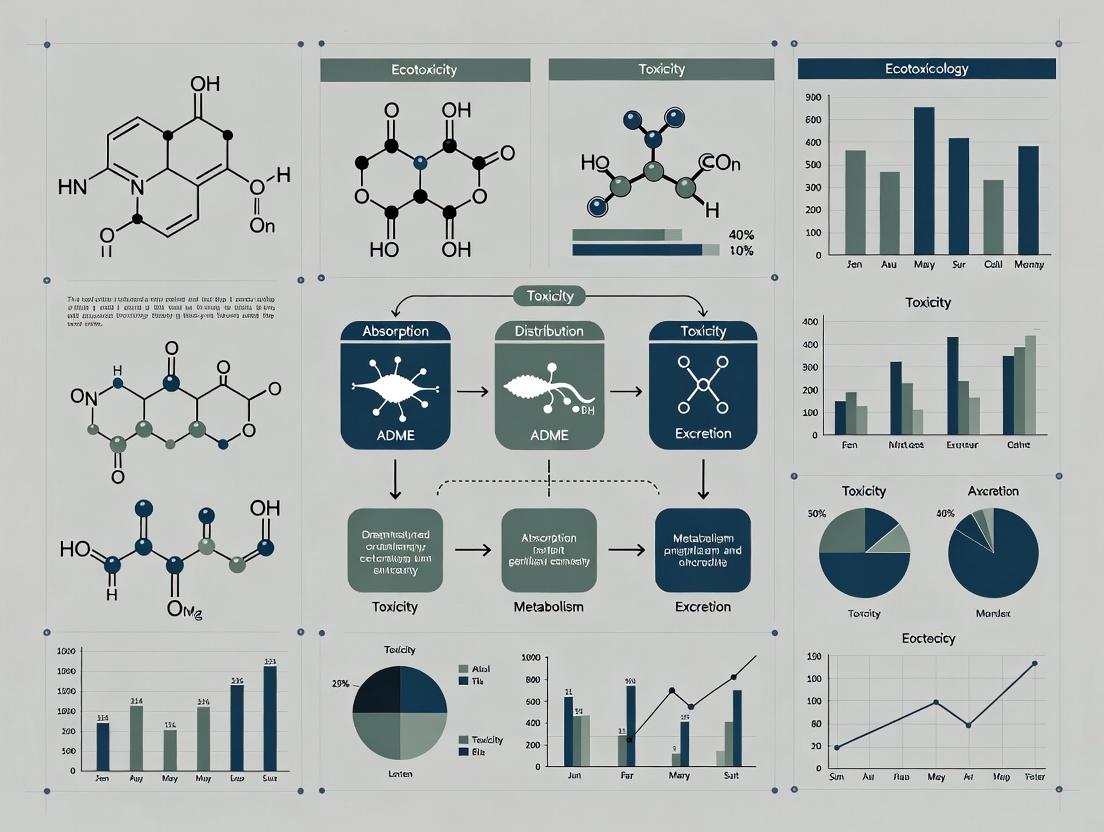

Diagram: Traditional Ecotoxicity Study Reliability and Relevance Evaluation Workflow. The process bifurcates into parallel assessments of reliability (methodological quality) and relevance (fitness for purpose), with combined results determining a study's use in formal assessments [2] [3].

The Impact of Data Quality on Derived Toxicity Values and Standards

The reliability of individual studies directly influences the accuracy of higher-order toxicity values, such as Environmental Quality Standards (EQSs) or Predicted No-Effect Concentrations (PNECs), which are often derived using Species Sensitivity Distributions (SSDs). An SSD is a statistical model that estimates the concentration of a chemical that is hazardous to a specified percentage of species (e.g., HC₅) [4].
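The HC₅ can be sketched by fitting a log-normal SSD, which is one common distributional choice but not the only one. This minimal example assumes a moment fit (mean and sample standard deviation of log10-transformed endpoints) and returns only the point estimate. Regulatory derivations often use other distributions, report confidence limits, and may apply an additional assessment factor. The toxicity values are hypothetical.

```python
import math
from statistics import NormalDist, mean, stdev


def hc_p(toxicity_values, p=0.05):
    """HCp from a log-normal SSD fitted by the mean and sample SD of log10 values."""
    logs = [math.log10(v) for v in toxicity_values]
    mu, sigma = mean(logs), stdev(logs)
    z = NormalDist().inv_cdf(p)  # about -1.645 for p = 0.05
    return 10 ** (mu + z * sigma)


# Hypothetical species endpoints (e.g., ug/L) spanning two orders of magnitude.
print(f"HC5 = {hc_p([1.0, 10.0, 100.0]):.3f}")
```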

Research quantitatively demonstrates that adding even a single high-quality ecotoxicity test to a small dataset can significantly alter the derived EQS [4]. The direction and magnitude of change depend on:

- The size of the original dataset: Smaller datasets are more volatile.

- The sensitivity of the newly added test species: A highly sensitive species lowers the EQS; a tolerant species raises it.

- The variability in the original dataset: Higher variability increases uncertainty.
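You can explore how sensitive a small SSD is to one extra test by refitting it. The sketch below uses the same log-normal moment-fit assumption and adds a single hypothetical species to a hypothetical three-species dataset.

```python
import math
from statistics import NormalDist, mean, stdev


def hc5(values):
    """HC5 from a log-normal SSD fitted by the mean and sample SD of log10 values."""
    logs = [math.log10(v) for v in values]
    return 10 ** (mean(logs) + NormalDist().inv_cdf(0.05) * stdev(logs))


# Hypothetical chronic endpoints (ug/L) for three species: a deliberately small dataset.
base = [5.0, 20.0, 80.0]
scenarios = {
    "original (n=3)": base,
    "+ sensitive species (0.5)": base + [0.5],
    "+ moderately tolerant species (120)": base + [120.0],
    "+ very tolerant species (500)": base + [500.0],
}
for label, data in scenarios.items():
    print(f"{label:38s} HC5 = {hc5(data):.2f} ug/L")
```

With these inputs, the sensitive species lowers the HC5 sharply and the moderately tolerant species raises it. The very tolerant value, however, widens the fitted distribution enough that the HC5 falls slightly. Under this model, the variability of the dataset can override the direction suggested by the sensitivity of the added species alone.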

Table 2: Impact of Additional Data on Derived Environmental Quality Standards (EQS) [4]

| Scenario | Impact on EQS (HC₅) | Management Consequence | Key Condition |
|---|---|---|---|
| Addition of a test with a tolerant species | EQS increases (less stringent) | Reduced remediation scope and costs; material may be deemed acceptable. | Most likely when existing data is limited and biased towards sensitive species. |
| Addition of a test with a sensitive species | EQS decreases (more stringent) | Increased remediation scope and costs; potential need for stricter emission controls. | Highlights a previously unrepresented vulnerability. |
| Addition of a test that improves taxonomic representativeness | EQS becomes more robust and credible | Increases confidence in management decisions; may increase or decrease value. | Strengthens the ecological relevance of the SSD. |

A case study on contaminated freshwater sediment management showed that a slight increase in the EQS (due to additional data) could result in a large reduction of sediment remediation costs without compromising environmental protection levels [4]. This creates a compelling economic and scientific argument for investing in reliable, high-quality testing to refine toxicity benchmarks, especially for chemicals where large volumes of material are managed close to the current standard [4].

Quantitative Structure-Activity/Structure-Toxicity Relationship (QSAR/QSTR) models have emerged as critical tools for predicting toxicity, filling data gaps, and supporting the evaluation of chemical safety without additional animal testing [5] [6] [7]. These are mathematical models that correlate a chemical's molecular descriptors (e.g., hydrophobicity, electronic properties) with its biological activity or toxicity [5].

Table 3: Validation Performance of Modern QSTR Models for Toxicity Prediction

| Model / Approach | Endpoint & Species | Key Validation Metric | Performance & Notes | Source |
|---|---|---|---|---|
| Multi-task QSTR (Machine Learning) | Acute toxicity, Daphnia magna | Cross-validation q² | 0.74 – 0.77 | Demonstrates strong predictive accuracy for a key ecotoxicity indicator species. [8] |
| Multi-task QSTR (Machine Learning) | Acute toxicity, Daphnia magna | External validation set q² | 0.79 – 0.81 | Indicates excellent predictive power for new, unseen chemicals. [8] |
| QSTR & q-RASTR (Quinoline derivatives) | Acute oral toxicity, Rat | Internal & External Validation | High goodness-of-fit, robustness, and predictive power. | Follows OECD validation principles; model is interpretable and has a broad applicability domain. [9] |

The reliability of QSAR predictions is governed by validation principles established by the Organisation for Economic Co-operation and Development (OECD). These principles require a model to have (1) a defined endpoint, (2) an unambiguous algorithm, (3) a defined domain of applicability, (4) appropriate measures of goodness-of-fit, robustness, and predictivity, and (5) a mechanistic interpretation, if possible [6] [7]. Models are validated internally (e.g., cross-validation) and externally using a separate test set of compounds [6]. The applicability domain (AD) is a crucial concept because it defines the chemical space for which the model's predictions are reliable [5].
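One widely used way to put the applicability domain into practice is a leverage check, which is the basis of the Williams plot. The sketch assumes a linear model with a single descriptor (log P) plus an intercept, for which leverage reduces to a closed form. It also uses the common warning threshold h* = 3(p + 1)/n, where p is the number of descriptors. This cut-off is a convention, not an OECD requirement, and the training values are hypothetical.

```python
from statistics import mean


def leverage(train_x, x_new):
    """Leverage of a query compound for a one-descriptor linear model with intercept:
    h = 1/n + (x - mean)^2 / Sxx, where Sxx is the training-set sum of squares."""
    n = len(train_x)
    x_bar = mean(train_x)
    sxx = sum((x - x_bar) ** 2 for x in train_x)
    return 1.0 / n + (x_new - x_bar) ** 2 / sxx


def in_domain(train_x, queries, n_descriptors=1):
    """Flag queries whose leverage is at or below the warning leverage h* = 3(p + 1)/n."""
    h_star = 3 * (n_descriptors + 1) / len(train_x)
    return [leverage(train_x, q) <= h_star for q in queries]


# Hypothetical training-set log P values spanning 1.0 to 5.5.
train_log_p = [1.0 + 0.5 * i for i in range(10)]
print(in_domain(train_log_p, [3.0, 6.0, 9.0]))  # -> [True, True, False]
```

A query at log P 6.0 lies slightly outside the training range but still passes the leverage check. This is why leverage is often combined with other AD measures, such as descriptor ranges or similarity to training compounds.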

Experimental Protocol: Development and Validation of a QSTR Model [8] [9]

- Objective: To develop a predictive computational model for chemical toxicity.

- Step 1 – Data Curation: A dataset of chemicals with high-quality, experimental toxicity values (e.g., LC₅₀, NOEC) is assembled. For example, a model for Daphnia magna acute toxicity was built using 2,678 compounds [8].

- Step 2 – Descriptor Calculation: Numerical descriptors representing the chemical structures (e.g., molecular weight, log P, topological indices, 3D electrostatic fields) are calculated for each compound.

- Step 3 – Model Training: Machine learning algorithms (e.g., Random Forest, Neural Networks, Partial Least Squares) are used to find the mathematical relationship between the descriptors and the toxicity endpoint. The dataset is split into a training set (e.g., 80%) to build the model.

- Step 4 – Validation:

  - Internal Validation: Techniques like leave-one-out cross-validation are performed on the training set to assess robustness.

  - External Validation: The final model is used to predict toxicity for a hold-out test set (e.g., 20% of data not used in training) to evaluate real predictive power [8].

  - Applicability Domain: The chemical space of the training set is characterized to identify new chemicals for which predictions are extrapolations and thus less certain.

- Step 5 – Deployment: The validated model can predict toxicity for new chemicals within its applicability domain, supporting priority setting, risk assessment, and guiding experimental design.
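Steps 3 and 4 can be illustrated with a deliberately small sketch. It assumes a single descriptor (log P) and uses ordinary least squares in place of the machine-learning algorithms named above. It also runs on synthetic data generated in the code, so the numbers it prints say nothing about the cited models. What it shows is the bookkeeping: an 80/20 split, leave-one-out q² on the training set, and one common form of external Q² (often labelled Q²_F1) on the hold-out set.

```python
import random
from statistics import mean


def fit_ols(x, y):
    """Ordinary least squares for y = a + b*x; returns (intercept, slope)."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    b = sxy / sxx
    return y_bar - b * x_bar, b


def q2_loo(x, y):
    """Leave-one-out cross-validated q^2 = 1 - PRESS / SS_tot (internal validation)."""
    press = 0.0
    for i in range(len(x)):
        a, b = fit_ols(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (a + b * x[i])) ** 2
    y_bar = mean(y)
    return 1 - press / sum((yi - y_bar) ** 2 for yi in y)


def q2_external(a, b, x_test, y_test, y_train_mean):
    """External Q^2 (F1 form): hold-out residuals relative to the training-set mean."""
    ss_res = sum((yt - (a + b * xt)) ** 2 for xt, yt in zip(x_test, y_test))
    ss_tot = sum((yt - y_train_mean) ** 2 for yt in y_test)
    return 1 - ss_res / ss_tot


# Synthetic stand-in for curated data: toxicity rises linearly with log P plus noise.
rng = random.Random(42)
log_p = [rng.uniform(0.0, 6.0) for _ in range(50)]
tox = [0.8 * lp + 1.0 + rng.gauss(0.0, 0.3) for lp in log_p]

# Random 80/20 split into training and hold-out test sets.
idx = list(range(len(log_p)))
rng.shuffle(idx)
cut = int(0.8 * len(idx))
x_train = [log_p[i] for i in idx[:cut]]
y_train = [tox[i] for i in idx[:cut]]
x_test = [log_p[i] for i in idx[cut:]]
y_test = [tox[i] for i in idx[cut:]]

# Train on the training set only, then validate internally and externally.
a, b = fit_ols(x_train, y_train)
q2_internal = q2_loo(x_train, y_train)
q2_ext = q2_external(a, b, x_test, y_test, mean(y_train))
print(f"slope={b:.2f}  q2_LOO={q2_internal:.2f}  Q2_ext={q2_ext:.2f}")
```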

Diagram: QSTR Model Development and Validation Workflow. The process begins with curated experimental data and progresses through descriptor calculation, model training, and rigorous internal/external validation before deployment for prediction [6] [8] [7].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Research Reagent Solutions for Reliability in Ecotoxicology

| Reagent / Material | Primary Function in Ecotoxicity Studies | Role in Ensuring Reliability |
|---|---|---|
| Standard Reference Toxicants (e.g., KCl, NaCl, CuSO₄) | Used in periodic tests with reference species (e.g., Daphnia magna, fathead minnow). | Verifies the consistent health and sensitivity of test organism cultures over time, a key reliability criterion [2] [3]. |
| Analytical Grade Test Chemicals & Certified Standards | Provides the contaminant or chemical of concern for exposure treatments. | Ensures exposure concentrations are accurate and verifiable, fundamental for dose-response assessment and study reproducibility [2] [3]. |
| Formulation Blanks & Carrier Controls | Controls for the effects of solvents or carriers (e.g., acetone, methanol) used to dissolve test chemicals. | Isolates the toxic effect to the chemical itself, a mandatory requirement for a study to be considered reliable [2] [3]. |
| Cultured, Certified Test Organisms | Provides genetically and physiologically consistent organisms for testing (e.g., algal batches, cladoceran clones). | Reduces inter-individual variability, leading to more precise and reproducible results. Species identity must be verified [3]. |
| Water Quality Verification Kits (for pH, hardness, dissolved oxygen, ammonia) | Monitors the physicochemical parameters of dilution water and test solutions. | Confirms that test conditions remain within specified ranges throughout exposure, preventing confounding stressor effects [2]. |
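Reference toxicants are used to verify culture sensitivity over time, and laboratories commonly track this with a control chart. A minimal sketch, assuming log10-transformed historical EC50s and warning limits at the mean ± 2 SD. This is a widespread QA convention, but the acceptance range that actually applies is set by the relevant guideline or laboratory SOP. The EC50 values are hypothetical.

```python
import math
from statistics import mean, stdev


def control_limits(historical_ec50, k=2.0):
    """Warning limits as mean +/- k*SD of log10-transformed historical EC50s."""
    logs = [math.log10(v) for v in historical_ec50]
    m, s = mean(logs), stdev(logs)
    return 10 ** (m - k * s), 10 ** (m + k * s)


def within_limits(new_ec50, historical_ec50, k=2.0):
    """True if a new reference-toxicant EC50 falls inside the control-chart limits."""
    lower, upper = control_limits(historical_ec50, k)
    return lower <= new_ec50 <= upper


# Hypothetical historical reference-toxicant EC50s (mg/L) for one culture.
history = [0.8, 1.0, 1.25, 1.0, 0.8, 1.25]
print(control_limits(history))
print(within_limits(1.1, history), within_limits(2.0, history))
```

A result outside the limits would typically prompt a check of culture health before the associated definitive tests are accepted.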

Diagram: Interconnected Ecosystem of Reliability Assessment Methodologies. Traditional study evaluation feeds into ecological risk assessment and standard derivation, while computational QSAR tools both inform and are validated by these processes, creating an integrated system for data generation and evaluation [2] [3] [4].

The evaluation of chemical hazards for environmental and human health protection operates through two historically independent streams: Ecological Risk Assessment (ERA) and Human Health Risk Assessment (HHRA). Both share the fundamental goal of determining safe exposure levels, but they have developed distinct methodologies, data requirements, and quality appraisal frameworks [10]. This divergence creates a significant gap that hinders the efficient sharing of data and best practices and the development of a holistic understanding of chemical risks. A critical review of existing frameworks reveals that none currently satisfies the needs of a common system capable of evaluating both toxicity and ecotoxicity data [10]. This comparison guide objectively analyzes the performance of these parallel assessment paradigms within the broader thesis of evaluating the reliability and relevance of ecotoxicity studies. It highlights that standardized appraisal criteria are not merely an academic exercise but a practical necessity for robust, transparent, and integrated chemical safety decision-making.

Comparative Analysis of Methodological Frameworks

The core process for both ERA and HHRA is conceptually aligned around a multi-step sequence. The foundational framework, as outlined in regulatory guidelines, typically involves four steps: hazard identification, dose-response assessment, exposure assessment, and risk characterization [11]. However, the execution of these steps differs substantially in focus and detail between the two fields.

Table 1: Core Methodological Framework for Risk Assessment [11]

| Assessment Step | Ecotoxicology (ERA) Focus | Human Health (HHRA) Focus |
|---|---|---|
| 1. Hazard Identification | Identify inherent ecotoxicological properties. Focus on effects across ecosystem receptors: aquatic life (algae, daphnia, fish), soil organisms, sediment dwellers, and top predators [11]. | Identify inherent health toxicological properties. Focus on chronic human health endpoints: carcinogenicity, mutagenicity, reproductive toxicity, and specific organ damage [11]. |
| 2. Dose-Response Assessment | Derives a Predicted No-Effect Concentration (PNEC). Based on ecotoxicity endpoints (e.g., LC50, EC50, NOEC) divided by an assessment factor [11]. | Derives a safe threshold dose (e.g., Tolerable Daily Intake). Based on a No-Observed-Adverse-Effect Level (NOAEL) or equivalent, divided by uncertainty factors [11]. |
| 3. Exposure Assessment | Estimates concentration of chemical in environmental compartments (water, soil, air). Considers point-source and regional-scale exposure [11]. | Estimates total human exposure via inhalation, ingestion, and dermal contact. Considers exposure for sensitive sub-populations [11]. |
| 4. Risk Characterization | Compares Predicted Environmental Concentration (PEC) to PNEC. A PEC/PNEC ratio >1 indicates potential risk [11]. | Compares Estimated Human Exposure to the safe threshold dose (e.g., TDI). An exposure > safe dose indicates potential risk [11]. |
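The arithmetic behind steps 2 and 4 of the table is simple enough to show directly. The sketch below assumes that the lowest acute endpoint is divided by an assessment factor of 1000, the factor typically applied in EU guidance when only short-term data for three trophic levels are available. It also assumes a default 10 × 10 uncertainty factor for the TDI. All input values are hypothetical.

```python
def pnec(endpoints, assessment_factor):
    """PNEC = lowest relevant ecotoxicity endpoint / assessment factor (endpoint units)."""
    return min(endpoints) / assessment_factor


def risk_quotient(pec, pnec_value):
    """PEC/PNEC ratio; a value above 1 flags potential environmental risk."""
    return pec / pnec_value


def tolerable_daily_intake(noael, uncertainty_factors):
    """TDI = NOAEL / product of uncertainty factors (e.g., mg/kg bw/day)."""
    product = 1.0
    for uf in uncertainty_factors:
        product *= uf
    return noael / product


# Hypothetical ERA inputs: acute L(E)C50s (mg/L) for an alga, Daphnia, and a fish.
acute = {"alga EC50": 2.0, "Daphnia EC50": 0.8, "fish LC50": 1.5}
pnec_water = pnec(acute.values(), assessment_factor=1000)
rq = risk_quotient(pec=0.0005, pnec_value=pnec_water)

# Hypothetical HHRA inputs: NOAEL (mg/kg bw/day) with inter- and intraspecies factors of 10.
tdi = tolerable_daily_intake(10.0, [10, 10])
exposure = 0.02  # hypothetical estimated daily intake, mg/kg bw/day

print(f"PNEC = {pnec_water:.4f} mg/L, PEC/PNEC = {rq:.3f}, risk flagged: {rq > 1}")
print(f"TDI = {tdi:.2f} mg/kg bw/day, exposure exceeds TDI: {exposure > tdi}")
```

The two streams share the same structure: a threshold derived by dividing by a safety factor, followed by a ratio or comparison against exposure.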

Appraising Data Quality: Reliability and Relevance Criteria

A pivotal point of divergence lies in the formal frameworks used to evaluate the quality of individual scientific studies. Data Quality Assessment (DQA) is essential for weighting evidence, yet existing schemes are typically siloed, with little crossover between ERA and HHRA [10]. Reliability pertains to the internal soundness of a study (methodology, reporting clarity), while relevance refers to its applicability to the specific assessment context (test species, endpoint, exposure regimen) [10].

Table 2: Comparison of Selected Data Reliability Evaluation Methods [12]

| Method (Source) | Primary Domain | Evaluation Categories | Number of Criteria/Questions | Key Characteristics |
|---|---|---|---|---|
| Klimisch et al. | Toxicity & Ecotoxicity | Reliable without restrictions, Reliable with restrictions, Not reliable, Not assignable | 12 (acute ecotoxicity), 14 (chronic ecotoxicity) | Systematic approach; widely referenced in regulatory contexts (e.g., REACH). |
| Durda & Preziosi | Ecotoxicity | High, Moderate, Low quality, Not reliable, Not assignable | 40 | Based on US EPA, OECD, ASTM standards; includes both recommended and mandatory criteria. |
| Hobbs et al. | Ecotoxicity | High, Acceptable, Unacceptable quality | 20 | Developed for the Australasian ecotoxicity database; uses a scoring system (0-10). |
| Schneider et al. (ToxRTool) | Toxicity (in vivo/in vitro) | Reliable without restrictions, Reliable with restrictions, Not reliable, Not assignable | 21 | Assesses both reliability and relevance; includes mandatory questions and automatic scoring. |
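Checklist tools such as ToxRTool combine mandatory questions with an overall score. The sketch below shows only that general logic. The criterion names and the 0.85/0.60 fraction thresholds are hypothetical placeholders, not the cut-offs used by ToxRTool or any other published method.

```python
from __future__ import annotations


def categorize(answers: dict[str, bool], mandatory: set[str],
               thresholds: tuple[float, float] = (0.85, 0.6)) -> str:
    """Assign a Klimisch-style category from yes/no answers to checklist criteria.

    answers: criterion -> True if the study meets it.
    mandatory: criteria that must all be met (any failure -> 'not reliable').
    thresholds: hypothetical minimum fractions of criteria met for the top two categories.
    """
    if not answers:
        return "not assignable"  # nothing could be evaluated from the report
    if any(not answers.get(c, False) for c in mandatory):
        return "not reliable"
    fraction_met = sum(answers.values()) / len(answers)
    if fraction_met >= thresholds[0]:
        return "reliable without restrictions"
    if fraction_met >= thresholds[1]:
        return "reliable with restrictions"
    return "not reliable"


# Hypothetical study: all mandatory criteria met, 4 of 5 criteria met overall (0.8).
study = {
    "test substance identified": True,
    "control group described": True,
    "exposure concentrations reported": True,
    "statistical methods described": False,
    "test organism identified": True,
}
mandatory = {"test substance identified", "control group described", "test organism identified"}
print(categorize(study, mandatory))
```

Checking mandatory criteria before computing the score keeps a high overall score from masking a disqualifying flaw.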

A critical analysis indicates that a frequent shortcoming across frameworks is the lack of clear separation between reliability and relevance criteria, which can introduce subjectivity [10]. For ecotoxicity data from the open literature, agencies such as the U.S. EPA apply stringent screening criteria. Studies must meet minimum standards even to be considered for assessment. These include toxic effects from a single-chemical exposure, a defined biological effect on live, whole organisms, a reported concurrent concentration or dose, and an explicit exposure duration [3].

Experimental Protocols: From Standard Tests to Biomarker Integration

Experimental methodologies form the empirical backbone of both fields. HHRA has traditionally relied on standardized mammalian in vivo tests (e.g., OECD TG) for chronic endpoints, with an increasing role for high-throughput in vitro and in silico methods to fill data gaps [13]. In contrast, ERA employs a battery of standardized tests across trophic levels (algae, invertebrate, fish) and environmental compartments (water, soil) [11].

Emerging, more integrative ecotoxicological protocols go beyond standard mortality assays to measure sub-lethal biomarker responses at multiple biological levels. These provide early warning signals and mechanistic insight. A representative protocol for anuran amphibians, a sentinel species, illustrates this approach [14]:

- Organismal Level: Assess body condition indices (e.g., scaled mass index) calculated from body weight and snout-vent length measurements.

- Biochemical Level: Measure oxidative stress enzymes (e.g., catalase, glutathione S-transferase) in tissue homogenates to evaluate metabolic disruption.

- Genetic Level: Perform the comet assay (single-cell gel electrophoresis) on erythrocytes or other cell types to quantify DNA strand breaks as a marker of genotoxicity.

- Histological Level: Conduct histopathological analysis of liver or gonadal tissues to identify tissue damage and dysfunction.
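The scaled mass index in the organismal-level bullet standardizes each animal's mass to a common reference length. A minimal sketch, assuming the usual formulation SMI_i = M_i × (L0 / L_i)^b_SMA. Here b_SMA is the standardized major axis slope of ln(mass) on ln(snout-vent length), and L0 is taken as the sample mean length. The measurements are hypothetical.

```python
import math
from statistics import mean, stdev


def sma_slope(x, y):
    """Standardized major axis slope of y on x: sign(r) * SD(y) / SD(x)."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return math.copysign(stdev(y) / stdev(x), cov)


def scaled_mass_index(masses, lengths, l0=None):
    """SMI_i = M_i * (L0 / L_i) ** b_SMA, with b_SMA fitted on ln(mass) vs ln(length).

    L0 defaults to the arithmetic mean length of the sample.
    """
    b_sma = sma_slope([math.log(l) for l in lengths], [math.log(m) for m in masses])
    if l0 is None:
        l0 = mean(lengths)
    return [m * (l0 / l) ** b_sma for m, l in zip(masses, lengths)]


# Hypothetical snout-vent lengths (mm) and body masses (g) for five frogs.
svl = [32.0, 36.5, 41.0, 44.0, 49.5]
mass = [3.1, 4.6, 7.2, 8.1, 12.9]
for l, s in zip(svl, scaled_mass_index(mass, svl)):
    print(f"SVL {l:.1f} mm -> SMI {s:.2f} g")
```

In practice, the slope and L0 are usually estimated once from a reference population and then reused, so that exposed and control animals are scaled consistently.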

This multi-scale approach provides a more comprehensive toxicity profile than any single endpoint [14]. While powerful, such non-standard methods face greater scrutiny in regulatory DQA due to variability, highlighting the need for standardized appraisal criteria to judge their reliability and relevance for risk assessment [10].

Visualizing Assessment Pathways and Data Evaluation

The following diagrams illustrate the integrated risk assessment workflow and the parallel data evaluation processes in ecotoxicology and human health.

Integrated Risk Assessment Workflow with DQA [11] [10]

Data Quality Assessment for Eco and Human Health Studies [10] [12]

The Scientist's Toolkit: Essential Research Reagents and Materials

The execution of robust ecotoxicology studies, particularly those employing biomarker approaches, requires specific reagents and materials. The following table details key solutions used in advanced ecotoxicological methodologies, as exemplified in multi-scale anuran assessments [14].

Table 3: Research Reagent Solutions for Ecotoxicological Biomarker Assessment [14]

| Item Name | Function in Experimental Protocol | Typical Application / Notes |
|---|---|---|
| Phosphate Buffered Saline (PBS) | A physiological pH buffer used for tissue rinsing, cell suspension, and as a diluent for various biochemical reagents. | Prevents osmotic shock and pH changes during tissue handling and cell preparation. |
| Homogenization Buffer | A specialized buffer (often containing sucrose, EDTA, protease inhibitors) for rupturing cells and tissues to release intracellular components without degrading enzymes. | Critical for preparing tissue homogenates for subsequent analysis of oxidative stress enzymes and other biomarkers. |
| Substrate for Enzyme Assays | Specific chemical compounds that are converted by target enzymes (e.g., Catalase, Glutathione S-transferase). The rate of conversion is measured spectrophotometrically. | Used to quantify the activity of key oxidative stress enzymes, indicating metabolic disruption. |
| Comet Assay Reagents | A suite including low-melting-point agarose, lysing solution (high salt, detergents), alkaline unwinding/electrophoresis buffer, and fluorescent DNA stain (e.g., ethidium bromide). | Enables the visualization and quantification of DNA single/double-strand breaks in individual cells (genotoxicity). |
| Histological Fixative | A preserving agent like neutral buffered formalin that stabilizes tissue architecture by cross-linking proteins, preventing decay and autolysis. | Used immediately after dissection to fix tissues (liver, gonad) for later histopathological processing and analysis. |
| Oxidative Stress Indicator Dyes | Cell-permeable fluorescent probes (e.g., DCFH-DA for ROS, specific lipid peroxidation probes) that react with reactive oxygen species or their byproducts. | Can be used in live cells or tissues to detect and quantify real-time oxidative stress responses. |

The evaluation of chemical safety for ecosystems is not dictated by scientific curiosity alone; it is fundamentally structured by a complex, evolving global regulatory landscape. Regulatory instruments such as the European Union’s Registration, Evaluation, Authorisation and Restriction of Chemicals (REACH) regulation, U.S. Environmental Protection Agency (EPA) mandates, and the Organisation for Economic Co-operation and Development (OECD) Test Guidelines form the authoritative backbone of the field. They define what data must be generated, how the data must be produced, and the standards for their acceptance [15] [16]. This guide objectively compares how these key regulatory drivers shape specific testing requirements, data evaluation, and methodological innovation. Framed within a broader thesis on the reliability and relevance of ecotoxicity studies, this analysis highlights regulatory stringency as a major driver of market growth, which is projected to reach $2.5 billion by 2030. Regulatory stringency also shapes the direction of scientific advancement, pushing the field toward New Approach Methodologies (NAMs) and high-throughput strategies [15] [17].

Comparative Analysis of Major Regulatory Drivers and Their Testing Mandates

Global regulatory systems share the common goal of protecting environmental health but differ significantly in their legal mechanisms, specific data requirements, and philosophical approaches to risk management. The following table compares three of the most influential systems.

Table 1: Comparison of Key Global Regulatory Drivers in Ecotoxicity Testing

| Regulatory System | Geographic Scope | Core Legal Instrument | Primary Testing Philosophy | Key Ecotoxicity Data Requirements | 2025 Notable Update |
|---|---|---|---|---|---|
| European Chemicals Agency (ECHA) | European Union | REACH Regulation, CLP, Biocidal Products Regulation (BPR) | Hazard-based, Precautionary Principle. Extensive data required for market access. | Tiered by annual tonnage. ≥1 tonne/yr: short-term Daphnia toxicity, algal growth inhibition, ready biodegradability; ≥10 tonnes/yr adds short-term fish toxicity. Higher tonnages trigger long-term aquatic toxicity, bioaccumulation, sediment, and terrestrial tests [17] [18]. | REACH 2.0 proposal: 10-year registration validity, Digital Product Passport, mandatory polymer notification [18]. ECHA’s 2025 report prioritizes NAMs for neurotoxicity and immunotoxicity [17]. |
| U.S. Environmental Protection Agency (EPA) | United States | Toxic Substances Control Act (TSCA), Federal Insecticide, Fungicide, and Rodenticide Act (FIFRA) | Risk-based, Cost-Benefit Analysis. Testing mandated via enforceable rules or consent orders. | Case-specific, often triggered by risk-based concerns. Standard requirements for pesticides include aquatic and terrestrial toxicity, avian testing, and sediment assays [19]. | Updated guidance on whole sediment toxicity testing for pesticide registration (Aug 2025) [19]. Proposal to rescind Greenhouse Gas Endangerment Finding signals shifting priorities [20]. |
| Organisation for Economic Co-operation and Development (OECD) | 38+ Member Countries, global de facto standard | OECD Test Guidelines (TGs), Mutual Acceptance of Data (MAD) System | Harmonization and International Standardization. Promotes animal welfare (3Rs). | Provides the standardized test methods (e.g., TG 201, TG 210) accepted by all member countries. Data generated using OECD TGs under GLP is mutually accepted [21]. | June 2025 update: 56 new/revised TGs. Introduced TG 254 (Mason Bee Acute Contact Test) and integrated omics data collection into fish and rodent tests [21]. |

The regulatory philosophy critically influences the type and volume of testing. The EU’s hazard-based approach under REACH generates a consistently high volume of standardized data, making it a primary driver of the $1.3 billion environmental concentration testing market [15]. In contrast, the U.S. EPA’s risk-based approach can lead to more targeted, but potentially variable, testing regimes. The OECD is not a regulator but a standard-setter; its Test Guidelines are the technical “how-to” documents that underpin regulatory compliance globally. The June 2025 updates explicitly aim to “strengthen the application of the Replacement, Reduction and Refinement (3Rs) principles,” directly shaping study designs toward alternative methods [21].

Regulatory-Driven Experimental Protocols: A Comparative Guide

Specific testing requirements are detailed in regulatory guidelines. Below is a comparison of two critical and currently evolving testing areas: sediment toxicity and pollinator testing.

Table 2: Comparison of Regulatory-Driven Experimental Protocols

| Test Focus | Governing Regulation/ Guideline | Test Organisms & Duration | Key Endpoints Measured | Recent Regulatory Driver & Change | Data Used For |
|---|---|---|---|---|---|
| Whole Sediment Toxicity Testing | U.S. EPA - 40 CFR Part 158 (Pesticides) [19]; OECD TG 218 (Sediment-Water Chironomid). | Benthic invertebrates (e.g., Chironomus riparius, Hyalella azteca). Typically 10-28 day exposure. | Survival, growth, emergence (for insects), reproduction. | EPA’s 2025 guidance memo now “routinely requires” these tests for pesticide registration actions, providing a detailed framework for integration into risk assessments [19]. | Assessing risk to benthic ecosystems from pesticides and other contaminants that partition to sediment. |
| Pollinator (Bee) Toxicity Testing | EU - BPR/EFSA Guidance; OECD TG 213 (Honeybee), TG 254 (2025 - Mason Bee). | Apis mellifera (honeybee) - acute & chronic. Osmia spp. (mason bee) - acute contact (new). | Acute mortality (LD50), chronic effects on survival, behavior, and larval development. | OECD’s 2025 introduction of TG 254 for solitary mason bees addresses biodiversity protection, a key research need identified by ECHA [21] [17]. | Risk assessment for insecticides and biocides. Protection of a wider range of pollinator species. |
| Fish Embryo Acute Toxicity (FET) | OECD TG 236 (Fish Embryo). | Zebrafish (Danio rerio) embryos, 96-hour exposure. | Lethality, based on four apical observations (coagulated embryos, lack of somite formation, non-detachment of the tail, lack of heartbeat). | Updated in 2025 to permit tissue sampling for omics analysis, enabling molecular-level investigation of toxicity pathways [21]. | A replacement alternative for acute fish testing (TG 203) under certain regulations, supporting the 3Rs. |

Detailed Protocol: OECD TG 254 - Mason Bee (Osmia sp.) Acute Contact Toxicity Test [21]

- Objective: To assess the acute contact toxicity of a chemical to adult mason bees, a solitary pollinator species.

- Regulatory Driver: Directly responds to ECHA’s identified need to assess pollinators other than honeybees [17] and to the broader push for biodiversity protection.

- Test Organism: Adult female mason bees (Osmia cornuta or O. bicornis), less than 24 hours post-emergence.

- Procedure:

- Bees are briefly anesthetized with CO₂.

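- Each bee receives a single topical dose of the test substance in a defined carrier (e.g., acetone), applied to the thorax with a microsyringe. Treatment groups receive a graded series of doses so that the dose-response relationship, and hence the LD50, can be estimated.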

- Control bees receive the carrier only.

- Treated bees are held individually in small containers with a supply of food (sugar water) and maintained under controlled conditions (temperature, humidity, darkness).

- Duration & Observations: Mortality is recorded at 4, 24, 48, and 72 hours after treatment. Sublethal effects on behavior are also noted.

- Data Analysis: The lethal dose for 50% of the population (LD50) is calculated using appropriate statistical methods (e.g., probit analysis).

- Significance: This protocol generates standardized data for solitary bees, a pollinator group not previously covered by a standardized OECD test, which strengthens the ecological relevance of pesticide risk assessments and could lead to more restrictive regulations for certain chemicals.
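The LD50 step can be illustrated with a short maximum-likelihood fit. This is a minimal sketch, not the TG 254 procedure: it swaps in a logit link for the probit link named in the protocol (for well-behaved data the two links usually give very similar LD50 estimates), fits on log10 dose, and omits confidence intervals and any correction for control mortality. The function name and the dose-mortality data are hypothetical.

```python
import math


def fit_logit_ld50(doses, n_exposed, n_dead, max_iter=50, tol=1e-10):
    """Fit P(dead) = 1 / (1 + exp(-(a + b * log10(dose)))) by Newton-Raphson
    maximum likelihood and return (LD50, slope b).

    Hypothetical helper: logit link used in place of probit; no control-mortality
    correction and no confidence intervals.
    """
    x = [math.log10(d) for d in doses]
    a, b = 0.0, 0.0
    for _ in range(max_iter):
        # Accumulate the score vector (g0, g1) and the information matrix (h00, h01, h11)
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, ni, yi in zip(x, n_exposed, n_dead):
            p = 1.0 / (1.0 + math.exp(-(a + b * xi)))
            resid = yi - ni * p
            w = ni * p * (1.0 - p)
            g0 += resid
            g1 += resid * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        # Newton step: solve (information matrix) * step = score for the 2x2 case
        da = (h11 * g0 - h01 * g1) / det
        db = (h00 * g1 - h01 * g0) / det
        a, b = a + da, b + db
        if abs(da) < tol and abs(db) < tol:
            break
    # LD50 is the dose at which the linear predictor is 0, i.e. P(dead) = 0.5
    return 10 ** (-a / b), b


# Hypothetical data: doses in µg/bee, 20 bees per dose group
doses = [1.0, 10 ** 0.5, 10.0, 10 ** 1.5, 100.0]
ld50, slope = fit_logit_ld50(doses, [20] * 5, [1, 5, 10, 15, 19])
print(f"LD50 ≈ {ld50:.2f} µg/bee, slope = {slope:.2f}")
```

Because the invented mortalities are symmetric around 10 µg/bee on the log scale, the fitted LD50 lands at about 10 µg/bee; real analyses should follow the statistical methods the guideline specifies.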

The Evolving Toolkit: From Traditional Bioassays to NAMs and Omics

Regulatory priorities are catalyzing a transformation in the scientist’s toolkit. While traditional whole-organism tests remain the regulatory gold standard, the demand for faster, cheaper, and more mechanistic data is driving the adoption of advanced tools.

Figure 1: Regulatory and Technological Drivers Reshaping the Ecotoxicity Research Toolkit.

Table 3: The Scientist's Toolkit: Essential Solutions for Regulatory Ecotoxicity Studies

| Tool/Reagent Category | Specific Example | Primary Function in Regulatory Context | Regulatory Driver & Relevance |
|---|---|---|---|
| Standardized Test Organisms | Daphnia magna (Cladocera), Danio rerio (Zebrafish), Eisenia fetida (Earthworm). | Provide reproducible, internationally comparable biological response data for hazard classification and risk assessment. | Mandated by OECD Test Guidelines (e.g., TG 202, TG 236, TG 222). Their use is a prerequisite for Mutual Acceptance of Data (MAD) [21]. |
| Reference Toxicants | Potassium dichromate (for fish/daphnia), Copper sulfate (for algae). | Used to confirm the health and sensitivity of test organisms, ensuring the validity and reliability of each bioassay. | Required by quality assurance sections of OECD TGs. Critical for demonstrating laboratory proficiency during regulatory audits. |
| Omics Analysis Kits | RNA/DNA extraction kits, cDNA synthesis kits, targeted PCR or microarray panels for stress genes. | Enable molecular endpoint collection (transcriptomics) to understand mechanisms of toxicity, as now permitted in updated OECD TGs [21]. | Driven by the need for mechanistic data to support AOP development and NAM validation, as highlighted in ECHA’s 2025 research needs [17]. |
| In Vitro Bioassay Systems | Fish gill cell line assays (e.g., RTgill-W1), estrogen receptor transactivation assays. | Screen for specific toxic effects (e.g., acute fish toxicity, endocrine disruption) without whole animals, aligning with 3Rs. | ECHA identifies developing these for short-term fish toxicity as a key research need to reduce vertebrate testing [17]. |
| High-Throughput Screening (HTS) Platforms | Microfluidic droplet systems, automated imaging plate readers. | Increase testing throughput and reduce cost per sample, enabling testing at environmentally relevant concentrations [15]. | Addresses the market and regulatory need to assess more chemicals and complex mixtures faster, as seen in the $300M high-concentration testing segment [15]. |
| Predictive In Silico Tools | QSAR models, read-across frameworks, PBPK modeling software. | Fill data gaps via non-testing methods, support category formation, and prioritize chemicals for testing. | Central to ECHA’s “Analogical Reasoning” research topic. Their regulatory acceptance is a major focus to reduce animal testing under REACH [17]. |

The integration of omics technologies into updated OECD guidelines (e.g., TG 203, 210, 236) is a pivotal change [21]. It allows researchers to freeze tissue samples from standard tests for later genomic, transcriptomic, or proteomic analysis. This generates deep mechanistic data from the same animals, enhancing the relevance of studies by linking apical endpoints to molecular initiating events, without increasing animal use—directly addressing regulatory goals [17].

Data Evaluation and the Path Toward Global Harmonization

The final step in the regulatory chain is the evaluation of study reliability and relevance. This process is itself guided by regulatory criteria.

Figure 2: Core Regulatory Criteria for Evaluating Ecotoxicity Study Reliability.

The OECD’s Mutual Acceptance of Data (MAD) system is the cornerstone of global harmonization [21]. Under MAD, safety data generated in one member country in accordance with OECD Test Guidelines and Good Laboratory Practice (GLP) must be accepted for assessment by regulators in all other member countries. This avoids duplicate testing, saves governments and industry an estimated €309 million annually, and creates a unified market for testing services. However, challenges remain:

- Fragmentation: Regional regulations like REACH, TSCA, and China’s MEE requirements have unique data triggers and timelines, complicating global product registration [16].

- NAM Integration: While NAMs are a major research focus, their full regulatory acceptance for decision-making is still evolving. ECHA’s 2025 report explicitly notes challenges in using NAMs as “independent information” for classification under CLP rules [17].

- Mixture Assessment: The upcoming Mixture Assessment Factor (MAF) under REACH 2.0 aims to account for combined chemical exposure but will require new testing and evaluation strategies for complex substances [18].

The trajectory of ecotoxicity study evaluation is being actively shaped by several convergent regulatory trends:

- Digital Transformation: The EU’s move toward digital Safety Data Sheets (SDS) and the Digital Product Passport (DPP) is expected to reshape data submission and supply chain communication, increasing transparency and potentially speeding up evaluation [18].

- Focus on Specific Pollutants: Broad restrictions on PFAS (per- and polyfluoroalkyl substances) and heightened scrutiny of polymers and nanomaterials are creating specialized testing and evaluation niches [17] [18].

- Systematic Integration of NAMs: Regulatory agencies are transitioning from merely accepting NAMs to actively defining their strategic use. The goal is building integrated, animal-free chemical hazard assessment systems anchored on in vitro and computational methods [17].

Figure 3: The Regulatory-Driven Evolution of Ecotoxicity Study Evaluation.

For researchers and product developers, the imperative is clear: reliable and relevant studies are those that not only follow the letter of current guidelines but also anticipate these shifts. Investing in mechanistic understanding (via omics), proficiency in in silico tools, and familiarity with digital compliance systems will be essential. The regulatory landscape is evolving from a checklist of tests to a holistic, evidence-driven framework where study evaluation increasingly weighs predictive power and biological plausibility alongside traditional test validity. Success in this environment requires navigating a path defined equally by rigorous science and proactive regulatory intelligence.

Evaluating the reliability and relevance of scientific evidence is a cornerstone of robust environmental risk assessment. Within ecotoxicity research, this evaluation hinges on three interconnected pillars: the internal validity of a study's design and conduct, the rigorous assessment of its risk of bias, and the determination of whether data are truly fit for purpose for a specific regulatory or research question [22]. This framework moves beyond simply accepting published findings, providing researchers, scientists, and drug development professionals with a structured approach to critically appraise evidence. A study may be statistically sound but irrelevant to the ecosystem in question, or it may address a pertinent question but be compromised by systematic errors that invalidate its conclusions [23]. This guide compares key methodologies and tools—from established bias assessment principles like FEAT to modern data fitness frameworks like SPIFD and benchmark datasets like ADORE—that empower professionals to distinguish robust, actionable evidence from potentially misleading results [24] [25] [22].

Internal Validity and Risk of Bias in Ecotoxicology

Internal validity refers to the extent to which a study's design and execution prevent systematic error (bias), ensuring that the observed effects can be reliably attributed to the experimental treatment rather than other factors [24] [23]. In ecotoxicology, where test organisms exhibit inherent biological variability, safeguarding internal validity is particularly challenging. For instance, in avian reproduction studies, intrinsic biological variability and typical lab variation can account for 64.9% to 93.4% of the total variability in responses [26]. This high background "noise" complicates the detection of true treatment signals.

Table 1: Key Variability Factors and Endpoints in Ecotoxicity Studies

| Factor | Description | Impact on Internal Validity & Common Endpoints |
|---|---|---|
| Biological Variability | Natural variation in response among test organisms within a population [26]. | Increases random error, can mask or mimic treatment effects. Affects all endpoints (ECx, LOEC, NOEC). |
| Endpoint Type | The quantitative measure of effect derived from study data [26]. | ECx (e.g., EC50): Derived from dose-response regression, uses all data. LOEC/NOEC: Derived from hypothesis tests comparing each concentration with the control; highly sensitive to test concentration spacing and variability. |
| Study Design & Power | Number of test concentrations, replicates, and organisms [26]. | Underpowered designs (few replicates/treatments) increase the risk of false negatives (Type II error); with few replicates, an atypical control group can also produce spurious (false-positive) treatment effects by chance. |
| Historical Control Data (HCD) | Compiled control data from previous studies under similar conditions [26]. | Provides context for concurrent control results, helping distinguish background variability from treatment effect. Underutilized in ecotoxicology. |

Assessing risk of bias is the practical method for evaluating internal validity. The FEAT principles (Focused, Extensive, Applied, Transparent) provide a framework for this assessment [24] [23]. A review of environmental systematic reviews found that 64% omitted risk of bias assessments entirely, and those that included them often missed key sources of bias [24]. This highlights a critical gap in evidence evaluation practice.

Experimental Protocol: Utilizing Historical Control Data (HCD)

- Objective: To contextualize the results of a concurrent control group within the range of normal historical variability for a specific test species and standardized guideline (e.g., OECD Test Guideline 203 for fish) [26].

- Data Compilation: Assemble control group endpoint data (e.g., survival, reproductive output) from all previous studies conducted in the same laboratory using the same species, strain, and test guideline [26].

- Analysis: Calculate the central tendency (mean, median) and range (min, max, percentiles) of the historical data. Graphically plot the concurrent control result against the historical distribution (e.g., as a time-series or frequency histogram) [26].

- Interpretation: If the concurrent control result falls within the expected historical range, it supports the assumption of a normal test system. A result outside the historical range signals potential issues with the test organisms or conditions, requiring caution in interpreting treatment-related effects against that control [26].
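The analysis and interpretation steps can be sketched with Python's standard library. This is an illustration under assumptions: the helper names are hypothetical, the values are invented control survival percentages, and treating the 5th to 95th percentiles as the "expected historical range" is only one possible choice (min to max, or other percentiles, are also defensible and should be fixed before the concurrent result is examined).

```python
import statistics


def summarize_hcd(historical_values):
    """Central tendency and range of historical control results (hypothetical helper)."""
    values = sorted(historical_values)
    # 19 cut points at 5%, 10%, ..., 95%; "inclusive" treats the values as the full record
    cuts = statistics.quantiles(values, n=20, method="inclusive")
    return {
        "n": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "min": values[0],
        "max": values[-1],
        "p5": cuts[0],
        "p95": cuts[-1],
    }


def control_within_hcd(concurrent_value, summary, lower="p5", upper="p95"):
    """True if the concurrent control result lies inside the chosen historical range."""
    return summary[lower] <= concurrent_value <= summary[upper]


# Invented control survival (%) from ten earlier studies with the same species and guideline
hcd = summarize_hcd([95, 100, 90, 95, 100, 95, 90, 100, 95, 100])
print(hcd)
print(control_within_hcd(95, hcd))  # inside the historical range
print(control_within_hcd(70, hcd))  # outside: interpret treatment comparisons with caution
```

A plot of the same values (for example, a histogram with the concurrent control marked) would complete the graphical check described in the protocol.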

Diagram: Evaluating Ecotoxicity Studies: A Workflow.

Fitness for Purpose: From Data to Decision

Fitness for purpose ensures that a data source or study design is not just reliable, but also relevant and sufficient to answer a specific research or regulatory question [22]. This concept bridges the gap between a study's internal validity and its practical utility. The Structured Process to Identify Fit-For-Purpose Data (SPIFD) framework operationalizes this assessment, guiding users from a defined research question to the selection of appropriate data [22].

Table 2: Comparison of Ecotoxicological Data Sources for Fitness-for-Purpose Assessment

| Data Source | Primary Use Case | Key Strengths | Key Limitations for ML/Fitness |
|---|---|---|---|
| ECOTOX Database (US EPA) | Regulatory hazard assessment, literature data aggregation. | Extensive, public, covers >12,000 chemicals & >14,000 species [25]. | Requires significant curation; can be noisy; variable data quality [25]. |
| ADORE Benchmark Dataset | Developing & benchmarking ML models for acute aquatic toxicity prediction [25]. | Expert-curated, includes chemical & species features, defined train/test splits for reproducibility [25]. | Focused on acute mortality for fish, crustaceans, algae; not for chronic or terrestrial effects [25]. |
| Laboratory-Generated Data (GLP Studies) | Chemical registration, regulatory decision-making. | High internal validity, controlled conditions, compliant with OECD guidelines. | Costly, time-consuming, ethical concerns, may have lower external validity (real-world relevance). |
| Real-World Evidence (RWE) / Monitoring Data | Post-registration environmental monitoring, exposure assessment. | High external validity, reflects complex real-world conditions. | Often lacks control, confounding factors high, data reliability can be variable [22]. |

The SPIFD framework is applied after defining the research question and minimal criteria for a valid study design. It involves a structured, multi-step assessment [22].

Table 3: The SPIFD Framework for Identifying Fit-for-Purpose Data [22]

| SPIFD Step | Core Action | Key Questions for Ecotoxicity |
|---|---|---|
| Step 1 | Operationalize and rank the minimal criteria needed to answer the research question. | Is a specific taxonomic group (e.g., Daphnia magna) required? Which endpoint type is needed (e.g., EC50 vs. NOEC)? |
| Step 2 | Systematically evaluate potential data sources against the ranked criteria. | Does the ECOTOX database have sufficient entries for the chemical class? Does the ADORE dataset contain the required endpoint? |
| Step 3 | Assess operational and logistical feasibility of using the data source. | Is the data format machine-readable? What is the time required to clean and curate the data? |
| Step 4 | Select the optimal data source and transparently document the justification. | Why was a curated benchmark dataset chosen over raw database exports for an ML project? |
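One way to read "evaluate against the ranked criteria" is as a lexicographic comparison: a source that meets a higher-ranked criterion beats any source that fails it, whatever happens on lower-ranked criteria. The sketch below is a hypothetical illustration of that reading, not part of the published SPIFD framework; the helper name, criteria, source names, and yes/no judgments are invented, and each judgment would still need a written justification (Step 4).

```python
def rank_sources(criteria_ranked, sources):
    """Order candidate data sources lexicographically by ranked criteria.

    criteria_ranked: criterion names, most important first (Step 1).
    sources: {source_name: {criterion_name: bool}} judgments (Steps 2 and 3).
    Hypothetical helper: a missing judgment counts as "not met".
    """
    def key(name):
        # Tuple of 0/1 flags in rank order; Python compares tuples lexicographically
        return tuple(int(sources[name].get(c, False)) for c in criteria_ranked)

    return sorted(sources, key=key, reverse=True)


criteria = [
    "covers Daphnia magna",
    "reports EC50",
    "machine-readable",       # Step 3: feasibility
    "curation under 1 week",  # Step 3: feasibility
]
candidates = {
    "raw ECOTOX export": {"covers Daphnia magna": True, "reports EC50": True,
                          "machine-readable": True, "curation under 1 week": False},
    "curated benchmark": {"covers Daphnia magna": True, "reports EC50": True,
                          "machine-readable": True, "curation under 1 week": True},
    "monitoring data": {"covers Daphnia magna": False, "reports EC50": False,
                        "machine-readable": True, "curation under 1 week": True},
}
ranking = rank_sources(criteria, candidates)
print(ranking)
```

The lexicographic rule makes the ranking of criteria decisive, which mirrors Step 1; a weighted score would be an alternative design that lets strong feasibility partly offset a missed scientific criterion.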

Diagram: The SPIFD Framework for Data Identification.

Experimental Protocol: Curating a Benchmark Dataset (ADORE Workflow)

- Source Data Extraction: Download the raw data from the primary source (e.g., the pipe-delimited ASCII files from the US EPA ECOTOX database) [25].

- Filtering by PECO Elements:

- Population: Filter by `ecotox_group` to include only "Fish", "Crusta", or "Algae". Remove entries with missing taxonomic classification [25].

- Exposure & Outcome: For acute toxicity, filter by effect (`MOR`, `ITX`, `GRO`, etc.) and exposure duration (≤96 hours). Focus on standard endpoints like LC50/EC50 [25].

- Comparator: Ensure entries have valid negative control data.

- Data Harmonization: Map diverse chemical identifiers (CAS, DTXSID) to a standard (e.g., InChIKey). Assign canonical SMILES strings for chemical representation [25].

- Curation & Splitting: Remove duplicates and implausible outliers. Create non-random train-test splits based on chemical scaffolds or properties to rigorously test model generalizability and avoid data leakage [25].
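The filtering and splitting steps might look like the following sketch. It assumes records have already been flattened into dictionaries with hypothetical keys (ecotox_group, effect, endpoint, duration_h, inchikey); the real ECOTOX export spreads these fields across several linked tables and uses richer value strings. As a stand-in for a scaffold split, which would need a cheminformatics toolkit such as RDKit, it groups records by InChIKey so that no chemical appears in both the training and test sets.

```python
import hashlib

ACUTE_GROUPS = {"Fish", "Crusta", "Algae"}
ACUTE_EFFECTS = {"MOR", "ITX", "GRO"}
ACUTE_ENDPOINTS = {"LC50", "EC50"}


def filter_acute(records, max_hours=96):
    """Keep acute LC50/EC50 records for the three taxonomic groups (hypothetical keys)."""
    return [
        r for r in records
        if r.get("ecotox_group") in ACUTE_GROUPS
        and r.get("effect") in ACUTE_EFFECTS
        and r.get("endpoint") in ACUTE_ENDPOINTS
        and r.get("duration_h") is not None
        and r["duration_h"] <= max_hours
    ]


def split_by_chemical(records, test_fraction=0.2):
    """Deterministic grouped split: every record of one InChIKey lands in the same set."""
    train, test = [], []
    for r in records:
        digest = hashlib.sha256(r["inchikey"].encode("utf-8")).hexdigest()
        bucket = int(digest[:8], 16) / 0xFFFFFFFF  # pseudo-uniform value in [0, 1]
        (test if bucket < test_fraction else train).append(r)
    return train, test


# Invented records: 30 acute fish LC50 entries for 7 chemicals, plus one 240 h entry
records = [
    {"ecotox_group": "Fish", "effect": "MOR", "endpoint": "LC50",
     "duration_h": 96, "inchikey": f"KEY{i % 7}"}
    for i in range(30)
] + [{"ecotox_group": "Fish", "effect": "MOR", "endpoint": "LC50",
      "duration_h": 240, "inchikey": "KEY0"}]
acute = filter_acute(records)
train, test = split_by_chemical(acute)
print(len(acute), len(train), len(test))
```

Because the split is assigned per chemical, test_fraction controls the approximate share of chemicals, not of records; with few chemicals the realized test share can differ noticeably from the target.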

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials and Resources for Ecotoxicity Study Evaluation

| Item | Function in Evaluation | Example/Standard |
|---|---|---|
| Standard Test Organisms | Provide biologically relevant and consistent response models for toxicity. | Fish: Danio rerio (Zebrafish); Crustacean: Daphnia magna; Algae: Raphidocelis subcapitata [25]. |
| OECD Test Guidelines | Ensure study design reproducibility and baseline internal validity for regulatory acceptance. | OECD TG 203 (Fish Acute Toxicity), OECD TG 202 (Daphnia sp. Acute Immobilization), OECD TG 201 (Algal Growth Inhibition) [25]. |
| Historical Control Data (HCD) Repository | Provides lab-specific background response ranges to contextualize study results [26]. | Internal laboratory databases compiled from GLP studies; not yet standardized across ecotoxicology [26]. |
| Risk of Bias Assessment Tool | Provides a structured checklist to systematically evaluate internal validity (risk of bias) [24] [23]. | Tools based on FEAT principles; domain-specific tools for ecological studies [24]. |
| Curated Benchmark Datasets (e.g., ADORE) | Enable reproducible development, validation, and benchmarking of predictive models (e.g., QSAR, ML) [25]. | ADORE contains acute toxicity data for fish, crustaceans, and algae with chemical and species features [25]. |
| Chemical Identifier Mapping Service | Links chemical records across databases using standard identifiers, crucial for data merging and curation. | US EPA CompTox Chemicals Dashboard (DTXSID), PubChem (CID), International Chemical Identifier (InChIKey) [25]. |

The critical evaluation of ecotoxicity studies demands a multi-faceted approach that rigorously separates signal from noise. Internal validity, assessed through structured risk of bias tools adhering to the FEAT principles, is the non-negotiable foundation for trusting a study's results [24] [23]. However, a valid study on the wrong species or endpoint lacks utility. Therefore, the explicit assessment of fitness for purpose, guided by frameworks like SPIFD and empowered by modern, curated resources like the ADORE dataset, is essential for aligning evidence with decision-making contexts [25] [22]. For researchers and regulators, the integrated application of these concepts—leveraging historical control data to understand variability, transparently appraising bias, and systematically selecting fit-for-purpose data—transforms evidence evaluation from a subjective exercise into a robust, reproducible, and defensible scientific process. This is the cornerstone of constructing reliable knowledge and making informed decisions for environmental protection.

Methodologies in Action: Applying Systematic Frameworks and Predictive Models to Ecotoxicity Data

The foundation of robust ecological risk assessment (ERA) and the development of protective environmental quality standards is high-quality, reliable ecotoxicity data [27]. Regulators and scientists are tasked with deriving Predicted-No-Effect Concentrations (PNECs) and other benchmarks from often vast and inconsistent scientific literature [28]. A persistent challenge has been the lack of a standardized, transparent, and comprehensive method to evaluate the inherent scientific quality, or reliability, of individual studies [27] [28]. Without such a framework, evaluations are frequently subject to expert judgment, which can introduce inconsistency, bias, and a lack of reproducibility into critical regulatory decisions [28].

The need for a fit-for-purpose tool is acute. Existing methods, such as the widely used Klimisch method, have been criticized for being non-specific, lacking detailed criteria for ecotoxicology, and leaving excessive room for interpretation [28]. While other tools like the Criteria for Reporting and Evaluating Ecotoxicity Data (CRED) have emerged, a gap remained for a framework specifically designed to assess Risk of Bias (RoB)—a core component of internal validity—within ecotoxicity studies for toxicity value development [27] [28].

The Ecotoxicological Study Reliability (EcoSR) Framework has been developed to address this critical need [27]. It represents a significant advancement by integrating the classic RoB assessment approach from human health with reliability criteria specific to ecotoxicology, offering a systematic, two-tiered process for appraising study quality [27].

The EcoSR Framework: Methodology and Workflow

The EcoSR Framework is designed as a flexible, systematic tool to enhance the transparency and consistency of ecotoxicity study appraisals [27]. Its primary objective is to evaluate a study's internal validity by assessing its risk of bias, thereby determining its suitability for use in quantitative toxicity value development [27].

Core Two-Tiered Architecture

The framework operates through two sequential tiers, allowing for an efficient screening process followed by a detailed assessment.

Tier 1: Preliminary Screening (Optional). This initial step is a high-level screen to rapidly identify studies with major, critical flaws that would unequivocally exclude them from further use in a quantitative assessment. Criteria may include the absence of a control group, a completely inappropriate test organism or endpoint for the assessment goal, or fatal methodological errors [27].

Tier 2: Full Reliability Assessment. This is the core of the EcoSR Framework. It involves a detailed, criterion-by-criterion appraisal of the study's design, conduct, and reporting. The framework builds upon established RoB assessment principles and integrates key criteria from existing ecotoxicology appraisal methods used by regulatory bodies [27]. Assessors evaluate specific elements related to test design, substance characterization, exposure conditions, statistical analysis, and result reporting.

Experimental Protocol for Applying the EcoSR Framework

The application of the EcoSR Framework follows a standardized protocol to ensure consistency:

- A Priori Customization: Before evaluation begins, the assessment goals are defined, and the framework is tailored if necessary. This ensures the relevance criteria are aligned with the specific regulatory or research question (e.g., assessing chronic toxicity for a freshwater algal species) [27].

- Study Screening (Tier 1): The study abstract and methods section are reviewed against pre-defined exclusion criteria. Studies passing this screen move to Tier 2.

- Full Evaluation (Tier 2): The full study is obtained and reviewed. Each reliability criterion is assessed (e.g., "Was the test substance adequately characterized?" "Were exposure concentrations verified analytically?"). Judgments (e.g., Low/High/Unclear RoB) are made and supported by explicit notes from the study text.

- Overall Judgment & Documentation: An overall reliability judgment (e.g., reliable, unreliable, or reliable with restrictions) is synthesized from the individual criteria assessments. All judgments and justifications are documented in a transparent audit trail.
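The screening, criterion-level judgments, and audit trail described above map naturally onto a small data structure. The sketch below is hypothetical: the class and function names are invented, and the aggregation rule (a High risk of bias on a criterion flagged as critical makes the study unreliable; any other High or Unclear rating downgrades it to "reliable with restrictions") is an illustrative assumption, not the published EcoSR decision logic, which rests on documented expert judgment.

```python
from dataclasses import dataclass
from enum import Enum


class RoB(Enum):
    LOW = "Low"
    UNCLEAR = "Unclear"
    HIGH = "High"


@dataclass
class Judgment:
    criterion: str
    rating: RoB
    critical: bool  # hypothetical flag: a High rating here is disqualifying
    note: str       # supporting excerpt or justification from the study text


def tier1_screen(study, exclusion_checks):
    """Tier 1: names of failed exclusion checks (an empty list means the study passes)."""
    return [name for name, check in exclusion_checks.items() if not check(study)]


def tier2_overall(judgments):
    """Tier 2: hypothetical aggregation of criterion-level RoB judgments."""
    if any(j.rating is RoB.HIGH and j.critical for j in judgments):
        return "unreliable"
    if any(j.rating is not RoB.LOW for j in judgments):
        return "reliable with restrictions"
    return "reliable"


def audit_trail(judgments):
    """One line per criterion, linking each rating to its justification."""
    return [f"{j.criterion}: {j.rating.value} RoB ({j.note})" for j in judgments]


study = {"has_control": True}
checks = {"control group present": lambda s: s["has_control"]}
judgments = [
    Judgment("Test substance characterized", RoB.LOW, True, "purity reported"),
    Judgment("Exposure verified analytically", RoB.UNCLEAR, True,
             "measured concentrations not reported"),
]
print(tier1_screen(study, checks))
print(tier2_overall(judgments))
print("\n".join(audit_trail(judgments)))
```

Keeping the justification text inside each Judgment means the audit trail is generated from the same record that drives the overall call, so the two cannot drift apart.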

The following workflow diagram illustrates this structured evaluation process.

Diagram: Two-Tiered Workflow of the EcoSR Framework for Study Appraisal.

Comparative Analysis of Ecotoxicity Study Appraisal Frameworks

The EcoSR Framework enters a field with existing methodologies for evaluating study quality. The table below provides a comparative analysis of EcoSR against two primary alternatives: the long-established Klimisch method and the more recent CRED evaluation method.

Table 1: Comparison of Key Frameworks for Ecotoxicity Study Appraisal

| Feature | EcoSR Framework | Klimisch Method | CRED Evaluation Method |
|---|---|---|---|
| Primary Focus | Assessing Risk of Bias (RoB) and internal validity for toxicity value development [27]. | General categorization of reliability for regulatory use, often tied to Good Laboratory Practice (GLP) [28]. | Evaluating reliability and relevance for use in hazard identification and risk characterization [28]. |
| Core Methodology | Two-tiered (screening + full assessment). Integrates RoB approach with ecotox-specific criteria [27]. | Four reliability categories (1 = reliable without restriction, 2 = reliable with restrictions, 3 = not reliable, 4 = not assignable) based on broad criteria [28]. | Detailed checklist of 20 reliability and 13 relevance criteria with extensive guidance [28]. |
| Key Strengths | Emphasizes internal validity; systematic RoB assessment; flexible, a priori customization; designed for quantitative benchmark derivation [27]. | Simple, fast, and widely recognized in historical regulatory contexts [28]. | Very comprehensive and transparent; strong focus on relevance; includes reporting recommendations to improve future studies [28]. |
| Noted Limitations | Newer framework with less established track record of regulatory application. | Non-specific, lacks essential criteria, leaves room for interpretation, potential bias towards GLP studies [28]. | Can be time-consuming to apply; may be more detailed than needed for some screening purposes. |
| Regulatory Alignment | Builds on criteria from regulatory body methods; designed to fit various chemical classes [27]. | Historically embedded in several EU frameworks, though criticized [28]. | Developed to improve consistency across and within regulatory frameworks [28]. |
| Outcome | Judgment on reliability/RoB for specific quantitative use. | A single reliability category (1-4). | Separate judgments on reliability and relevance, with detailed documentation. |

Performance Evaluation: Addressing Key Challenges in Ecotoxicology

The EcoSR Framework is designed to address specific, recurrent challenges in interpreting ecotoxicity data. Its structured approach provides tangible benefits in key areas where traditional methods may falter.

Managing Biological Variability and Interpreting Control Data

A fundamental challenge in ecotoxicology is distinguishing a true treatment-related effect from natural biological variability [26]. Sublethal endpoints like reproduction or growth are inherently variable [26]. The EcoSR Framework's rigorous assessment of experimental design and statistical analysis directly addresses this. For instance, it critically appraises whether the study used an adequate number of replicates and appropriate statistical power to detect an effect against background "noise" [27]. This complements the growing advocacy for using Historical Control Data (HCD)—compilations of control group results from past similar studies—to contextualize findings [26]. While HCD helps define the "normal" range of variability, the EcoSR Framework ensures the primary study itself was conducted with sufficient rigor to make such a comparison meaningful.
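The link between replication and the ability to detect an effect against background variability can be made concrete with a rough power calculation. This sketch uses a normal approximation for a two-sided comparison of one treatment with the control, with the effect expressed in standard-deviation units. It is a simplification under stated assumptions: it overstates power at small replicate numbers (a t-based or simulation approach would be more accurate) and ignores the multiplicity adjustment (e.g., Dunnett's test) used when several concentrations are compared with one control. The function name is hypothetical.

```python
from statistics import NormalDist


def approx_power(effect_size_sd, n_per_group, alpha=0.05):
    """Normal-approximation power of a two-sided, two-sample comparison.

    effect_size_sd: true difference between treatment and control means,
    divided by the common standard deviation.
    """
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    # Mean difference divided by the standard error of the difference
    shift = effect_size_sd * (n_per_group / 2) ** 0.5
    # Probability that the test statistic falls in either rejection tail
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


for n in (3, 6, 12, 24):
    print(n, round(approx_power(1.0, n), 2))
```

With a true difference of one standard deviation, power rises steeply as replicates increase, which is why a reliability appraisal checks replication before accepting a "no effect" conclusion such as a high NOEC.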

Evaluating Data from Diverse Test Systems

Modern ecotoxicology utilizes a vast array of tests, from in vitro bioassays and biomarker measurements to whole-organism and complex mesocosm studies [29]. A key strength of the EcoSR Framework is its flexibility and customizability [27]. Its criteria can be adapted to appraise non-standard tests that are increasingly important for understanding sublethal effects and mixture toxicity [29]. This is a significant advantage over methods like Klimisch, which are often criticized for being biased towards standard guideline tests [28]. The framework's emphasis on internal validity principles (e.g., exposure verification, blinding, confounding factors) allows it to be applied across different test levels, from cellular to ecosystem, ensuring reliable data is identified regardless of the test system's complexity.

Table 2: Application of EcoSR Principles to Different Test Types

| Test Type | Key EcoSR Evaluation Focus | Common Reliability Pitfalls Addressed |
|---|---|---|
| In Vitro Bioassay | Substance solubility and stability in medium; verification of nominal concentrations; appropriateness of cell viability controls; specificity of the endpoint measured. | Cytotoxicity interference with specific endpoint; solvent toxicity; inaccurate concentration due to sorption to labware. |
| Whole-Organism Chronic Test | Adequate control performance (e.g., survival, growth); analytical verification of exposure concentrations; randomization of test organisms; appropriateness of statistical model for endpoint (e.g., count, continuous data). | High control variability masking effects; test substance degradation leading to underestimated exposure; pseudo-replication. |
| Mesocosm / Field Study | Characterization of site conditions; documentation of confounding environmental factors; adequacy of sampling design and replication in space/time. | Effects attributable to environmental variables other than the test substance; insufficient statistical power due to low replication. |

Implementing rigorous reliability assessments requires more than a framework. The following table outlines key resources and tools that constitute an essential toolkit for researchers and assessors applying the EcoSR or similar methodologies.

Table 3: Research Reagent Solutions for Ecotoxicity Study Appraisal

| Tool / Resource | Function in Reliability Assessment | Key Features / Examples |
|---|---|---|
| Reporting Checklists (e.g., CRED Recommendations) | Provides a benchmark for what constitutes a well-reported study. Used proactively by researchers or reactively by assessors to identify missing information [28]. | The CRED checklist includes 50 criteria across 6 categories (general info, test design, substance, organism, exposure, statistics) [28]. |
| Chemical Databases & QSAR Tools | Provides supporting data on substance properties and predicted toxicity, aiding in the evaluation of test substance characterization and result plausibility. | ECOSAR: Predicts aquatic toxicity [30]. CompTox Dashboard: Aggregates experimental toxicity data from sources like ToxValDB [31]. Use requires professional judgement on applicability [30] [31]. |
| Historical Control Data (HCD) Repositories | Enables contextualization of control group results from a single study against the background of "normal" laboratory variability [26]. | Can be compiled internally by laboratories or accessed via collaborative initiatives. Critical for interpreting highly variable sublethal endpoints. |
| Statistical Analysis Software | Enables the assessor to independently verify reported statistical analyses or re-analyze data if raw data are available. | Software like R or specialized packages (e.g., drc for dose-response analysis) are essential for checking NOEC/LOEC, ECx values, and confidence intervals. |
| Study Management & Documentation Platforms | Facilitates the transparent and consistent documentation of the appraisal process, linking judgments to text excerpts. | Tools like systematic review software (e.g., CADIMA, Rayyan) or structured spreadsheets are vital for creating the audit trail mandated by frameworks like EcoSR. |
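As an example of independent re-analysis, an assessor who has the treatment means can cross-check a reported ECx by interpolation. The sketch below is a simplified linear-interpolation estimate in the spirit of the U.S. EPA ICp approach, assuming the first entry is the control and that mean responses decline monotonically with concentration; unlike the full ICp procedure it applies no smoothing and gives no bootstrap confidence interval, and it is not a substitute for fitting a dose-response model as the drc package does in R. The function name and data are hypothetical.

```python
def ecx_by_interpolation(concentrations, mean_responses, x_percent):
    """Concentration at which the mean response falls x% below the control.

    Assumes concentrations[0] is the control and responses decline
    monotonically. Returns None if the effect level is not reached
    within the tested range.
    """
    control = mean_responses[0]
    target = control * (1 - x_percent / 100)
    points = list(zip(concentrations, mean_responses))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        # Interpolate linearly within the first interval that brackets the target
        if y_lo >= target >= y_hi and y_lo != y_hi:
            return c_lo + (y_lo - target) / (y_lo - y_hi) * (c_hi - c_lo)
    return None


# Hypothetical growth data: mean biomass (% of control) at nominal concentrations (mg/L)
concs = [0, 1, 2, 4, 8]
means = [100, 95, 80, 50, 20]
print(ecx_by_interpolation(concs, means, 10))  # EC10
print(ecx_by_interpolation(concs, means, 50))  # EC50
```

A large discrepancy between such a quick check and the reported value is a prompt to look more closely at the study's model choice and data handling, not proof of an error.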

The introduction of the EcoSR Framework marks a progressive step towards standardizing and improving the critical appraisal of ecotoxicity studies. By specifically integrating a Risk of Bias assessment with ecotoxicology-specific criteria, it fills a methodological gap between human health assessment tools and the needs of ecological risk assessors [27]. Its development aligns with broader movements in toxicology towards greater transparency, reproducibility, and systematicity in evidence evaluation.

For researchers, adopting the reporting standards implied by frameworks like EcoSR and CRED during study design and publication will increase the regulatory utility and impact of their work [28]. For regulators and risk assessors, applying a structured, transparent tool like EcoSR promotes consistency, reduces subjective bias, and builds defensibility in decisions that rely on the best available science [27] [28]. Ultimately, the widespread adoption of such frameworks will strengthen the scientific foundation of environmental protection measures, from chemical registration under programs like REACH to the derivation of water quality standards worldwide [31] [28]. Future refinement and field-validation of the EcoSR Framework will further solidify its role in advancing reliable ecotoxicological science.

Within the critical task of ecological risk assessment, the reliability and relevance of individual ecotoxicity studies are foundational for developing robust toxicity values and making informed regulatory decisions [10]. The inherent variability of biological test systems, especially for key endpoints like reproduction, makes distinguishing true treatment-related effects from background noise a significant challenge [26]. To ensure conclusions are based on the best available science, a systematic, transparent, and consistent approach to evaluating study quality is essential [27]. This guide details a structured two-tiered framework—comprising a Preliminary Screening (Tier 1) and a Full Reliability Assessment (Tier 2)—designed to appraise the internal validity and risk of bias in ecotoxicological studies.

Various frameworks have been developed to assess the reliability and relevance of (eco)toxicity data. The table below compares key frameworks, highlighting the distinct position of the modern two-tiered approach.

| Framework Name & Primary Scope | Core Methodology | Key Strengths | Primary Limitations | Relation to Tiered Approach |
|---|---|---|---|---|
| Klimisch et al. (1997) Score (Human & Eco) | Assigns studies to four reliability categories (1 = reliable without restriction, 2 = reliable with restrictions, 3 = not reliable, 4 = not assignable) based on standardized guidelines and reporting [10]. | Simple, widely recognized, provides a single score for ranking. | Lack of transparency in scoring; poor separation of reliability and relevance criteria; can be subjective [10]. | Inspired later, more transparent systems. Lacks a formal screening tier. |
| ECETOC (2009) / ECHA (2011) (Eco) | Criteria-based checklist focusing on test methodology, reporting, and data analysis. Results in a reliability category [10]. | More detailed and transparent than Klimisch. Developed for regulatory use. | Primarily designed for data submitted under REACH; may not fully capture biases in all study designs [10]. | Functions as a full assessment. The tiered approach incorporates and expands on such criteria. |
| EFSA (2009) (Eco) | Detailed checklist addressing reliability and relevance separately. Uses a "traffic light" (red/amber/green) system for internal validity criteria [10]. | Clear separation of reliability vs. relevance; visual output highlights specific weaknesses. | Can be complex and time-consuming for all studies; no rapid screening pre-phase [10]. | Its structured checklist is analogous to a comprehensive Tier 2. The tiered approach adds a Tier 1 screening step. |
| Toxicological data Reliability Assessment Tool (ToxRTool) (Human) | Multi-criteria tool with weighted scoring across 20 criteria, generating a percentage reliability score [10]. | Quantitative, reproducible score; reduces subjectivity. | Weightings may not be universally appropriate; primarily for human health studies [10]. | Demonstrates the move towards quantitative scoring, a potential output of Tier 2. |
| EcoSR Framework (Eco) | Two-tiered system: optional Tier 1 (screening) and mandatory Tier 2 (full assessment). Integrates risk of bias appraisal with ecotoxicity-specific criteria [27]. | Promotes efficiency by screening out clearly unreliable studies; transparent, systematic, and tailored to ecotoxicology [27]. | A newer framework requiring broader validation and regulatory uptake. | This is the focal framework of the step-by-step guide below. |
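
To make weighted, percentage-based scoring of the ToxRTool kind more concrete, the sketch below computes the weighted share of fulfilled criteria for one study. It is a minimal illustration assuming a simple additive scheme; the `Criterion` type, the criterion names, and the weights are hypothetical and do not reproduce ToxRTool's actual criteria, weights, or category cut-offs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # hypothetical weight; real tools define their own
    met: bool      # whether the study report satisfies this criterion


def weighted_reliability_score(criteria: list[Criterion]) -> float:
    """Return the weighted share of fulfilled criteria as a percentage (0-100)."""
    total = sum(c.weight for c in criteria)
    if total <= 0:
        raise ValueError("criteria weights must sum to a positive value")
    achieved = sum(c.weight for c in criteria if c.met)
    return 100.0 * achieved / total


# Hypothetical checklist for a single study (names and weights are illustrative only).
study = [
    Criterion("test substance identified", 2.0, True),
    Criterion("concurrent control reported", 2.0, True),
    Criterion("concentrations analytically verified", 1.0, False),
    Criterion("replicates per concentration stated", 1.0, True),
]
print(f"{weighted_reliability_score(study):.1f}%")  # 83.3%
```

Keeping the weights as explicit data rather than burying them in the logic makes them easy to document and adjust, which matters given that no single weighting is universally appropriate.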

The Scientist's Toolkit: Essential Research Reagent Solutions

The consistent application of a reliability assessment framework depends on both methodological tools and reference materials. The following toolkit is essential for conducting robust evaluations.

| Item / Solution | Primary Function in Reliability Assessment | Key Considerations for Use |
|---|---|---|
| OECD Test Guidelines | The international standard for test methodologies (e.g., OECD 201 for algae, OECD 211 for Daphnia magna reproduction). Studies adhering to validated guidelines are typically higher-reliability starting points [26]. | Verify the specific guideline version used and any reported deviations. |
| Historical Control Data (HCD) | A compiled dataset of control group results from previous studies using the same method and species. Critical for contextualizing the "normal" range of variability in the concurrent control [26]. | Must be derived from studies conducted under comparable conditions (e.g., lab, strain, husbandry). Lack of guidance on its use is a current limitation [26]. |
| Statistical Analysis Software | For re-analyzing study data if needed, or for applying specific statistical models (e.g., dose-response modeling for ECx values, survival analysis) [26]. | Understanding the assumptions and appropriateness of the statistical tests used in the original study is a key assessment criterion. |
| Data Extraction & Management Tool | A structured database or spreadsheet to consistently record extracted study details, metrics, and appraisal scores. Ensures transparency and reproducibility of the assessment process. | Should be designed to capture all elements outlined in the Tier 1 and Tier 2 criteria. |
| Reference Toxicity Controls | Data from tests with standard reference substances (e.g., potassium dichromate for fish toxicity). Used to verify the health and sensitivity of the test organisms in the study being appraised. | Absence or failure of reference toxicity controls can indicate systematic test system problems, affecting reliability. |
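
Comparing a concurrent control with historical control data can be sketched as a simple range check. The example below assumes that the "normal" range is the historical mean plus or minus k sample standard deviations (k = 2 by default); that definition, the function name `control_within_hcd`, and the offspring counts are all hypothetical. Because guidance on using HCD is still lacking, other definitions, such as the historical minimum-maximum range, are equally defensible.

```python
import statistics


def control_within_hcd(concurrent_control: float,
                       historical_controls: list[float],
                       k: float = 2.0) -> bool:
    """Return True if the concurrent control lies within mean +/- k*SD of the HCD."""
    if len(historical_controls) < 2:
        raise ValueError("at least two historical control values are needed")
    mu = statistics.mean(historical_controls)
    sd = statistics.stdev(historical_controls)  # sample standard deviation
    return abs(concurrent_control - mu) <= k * sd


# Hypothetical historical control means (offspring per surviving parent, 21-day test).
historical_offspring = [92, 105, 98, 110, 101, 95, 88, 104]
print(control_within_hcd(100, historical_offspring))  # True
print(control_within_hcd(60, historical_offspring))   # False
```

A control that falls outside the band does not by itself invalidate a study; it flags the study for closer scrutiny during the full assessment.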

Step-by-Step: Tier 1 - Preliminary Screening

The objective of Tier 1 is a rapid, binary evaluation to identify studies that are clearly unsuitable for use in a risk assessment, thereby conserving resources for deeper analysis of potentially useful studies [27]. This screening focuses on critical "knock-out" criteria related to fundamental validity.

Experimental Protocol for Tier 1 Screening:

- Define the Assessment Question: Clearly state the ecological receptor, endpoint (e.g., apical mortality, reproduction, growth), and exposure scenario of interest. This question anchors the relevance evaluation, which Tier 1 applies only as a coarse filter and Tier 2 assesses in full [10].

- Extract Basic Study Information: Record the test substance, test organism (species, life stage), study type (acute/chronic), key measured endpoints, and reported outcome (e.g., LC50, NOEC).

- Apply Knock-Out Criteria: Evaluate the study against the following sequential criteria. A "Yes" to any question typically terminates the appraisal and classifies the study as "Unacceptable" for the current assessment purpose.

  - Criterion 1 - Test Substance Identification: Is the chemical identity of the test substance undefined or ambiguous, or is its purity unknown or insufficient (e.g., >20% impurities)? [10]

  - Criterion 2 - Test System Relevance: Was an irrelevant test system used (e.g., a soil invertebrate study for an aquatic exposure assessment)? This is a rapid relevance filter.

  - Criterion 3 - Absence of Control: Is there no concurrent control group reported? A control is mandatory for establishing baseline effects [26].

  - Criterion 4 - Unacceptable Mortality: For chronic studies, did control group mortality exceed the test guideline's validity limit (e.g., >20% parental mortality in the Daphnia magna reproduction test)? [26]

  - Criterion 5 - Critical Methodology Omission: Is there a clear, fatal flaw in methodology that invalidates the endpoint (e.g., no renewal of test solution in a test with a volatile chemical)?

- Decision Point: If the study passes all knock-out criteria, it proceeds to Tier 2 for full reliability assessment. The outcome is documented as "Passed Tier 1 Screening."
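
The sequential knock-out logic of Tier 1 can be sketched as an ordered list of checks in which the first failure ends the appraisal. This is an illustrative sketch only: the `Tier1Record` fields, the 20% impurity threshold (taken from the example in Criterion 1), and the per-study `mortality_limit` are assumptions, and in practice most of these answers come from an assessor reading the study rather than from a structured record.

```python
from dataclasses import dataclass, replace
from typing import Callable, Optional


@dataclass(frozen=True)
class Tier1Record:
    """Hypothetical fields extracted for Tier 1 screening (names are illustrative)."""
    substance_identified: bool
    impurity_fraction: Optional[float]  # None means purity was not reported
    test_system_relevant: bool
    has_concurrent_control: bool
    is_chronic: bool
    control_mortality: Optional[float]  # fraction of control organisms that died; None if unreported
    mortality_limit: float              # guideline validity limit, e.g. 0.20
    fatal_method_flaw: bool             # assessor's judgement of a fatal methodological flaw


# Ordered knock-out checks; each predicate returns True when the study FAILS it.
KNOCK_OUTS: list[tuple[str, Callable[[Tier1Record], bool]]] = [
    ("Criterion 1 - test substance identification",
     lambda s: not s.substance_identified
     or s.impurity_fraction is None
     or s.impurity_fraction > 0.20),
    ("Criterion 2 - test system relevance",
     lambda s: not s.test_system_relevant),
    ("Criterion 3 - absence of control",
     lambda s: not s.has_concurrent_control),
    # Unreported control mortality is not treated as a knock-out; it is left for Tier 2.
    ("Criterion 4 - unacceptable control mortality",
     lambda s: s.is_chronic
     and s.control_mortality is not None
     and s.control_mortality > s.mortality_limit),
    ("Criterion 5 - critical methodology omission",
     lambda s: s.fatal_method_flaw),
]


def tier1_screen(study: Tier1Record) -> tuple[str, Optional[str]]:
    """Apply the knock-outs in order; the first failure terminates the appraisal."""
    for label, fails in KNOCK_OUTS:
        if fails(study):
            return "Unacceptable", label
    return "Passed Tier 1 Screening", None


# Hypothetical chronic study that passes, and a variant with 30% control mortality.
study = Tier1Record(True, 0.02, True, True, True, 0.10, 0.20, False)
print(tier1_screen(study))  # ('Passed Tier 1 Screening', None)
print(tier1_screen(replace(study, control_mortality=0.30)))
# ('Unacceptable', 'Criterion 4 - unacceptable control mortality')
```

Returning the label of the failed criterion, not just a pass/fail flag, keeps the reason for exclusion on record, which supports the transparency the framework aims for.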

Tier 1 Preliminary Screening Workflow

Step-by-Step: Tier 2 - Full Reliability Assessment

Tier 2 is a comprehensive, criteria-based assessment of a study's internal validity and risk of bias (RoB). It moves beyond simple checklists to evaluate how methodological choices might systematically skew the results [27].

Experimental Protocol for Tier 2 Assessment:

- Preparatory Customization: Before assessment, tailor the framework's criteria and their weighting based on the specific assessment goal (e.g., prioritizing certain endpoints like reproduction) and chemical class [27].

- Detailed Data Extraction: Systematically extract detailed information from the study report into a pre-defined template. Key domains include:

  - Test Substance & System: Concentration verification, solvent/vehicle use and controls.

  - Test Organisms: Source, acclimation, age/size, feeding.

  - Experimental Design: Number of concentrations, replicates, randomization, blinding.

  - Exposure Regime: Duration, medium, renewal, measured concentrations.

  - Endpoint Measurement: Methodology, timing, and definition (e.g., how was immobility defined?).

  - Statistical Methods & Data Reporting: Appropriateness of tests, raw data availability.

- Risk of Bias Appraisal per Domain: For each extracted domain, judge the potential for bias. The EcoSR framework builds on classic RoB approaches, asking: "Could this methodological aspect have caused a systematic deviation from the true effect?" [27] Rate each as "Low," "Medium," or "High" RoB, providing explicit justification.

  - Example - Selection Bias (Randomization): "Organisms were non-randomly assigned to test vessels." → High RoB.

  - Example - Detection Bias (Blinding of Outcome Assessment): "Endpoint assessor was aware of treatment groups." → Medium RoB.

  - Example - Attrition Bias (Missing Data): "Two replicates from the high-dose group were excluded due to aeration failure, not related to toxicity." → High RoB.

- Contextualization with Historical Data: Compare the study's control group response to HCD. If the control is an extreme outlier (e.g., extremely low reproduction), it may indicate an underlying problem, increasing the RoB for the entire study [26].

- Overall Reliability Grading & Documentation: Synthesize domain-specific RoB ratings into an overall reliability grade (e.g., High, Medium, Low). This grade should reflect confidence that the study's results are free from systematic error under its experimental conditions. Document all judgments transparently.
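
One way to synthesize domain ratings is a "worst domain" rule, similar in spirit to tools such as Cochrane RoB 2, where the most serious domain rating drives the overall judgement. The sketch below assumes that rule together with a hypothetical mapping (Low RoB to High reliability, Medium to Medium, High RoB to Low reliability); EcoSR itself may combine domains differently or rely on documented expert judgement. The `RoB`, `DomainJudgement`, and `overall_reliability` names are illustrative.

```python
from dataclasses import dataclass
from enum import IntEnum


class RoB(IntEnum):
    # Ordered so that max() returns the most serious rating.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class DomainJudgement:
    rating: RoB
    justification: str  # explicit reason recorded for transparency


# Hypothetical mapping from the worst domain rating to an overall reliability grade.
GRADE_FOR_WORST_ROB = {RoB.LOW: "High", RoB.MEDIUM: "Medium", RoB.HIGH: "Low"}


def overall_reliability(judgements: dict[str, DomainJudgement]) -> tuple[str, list[str]]:
    """Return the overall grade and the domain(s) whose rating determined it."""
    if not judgements:
        raise ValueError("at least one domain judgement is required")
    worst = max(j.rating for j in judgements.values())
    drivers = [name for name, j in judgements.items() if j.rating == worst]
    return GRADE_FOR_WORST_ROB[worst], drivers


judgements = {
    "randomization": DomainJudgement(RoB.HIGH, "Organisms were non-randomly assigned to test vessels."),
    "blinding": DomainJudgement(RoB.MEDIUM, "Endpoint assessor was aware of treatment groups."),
    "exposure regime": DomainJudgement(RoB.LOW, "Hypothetical: concentrations analytically verified."),
}
print(overall_reliability(judgements))  # ('Low', ['randomization'])
```

Pairing every rating with its justification, and reporting which domains drove the final grade, preserves the transparent record that the protocol asks assessors to keep.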

Tier 2 Full Reliability Assessment Process

A study that successfully navigates both tiers receives a final reliability grade and a clear statement of its relevance to the specific assessment question [10]. This output is the essential input for a Weight-of-Evidence (WoE) analysis, where multiple studies are combined. A highly reliable study will carry more weight than a less reliable one. The transparent documentation from this process allows risk managers and other scientists to understand the basis for inclusion or weighting of each data point, leading to more robust and defensible ecological risk assessments and toxicity value development [27].
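
As one hypothetical illustration of how reliability grades might translate into weights, the sketch below combines toxicity values from several studies as a reliability-weighted geometric mean; a geometric mean is used because toxicity values often span orders of magnitude. The weights (1.0, 0.5, 0.0) and the function name are assumptions for demonstration only. Many WoE analyses are qualitative, and any quantitative scheme would need its own justification and documentation.

```python
import math

# Hypothetical weights per reliability grade; a real scheme must be justified.
RELIABILITY_WEIGHTS = {"High": 1.0, "Medium": 0.5, "Low": 0.0}


def weighted_geometric_mean(results: list[tuple[float, str]]) -> float:
    """Combine (toxicity value, reliability grade) pairs as a weighted geometric mean."""
    log_sum = 0.0
    weight_sum = 0.0
    for value, grade in results:
        if value <= 0:
            raise ValueError("toxicity values must be positive")
        weight = RELIABILITY_WEIGHTS[grade]
        log_sum += weight * math.log(value)
        weight_sum += weight
    if weight_sum == 0:
        raise ValueError("no study carries a positive weight")
    return math.exp(log_sum / weight_sum)


# Hypothetical EC50 values (mg/L); the Low-reliability study receives zero weight here.
ec50s = [(1.2, "High"), (0.8, "High"), (5.0, "Low")]
print(round(weighted_geometric_mean(ec50s), 3))  # 0.98
```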

The two-tiered EcoSR framework addresses a critical gap by providing a structured, ecotoxicology-focused tool that promotes efficiency and transparency [27]. Its systematic application helps researchers and regulators distinguish true chemical effects from the natural variability inherent in biological test systems [26], ultimately supporting more scientifically sound and ethical decision-making in chemical safety evaluation.

The reliability and relevance of traditional ecotoxicity studies are increasingly scrutinized due to their time-consuming nature, high cost, ethical constraints, and challenges in cross-species extrapolation [32]. Within this context, computational toxicology has emerged as a transformative field, offering tools to predict chemical hazards while aligning with global regulatory pushes to reduce animal testing [33] [34]. At the core of this shift are Quantitative Structure-Activity Relationship (QSAR) models and advanced machine learning (ML) algorithms. These in silico methods do not merely serve as alternatives but provide a framework for enhancing the reliability of ecotoxicological assessments by enabling rapid screening, mechanistic insight, and data gap filling for thousands of untested chemicals [33] [35]. This comparison guide objectively evaluates the performance of foundational QSAR approaches against modern ML and deep learning (DL) alternatives, providing researchers with a clear analysis of their predictive power, applicability, and limitations in the pursuit of more reliable and relevant environmental safety science.

Performance Comparison of Modeling Approaches

The predictive landscape in computational toxicology features a hierarchy of models, from traditional regression-based QSAR to sophisticated graph neural networks. Their performance varies significantly based on the endpoint, data quality, and biological complexity.

Table 1: Performance Comparison of QSAR, q-RASAR, and Traditional ML Models

| Model Type | Typical Algorithms | Key Advantage | Reported Performance (Example) | Major Limitation |
|---|---|---|---|---|
| Traditional QSAR | Multiple Linear Regression (MLR) | Interpretability, compliance with OECD principles. | For trout toxicity: R² ~0.71-0.76 [33] | Limited ability to capture complex non-linear relationships. |
| q-RASAR | MLR with similarity descriptors | Higher accuracy than QSAR by integrating read-across. | For trout toxicity: R² ~0.81-0.87, lower error [33] | Performance depends on the quality and density of the training set. |
| Classical Machine Learning | Random Forest (RF), Support Vector Machine (SVM), XGBoost | Handles non-linear data; good general performance. | For reproductive toxicity: RF AUC ~0.85-0.89 [36] | Dependent on manual feature engineering; descriptors may not capture full structural context. |
| Deep Learning (Graph-Based) | GCN, GAT, MPNN, CMPNN | Automatic feature learning from molecular structure. | Best for ecotoxicity: GCN AUC 0.982-0.992 [32]; CMPNN for reprotox AUC 0.946 [36] | "Black-box" nature; requires large datasets and computational resources. |
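
The R² and AUC values reported in Table 1 are standard metrics. The sketch below shows how each is computed from predictions: the coefficient of determination for regression, and the rank-based (Mann-Whitney) formulation of ROC AUC for classification. It is a plain-Python illustration, not the evaluation code of the cited studies, which may have used different data splits or metric variants (for example, cross-validated Q² rather than test-set R²).

```python
def r_squared(y_true: list[float], y_pred: list[float]) -> float:
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    if len(y_true) != len(y_pred) or len(y_true) < 2:
        raise ValueError("need two or more paired observations")
    mean = sum(y_true) / len(y_true)
    ss_tot = sum((y - mean) ** 2 for y in y_true)
    ss_res = sum((y - p) ** 2 for y, p in zip(y_true, y_pred))
    return 1.0 - ss_res / ss_tot


def roc_auc(labels: list[int], scores: list[float]) -> float:
    """ROC AUC as the probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)
    return wins / (len(pos) * len(neg))


print(r_squared([1.0, 2.0, 3.0], [2.0, 2.0, 2.0]))  # 0.0
print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))  # 0.75
```

The pairwise AUC loop scales with the product of class sizes and is written for readability; library functions such as scikit-learn's `roc_auc_score` and `r2_score` compute the same quantities more efficiently.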

Table 2: Cross-Species and Cross-Endpoint Predictive Performance

| Prediction Scenario | Model Strategy | Reported Performance | Key Insight |
|---|---|---|---|
| Single-Species (e.g., Fish) | Graph Convolutional Network (GCN) | High AUC (0.982 - 0.992) [32] | Excellent performance when training and testing within the same species. |
| Cross-Species (Train on Algae/Crustacean, Predict for Fish) | GCN/Graph Attention Network (GAT) | AUC reduced by ~17% [32] | Significant performance drop highlights species-specific toxicodynamic differences. |
| Cross-Species for Unseen Chemicals | Deep Neural Network (DNN) | Moderate AUC (0.821) [32] | More challenging but valuable for prioritizing chemicals with no analogous test data. |