A Comprehensive Guide to Meta-Analysis Techniques for Ecotoxicity Data: From Methodology to Validation

Abstract

This article provides a systematic guide to meta-analysis techniques for synthesizing ecotoxicity data, tailored for researchers, scientists, and drug development professionals. It covers the foundational role of meta-analysis in quantifying environmental risks and informing policy, as evidenced by its application to pollutants like organochlorine pesticides and microplastics[citation:3][citation:4]. The methodological core details the step-by-step process, including systematic review protocols (PRISMA), statistical models for effect size calculation, and the use of tools like Meta-Mar[citation:2][citation:6]. It addresses critical challenges such as managing data heterogeneity, assessing publication bias, and validating results against measured concentrations[citation:3][citation:9]. Furthermore, the guide explores advanced integrations with machine learning for toxicity prediction and frameworks for the critical appraisal of methodological quality[citation:1][citation:3]. The conclusion synthesizes key takeaways and outlines future directions, including the need for standardized data and method validation to enhance reliability in biomedical and environmental risk assessment.

The Power and Purpose of Meta-Analysis in Ecotoxicology

This document provides detailed application notes and protocols for conducting a meta-analysis within the specialized field of ecotoxicity research. As a core quantitative synthesis methodology, meta-analysis transcends narrative reviews by statistically aggregating results from multiple independent studies. It is particularly vital for evaluating complex environmental stressors—such as biodegradable microplastics (BMPs) and combined stressors like temperature and microplastics—where individual study outcomes may be variable or seemingly contradictory [1] [2].

Framed within a broader thesis on advanced evidence synthesis for ecological risk assessment, these protocols address the urgent need for robust, transparent, and reproducible methods. The objective is to move from qualitative summaries to quantitative, evidence-based conclusions that can inform regulatory frameworks, identify critical knowledge gaps, and guide the design of safer materials [1]. The following sections detail a standardized workflow, from protocol registration to advanced visualization, equipping researchers with the tools to generate high-quality, defensible synthetic evidence.

Detailed Methodological Protocols

The execution of a rigorous meta-analysis follows a staged, pre-defined protocol. Adherence to this structured process minimizes bias, enhances reproducibility, and ensures the synthesis addresses a clear research question [3].

Protocol Development and Registration

Before any data collection, a detailed protocol must be drafted and registered in a public repository. This commits the research plan to writing, reducing the risk of selective reporting.

- Key Elements: The protocol should include the rationale, explicit eligibility criteria, planned search strategies, data extraction variables, and intended statistical synthesis methods [4].

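- Registration Platforms: PROSPERO is recommended for environmental health topics that include a human-health outcome. Reviews with purely ecological outcomes, which PROSPERO does not accept, can be registered on the Open Science Framework (OSF). Registration provides a time-stamped record, promotes transparency, and helps avoid duplication of effort [3] [4].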

Formulating the Research Question and Eligibility Criteria

A focused research question is the foundation of a successful meta-analysis. The PICO framework (Population, Intervention/Exposure, Comparator, Outcome) is adapted for ecotoxicity research, where it is often written as PECO, with Exposure in place of Intervention [3].

- Population (P): The biological subject (e.g., aquatic invertebrates, freshwater fish).

- Intervention/Exposure (I): The environmental stressor (e.g., exposure to polybutylene succinate (PBS) microplastics, combined exposure to microplastics and elevated temperature) [1] [2].

- Comparator (C): The control condition (e.g., organisms not exposed to the stressor).

- Outcome (O): The measured biological endpoint (e.g., oxidative stress, growth rate, mortality, reproductive output) [1] [2].

Example: "In freshwater invertebrates (P), does exposure to biodegradable microplastics (I) compared to no exposure (C) significantly affect growth, reproduction, and mortality (O)?" [1] [2].

Eligibility criteria (inclusion/exclusion) must be defined a priori. For example, a protocol may include only peer-reviewed, experimental studies published in English after 2014 that report means, standard deviations, and sample sizes for both control and exposed groups [2].

Systematic Literature Search and Screening

A comprehensive, reproducible search is critical to capture all relevant evidence.

- Search Strategy: Develop a strategy using Boolean operators (AND, OR). Combine terms for the exposure (e.g., "biodegradable microplastic", "polyhydroxyalkanoate") and population (e.g., "aquatic organism", "Daphnia magna") across multiple databases (e.g., Web of Science, Scopus) [1] [3] [2]. Use free-text keywords and, where a database supports it, controlled vocabulary (e.g., MeSH terms in PubMed).

- Screening Process: Document screening with the PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flow diagram, which records how many records are retained and excluded at each stage. Screening should be conducted in duplicate by independent reviewers to minimize error and bias [3]. The process involves:

- Removing duplicates.

- Screening titles and abstracts against eligibility criteria.

- Assessing the full text of potentially relevant studies.

- Data Extraction: Design a standardized form to extract relevant data from included studies. Key items include: study author/year, species, exposure characteristics (polymer type, size, concentration), control conditions, outcome data (mean, SD, sample size), and exposure duration. Extraction should also be performed in duplicate [3].
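To keep the search reproducible, the Boolean string can be generated from term lists rather than typed by hand, and exported records can be de-duplicated before screening. The following is a minimal Python sketch, assuming hypothetical helper names (build_query, remove_duplicates) and records exported as dictionaries with a "title" key. Database-specific field tags (for example TS= in Web of Science or TITLE-ABS-KEY() in Scopus) are not added and would have to be wrapped around the string for each database.

```python
import re

def or_group(terms):
    """Quote each synonym, join with OR, and wrap the group in parentheses."""
    return "(" + " OR ".join(f'"{t}"' for t in terms) + ")"

def build_query(*concept_groups):
    """Combine concept groups (e.g., exposure terms, population terms) with AND."""
    return " AND ".join(or_group(group) for group in concept_groups)

def normalize_title(title):
    """Lower-case a title and drop punctuation/whitespace so near-identical records match."""
    return re.sub(r"[^a-z0-9]", "", title.lower())

def remove_duplicates(records):
    """Keep the first record for each normalized title (hypothetical record format)."""
    seen, unique = set(), []
    for rec in records:
        key = normalize_title(rec["title"])
        if key not in seen:
            seen.add(key)
            unique.append(rec)
    return unique

exposure = ["biodegradable microplastic", "polyhydroxyalkanoate"]
population = ["aquatic organism", "Daphnia magna"]
print(build_query(exposure, population))
# ("biodegradable microplastic" OR "polyhydroxyalkanoate") AND ("aquatic organism" OR "Daphnia magna")
```

Matching on titles alone is crude; where DOIs are exported, de-duplicating on the DOI first is usually more reliable.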

Quantitative Data Synthesis and Analysis

This is the core statistical component of the meta-analysis.

- Effect Size Calculation: The standardized mean difference (e.g., Hedges' g) is commonly used in ecotoxicology to compare continuous outcomes (e.g., growth, enzyme activity) between exposed and control groups across studies with different measurement scales [1]. Hedges' g includes a correction for small sample bias.

- Statistical Model Selection: A random-effects model is typically appropriate for ecological data, as it assumes the true effect size varies between studies due to differences in species, experimental conditions, etc. The model estimates both the overall mean effect and the variance of effects across studies (tau²) [1].

- Heterogeneity Assessment: Quantify the inconsistency of effect sizes across studies using the I² statistic. I² values of 25%, 50%, and 75% are often interpreted as low, moderate, and high heterogeneity, respectively [1]. High I² indicates a need to explore sources of variation.

- Subgroup Analysis & Meta-Regression: To investigate heterogeneity, pre-specified subgroup analyses (e.g., by polymer type: PLA vs. PHB; or by taxonomic group) or meta-regressions (using continuous moderators like particle size or exposure concentration) can be conducted [1].

- Sensitivity Analysis and Publication Bias: Assess the robustness of findings by sequentially removing each study. Evaluate potential publication bias using funnel plots and statistical tests like Egger's test [1].
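The effect-size, pooling, and heterogeneity steps above can be sketched in a few lines of Python. This is a minimal illustration, not the method of any cited study: it assumes one independent effect size per study and uses the DerSimonian-Laird estimator of tau², which is only one of several estimators (R's metafor package, via escalc() and rma(), defaults to REML). The function names hedges_g and random_effects and the study values are hypothetical.

```python
import math

def hedges_g(m_exp, sd_exp, n_exp, m_ctl, sd_ctl, n_ctl):
    """Hedges' g (exposed minus control) and its approximate sampling variance."""
    df = n_exp + n_ctl - 2
    sd_pooled = math.sqrt(((n_exp - 1) * sd_exp**2 + (n_ctl - 1) * sd_ctl**2) / df)
    d = (m_exp - m_ctl) / sd_pooled                  # Cohen's d
    j = 1 - 3 / (4 * df - 1)                         # small-sample correction factor
    var_d = (n_exp + n_ctl) / (n_exp * n_ctl) + d**2 / (2 * (n_exp + n_ctl))
    return j * d, j**2 * var_d

def random_effects(effects, variances, z=1.96):
    """DerSimonian-Laird random-effects pooling with Cochran's Q, tau^2 and I^2."""
    w = [1 / v for v in variances]                   # inverse-variance (fixed-effect) weights
    sum_w = sum(w)
    fixed = sum(wi * y for wi, y in zip(w, effects)) / sum_w
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, effects))
    df = len(effects) - 1
    c = sum_w - sum(wi**2 for wi in w) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0  # between-study variance
    w_re = [1 / (v + tau2) for v in variances]       # random-effects weights
    estimate = sum(wi * y for wi, y in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {"estimate": estimate, "ci": (estimate - z * se, estimate + z * se),
            "tau2": tau2, "Q": q, "I2": i2}

# Hypothetical studies: (mean, SD, n) for the exposed group, then for the control group
studies = [(8.0, 2.0, 10, 10.0, 2.0, 10),
           (5.1, 1.5, 8, 6.0, 1.4, 8),
           (12.0, 3.0, 12, 12.5, 3.2, 12)]
pairs = [hedges_g(*s) for s in studies]
print(random_effects([g for g, _ in pairs], [v for _, v in pairs]))
```

When studies contribute several effect sizes each (as in the 717 effect sizes from 28 studies in [1]), the independence assumption fails and a multilevel model is more appropriate than this simple pooling.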

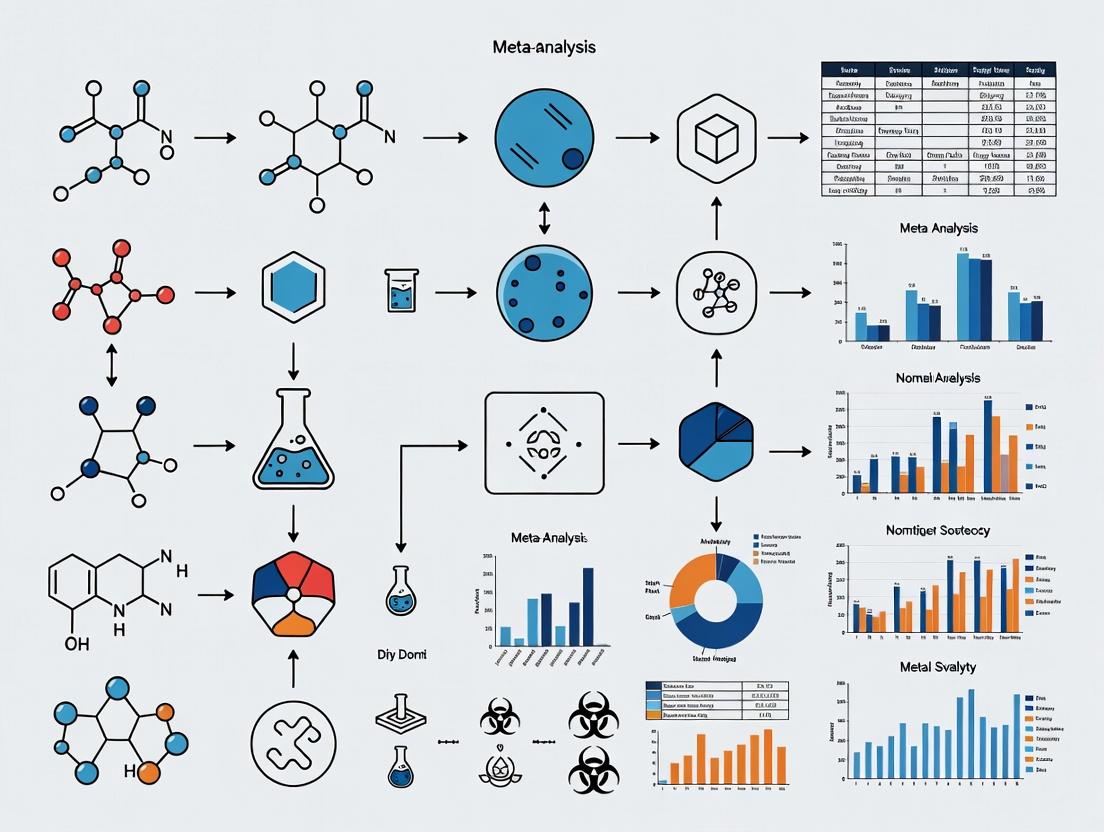

The following workflow diagram summarizes this multi-stage protocol:

Application in Ecotoxicity: Quantitative Data Synthesis

The following tables synthesize hypothetical quantitative findings based on the patterns observed in recent ecotoxicological meta-analyses [1] [2]. They demonstrate how meta-analysis clarifies overall effect trends and identifies key moderating variables.

Table 1: Overall Ecotoxicological Effects of Biodegradable Microplastics (BMPs) on Aquatic Organisms [1]

| Biological Endpoint | Number of Effect Sizes (k) | Pooled Hedges' g (95% CI) | Interpretation | Heterogeneity (I²) |
|---|---|---|---|---|
| Oxidative Stress | 206 | 0.645 (0.421, 0.869) | Significant Increase | 78.5% |
| Behavioral Alteration | 158 | -2.358 (-3.101, -1.615) | Significant Impairment | 85.2% |
| Reproductive Output | 142 | -1.821 (-2.344, -1.298) | Significant Inhibition | 81.7% |
| Growth | 125 | -0.864 (-1.201, -0.527) | Significant Inhibition | 76.3% |
| Survival/Mortality | 86 | -0.312 (-0.705, 0.081) | Non-Significant Effect | 72.9% |

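Note: For behavior, reproduction, growth, and survival, a negative Hedges' g indicates a harmful effect (a reduction relative to controls); for oxidative stress, a positive g (an increase) indicates harm.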

Table 2: Subgroup Analysis of BMP Effects by Polymer Type [1]

| Polymer Type | Primary Affected Endpoint(s) | Magnitude of Effect | Key Notes |
|---|---|---|---|
| PBS (Polybutylene Succinate) | Growth, Behavior | High | Consistently shows negative impacts. |
| PHB (Polyhydroxybutyrate) | Reproduction, Survival | High to Moderate | Associated with significant reproductive toxicity. |
| PLA (Polylactic Acid) | Variable | Low to Moderate | Toxicity is strongly size-dependent; less evident at environmentally relevant concentrations. |

Visualization of Meta-Analytic Data

Effective visualization is crucial for interpreting and communicating complex meta-analytic results. Advanced plots move beyond basic forest and funnel plots.

Table 3: Advanced Visualization Tools for Meta-Analysis [5]

| Plot Type | Primary Purpose | Application in Ecotoxicology |
|---|---|---|
| Rainforest Plot | Enhances traditional forest plots by visually weighting study contributions and highlighting subgroups. | Display effect sizes for different species or polymer types, with point size reflecting study weight [1] [5]. |
| GOSH Plot | Diagnoses heterogeneity and identifies outlier studies by plotting effect sizes from all possible study subsets. | Explore if a specific cluster of studies (e.g., those using a particular test species) drives the overall effect [5]. |
| Network Plot | Visualizes the comparisons between different exposures (treatments) in a network meta-analysis. | Map and compare the relative toxicity of multiple plastic types (e.g., conventional PE vs. various BMPs) when direct comparisons are lacking. |
| Interactive Dashboard (e.g., Shiny App) | Allows users to dynamically explore data by filtering subgroups or adjusting model parameters. | Enable stakeholders to interrogate results, e.g., to see the effect of microplastics specifically on fish at different temperatures [5] [2]. |
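The GOSH idea in the table above, refitting the model to many subsets of studies and plotting each subset's pooled effect against its I², can be expressed directly. This is a minimal Python sketch with hypothetical function names; for simplicity it fits an inverse-variance fixed-effect model to each subset. The number of subsets with at least two studies is 2^k - k - 1, so for larger k a random sample of subsets is needed (R's metafor provides a gosh() function for this purpose).

```python
from itertools import combinations

def fixed_effect_and_i2(effects, variances):
    """Inverse-variance pooled estimate and I^2 (in %) for one subset of studies."""
    w = [1 / v for v in variances]
    est = sum(wi * y for wi, y in zip(w, effects)) / sum(w)
    q = sum(wi * (y - est) ** 2 for wi, y in zip(w, effects))
    df = len(effects) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return est, i2

def gosh_points(effects, variances, min_size=2):
    """Return (study indices, pooled estimate, I^2) for every subset of size >= min_size."""
    k = len(effects)
    points = []
    for size in range(min_size, k + 1):
        for idx in combinations(range(k), size):
            est, i2 = fixed_effect_and_i2([effects[i] for i in idx],
                                          [variances[i] for i in idx])
            points.append((idx, est, i2))
    return points
```

Scattering the estimate against I² for all points (with any plotting library) gives the GOSH plot; distinct clusters point to the studies whose inclusion drives heterogeneity.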

The following diagram illustrates the logical relationship between different visualizations and their role in the analytical process:

Diagram Specifications for Accessibility

All visualizations must adhere to accessibility standards to ensure information is perceivable by all users [6] [7] [8].

- Color Contrast: Use the specified palette (#4285F4, #EA4335, #FBBC05, #34A853, #FFFFFF, #F1F3F4, #202124, #5F6368). For any text within a diagram element, explicitly set a fontcolor that provides sufficient contrast against the node's fillcolor [6].

- WCAG Compliance: Aim for a minimum contrast ratio of 4.5:1 for normal text and 3:1 for large-scale text or graphical elements against their background [7] [8].

- Max Width: Diagrams should be rendered at a maximum width of 760px for optimal readability.
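The contrast requirement can be checked before rendering using the WCAG definitions of relative luminance and contrast ratio. This is a minimal Python sketch; the function names are hypothetical, while the luminance coefficients, the sRGB linearization, and the (L1 + 0.05) / (L2 + 0.05) ratio follow the WCAG 2.x definitions.

```python
def relative_luminance(hex_color):
    """WCAG 2.x relative luminance of an sRGB colour given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    channels = []
    for i in (0, 2, 4):
        c = int(h[i:i + 2], 16) / 255
        # Linearize the gamma-encoded sRGB channel
        channels.append(c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4)
    r, g, b = channels
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """Contrast ratio between two colours, from 1:1 up to 21:1."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

def passes(fg, bg, large=False):
    """True if the pair meets 4.5:1 (normal text) or 3:1 (large text/graphics)."""
    return contrast_ratio(fg, bg) >= (3.0 if large else 4.5)

# Pick the better of the palette's dark and white text colours for each fill colour
palette = ["#4285F4", "#EA4335", "#FBBC05", "#34A853", "#FFFFFF", "#F1F3F4", "#202124", "#5F6368"]
for fill in palette:
    text = max(("#202124", "#FFFFFF"), key=lambda t: contrast_ratio(t, fill))
    print(fill, text, round(contrast_ratio(text, fill), 2), passes(text, fill))
```

For example, white text on #FBBC05 falls well short of 4.5:1, whereas #202124 on the same fill passes comfortably.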

The Scientist's Toolkit: Essential Research Reagents & Software

Table 4: Key Reagent Solutions and Software for Ecotoxicology Meta-Analysis

| Item Name | Category | Function/Benefit | Example/Note |
|---|---|---|---|
| PRISMA 2020 Checklist | Reporting Guideline | Ensures transparent and complete reporting of the systematic review/meta-analysis [3]. | Critical for manuscript preparation and peer review. |
| Screening Software (e.g., Rayyan, Covidence) | Screening Tool | Manages citations, facilitates blinded duplicate screening, and resolves conflicts [3]. | Rayyan is a free, web-based tool ideal for collaborative screening. |
| Meta-Analysis Statistical Software (e.g., R metafor, Meta-Mar) | Analysis Software | Performs all statistical calculations: effect size pooling, heterogeneity estimation, meta-regression, and generates plots [9]. | Meta-Mar is a free, online platform with an AI assistant, suitable for users without advanced coding skills [9]. |
| Interactive Visualization Library (e.g., R shiny, D3.js) | Communication Tool | Creates dynamic, web-based dashboards for exploring meta-analytic data interactively [5]. | Allows stakeholders to filter results by species, stressor, or endpoint. |
| Digital Object Identifier (DOI) for Protocol | Registration | Provides a permanent, citable link to the pre-registered protocol, ensuring transparency and priority [4]. | Obtainable from registries like PROSPERO or the Open Science Framework. |

The Critical Role of Meta-Analysis in Ecotoxicology and Regulatory Science

Meta-analysis provides a quantitative framework for synthesizing evidence across disparate ecotoxicological studies, transforming subjective narrative reviews into objective, statistically robust conclusions. In regulatory science, this methodology is critical for hazard identification and risk assessment, offering a transparent means to evaluate whether an entire body of evidence indicates a chemical poses a threat to environmental or human health [10]. The transition from qualitative, weight-of-evidence approaches to quantitative evidence integration addresses key challenges in ecotoxicology, including high data heterogeneity, ostensibly discordant study results, and the need to inform policy with consolidated scientific evidence [10] [11].

This document provides detailed application notes and protocols, framing meta-analysis as an indispensable technique within a broader thesis on ecotoxicity data research. It is designed for researchers, scientists, and drug development professionals seeking to implement rigorous evidence synthesis for environmental safety assessments.

Core Components of Ecotoxicological Meta-Analysis

A robust meta-analysis in ecotoxicology involves a multi-stage process designed to minimize bias and maximize transparency. The core components are systematically applied to convert fragmented research into actionable insight.

1. Systematic Review & Data Harmonization: The foundation is a comprehensive, protocol-driven literature search (e.g., following PRISMA guidelines) [1]. Data from eligible studies are extracted and harmonized, which often requires converting diverse reported outcomes (e.g., mortality, growth inhibition, enzyme activity) into a common effect size metric, such as Hedges' g or the log response ratio [1].

2. Statistical Synthesis & Heterogeneity Assessment: Effect sizes are pooled using statistical models. The random-effects model is typically preferred in ecological contexts as it accounts for both within-study variance and the true variability in effect sizes between studies (heterogeneity) [1]. The degree of heterogeneity (e.g., quantified by I²) is critically assessed; high values signal that the overall effect may not be generalizable and necessitate investigation into underlying drivers [10].

3. Subgroup Analysis & Meta-Regression: To explain heterogeneity and extract more nuanced conclusions, analysts employ subgroup analyses (e.g., by chemical class, species, or exposure duration) and meta-regression. Meta-regression statistically explores whether continuous or categorical study-level covariates (e.g., chemical concentration, particle size, exposure time) significantly influence the observed effect size [10] [1].

4. Sensitivity Analysis & Bias Evaluation: The robustness of findings is tested through sensitivity analyses, examining if results change upon removing specific studies or using different statistical methods. Potential for publication bias (the tendency for statistically significant results to be published more often) is evaluated using funnel plots or statistical tests [1].
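Egger's regression test, one of the statistical tests for publication bias, can be sketched without a statistics library. This minimal Python version assumes one effect size and standard error per study (the function name egger_test is hypothetical). It regresses each standardized effect (effect/SE) on its precision (1/SE) by ordinary least squares; an intercept far from zero suggests funnel-plot asymmetry. The returned t statistic is compared with a t distribution on k - 2 degrees of freedom (for example via scipy.stats.t.sf). The test has low power when few studies are available, so results based on fewer than about ten studies should be read cautiously.

```python
import math

def egger_test(effects, std_errors):
    """Egger's regression: OLS of effect/SE on 1/SE; returns intercept, t statistic and df."""
    if len(effects) < 3:
        raise ValueError("Egger's test needs at least three studies")
    z = [y / se for y, se in zip(effects, std_errors)]   # standardized effects
    x = [1 / se for se in std_errors]                     # precision
    n = len(z)
    x_bar, z_bar = sum(x) / n, sum(z) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z)) / sxx
    intercept = z_bar - slope * x_bar
    rss = sum((zi - (intercept + slope * xi)) ** 2 for xi, zi in zip(x, z))
    s2 = rss / (n - 2)                                    # residual variance
    se_intercept = math.sqrt(s2 * (1 / n + x_bar**2 / sxx))
    return {"intercept": intercept, "t": intercept / se_intercept, "df": n - 2}
```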

Application Notes: Case Studies in Hazard Identification & Regulation

The following case studies demonstrate the practical application of meta-analysis to resolve contradictory evidence and directly inform regulatory categories.

Application Note 1: Neurotoxic Hazard of Trimethylbenzene Isomers

- Objective: To determine whether trimethylbenzene (TMB) isomers represent a neurotoxic hazard by quantitatively synthesizing ostensibly discordant animal studies on pain sensitivity [10].

- Challenge: Initial studies showed conflicting results—effects were observed immediately post-exposure, resolved after 24 hours, and reappeared 50 days later following an external stressor (foot-shock) [10].

- Meta-Analytic Resolution: A meta-analysis and meta-regression were performed, accounting for confounders like isomer type, testing time, and laboratory. The analysis revealed that when all studies were considered together, the pooled effect size was statistically significant. This supported the conclusion that TMBs are a potential neurotoxic hazard, demonstrating that the apparent discordance was due to differences in experimental timing and the application of stressors [10].

Table 1: Characteristics of Animal Studies on TMB and Pain Sensitivity [10]

| Study | Exposure Duration | Test Agent | Key Test Times Post-Exposure | External Stressor Applied? |
|---|---|---|---|---|
| Korsak and Rydzyński (1996) | Sub-chronic (90 days) | 1,2,4- & 1,2,3-TMB | Immediate, 2 weeks | No |
| Douglas et al. (1993) | Sub-chronic (90 days) | C9 Fraction (~55% TMB) | 24 hours | No |
| Gralewicz et al. (1997) | Short-term (4 weeks) | 1,2,4-TMB | 50 & 51 days | Yes (Day 51) |
| Wiaderna et al. (1998) | Short-term (4 weeks) | 1,2,3-TMB | 50 & 51 days | Yes (Day 51) |

Protocol 1: Quantitative Meta-Analysis for Hazard Identification

- Literature Search: Execute a structured search in PubMed, Web of Science, and TOXLINE using defined chemical names and health endpoints [10].

- Screening & Inclusion: Apply PECO/PICO criteria. For TMBs, inclusion required: animal toxicology studies, defined TMB exposure, measurement of thermal pain sensitivity (hot plate test), and subchronic/short-term exposure duration [10].

- Data Extraction: Extract mean, standard deviation (SD), and sample size (N) for control and each exposure group. If SD is missing, estimate from standard error, confidence intervals, or p-values.

- Effect Size Calculation: Compute the standardized mean difference (e.g., Hedges' g) for each comparison between an exposed group and its control.

- Statistical Synthesis: Pool effect sizes using a random-effects model. Assess statistical heterogeneity using Cochran's Q and I² statistics.

- Meta-Regression: Model the influence of covariates (e.g., time of testing, isomer, dose) on the effect size to explain heterogeneity [10].

- Interpretation: A pooled effect size whose confidence interval does not include zero provides quantitative support for hazard identification.
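The meta-regression step can be illustrated with a single moderator, such as time of testing. This is a minimal Python sketch, assuming one effect size per exposure-control comparison and a between-study variance tau2 that is supplied rather than estimated (tau2 = 0 gives a fixed-effect meta-regression); the function name meta_regression is hypothetical. A full mixed-effects meta-regression estimates the residual tau² jointly, for example with rma(yi, vi, mods = ~ moderator) in R's metafor.

```python
import math

def meta_regression(effects, variances, moderator, tau2=0.0):
    """Weighted least-squares meta-regression of effect size on one moderator.

    Weights are 1 / (v_i + tau2). Standard errors treat the sampling variances
    (and tau2) as known, as is usual in meta-regression.
    """
    w = [1 / (v + tau2) for v in variances]
    sw = sum(w)
    x_bar = sum(wi * x for wi, x in zip(w, moderator)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, effects)) / sw
    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, moderator))
    slope = sum(wi * (x - x_bar) * (y - y_bar)
                for wi, x, y in zip(w, moderator, effects)) / sxx
    intercept = y_bar - slope * x_bar
    se_slope = math.sqrt(1 / sxx)
    se_intercept = math.sqrt(1 / sw + x_bar**2 / sxx)
    z = slope / se_slope
    p = math.erfc(abs(z) / math.sqrt(2))   # two-sided p-value from the normal distribution
    return {"intercept": intercept, "slope": slope, "se_intercept": se_intercept,
            "se_slope": se_slope, "z": z, "p": p}
```

A slope whose p-value is small indicates that the moderator (here, a hypothetical "days between exposure and testing" covariate) explains part of the between-study heterogeneity.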

TMB Meta-Analysis Workflow for Hazard Identification

Application Note 2: Quantifying Risks of Biodegradable Microplastics

- Objective: To perform the first quantitative synthesis of the ecotoxicological impacts of biodegradable microplastics (BMPs) on aquatic organisms [1].

- Challenge: A growing but fragmented body of literature on BMPs showed variable effects across polymers, species, and endpoints, making overall risk difficult to assess.

- Meta-Analytic Resolution: Analysis of 717 endpoints from 28 studies showed BMPs significantly increased oxidative stress (Hedges' g = 0.645) and impaired behavior (g = -2.358), reproduction (g = -1.821), and growth (g = -0.864). Survival effects were not significant. Subgroup analysis revealed polymer-specific risks (e.g., PBS and PHB impaired growth; PHB and PGA (polyglycolic acid) reduced reproduction) and that PLA toxicity was strongly size-dependent [1]. This synthesis provided clear evidence that BMPs pose non-negligible ecological risks, supporting their inclusion in regulatory frameworks.

Table 2: Summary of Overall Ecotoxicological Effects of Biodegradable Microplastics (BMPs) [1]

| Endpoint | Hedges' g (Random-Effects Model) | 95% Confidence Interval | Interpretation |
|---|---|---|---|
| Oxidative Stress | 0.645 | Entirely above zero | Significant increase |
| Behavior | -2.358 | Entirely below zero | Significant impairment |
| Reproduction | -1.821 | Entirely below zero | Significant inhibition |
| Growth | -0.864 | Entirely below zero | Significant inhibition |
| Survival | Not significant | Includes zero | No significant effect |

Protocol 2: Systematic Review & Meta-Analysis for Emerging Contaminants

- Search Strategy: Follow PRISMA. Search Web of Science/Scopus with terms such as ("biodegradable microplastic*" AND "aquatic organism*"). Define the time frame [1].

- Screening: Two independent reviewers screen titles/abstracts, then full texts, based on pre-defined inclusion (e.g., in vivo aquatic exposure, BMP tested, relevant endpoint measured) and exclusion (e.g., reviews, non-BMP particles) criteria.

- Data Coding: Extract data into a standardized form: author, year, species, BMP polymer type, size, concentration, exposure duration, endpoint, and statistical results (mean, SD, N for treatment and control).

- Effect Size Calculation: For continuous data (e.g., enzyme activity, length), calculate Hedges' g. For binary data (e.g., mortality), calculate log odds ratio.

- Advanced Synthesis: Perform subgroup analysis by polymer type, taxon, and particle size. Use meta-regression to test the influence of continuous variables like exposure concentration.

- Reporting: Present overall and subgroup pooled effects. Discuss major sources of heterogeneity and environmental implications of the findings [1].
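For binary endpoints such as mortality, the log odds ratio named in the effect-size step can be computed from counts of dead and surviving organisms. This is a minimal Python sketch with hypothetical function names. A 0.5 continuity correction is added to every cell when any cell is zero, and log_or_to_smd applies the logistic approximation d = ln(OR) × √3/π, one common way to place binary and continuous outcomes on a single scale.

```python
import math

def log_odds_ratio(dead_exp, n_exp, dead_ctl, n_ctl, cc=0.5):
    """Log odds ratio of mortality (exposed vs. control) and its variance.

    Adds the continuity correction `cc` to all four cells if any cell is zero.
    """
    a, b = dead_exp, n_exp - dead_exp      # exposed: dead, alive
    c, d = dead_ctl, n_ctl - dead_ctl      # control: dead, alive
    if 0 in (a, b, c, d):
        a, b, c, d = a + cc, b + cc, c + cc, d + cc
    log_or = math.log((a * d) / (b * c))
    var = 1 / a + 1 / b + 1 / c + 1 / d
    return log_or, var

def log_or_to_smd(log_or, var):
    """Convert a log odds ratio and its variance to the standardized-mean-difference scale."""
    factor = math.sqrt(3) / math.pi
    return log_or * factor, var * factor**2
```

A positive log odds ratio here means higher mortality in the exposed group, so its sign must be flipped before pooling it with effect sizes in which negative values indicate reduced survival.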

Meta-Analysis Workflow for Biodegradable Microplastic Ecotoxicity

Application Note 3: Differentiating Pesticide Regulatory Categories

- Objective: To identify whether environmental fate and ecotoxicity data can scientifically differentiate between Low-Risk Active Substances (LRAS), Candidates for Substitution (CfS), and conventional synthetic chemicals (ScC) in the EU [12].

- Challenge: Regulatory categorization has significant implications for market access and risk indicators but needed robust scientific validation.

- Meta-Analytic Resolution: A meta-analysis of regulatory data showed clear distinctions. LRAS had the shortest median degradation half-life (DT₅₀) in soil (1.78 days) and highest median EC₅₀ (least toxic) for algae. CfS were the most persistent (DT₅₀ 80.93 days) and toxic (lowest EC₅₀) [12]. This provided strong empirical support for the EU's regulatory framework and suggested that specific ecotoxicological thresholds (e.g., algal EC₅₀ > 10 mg/L) could serve as screening indicators for identifying new LRAS [12].

Table 3: Meta-Analysis of Regulatory Data for EU Pesticide Categories [12]

| Parameter | Low-Risk (LRAS) | Synthetic Chemicals (ScC) | Candidates for Substitution (CfS) |
|---|---|---|---|
| Median Soil DT₅₀ (days) | 1.78 | 19.74 | 80.93 |
| Median Water/Sediment DT₅₀ (days) | 7.23 | Data shown in study | Data shown in study |
| Median Algal EC₅₀ (mg/L), P. subcapitata | 10.3 | 1.094 | 0.147 |
| Median Aquatic Plant EC₅₀ (mg/L), L. gibba | 100 | 1.1 | 0.154 |
| Regulatory Implication | Preferred, low weight in risk indicators | Standard approval | Targeted for phase-out, high weight in risk indicators |

Protocol 3: Meta-Analysis of Regulatory Ecotoxicity Data

- Data Source Compilation: Use official regulatory databases (e.g., EU Pesticides Database) to list all approved active substances. Obtain all related assessment reports (e.g., EFSA Conclusion Documents) [12].

- Parameter Extraction: Systematically extract numerical values for key regulatory parameters: degradation half-lives (DT₅₀) in soil and water, and acute ecotoxicity endpoints (EC₅₀/LC₅₀) for standard test organisms (algae, aquatic invertebrates, fish).

- Categorization and Cleaning: Classify each substance into its regulatory category (LRAS, CfS, ScC). Exclude substances with incomplete data.

- Descriptive & Comparative Statistics: Calculate median and range for each parameter by category. Use non-parametric tests (e.g., Kruskal-Wallis) to determine if differences between categories are statistically significant.

- Indicator Development: Based on the distributions, propose science-based threshold values (e.g., "LRAS typically have an algal EC₅₀ > X mg/L") that could streamline future regulatory evaluations [12].
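The comparative-statistics step can be sketched as a Kruskal-Wallis H test written out in Python. The function names are hypothetical; tied values receive average ranks and the standard tie correction is applied. The p-value uses the chi-square approximation, which for three groups (df = 2, as with LRAS, ScC, and CfS) has the closed form exp(-H/2); for other numbers of groups, scipy.stats.kruskal or scipy.stats.chi2.sf can supply it. Pairwise post hoc comparisons between categories are not shown.

```python
import math

def average_ranks(values):
    """Rank values 1..N, giving tied values the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1          # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def kruskal_wallis(*groups):
    """Tie-corrected Kruskal-Wallis H, degrees of freedom, and p-value (df = 2 only)."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks = average_ranks(pooled)
    h, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        h += rank_sum**2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * h - 3 * (n + 1)
    counts = {}
    for x in pooled:
        counts[x] = counts.get(x, 0) + 1
    ties = sum(t**3 - t for t in counts.values())
    if ties == n**3 - n:
        raise ValueError("all values are identical; H is undefined")
    h /= 1 - ties / (n**3 - n)               # correction for tied ranks
    df = len(groups) - 1
    p = math.exp(-h / 2) if df == 2 else None   # chi-square(2) survival function
    return h, df, p
```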

Table 4: Key Research Reagent Solutions and Resources for Ecotoxicological Meta-Analysis

| Resource Name | Type | Primary Function / Utility |
|---|---|---|
| ECOTOX Knowledgebase | Curated Database | A comprehensive, publicly available source of single-chemical toxicity data for aquatic and terrestrial species. Essential for data mining and initial evidence gathering [13]. |
| PRISMA Guidelines | Reporting Framework | Provides a standardized checklist and flow diagram for reporting systematic reviews and meta-analyses, ensuring transparency and completeness [1]. |
| R Statistical Software | Analysis Software | The primary environment for advanced meta-analysis. Packages like metafor, meta, and robumeta are specifically designed for calculating effect sizes, fitting complex models, and generating publication-quality plots. |
| Web of Science / Scopus | Bibliographic Database | Core platforms for executing comprehensive, reproducible systematic literature searches across multidisciplinary scientific literature. |
| Cochrane Handbook | Methodology Guide | The definitive guide to the methodology of systematic reviews, offering in-depth guidance on statistical methods, risk of bias assessment, and interpretation, applicable beyond clinical fields. |

Synthesis Workflow for Regulatory Decision Support

The integration of meta-analysis into the regulatory science workflow transforms fragmented data into a structured evidence base for decision-making.

From Data to Decision: Meta-Analysis in Regulatory Science

Meta-analysis is a critical, transformative tool in ecotoxicology and regulatory science. It moves the field beyond qualitative synthesis by providing a transparent, statistical framework to integrate evidence, resolve apparent contradictions, quantify overall effects, and identify key moderators of toxicity. As demonstrated through applications in neurotoxic hazard assessment, emerging contaminant evaluation, and pesticide regulation, meta-analysis directly strengthens the scientific foundation of environmental protection policies. Its systematic approach is indispensable for managing the complexity of modern ecotoxicological data and ensuring that regulatory decisions are built upon a robust, objective, and comprehensive assessment of the available science.

Application Notes: Core Concepts in Ecotoxicological Meta-Analysis

Meta-analysis provides a quantitative framework for synthesizing results from independent ecotoxicological studies, enabling researchers to discern general patterns of chemical effects, quantify overall toxicity, and identify sources of variability. Within this framework, three statistical pillars are paramount: effect sizes, which measure the magnitude and direction of a toxicological response; heterogeneity, which quantifies the consistency of effects across studies; and confidence intervals, which express the precision of the pooled estimate [14] [15].

In ecotoxicology, these concepts are applied to translate disparate experimental outcomes—such as reductions in growth, survival, or reproduction—into a common metric for synthesis. For instance, a meta-analysis on plastic toxicity revealed that microplastics significantly reduce insect survival (effect size: -1.17) and growth (effect size: -0.69) [16]. This quantitative synthesis is critical for ecological risk assessment (ERA), moving beyond qualitative reviews to provide regulators with robust, statistically defensible evidence on contaminant impacts across species and ecosystems [17] [15].

Understanding and managing heterogeneity (the variation in effect sizes beyond random sampling error) is a central challenge. In environmental studies, heterogeneity arises from legitimate biological and methodological diversity (e.g., differences in species sensitivity, plastic polymer type, exposure concentration, or test duration) [16] [18]. Rather than merely a statistical nuisance, investigating heterogeneity can reveal key moderators of toxicity. A meta-analysis on microplastic-heavy metal co-toxicity, for example, used machine learning to identify heavy metal concentration and exposure time as critical drivers of variable toxic effects [18].

Confidence intervals contextualize the findings by providing a range of plausible values for the true effect. Narrow intervals indicate greater precision, often stemming from a larger number of studies or consistent results, while wide intervals suggest uncertainty and call for more research [15] [19]. The following table synthesizes key effect size metrics and heterogeneity statistics from recent ecotoxicological meta-analyses.

Table 1: Summary of Key Metrics from Recent Ecotoxicological Meta-Analyses

| Study Focus & Citation | Primary Effect Size Metric | Key Pooled Effect Size (Hedges' g or log RR) | Heterogeneity Statistic (I²) | Major Identified Moderators of Heterogeneity |
|---|---|---|---|---|
| Plastic toxicity to insects [16] | Hedges' g | Survival: -1.17; Growth: -0.69 | Not explicitly reported | Plastic type (micro- vs. nanoplastic), concentration, exposure duration |
| Biodegradable microplastic toxicity to aquatic organisms [1] | Hedges' g | Behavior: -2.358; Oxidative Stress: +0.645 | High heterogeneity across endpoints | Polymer type (e.g., PBS, PHB, PLA), particle size, exposure concentration |
| Microplastic & temperature stress on freshwater invertebrates [2] | Log Response Ratio (lnRR) | Growth: -0.24; Reproduction: -0.18 | Significant heterogeneity reported | Species (e.g., Daphnia magna), feeding mode, geographical context of study |
| Transcriptional biomarkers in metal-exposed bivalves [14] | Log Response Ratio (lnRR) | Overall response: 0.50 (65% increase) | Modeled via Bayesian hierarchical models | Transcript type (e.g., mt, hsp70), tissue type, exposure time |
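The lnRR metric in the table above is computed from group means, and back-transforming it explains the percentage in the bivalve row: exp(0.50) - 1 ≈ 0.65, i.e., a 65% increase. This is a minimal Python sketch with hypothetical function names, using the common delta-method approximation for the sampling variance; lnRR is only defined for positive, ratio-scale means.

```python
import math

def log_response_ratio(m_t, sd_t, n_t, m_c, sd_c, n_c):
    """lnRR = ln(mean_treatment / mean_control) and its delta-method variance."""
    if m_t <= 0 or m_c <= 0:
        raise ValueError("lnRR requires positive means (ratio-scale data)")
    lnrr = math.log(m_t / m_c)
    var = sd_t**2 / (n_t * m_t**2) + sd_c**2 / (n_c * m_c**2)
    return lnrr, var

def percent_change(lnrr):
    """Back-transform lnRR to a percentage change relative to the control."""
    return (math.exp(lnrr) - 1) * 100

print(round(percent_change(0.50), 1))   # 64.9
```

Working on the log scale keeps increases and decreases symmetric (a halving and a doubling have lnRR of equal magnitude), which is why lnRR is pooled and only back-transformed for reporting.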

Experimental Protocols for Meta-Analysis

Protocol for Systematic Literature Review and Data Extraction

A rigorous, reproducible literature search forms the foundation of a credible meta-analysis. The following protocol is adapted from established methodologies in the field [18] [14] [1].

Objective: To comprehensively identify, screen, and extract relevant quantitative data from peer-reviewed ecotoxicology studies for statistical synthesis.

Materials & Software:

- Bibliographic Databases: Web of Science, Scopus, Google Scholar.

- Reference Management Software: EndNote, Zotero, or Mendeley.

- Screening Tool: Rayyan.ai or Covidence for blinded screening.

- Data Extraction Form: Custom-designed in Microsoft Excel, Google Sheets, or specialized meta-analysis software.

Procedure:

- Define the PICO/S Framework: Formulate the research question using Population (e.g., freshwater invertebrates), Intervention/Exposure (e.g., polystyrene microplastics > 1 mg/L), Comparator (control conditions), and Outcome (e.g., growth rate, mortality). Specify Study designs (e.g., laboratory toxicity tests).

- Develop Search Strategy:

- Identify core search terms from the PICO elements (e.g., "microplastic", "Daphnia*", "growth", "mortality").

- Utilize Boolean operators (AND, OR, NOT) and database-specific syntax (e.g., asterisk * for truncation).

- Apply the search string to title, abstract, and keyword fields. An example from a recent review is: ("temperature*" OR "climate change") AND ("Microplastic*") AND ("Freshwater" OR "lakes") AND ("invertebrat*") [2].

- Set a publication date range (e.g., from database inception to present).

- Execute Search & Manage Records: Run the search across all selected databases. Merge results and remove duplicates using reference management software.

- Screen Studies:

- Title/Abstract Screening: Two independent reviewers assess records against inclusion/exclusion criteria. Resolve conflicts through discussion or a third reviewer.

- Full-Text Screening: Retrieve and assess the full text of potentially relevant studies. Maintain a log of excluded studies with reasons.

- Extract Data:

- Extract descriptive information: author, year, test species, contaminant type/concentration, exposure duration, endpoint measured.

- Extract quantitative data for effect size calculation: mean, a measure of dispersion (SD, or an SE that can be converted to SD), and sample size (n) for both treatment and control groups. If these are not reported in the text, extract them from figures using software such as WebPlotDigitizer or derive them from reported test statistics (e.g., t-value, p-value) [14] [15].

- Extract data on potential moderators: taxonomic group, particle size, polymer type, temperature, pH, etc. [16] [18].

- All extractions should be performed independently by two reviewers to ensure accuracy.
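The conversions mentioned in the extraction step can be scripted so that both reviewers apply them identically. The sketch below is a minimal example under stated assumptions: the SE is the standard error of a group mean, a reported CI is a 95% interval built with a normal critical value of 1.96 (for small samples a t critical value would be more appropriate), and a t statistic comes from a pooled-variance two-sample t-test. All function names are hypothetical.

```python
import math

def sd_from_se(se: float, n: int) -> float:
    """Convert the standard error of a group mean to a standard deviation: SD = SE * sqrt(n)."""
    if n < 1:
        raise ValueError("sample size must be positive")
    return se * math.sqrt(n)

def sd_from_ci(lower: float, upper: float, n: int, z: float = 1.96) -> float:
    """Back-calculate SD from a CI of a mean, assuming CI = mean +/- z * SD / sqrt(n)."""
    return math.sqrt(n) * (upper - lower) / (2 * z)

def d_from_t(t: float, n_treat: int, n_ctrl: int) -> float:
    """Standardized mean difference from an independent two-sample (pooled-variance) t statistic."""
    return t * math.sqrt(1 / n_treat + 1 / n_ctrl)
```

For example, a reported SE of 0.5 with n = 16 corresponds to an SD of 2.0, and t = 2.0 with 8 organisms per group corresponds to a standardized difference of 1.0 (before any small-sample correction).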

Protocol for Calculating Effect Sizes and Assessing Heterogeneity

This protocol outlines the core statistical synthesis process, applicable in software like R (with metafor or meta packages), Comprehensive Meta-Analysis, or RevMan.

Objective: To compute a standardized metric of toxicological effect for each study, pool them into an overall estimate, and quantify the consistency of effects across the included studies.

Materials & Software:

- Statistical software (R, Python, Stata, or dedicated meta-analysis software).

- Dataset containing extracted means, SDs, and sample sizes.

Procedure:

- Calculate Individual Study Effect Sizes:

- For continuous data (e.g., length, weight, enzyme activity), Hedges' g is the recommended standardized mean difference. It applies a correction factor J to remove small-sample bias [16] [1]:

$$g = J \times \frac{\bar{X}_T - \bar{X}_C}{SD_{pooled}}, \qquad J = 1 - \frac{3}{4(n_T + n_C) - 9}$$

$$SD_{pooled} = \sqrt{\frac{(n_T - 1)SD_T^2 + (n_C - 1)SD_C^2}{n_T + n_C - 2}}$$

- For positive, ratio-scale data (e.g., survival counts, biomass), the log response ratio (lnRR) is often used [2] [14]:

$$\ln RR = \ln\left(\frac{\bar{X}_T}{\bar{X}_C}\right)$$

Its variance is also calculated for weighting.

- Calculate the variance and standard error for each effect size estimate.

- Model Selection and Pooling:

- Test for Heterogeneity: Compute Cochran's Q statistic and the I² statistic. I² describes the percentage of total variation across studies that is due to heterogeneity rather than chance (e.g., I² > 50% suggests substantial heterogeneity) [2] [1].

- Choose Meta-Analytic Model: If heterogeneity is low (I² < 25-30%), a fixed-effect model can be used, assuming a single true effect size. In ecotoxicology, substantial heterogeneity is common; therefore, a random-effects model is typically more appropriate, as it assumes true effects vary across studies and estimates their average [14]. Its between-study variance (τ²) can be estimated by, for example, the DerSimonian-Laird or restricted maximum likelihood (REML) method.

- Pool Effect Sizes: Compute the weighted average of individual effect sizes, where the weight assigned to each study is typically the inverse of its variance (more precise studies receive greater weight). Report the pooled effect size with its 95% confidence interval.

- Investigate Heterogeneity (Moderator/Subgroup Analysis): If significant heterogeneity exists (I² is high), conduct analyses to explain it.

- Categorical Moderators: Use subgroup analysis or meta-regression with a mixed-effects model to compare pooled effects between groups (e.g., microplastics vs. nanoplastics; different polymer types) [16] [2].

- Continuous Moderators: Use meta-regression to test if effect size correlates with a continuous variable (e.g., exposure concentration, log-transformed particle size, temperature) [18] [14].
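The effect-size and pooling calculations in this protocol can be expressed compactly. The following minimal sketch, in plain Python without any meta-analysis library, computes Hedges' g and lnRR with their usual large-sample variances and pools effects with the DerSimonian-Laird random-effects estimator, reporting Cochran's Q, τ², I² and a Wald-type 95% CI. It is illustrative only; packages such as metafor provide REML and other refinements. Function names are hypothetical.

```python
import math

def hedges_g(m_t, sd_t, n_t, m_c, sd_c, n_c):
    """Bias-corrected standardized mean difference (Hedges' g) and its sampling variance."""
    df = n_t + n_c - 2
    sd_pooled = math.sqrt(((n_t - 1) * sd_t**2 + (n_c - 1) * sd_c**2) / df)
    d = (m_t - m_c) / sd_pooled
    j = 1 - 3 / (4 * df - 1)                      # small-sample correction factor J
    var_d = (n_t + n_c) / (n_t * n_c) + d**2 / (2 * (n_t + n_c))
    return j * d, j**2 * var_d

def log_response_ratio(m_t, sd_t, n_t, m_c, sd_c, n_c):
    """lnRR and its delta-method variance; both group means must be positive."""
    if m_t <= 0 or m_c <= 0:
        raise ValueError("lnRR requires positive means")
    var = sd_t**2 / (n_t * m_t**2) + sd_c**2 / (n_c * m_c**2)
    return math.log(m_t / m_c), var

def random_effects_dl(effects, variances):
    """DerSimonian-Laird random-effects pooling with Q, tau^2, I^2 and a 95% Wald CI."""
    k = len(effects)
    if k < 2:
        raise ValueError("need at least two effect sizes")
    w = [1 / v for v in variances]                # fixed-effect (inverse-variance) weights
    sw = sum(w)
    theta_fe = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    q = sum(wi * (yi - theta_fe) ** 2 for wi, yi in zip(w, effects))
    c = sw - sum(wi**2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)            # method-of-moments between-study variance
    i2 = 100 * max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0
    w_re = [1 / (v + tau2) for v in variances]    # random-effects weights
    est = sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1 / sum(w_re))
    return {"estimate": est, "se": se, "ci": (est - 1.96 * se, est + 1.96 * se),
            "Q": q, "tau2": tau2, "I2": i2}
```

With two hypothetical studies whose effects are 0 and 1 and whose variances are both 0.1, this gives Q = 5, τ² = 0.4, I² = 80% and a pooled estimate of 0.5 (SE 0.5).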

Protocol for Constructing and Interpreting Confidence Intervals

Confidence intervals (CIs) are fundamental for inference, and the method of calculation can impact regulatory decisions [15] [19].

Objective: To calculate a range of plausible values for the true pooled effect size or benchmark dose and to select an appropriate method based on the data structure.

Materials & Software: Statistical software (R, with packages like drc for benchmark dose modeling).

Procedure:

- For Pooled Effect Sizes:

- The 95% CI around a pooled effect from a random-effects model is typically calculated as:

$$\hat{\theta}_{RE} \pm 1.96 \times SE(\hat{\theta}_{RE})$$

- Visually inspect CIs on a forest plot. If the CI for an overall effect does not cross the line of no effect (e.g., Hedges' g = 0 or lnRR = 0), it is statistically significant at the α = 0.05 level.

- For Benchmark Dose (BMD) and Related Metrics:

- In dose-response analysis, the Benchmark Dose (BMD) is a dose that produces a predetermined benchmark response (BMR, e.g., a 10% effect). The lower confidence limit of the BMD (BMDL) is often used as a point of departure in risk assessment [19].

- Comparison of CI Methods for BMD [19]:

- Delta Method: An analytic approximation based on the variance-covariance matrix. It is computationally fast but can be unreliable for nonlinear models, often producing excessively narrow intervals.

- Likelihood-Ratio (LR) Method: Determines the interval from the parameter values at which the log-likelihood falls from its maximum by half of a χ² critical value with one degree of freedom (e.g., 3.84/2 ≈ 1.92 for a two-sided 95% interval). It is more reliable than the delta method for nonlinear models and is suitable for routine analysis.

- Bootstrap Method: A resampling technique that estimates the sampling distribution of the BMD empirically. It is computationally intensive (requiring thousands of runs) but is highly flexible, robust for complex models, and integrates well with probabilistic risk assessment. It is considered the gold standard when computational resources allow.

- Recommendation: For regulatory ecotoxicology where BMD modeling is applied, the likelihood-ratio method provides a good balance of reliability and efficiency for calculating confidence limits [19].
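To make the contrast between the delta and likelihood-ratio methods concrete, the sketch below fits a deliberately simple quantal model, the one-hit model P(d) = 1 - exp(-b·d) with zero background response (an assumption chosen so the model has a single parameter), and computes the BMD at a 10% benchmark response with both a delta-method and a profile-likelihood BMDL. It is not the drc package's interface, regulatory BMD work fits richer models, and the default critical value of 2.706 (the 0.90 quantile of χ² with one degree of freedom, giving a one-sided 95% lower bound) is an assumed convention. Function names and the data in the test are hypothetical.

```python
import math

def loglik(b, doses, n, y):
    """Binomial log-likelihood of the one-hit model P(d) = 1 - exp(-b*d), zero background."""
    return sum(yi * math.log(-math.expm1(-b * d)) - (ni - yi) * b * d
               for d, ni, yi in zip(doses, n, y))

def score(b, doses, n, y):
    """First derivative of the log-likelihood with respect to b."""
    return sum(yi * d / math.expm1(b * d) - (ni - yi) * d for d, ni, yi in zip(doses, n, y))

def find_root(f, lo, hi, tol=1e-12, max_iter=200):
    """Bisection; assumes f(lo) and f(hi) have opposite signs."""
    f_lo = f(lo)
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if (f_mid > 0) == (f_lo > 0):
            lo, f_lo = mid, f_mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

def bmd_with_limits(doses, n, y, bmr=0.10, crit=2.706, z=1.645):
    """BMD plus delta-method and likelihood-ratio BMDLs for the one-hit model."""
    f = lambda b: score(b, doses, n, y)
    hi = 1.0
    while f(hi) > 0:                  # widen the bracket until the score turns negative
        hi *= 2.0
    b_hat = find_root(f, 1e-10, hi)   # maximum likelihood estimate of b
    k = -math.log(1.0 - bmr)          # BMD = k / b for this model
    bmd = k / b_hat

    # Delta method: observed information for b, propagated to BMD = k / b.
    info = sum(yi * d**2 * math.exp(b_hat * d) / math.expm1(b_hat * d) ** 2
               for d, yi in zip(doses, y))
    bmdl_delta = bmd - z * (k / b_hat**2) / math.sqrt(info)

    # Likelihood ratio: the BMDL corresponds to the upper profile limit of b.
    ll_max = loglik(b_hat, doses, n, y)
    excess = lambda b: 2.0 * (ll_max - loglik(b, doses, n, y)) - crit
    hi = 2.0 * b_hat
    while excess(hi) < 0:
        hi *= 2.0
    b_upper = find_root(excess, b_hat, hi)
    return {"b_hat": b_hat, "bmd": bmd, "bmdl_delta": bmdl_delta,
            "b_upper": b_upper, "bmdl_lr": k / b_upper}
```

Comparing `bmdl_delta` with `bmdl_lr` on sparse or strongly nonlinear data is a quick way to check whether the delta approximation is adequate for a given dataset.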

Interpretation: A recent meta-analysis on chronic toxicity data converted common endpoints such as the No Observed Effect Concentration (NOEC) into effect concentrations (e.g., EC₅). It derived median adjustment factors (e.g., NOEC/1.2 ≈ EC₅) and found that the median percent effect occurring at the NOEC was 8.5% [15]. This underscores that traditional hypothesis-testing endpoints (NOEC, LOEC) correspond to variable effect levels, and their CIs (or conversion to point estimates with CIs) are crucial for accurate risk interpretation.

Visualizations

Visualization 1: Meta-Analysis Workflow for Ecotoxicity Data

Visualization 2: Comparison of Confidence Interval Calculation Methods

The Researcher's Toolkit

Table 2: Essential Tools for Ecotoxicological Meta-Analysis

| Tool Category | Specific Tool / Software | Primary Function in Meta-Analysis | Key Notes / Relevance |
|---|---|---|---|
| Bibliographic & Screening | Web of Science, Scopus, Google Scholar | Primary literature databases for systematic searching. | Use complex Boolean queries. Google Scholar for grey literature checks [18] [14]. |
| | Rayyan.ai, Covidence | Platform for blinded title/abstract and full-text screening by multiple reviewers. | Manages PRISMA flow, reduces screening bias. |
| Data Extraction & Management | Microsoft Excel, Google Sheets | Custom spreadsheet for structured data extraction. | Pre-pilot the form. Include fields for all potential moderators [18]. |
| | WebPlotDigitizer | Extracts numerical data from published graphs and figures. | Essential when means/SDs are not reported in text [14]. |
| Statistical Synthesis | R with metafor, meta, drc packages | Comprehensive statistical environment for all meta-analytic calculations, modeling, and graphing. | metafor is highly flexible for complex models and meta-regression. drc for dose-response and BMD analysis [16] [19]. |
| | Comprehensive Meta-Analysis (CMA) | User-friendly commercial software for conducting meta-analysis. | Good for teams less familiar with programming. |
| Specialized Analysis | Machine Learning Libraries (e.g., scikit-learn in Python, caret in R) | Identifying complex, non-linear moderators of heterogeneity. | Used in advanced analyses to pinpoint key toxicity drivers (e.g., XGBoost model in [18]). |
| | Bayesian Statistical Software (e.g., Stan, JAGS, brms in R) | Fitting hierarchical models to account for multiple levels of variability. | Suitable for complex data structures and incorporating prior knowledge [14]. |

Historical Context & The Emergence of Systematic Synthesis

The call for rigorous evidence synthesis in environmental science was powerfully foreshadowed by Rachel Carson's Silent Spring, which itself constituted a narrative synthesis of disparate studies to warn of pesticide dangers [20]. The formal scientific impetus for such synthesis was articulated in the 19th century, with Lord Rayleigh (1880s) emphasizing that science requires not just accumulating facts but "digestion and assimilation of the old," and George Gould (1898) envisioning a system where a researcher could gain knowledge of "the experience of every other man in the world" within an hour [20]. The term "systematic review" appears in the medical literature as early as 1867 [20]. The modern evolution accelerated in the late 20th century, driven by the need to minimize bias, increase statistical power, and organize growing bodies of evidence, culminating in structured frameworks like those developed by the Cochrane Collaboration [20]. In ecotoxicology, this evolution has transitioned from narrative reviews to quantitative meta-analyses, now routinely used to inform critical policy decisions [21].

Current Landscape & Methodological Challenges in Ecotoxicology

Recent mapping of the field reveals significant growth but also critical methodological shortcomings. An analysis of 105 meta-analyses on organochlorine pesticides—inspired by the research wave following Silent Spring—synthesized 3,911 primary studies [21]. A quantitative evaluation of their methodological quality yielded concerning results, as summarized below.

Table 1: Methodological Quality Assessment of Organochlorine Pesticide Meta-Analyses (n=105) [21]

| Quality Dimension | Finding | Key Implications for Ecotoxicity Research |
|---|---|---|
| Low Overall Methodological Quality | 83.4% of meta-analyses | Undermines reliability of synthesized evidence for regulation. |
| Common Use in Policy Documents | Commonly cited | Poor-quality synthesis may directly misinform environmental policy. |
| Geographic Bias in Production | Limited from developing nations | Lack of synthesis where pesticides are still in use for disease control. |
| Taxonomic Bias | Paucity of wildlife meta-analyses | Despite ample primary evidence, synthesis gaps exist for key taxa. |
| Impact of Reporting Guidelines | Positive correlation with quality | Adherence to protocols is a readily implementable improvement. |

Concurrently, the application of meta-analysis has expanded to novel stressors. A 2025 global meta-analysis on plastic toxicity to insects found microplastics significantly impaired all measured health traits, with survival (effect size: -1.17) and growth (-0.69) most affected [16]. Another 2025 meta-analysis on interactive stressors revealed that elevated temperature exacerbates microplastic toxicity in freshwater invertebrates for growth, reproduction, and stress endpoints, though not for mortality [2]. These studies demonstrate the method's power but also inherit the field's overarching quality challenges.

Application Notes: A Standardized Protocol for Ecotoxicity Meta-Analysis

To address these quality gaps, the following protocol adapts systematic review guidelines from regulatory toxicology [22] and evidence synthesis best practices [20] for ecotoxicity data research.

Protocol: Six-Step Systematic Review for Ecotoxicity Data Synthesis

Step 1: Problem Formulation & Protocol Registration

Define the PECO/T statement (Population, Exposure, Comparator, Outcome, Time/Taxa). Specify primary and secondary research questions. Pre-register the review protocol on a platform like PROSPERO or the Open Science Framework to minimize bias.

Step 2: Systematic Literature Search & Study Selection

- Search Strategy: Utilize multiple databases (e.g., Web of Science, Scopus, ECOTOX [23]). Develop search strings using Boolean operators combining terms for stressor, taxa, and endpoints [2].

- Inclusion/Exclusion Criteria: Define criteria a priori. For example: 1) Empirical studies exposing organisms to the stressor(s) of interest; 2) Inclusion of an unexposed control group; 3) Reported quantitative data on ecologically relevant endpoints (survival, growth, reproduction, behavior); 4) Full text in English (for feasibility, though this introduces bias) [23] [2].

- Screening: Conduct blinded screening by two independent reviewers at title/abstract and full-text levels. Resolve conflicts via consensus or a third reviewer.
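The Boolean structure described in the search strategy (synonyms combined with OR, concepts combined with AND) can be generated from grouped terms, which keeps the string consistent and easy to report. A minimal sketch; the concept-group names are hypothetical, and field tags and wildcard handling differ between databases such as Web of Science and Scopus, so the output would still need per-database adaptation.

```python
def build_query(concepts: dict[str, list[str]]) -> str:
    """Combine synonyms with OR inside each concept group and join the groups with AND."""
    groups = []
    for terms in concepts.values():
        groups.append("(" + " OR ".join(f'"{t}"' for t in terms) + ")")
    return " AND ".join(groups)

# Hypothetical concept groups reproducing the freshwater microplastic example string [2].
query = build_query({
    "stressor": ["temperature*", "climate change"],
    "agent": ["Microplastic*"],
    "habitat": ["Freshwater", "lakes"],
    "taxon": ["invertebrat*"],
})
print(query)
```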

Step 3: Data Extraction & Coding

Extract data into a standardized, pilot-tested form. Key fields include: study ID, test species, life stage, exposure system (lab/field), exposure concentration/duration, endpoint, mean and variance measures for control and treatment groups, sample size, and moderators (e.g., chemical type, particle size, temperature) [16] [2].
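A pilot-tested form can also be encoded as a typed record with basic validation, so that implausible entries are flagged at extraction time rather than during analysis. The sketch below is one possible layout with hypothetical field names mirroring the fields listed in Step 3; the checks shown are examples, not a complete quality-control scheme.

```python
from dataclasses import dataclass, field

@dataclass
class ExtractionRecord:
    """One treatment-versus-control comparison extracted from a primary study."""
    study_id: str
    species: str
    life_stage: str
    exposure_system: str          # "lab" or "field"
    concentration: float          # exposure concentration, in the unit below
    concentration_unit: str
    duration_days: float
    endpoint: str                 # e.g., "growth", "reproduction"
    mean_treatment: float
    sd_treatment: float
    n_treatment: int
    mean_control: float
    sd_control: float
    n_control: int
    moderators: dict = field(default_factory=dict)  # e.g., {"polymer": "PLA", "temp_c": 25}

    def problems(self) -> list[str]:
        """Return validation messages; an empty list means the basic checks passed."""
        issues = []
        if self.exposure_system not in ("lab", "field"):
            issues.append("exposure_system must be 'lab' or 'field'")
        if min(self.n_treatment, self.n_control) < 2:
            issues.append("each group needs n >= 2 to estimate a variance")
        if min(self.sd_treatment, self.sd_control) < 0:
            issues.append("standard deviations cannot be negative")
        if self.concentration < 0 or self.duration_days <= 0:
            issues.append("concentration must be >= 0 and duration > 0")
        return issues
```

Running `problems()` on every row before analysis gives both reviewers the same list of entries to recheck against the source paper.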

Step 4: Study Quality & Risk of Bias (RoB) Assessment

Use a domain-based RoB tool tailored to ecotoxicity studies (e.g., based on criteria from the EPA's evaluation guidelines [23]). Assess bias from selection, confounding, exposure characterization, outcome measurement, and selective reporting. Do not use quality scores as weights in meta-analysis; instead, use RoB for sensitivity/subgroup analysis [22].

Step 5: Evidence Synthesis & Meta-Analysis

- Effect Size Calculation: Calculate a standardized effect size (e.g., Hedges' g, log response ratio) for each comparison to account for different measurement scales.

- Statistical Model: Use random-effects models by default due to expected heterogeneity between studies. Assess heterogeneity using the I² statistic.

- Moderator & Subgroup Analysis: Explore sources of heterogeneity (e.g., taxon, plastic type [16], temperature regime [2]) via meta-regression or subgroup analysis if sufficient studies exist.
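A subgroup comparison of the kind described above can be sketched as follows: each subgroup (e.g., polymer type) is pooled with its own DerSimonian-Laird random-effects model, and the between-group statistic Q_between is compared with a χ² distribution with (number of groups - 1) degrees of freedom. This minimal illustration assumes a separate τ² per subgroup; mixed-effects meta-regression instead typically assumes a common τ². Function names and example data are hypothetical.

```python
import math
from collections import defaultdict

def pool_dl(effects, variances):
    """DerSimonian-Laird random-effects estimate and its standard error for one subgroup."""
    w = [1 / v for v in variances]
    sw = sum(w)
    theta_fe = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    q = sum(wi * (yi - theta_fe) ** 2 for wi, yi in zip(w, effects))
    c = sw - sum(wi**2 for wi in w) / sw
    tau2 = max(0.0, (q - (len(effects) - 1)) / c) if c > 0 else 0.0  # 0 for single-study groups
    w_re = [1 / (v + tau2) for v in variances]
    return sum(wi * yi for wi, yi in zip(w_re, effects)) / sum(w_re), math.sqrt(1 / sum(w_re))

def subgroup_test(effects, variances, groups):
    """Pool each subgroup separately, then compute Q_between across the subgroup estimates."""
    data = defaultdict(lambda: ([], []))
    for y, v, g in zip(effects, variances, groups):
        data[g][0].append(y)
        data[g][1].append(v)
    pooled = {g: pool_dl(ys, vs) for g, (ys, vs) in data.items()}
    w = {g: 1 / se**2 for g, (_, se) in pooled.items()}
    grand = sum(w[g] * est for g, (est, _) in pooled.items()) / sum(w.values())
    q_between = sum(w[g] * (est - grand) ** 2 for g, (est, _) in pooled.items())
    return pooled, q_between, len(pooled) - 1
```

For two hypothetical PLA comparisons with effects of 0.1 and two PBS comparisons with effects of 0.9 (all variances 0.04), Q_between = 16 on 1 degree of freedom, which exceeds the 5% critical value of 3.84, so the subgroups would be judged to differ.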

Step 6: Confidence Rating & Reporting

Rate the overall confidence in the body of evidence. Prepare the report following PRISMA guidelines, ensuring all data and analytical code are publicly archived (e.g., on GitHub/Zenodo [21] [16]).

Systematic Review Workflow for Ecotoxicology

The Scientist's Toolkit: Reagents & Materials for Meta-Analytic Research

This toolkit comprises essential digital and methodological "reagents" for executing the protocol above.

Table 2: Research Reagent Solutions for Ecotoxicity Meta-Analysis

| Tool/Resource | Primary Function | Application in Protocol Step |
|---|---|---|
| EPA ECOTOX Database [23] | Comprehensive repository of curated ecotoxicity studies from open literature. | Step 2: Primary database for identifying relevant studies on pesticide effects. |
| Rayyan, Covidence | Web tools for blinded screening and selection of studies by multiple reviewers. | Step 2: Managing the systematic screening process, conflict resolution. |
| PRISMA Checklist & Flow Diagram | Reporting guidelines ensuring transparent and complete reporting of the review. | Step 6: Framework for structuring the final review manuscript. |
| R Statistical Environment (metafor, robvis packages) | Software for all statistical analyses, including effect size calculation, meta-analysis, meta-regression, and risk-of-bias visualization. | Steps 4 & 5: Core computational engine for quality visualization and synthesis. |
| GitHub / Zenodo | Platform for version control, public archiving of data, and sharing analytical code to ensure reproducibility [21] [16]. | Step 6: Public deposition of all digital materials supporting the review. |
| PECO/T Framework | Structured format for defining the review question (Population, Exposure, Comparator, Outcome, Time/Taxa). | Step 1: Ensuring a focused, answerable research question. |
| Risk of Bias (RoB) Tool for Ecotoxicity | Customized tool based on EPA study acceptance criteria [23] (e.g., control adequacy, exposure verification). | Step 4: Critical appraisal of internal validity of included primary studies. |

Case Applications & Visualizing Complex Interactions

Case 1: Synthesizing Effects of Interactive Stressors

A 2025 meta-analysis on microplastics and temperature in freshwater invertebrates provides a model [2]. After systematic search and selection, data extraction captured effect sizes for growth, mortality, reproduction, and stress. The key finding was a significant interaction where elevated temperature amplified the negative effects of microplastics on growth, reproduction, and physiological stress, but not on mortality [2]. This illustrates the protocol's power to disentangle complex, non-additive effects relevant to real-world multi-stressor environments.

Case 2: Pathway Analysis for Mechanistic Insight

Beyond calculating summary effects, meta-analysis can synthesize evidence on mechanistic pathways. The physiological pathway diagram below, derived from synthesized evidence [16] [2], illustrates how stressors like microplastics, potentially exacerbated by temperature, lead to population-level ecological impacts.

Physiological Pathways from Microplastic Stress to Population Impact

Future Directions & Integration into Regulatory Risk Assessment

The evolution points toward deeper integration into regulatory frameworks. The U.S. EPA provides guidelines for evaluating open literature toxicity data in risk assessments [23], and the Texas Commission on Environmental Quality (TCEQ) has developed formal guidance for systematic reviews in toxicity factor development [22]. The future lies in:

- Automation & Machine Learning: For screening and data extraction from primary studies.

- Living Systematic Reviews: Continually updated syntheses as new evidence emerges.

- Formal Adoption by Agencies: Using pre-registered, high-quality meta-analyses as a primary evidence stream in regulatory decision-making, moving beyond the current reliance on single guideline studies.

- Closing Geographic & Taxonomic Gaps: Actively supporting the production of systematic reviews in developing countries and for under-synthesized taxa [21].

The trajectory from Silent Spring to systematic reviews represents the maturation of environmental evidence synthesis from persuasive narrative to a quantifiable, transparent, and indispensable scientific discipline for informing global environmental policy.

Bridging Laboratory Data and Real-World Ecological Risk Assessment

The central challenge in modern ecological risk assessment (ERA) lies in translating controlled, single-stressor laboratory toxicity data into predictions about the multifactorial and variable conditions of real-world ecosystems. This translation is a core component of a broader thesis on meta-analysis techniques for ecotoxicity data research. Meta-analysis provides the statistical and conceptual framework to quantitatively synthesize disparate laboratory studies, account for variability, and derive more robust, generalizable insights into contaminant effects. By systematically aggregating data across chemicals, species, and experimental conditions, researchers can bridge the gap between simplified lab models and complex environmental exposures, ultimately supporting more predictive and protective risk assessments for pharmaceuticals, pesticides, and industrial chemicals [15].

Application Notes: Integrating Data for Predictive Risk Assessment

Meta-analysis serves as a critical tool for reconciling diverse laboratory findings and quantifying overall effect magnitudes. For instance, a global meta-analysis on plastic pollution revealed that microplastics significantly impair insect health, with an average reduction in survival (Hedges' g = -1.17) and growth (Hedges' g = -0.69) [16]. This synthesis demonstrates how meta-analysis can move beyond qualitative summaries to provide quantitative, comparable metrics of hazard. These synthesized effect sizes are more reliable for informing risk characterization than individual, potentially conflicting studies.

From Lab Point Estimates to Real-World Protection Goals

A persistent issue in ERA is the use of different effect metrics from toxicity tests. Point estimates like the EC20 (the concentration causing a 20% effect relative to the control) and hypothesis-testing results like the NOEC (No Observed Effect Concentration) are not directly comparable. A pivotal meta-analysis established adjustment factors to bridge this gap, showing that the median effect level at the NOEC was ~8.5%. The study derived a median adjustment factor of 1.2 to convert a NOEC to an approximate EC5, a level often considered within background population variability [15]. This standardization is vital for applying laboratory data to real-world scenarios where protecting population-level sustainability is the goal.
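Applying the median factor is simple arithmetic; the helper below is a hypothetical illustration. Because 1.2 is a median across the datasets analysed in [15], the converted value is an approximate central estimate for a typical dataset, not a conservative bound for any particular one.

```python
NOEC_TO_EC5_MEDIAN_FACTOR = 1.2  # median adjustment factor reported in [15]

def approx_ec5_from_noec(noec: float, factor: float = NOEC_TO_EC5_MEDIAN_FACTOR) -> float:
    """Approximate an EC5 by dividing a NOEC by the median adjustment factor."""
    if noec <= 0 or factor <= 0:
        raise ValueError("NOEC and factor must be positive")
    return noec / factor

print(approx_ec5_from_noec(12.0))  # a NOEC of 12 µg/L gives an EC5 of about 10 µg/L
```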

Advanced Analytics for Spatial and Integrated Risk

Real-world risk is spatially heterogeneous. Advanced data-integration techniques, such as Self-Organizing Maps (SOM), an unsupervised neural-network method for clustering multivariate data, can integrate large geospatial datasets to identify patterns and "hotspots" of contamination. Research on soil heavy metals used SOM to reveal complex spatial distributions driven by industrial and agricultural sources, with cadmium identified as a primary risk driver [24]. Furthermore, structural equation modeling (SEM) within an analytic framework can disentangle the contributions of multiple stressors (e.g., anthropogenic activity, soil properties) on observed toxicity, moving toward causal understanding rather than mere correlation [24].

Assessing Complex Real-World Interactions: Multiple Stressors

Laboratory studies traditionally focus on single chemicals, but ecosystems face multiple, simultaneous stressors. Meta-analysis is uniquely suited to investigate interactions. A synthesis of studies on freshwater invertebrates found that elevated temperature significantly exacerbates the sublethal toxicity of microplastics on growth, reproduction, and physiological stress responses [2]. This highlights a critical pathway for bridging lab data: using meta-regression to analyze how environmental covariates (e.g., temperature, pH) modify chemical toxicity, thereby refining lab-derived estimates for specific field conditions.
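Meta-regression on an environmental covariate can be sketched as a weighted least-squares fit of effect size on the moderator. The minimal example below treats τ² as known (a value of 0 gives a fixed-effect meta-regression), whereas published analyses would usually estimate it, for example by REML; the function name and the temperature data in the test are hypothetical.

```python
import math

def meta_regression(effects, variances, moderator, tau2=0.0):
    """Weighted least-squares meta-regression of effect size on one continuous moderator.

    Weights are 1 / (v_i + tau2), with tau2 treated as known.
    Returns the intercept, slope, standard error of the slope and a Wald z statistic.
    """
    w = [1 / (v + tau2) for v in variances]
    sw = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, moderator)) / sw
    y_bar = sum(wi * yi for wi, yi in zip(w, effects)) / sw
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, moderator))
    if sxx == 0:
        raise ValueError("moderator does not vary across studies")
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, moderator, effects))
    slope = sxy / sxx
    se_slope = math.sqrt(1 / sxx)                 # from (X'WX)^-1 with known weights
    return {"intercept": y_bar - slope * x_bar, "slope": slope,
            "se_slope": se_slope, "z": slope / se_slope}
```

When negative effect sizes denote harm, a negative slope on temperature would indicate that toxicity increases as temperature rises.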

Table 1: Summary of Key Meta-Analysis Findings in Ecotoxicology

| Stressors Studied | Key Synthesized Findings | Implication for Real-World ERA | Primary Source |
|---|---|---|---|
| Microplastics (Insects) | Significant negative effects on survival (-1.17), growth (-0.69), and reproduction (-0.47). | Quantifies pervasive hazard of emerging pollutants to terrestrial invertebrate communities. | [16] |
| Microplastics & Temperature (Freshwater Invertebrates) | Elevated temperature amplifies negative effects of microplastics on growth and reproduction. | Climate change must be integrated as a multiplier in chemical risk assessments. | [2] |
| Toxicity Endpoints (Freshwater Chronic Tests) | Median NOEC equates to ~8.5% effect; Adjustment factor of 1.2 converts NOEC to ~EC5. | Enables standardization and more protective use of diverse laboratory data. | [15] |
| Heavy Metals in Soil (Spatial Analysis) | Cd, Pb, Cr are primary risk drivers; spatial patterns link to industrial/agricultural sources. | Guides targeted, cost-effective remediation and monitoring efforts. | [24] |

Detailed Experimental Protocols

Protocol 1: Standardized Evaluation of Ecotoxicity Studies for Meta-Analysis

Purpose: To consistently screen, evaluate, and extract data from primary literature for inclusion in a meta-analysis dossier [25].

Procedure:

- Literature Search & Screening: Execute systematic searches in databases (e.g., Web of Science, Scopus) using defined Boolean strings for chemicals, organisms, and endpoints. Apply pre-defined inclusion/exclusion criteria (e.g., peer-reviewed, controlled experiment, relevant endpoints reported) to titles/abstracts [2].

- Dossier Creation & Data Extraction: For each included study, create a standardized dossier. Extract data into predefined fields: chemical identity/purity, test organism (species, life stage), experimental design (control, replication, exposure duration), endpoint type (mortality, growth, reproduction), and results (mean, variance, sample size for control and treatment groups) [25].

- Quality Assessment: Evaluate each study against predefined criteria for internal validity:

- Exposure Characterization: Was dose/concentration, route, duration, and media chemistry clearly documented and measured? [25]

- Biological Relevance: Were test organisms appropriate surrogates and were the measured endpoints linked to ecological fitness? [26]

- Experimental Design: Were controls properly employed and was the study powered adequately (e.g., sufficient replication)? [25]

- Statistical Reporting: Are the data reported with sufficient detail (e.g., exact n, measure of variance) to calculate effect size? [15]

- Data Codification: Code extracted data for moderators (e.g., chemical class, taxon, exposure time, temperature) for subsequent meta-regression analysis [16] [2].

Table 2: Standardized Ecotoxicity Study Evaluation Criteria

| Assessment Category | Key Questions for Review | Adequacy Indicator |

|---|---|---|

| Test Substance & Exposure | Is purity/stability reported? Are exposure concentration, duration, and route clearly defined and verified? | Explicit, measured values; use of appropriate solvents/controls. |

| Test Organism | Is species, source, life stage, and health status documented? Is it a relevant surrogate? | Use of standard test species (e.g., Daphnia magna, fathead minnow) or justified alternative. |

| Experimental Design | Was a control group used? Was replication adequate (n≥3)? Was randomization applied? | Presence of negative control; replication stated; blind scoring if subjective. |

| Endpoint Measurement | Is the endpoint clearly defined and measurable? Is it linked to individual fitness or population sustainability? | Objective measures (e.g., length, count, survival) versus subjective scores. |

| Statistical Analysis & Reporting | Are raw data or summary statistics (mean, SD/SE, n) reported for each group? Is the statistical test appropriate? | Data presented allows for effect size calculation; use of recognized statistical methods. |

Protocol 2: Laboratory Toxicity Testing for Core ERA Endpoints

Purpose: To generate standardized toxicity data for the ecological effects characterization phase of ERA [26].

Avian Acute Oral Toxicity Test (OECD TG 223):

- Test Organisms: Use healthy, young-adult birds (e.g., Northern Bobwhite quail, Mallard duck). Acclimate for at least 5 days.

- Dosing: Prepare the test substance in a suitable vehicle. Administer a single oral dose via gavage to graded dose groups (typically 5-6). Include a vehicle-control group.

- Observation: Monitor birds for mortality and signs of toxicity (e.g., lethargy, ataxia) at 1, 2, 4, 8, 24, and 48 hours post-dosing, then daily for 14 days.

- Endpoint Calculation: Record time-to-death. At study termination, calculate the median lethal dose (LD₅₀) using probit or logit analysis [26].
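The LD₅₀ calculation can be illustrated with a logit model fitted to grouped mortality data. The sketch below fits logit(p) = a + b·log10(dose) by Newton-Raphson and returns the dose at which the fitted mortality is 50%; a probit analysis would replace the link function, and confidence limits (e.g., Fieller's method) are omitted. The function names and the symmetric dose-mortality data are hypothetical.

```python
import math

def fit_logit(doses, n, dead, max_iter=50, tol=1e-10):
    """Binomial logistic regression of mortality on log10(dose), fitted by Newton-Raphson.

    Assumes partial responses at some doses; complete separation makes the MLE undefined.
    """
    x = [math.log10(d) for d in doses]
    a = b = 0.0
    for _ in range(max_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, ni, yi in zip(x, n, dead):
            p = 1 / (1 + math.exp(-(a + b * xi)))
            r = yi - ni * p                    # contribution to the score vector
            w = ni * p * (1 - p)               # contribution to the information matrix
            g0 += r
            g1 += r * xi
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        da = (h11 * g0 - h01 * g1) / det       # Newton step: inverse information times score
        db = (h00 * g1 - h01 * g0) / det
        a, b = a + da, b + db
        if abs(da) + abs(db) < tol:
            break
    return a, b

def ld50(doses, n, dead):
    """Dose at which logit(p) = 0, i.e., log10(dose) = -a / b."""
    a, b = fit_logit(doses, n, dead)
    return 10 ** (-a / b)

print(ld50([10, 20, 40, 80, 160], [10] * 5, [1, 3, 5, 7, 9]))  # approximately 40 mg/kg
```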

Freshwater Invertebrate Acute Immobilization Test (Daphnia sp.):

- Test Organisms: Use neonates (<24 hours old) from a healthy culture of Daphnia magna or D. pulex.

- Exposure: Prepare a geometric series of at least 5 concentrations of the test substance in reconstituted standard freshwater. Dispense solutions into test vessels, with 10-20 neonates per vessel and at least 4 replicates per concentration.

- Incubation & Observation: Maintain tests at 20±1°C with a 16:8 light:dark cycle. Do not feed. Record the number of immobile (non-motile) daphnids after 24 and 48 hours.

- Endpoint Calculation: Calculate the median effective concentration (EC₅₀ for immobilization) at 48h using appropriate statistical methods [26].
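The geometric concentration series called for in the exposure step can be generated from the highest test concentration and a constant spacing factor. A minimal sketch; the default factor of 2 and the five concentrations are illustrative assumptions, not values taken from a specific test guideline.

```python
def geometric_series(highest: float, factor: float = 2.0, count: int = 5) -> list[float]:
    """Test concentrations spaced by a constant factor, listed from lowest to highest."""
    if highest <= 0 or factor <= 1 or count < 1:
        raise ValueError("need highest > 0, factor > 1 and count >= 1")
    return [highest / factor ** i for i in reversed(range(count))]

print(geometric_series(100.0))  # [6.25, 12.5, 25.0, 50.0, 100.0] (e.g., mg/L)
```

The same logit approach sketched for the avian LD₅₀ can then be applied to the 48-hour immobilization counts to estimate the EC₅₀.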

Honey Bee Acute Contact Toxicity Test:

- Test Organisms: Use healthy adult worker honey bees (Apis mellifera) from outdoor hives.

- Treatment: Anesthetize bees briefly with CO₂. Apply 1.0 µL of the test substance in acetone topically to the dorsal thorax. Treat control bees with acetone only.

- Housing & Observation: Place bees in cages with sucrose solution ad libitum. Maintain at 33±1°C and high humidity. Record mortality at 4, 24, 48, and 72 hours post-treatment.

- Endpoint Calculation: Calculate the median lethal dose (LD₅₀) at 24 and 48h [26].

Protocol 3: Conducting a Quantitative Meta-Analysis

Purpose: To statistically synthesize effect sizes from multiple ecotoxicity studies.

Procedure:

- Effect Size Calculation: For each independent comparison within the dossier, calculate a standardized effect size (e.g., Hedges' g, log response ratio). Use means, standard deviations, and sample sizes from treatment and control groups [16].

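- Model Fitting: Employ weighted random-effects meta-analysis models, as heterogeneity among ecotoxicity studies is expected. Weight each effect size by the inverse of its total variance, that is, its within-study sampling variance plus the estimated between-study variance (τ²).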

- Heterogeneity & Moderator Analysis: Quantify total heterogeneity (I² statistic). Use meta-regression to test if moderators (e.g., chemical class, plastic polymer type [16], exposure temperature [2], trophic level) explain significant variance.

- Sensitivity & Bias Assessment: Conduct sensitivity analyses (e.g., leave-one-out). Assess publication bias using funnel plots and statistical tests (e.g., Egger's regression).
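Egger's regression test, named in the bias-assessment step, regresses each study's standardized effect (effect/SE) on its precision (1/SE) by ordinary least squares; an intercept far from zero suggests funnel-plot asymmetry. The sketch below returns the intercept, its standard error, the t statistic and its degrees of freedom (k - 2); a p-value would come from a t distribution (e.g., via SciPy), which is omitted to keep the block dependency-free. The example values are hypothetical, and the test has low power with few studies (a common guideline is at least 10).

```python
import math

def egger_test(effects, std_errors):
    """Egger's regression: OLS of effect/SE on 1/SE; returns intercept, its SE, t and df."""
    k = len(effects)
    if k < 3:
        raise ValueError("Egger's test needs at least three studies")
    x = [1 / se for se in std_errors]                      # precision
    z = [y / se for y, se in zip(effects, std_errors)]     # standardized effect
    x_bar, z_bar = sum(x) / k, sum(z) / k
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (zi - z_bar) for xi, zi in zip(x, z)) / sxx
    intercept = z_bar - slope * x_bar
    rss = sum((zi - intercept - slope * xi) ** 2 for xi, zi in zip(x, z))
    se_int = math.sqrt(rss / (k - 2) * (1 / k + x_bar**2 / sxx))
    t = intercept / se_int if se_int > 0 else float("nan")
    return intercept, se_int, t, k - 2

# Hypothetical data in which the smaller (less precise) studies report larger effects.
print(egger_test([0.9, 0.7, 0.5, 0.45, 0.4], [0.4, 0.3, 0.2, 0.15, 0.1]))
```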

Visualizing Pathways and Workflows

Figure 1: Integrative Workflow from Lab Data to Field Risk Assessment via Meta-Analysis

Figure 2: Interactive Pathways of Microplastic & Temperature Toxicity [2]

Figure 3: Methodology for Standardizing Toxicity Endpoints Using Adjustment Factors [15]

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Ecotoxicity Testing & Analysis

| Item/Category | Function in Research | Example Application in Protocols |
|---|---|---|
| Reference Toxicants | To validate the health and sensitivity of test organism cultures. | Potassium dichromate (for Daphnia), Diazinon (for bees). Used in periodic quality control tests. |
| Standardized Test Media | To provide consistent, defined water chemistry for aquatic tests, eliminating confounding variability. | Reconstituted freshwater (e.g., EPA "hard water" or OECD M4 medium) for fish and invertebrate tests [26]. |
| Vehicle/Solvent Controls | To dissolve poorly soluble test substances without causing toxicity, establishing a proper baseline. | Acetone, methanol, dimethyl sulfoxide (DMSO). Used at minimal, non-toxic concentrations (e.g., ≤0.1% v/v). |
| Analytical Grade Test Substances | To ensure exposure is to the chemical of interest at known purity, critical for dose-response accuracy. | High-purity (>98%) active ingredients for pesticide or pharmaceutical testing. Purity must be documented [25]. |
| Live Test Organism Cultures | To provide consistent, healthy organisms of known age and history, ensuring reproducible results. | Cultures of Daphnia magna, Chironomus dilutus, fathead minnows, or Apis mellifera bees maintained under standardized conditions. |
| Meta-Analysis Software | To perform statistical synthesis, including effect size calculation, heterogeneity testing, and meta-regression. | R packages (metafor, robumeta), Comprehensive Meta-Analysis (CMA) software. Essential for Protocol 3. |
| Data Extraction & Management Tools | To systematically create and manage dossiers for the meta-analysis process. | Spreadsheet software (e.g., with predefined templates) or systematic review platforms (e.g., CADIMA, Rayyan) [25] [2]. |

A Step-by-Step Guide to Designing and Executing Rigorous Ecotoxicity Meta-Analyses

Framing the Research Question and Developing a Protocol

A well-constructed research question is the foundation of any rigorous synthesis. This is especially important in ecotoxicology. There, evidence on the effects of contaminants such as microplastics, heavy metals, and pharmaceuticals on organisms and ecosystems comes from diverse studies [2]. A precisely framed question dictates the entire meta-analytic process, from the literature search strategy to data synthesis and interpretation. Within the broader thesis on meta-analysis techniques, this protocol provides a structured framework for two tasks: formulating research questions, and developing reproducible methods for synthesizing ecotoxicity data. The ultimate aim is to inform environmental risk assessment and policy.

Application Notes: Framing the Ecotoxicity Research Question

A research question in ecotoxicity meta-analysis should be specific, measurable, and biologically meaningful. It must clearly define the Population (the organisms or systems studied), Exposure (the contaminant and its characteristics), Comparator (the control or baseline condition), and Outcomes (the measured biological endpoints), often abbreviated as PECO.

Example from Current Research: A 2025 meta-analysis investigating combined stressors framed its central question as: "How do microplastic pollution and elevated temperatures combine to affect key physiological and ecological processes, such as growth, reproduction, mortality, and stress responses, in freshwater invertebrates?" [2]. This question explicitly defines:

- Population: Freshwater invertebrates.

- Exposure: Microplastics and elevated temperature.

- Comparator: Conditions without microplastics and at ambient temperature.

- Outcomes: Growth, reproduction, mortality, stress responses.

This clarity guides every subsequent step of the review protocol.

Detailed Experimental Protocol for Ecotoxicity Meta-Analysis

The following protocol is adapted from established systematic review methodologies and recent applications in environmental science [2].

Protocol Registration and Development

Before beginning, document the protocol. While no single mandatory registry exists for ecological reviews, publishing a protocol in an open repository (e.g., Open Science Framework) or as a journal article is considered best practice. Key protocol elements should include [27]:

- Rationale and research question(s).

- Search strategy (databases, date ranges, syntax).

- Study eligibility (inclusion/exclusion) criteria.

- Procedures for study selection, data extraction, and risk of bias assessment.

- Planned data synthesis and statistical analysis methods.

Literature Search Strategy

The goal is to perform a comprehensive, unbiased search to identify all relevant studies.

- Information Sources: Primary databases include Web of Science Core Collection and Scopus. Supplemental searches may include PubMed/MEDLINE, GreenFILE, and specialized databases like ECOTOX. Hand-searching reference lists of included studies and relevant reviews is also recommended [2].

- Search Syntax: Use a structured Boolean query combining terms for:

  - Stressor: (e.g., "microplastic", "nanoplastic", "polyethylene", "polystyrene").

  - Population: (e.g., "freshwater invertebrate", "Daphnia", "benthic macroinvertebrate").

  - Exposure Modifier (if applicable): (e.g., "temperature", "warm", "climate change").

  - Outcome: (e.g., "mortality", "growth", "reproduction", "oxidative stress").

Table 1: Example Search Syntax for Web of Science [2]

| Concept | Example Search Terms |
|---|---|
| Stressor | ("microplastic*" OR "nanoplastic*" OR "polyethylene" OR "polystyrene") |
| Population | ("freshwater invertebrate*" OR "Daphnia magna" OR "Cladocera" OR "benthic") |
| Exposure Modifier | ("temperature*" OR "warm*" OR "thermal stress" OR "climate change") |
| Combined | Combine groups with AND; use * for truncation. |

- Time Frame: Justify the start date. For emerging contaminants, searches may start from the year the contaminant was first identified in the environment. For example, a microplastic review may start from 2014, when freshwater microplastics research became a coherent field [2].
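The OR-within-concept, AND-between-concept logic of Table 1 can be generated programmatically. This keeps long synonym lists consistent across databases and documents them in the protocol. The sketch below is a minimal illustration, and `build_boolean_query` is a hypothetical helper. The optional `field_tag` (e.g., `TS` for a Web of Science topic search) is an assumption about the target database's syntax and should be checked against that database's own search documentation.

```python
def build_boolean_query(concepts, field_tag=None):
    """Combine search terms: OR within each concept group, AND between groups.

    concepts maps a concept name to its list of terms; truncation marks
    such as * are passed through unchanged. If field_tag is given, the
    whole query is wrapped as FIELD=(...).
    """
    groups = []
    for terms in concepts.values():
        if terms:  # skip concept groups with no terms
            groups.append("(" + " OR ".join(f'"{t}"' for t in terms) + ")")
    query = " AND ".join(groups)
    return f"{field_tag}=({query})" if field_tag else query


# Terms taken from Table 1
concepts = {
    "Stressor": ["microplastic*", "nanoplastic*", "polyethylene", "polystyrene"],
    "Population": ["freshwater invertebrate*", "Daphnia magna", "Cladocera", "benthic"],
    "Exposure modifier": ["temperature*", "warm*", "thermal stress", "climate change"],
}
print(build_boolean_query(concepts, field_tag="TS"))
```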

Study Screening and Eligibility

A two-stage screening process (title/abstract, then full-text) against pre-defined criteria is used [2].

Table 2: Study Inclusion and Exclusion Criteria

| Criterion | Inclusion | Exclusion |
|---|---|---|
| Study Type | Primary research articles reporting quantitative experimental data. | Reviews, commentaries, editorials, modeling-only papers. |
| Language | English (for feasibility, but note potential language bias). | Non-English articles without translatable data. |
| Population | Laboratory or field studies on defined freshwater invertebrate species. | Studies on vertebrates, plants, microorganisms, or marine/terrestrial taxa. |
| Exposure | Studies testing the defined contaminant(s), with a clear control group. | Studies with co-exposure to irrelevant contaminants or no clear control. |
| Outcome | Reports at least one quantitative endpoint (mean, variance, sample size) for a relevant biological response (e.g., survival, growth). | Only qualitative descriptions or irrelevant endpoints (e.g., behavioral with no link to fitness). |
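Applying the Table 2 criteria in a fixed order and recording the first failed criterion gives reproducible exclusion reasons, which are needed later for the flow diagram. The sketch below is a simplified illustration. The `StudyRecord` fields and the `screen` function are hypothetical, and each field is assumed to have been coded by a reviewer during full-text screening.

```python
from dataclasses import dataclass


@dataclass
class StudyRecord:
    """Hypothetical fields coded by a reviewer at full-text screening."""
    study_type: str            # "primary", "review", "commentary", ...
    language: str
    translatable: bool         # non-English, but data can still be extracted
    taxon_group: str           # e.g. "freshwater invertebrate"
    has_clear_control: bool
    reports_mean_var_n: bool   # mean, variance and sample size available


def screen(record):
    """Check Table 2-style criteria in order; return (include, reason)."""
    checks = [
        (record.study_type == "primary", "not primary research"),
        (record.language == "English" or record.translatable,
         "non-English without translatable data"),
        (record.taxon_group == "freshwater invertebrate", "out-of-scope taxon"),
        (record.has_clear_control, "no clear control group"),
        (record.reports_mean_var_n, "no quantitative outcome data"),
    ]
    for passed, reason in checks:
        if not passed:
            return False, reason   # first failed criterion is the exclusion reason
    return True, "included"
```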

- Workflow: The screening process should be visualized using a PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) flow diagram.
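The counts shown in a PRISMA flow diagram must reconcile at every stage. Keeping them in one small structure makes inconsistencies visible. The sketch below covers only the simplified two-stage flow described in this protocol and is a hypothetical helper. The PRISMA 2020 diagram also tracks items such as reports not retrieved, which are omitted here.

```python
from dataclasses import dataclass, field


@dataclass
class PrismaFlow:
    """Simplified record counts for a two-stage screening flow diagram."""
    identified: int                  # records from all databases combined
    duplicates_removed: int
    excluded_title_abstract: int
    fulltext_exclusions: dict = field(default_factory=dict)  # reason -> count

    @property
    def screened(self):
        return self.identified - self.duplicates_removed

    @property
    def fulltext_assessed(self):
        return self.screened - self.excluded_title_abstract

    @property
    def included(self):
        n = self.fulltext_assessed - sum(self.fulltext_exclusions.values())
        if n < 0:
            raise ValueError("exclusion counts exceed records assessed")
        return n

    def summary(self):
        lines = [
            f"Records identified: {self.identified}",
            f"Duplicates removed: {self.duplicates_removed}",
            f"Records screened (title/abstract): {self.screened}",
            f"Excluded at title/abstract: {self.excluded_title_abstract}",
            f"Full texts assessed: {self.fulltext_assessed}",
        ]
        lines += [f"  Excluded, {reason}: {n}"
                  for reason, n in self.fulltext_exclusions.items()]
        lines.append(f"Studies included: {self.included}")
        return "\n".join(lines)
```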

Data Extraction & Management

Use a standardized, pre-piloted form in a spreadsheet or systematic review software (e.g., CADIMA, Rayyan).

- Bibliographic Data: Author, year, journal, DOI.

- Study Characteristics: Test organism (species, life stage), test system (lab/field/mesocosm), exposure duration.

- Exposure Details: Contaminant type, size, polymer, concentration; modifier levels (e.g., temperature).

- Outcome Data (essential for meta-analysis): the mean, a measure of variance (standard deviation, standard error, or confidence interval), and the sample size (n) for both control and treatment groups. Raw data are preferred [2].

- Effect Modifiers: Variables that may explain heterogeneity, such as water chemistry (pH, hardness) and organism feeding mode (e.g., filter feeder, shredder).
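Because primary studies report variance in different forms, the extracted standard errors and confidence intervals usually have to be converted to standard deviations before effect sizes can be computed. A minimal sketch of the standard conversions is below. It assumes that `n` is the number of independent replicates used to compute the reported SE or CI, and that the CI is symmetric around the mean. The function names are illustrative.

```python
import math


def sd_from_se(se, n):
    """Standard deviation from the standard error of a mean: SD = SE * sqrt(n)."""
    return se * math.sqrt(n)


def sd_from_ci(lower, upper, n, crit=1.96):
    """Standard deviation from a symmetric confidence interval for a mean.

    SD = sqrt(n) * (upper - lower) / (2 * crit). The default crit = 1.96
    assumes a normal-based 95% CI; with few replicates, the t quantile on
    n - 1 degrees of freedom is more appropriate (about 2.776 for n = 5).
    """
    if upper <= lower:
        raise ValueError("upper limit must exceed lower limit")
    return math.sqrt(n) * (upper - lower) / (2 * crit)
```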

Table 3: Key Data Extraction Items

| Category | Specific Item to Extract | Format/Units |
|---|---|---|
| Study ID | First author & publication year | Text |
| Population | Test species; life stage; feeding mode | Text |
| Exposure | Contaminant concentration | mg/L, particles/L |
| Exposure Modifier | Temperature | °C |
| Outcome | Mean response (e.g., survival) in control and treatment groups | %, proportion, or endpoint units |
| Outcome | Standard deviation (SD) in control and treatment groups | Same units as mean |
| Sample Size | Number of replicates (n) in control and treatment groups | Integer |
| Notes | Any unusual experimental conditions | Text |

Quantitative Data Synthesis & Analysis

The core of the meta-analysis involves calculating effect sizes and statistically pooling them.

- Effect Size Calculation: For continuous data (e.g., growth, reproduction), calculate Hedges' g, a bias-corrected standardized mean difference. For ratio-scale data with positive means, the log response ratio (lnRR) is an alternative. For binary data (e.g., numbers of dead and surviving organisms), calculate the log odds ratio or log risk ratio. These metrics place results from different studies on a common scale for comparison.

- Model Fitting: Use weighted random-effects or mixed-effects models. Random-effects models account for both within-study variance and between-study heterogeneity. Use restricted maximum-likelihood (REML) estimation.

- Heterogeneity Analysis: Quantify heterogeneity with Cochran's Q and the I² statistic. I² is the percentage of total variability in effect sizes attributable to between-study heterogeneity rather than sampling error. An I² above 50% is commonly taken to indicate substantial heterogeneity.

- Subgroup Analysis & Meta-Regression: If heterogeneity is substantial, explore its sources. Pre-specified subgroups (e.g., by species, feeding mode, polymer type) can be compared. Meta-regression tests the influence of continuous moderators (e.g., concentration, temperature) on the effect size [2].

- Sensitivity & Bias Assessment: Conduct sensitivity analyses (e.g., leave-one-out analysis). Assess publication bias visually with funnel plots and statistically with Egger's regression test. Evaluate study quality/risk of bias using tailored tools.
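Several of the synthesis steps above can be sketched in plain Python: Hedges' g, random-effects pooling with Q and I², and a leave-one-out sensitivity analysis. The protocol recommends REML estimation of the between-study variance. For brevity, this sketch substitutes the closed-form DerSimonian-Laird estimator, which generally gives somewhat different tau² values. In practice a REML fit (e.g., `rma(yi, vi, method = "REML")` in R's metafor) would be preferred. The function names are illustrative.

```python
import math


def hedges_g(m_t, sd_t, n_t, m_c, sd_c, n_c):
    """Hedges' g (treatment minus control) and its sampling variance."""
    df = n_t + n_c - 2
    sd_pooled = math.sqrt(((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / df)
    d = (m_t - m_c) / sd_pooled
    j = 1 - 3 / (4 * df - 1)                      # small-sample bias correction
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    return j * d, j ** 2 * var_d


def random_effects_dl(y, v):
    """Random-effects pooled mean using the DerSimonian-Laird tau^2 estimator."""
    k = len(y)
    if k < 2:
        raise ValueError("need at least two effect sizes")
    w = [1 / vi for vi in v]                      # fixed-effect weights
    sw = sum(w)
    mu_fixed = sum(wi * yi for wi, yi in zip(w, y)) / sw
    q = sum(wi * (yi - mu_fixed) ** 2 for wi, yi in zip(w, y))  # Cochran's Q
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)
    i2 = 100 * max(0.0, (q - (k - 1)) / q) if q > 0 else 0.0
    w_re = [1 / (vi + tau2) for vi in v]          # random-effects weights
    mu = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    return {"mu": mu, "se": math.sqrt(1 / sum(w_re)), "tau2": tau2, "Q": q, "I2": i2}


def leave_one_out(y, v):
    """Pooled mean re-estimated with each effect size omitted in turn."""
    return [random_effects_dl(y[:i] + y[i + 1:], v[:i] + v[i + 1:])["mu"]
            for i in range(len(y))]
```

An influential study shows up as a leave-one-out estimate that departs markedly from the full pooled mean.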

Visualizing the Conceptual Framework and Analysis Workflow

A clear conceptual diagram illustrates the hypothesized relationships between stressors and biological outcomes, guiding the analysis.

Table 4: Key Research Reagent Solutions and Materials for Ecotoxicity Meta-Analysis

| Item/Category | Function/Purpose | Example/Note |
|---|---|---|
| Bibliographic Databases | To perform comprehensive, reproducible literature searches. | Web of Science, Scopus, PubMed. Using multiple databases minimizes missed studies [2]. |
| Systematic Review Software | To manage screening, deduplication, and consensus among reviewers. | Rayyan, CADIMA, Covidence. |
| Statistical Software with Meta-Analysis Packages | To calculate effect sizes, fit meta-analytic models, and create forest/funnel plots. | R (metafor, meta packages), Stata (metan). R is preferred for its flexibility and open-source nature. |
| Data Extraction Form | To ensure consistent, accurate, and complete data collection from heterogeneous studies. | Custom-designed spreadsheet or form, piloted before full use. |
| Reporting Guidelines | To ensure the review is conducted and reported transparently and completely. | PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) checklist and flow diagram. |
| Chemical/Environmental Databases | To standardize and verify chemical nomenclature and properties. | U.S. EPA CompTox Chemicals Dashboard, PubChem. |

Within a thesis focused on meta-analysis techniques for ecotoxicity data research, systematic literature searching forms the indispensable foundation. A rigorous, transparent, and reproducible search protocol is critical for minimizing bias and ensuring the resulting synthesis accurately reflects the available evidence on chemical hazards, such as per- and polyfluoroalkyl substances (PFAS) or emerging contaminants like biodegradable microplastics (BMPs) [28] [1]. This document provides detailed application notes and protocols for conducting systematic searches, framed explicitly for ecological risk assessment and toxicological meta-analysis.

Core Principles and Quantitative Frameworks

Defining the Research Scope: PECO/PICO Criteria

A precisely formulated research question is essential. In environmental toxicology, the PECO framework (Population, Exposure, Comparator, Outcome) is widely adopted [28]. For a meta-analysis on ecotoxicity, this translates to:

- Population: The aquatic or terrestrial organisms under study (e.g., Daphnia magna, zebrafish).

- Exposure: The chemical stressor, its form, and concentration (e.g., PFPrA, biodegradable microplastic particles).

- Comparator: The control group or baseline exposure condition.

- Outcome: The measured toxicological endpoint (e.g., survival, growth, reproduction, oxidative stress) [1].

Establishing these criteria a priori guides all subsequent steps, including search string development and study screening.

Quantitative Foundations: Effect Size Measures for Ecotoxicity

Meta-analysis quantitatively synthesizes results using effect sizes. The choice of effect size is dictated by the type of data reported in primary studies. Common measures in ecotoxicology include [29]:

Table 1: Common Effect Size Measures in Ecotoxicological Meta-Analysis

| Effect Size Type | Common Measures | Use Case Example | Key Consideration |
|---|---|---|---|
| Comparative | Log Response Ratio (lnRR), Standardized Mean Difference (SMD/Hedges' g) | Comparing mean outcomes (e.g., body length, enzyme activity) between exposed and control groups. | lnRR suits ratio-scale data with positive means; SMD standardizes by the pooled SD, so endpoints measured on different scales can be combined. |
| Binary | Odds Ratio (OR), Risk Ratio (RR) | Comparing proportions (e.g., survival vs. mortality, incidence of a lesion). | Requires data on the number of events and total subjects in each group. |
| Correlation | Fisher's z-transformation of correlation coefficient (Zr) | Synthesizing relationships between continuous variables (e.g., exposure concentration and biomarker level). | |
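The remaining measures in Table 1 each come with a standard large-sample variance, which is what the meta-analytic weights are built from. The sketch below computes lnRR, the log odds ratio, and Fisher's z with their variances. Two points are assumptions rather than prescriptions:

- The 0.5 continuity correction for zero cells in the odds ratio is a common convention, not the only option.
- The function names are illustrative.

```python
import math


def log_response_ratio(m_t, sd_t, n_t, m_c, sd_c, n_c):
    """lnRR = ln(mean_T / mean_C) and its large-sample variance."""
    if m_t <= 0 or m_c <= 0:
        raise ValueError("lnRR requires positive group means")
    lnrr = math.log(m_t / m_c)
    var = sd_t ** 2 / (n_t * m_t ** 2) + sd_c ** 2 / (n_c * m_c ** 2)
    return lnrr, var


def log_odds_ratio(events_t, n_t, events_c, n_c):
    """Log odds ratio of an event (e.g. death) in treatment vs. control."""
    a, b = events_t, n_t - events_t
    c, d = events_c, n_c - events_c
    if 0 in (a, b, c, d):  # add 0.5 to every cell when any cell is zero
        a, b, c, d = a + 0.5, b + 0.5, c + 0.5, d + 0.5
    return math.log((a * d) / (b * c)), 1 / a + 1 / b + 1 / c + 1 / d


def fisher_z(r, n):
    """Fisher's z-transformed correlation and its variance 1 / (n - 3)."""
    return math.atanh(r), 1 / (n - 3)
```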

A critical methodological advance is the use of multilevel meta-analytic models to account for non-independence among multiple effect sizes extracted from the same study, a common scenario in ecotoxicology [29].

Application Protocol: A Stepwise Guide

This protocol integrates the PRISMA reporting guideline and systematic review frameworks from environmental health research into a cohesive workflow for ecotoxicity meta-analysis [22] [1].